Clear Sky Science

Adaptive reinforcement learning framework for sustainable microgrid optimization in arid urban environments

Keeping the Lights On in a Desert City

Imagine running a modern city where summer days regularly soar above 40°C, air conditioners roar nonstop, and power lines strain to keep up. That is daily life in places like Riyadh, Saudi Arabia. This paper explores how a new kind of smart control system, inspired by the way computers learn to play complex video games, can juggle solar panels, wind turbines, batteries, diesel generators, and the main grid to keep such a city powered more cheaply and with less pollution.

Why Small Power Networks Matter

Instead of relying only on huge power plants far away, many cities are turning to "microgrids"—small, local networks that combine different energy sources and can even share power with neighbors. In hot, dry regions, this is especially important: demand for cooling jumps up and down with the weather, solar energy comes in bursts during the day, and wind can be weak or unpredictable. Traditional control systems tend to follow fixed rules or schedules. They are not very good at reacting to sudden changes, like a spike in air-conditioning use or a dusty day that blocks the sun. The result is wasted clean energy, more fuel burned in diesel generators, and higher bills.

A Learning Brain for the Power System

The researchers built a detailed computer model of five interconnected microgrids representing typical buildings and districts in Riyadh—large and small homes, mixed-use blocks, and commercial areas. Each microgrid had its own mix of solar panels, small wind turbines, diesel backup, and battery storage, along with a link to the wider electricity network. Using building-energy software (EnergyPlus), they generated hour‑by‑hour data for a full year: how much power people used, how hot it was, how strong the sun shone, and how fast the wind blew. On top of this, they added a reinforcement learning "agent"—a software brain that observes the state of the system (demand, battery charge, available sun and wind, generator status) and decides what to do next: charge or discharge batteries, switch diesel generators on or off, import or export power, and share energy among microgrids.
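To make the agent's view of the world concrete, here is a minimal Python sketch of what one microgrid's observed state and one hourly decision might look like, following the quantities listed above. The class and field names (`MicrogridState`, `MicrogridAction`, `power_balance`) and the sign conventions (for example, positive battery power meaning discharge) are hypothetical illustrations, not the study's actual data format.

```python
from dataclasses import dataclass


@dataclass
class MicrogridState:
    """What the agent observes for one microgrid in one hour (hypothetical fields)."""
    demand_kw: float    # electricity demand, including cooling load
    battery_soc: float  # battery state of charge, 0.0 (empty) to 1.0 (full)
    solar_kw: float     # solar power available right now
    wind_kw: float      # wind power available right now
    diesel_on: bool     # whether the diesel generator is currently running


@dataclass
class MicrogridAction:
    """One hourly control decision (hypothetical sign conventions)."""
    battery_kw: float  # > 0 discharge the battery, < 0 charge it
    diesel_kw: float   # diesel output; 0.0 means the generator is switched off
    grid_kw: float     # > 0 import from the main grid, < 0 export to it
    share_kw: float    # > 0 received from neighboring microgrids, < 0 sent to them


def power_balance(state: MicrogridState, action: MicrogridAction) -> float:
    """Supply minus demand in kW: negative means unmet demand, positive means surplus."""
    supply = (state.solar_kw + state.wind_kw + action.battery_kw
              + action.diesel_kw + action.grid_kw + action.share_kw)
    return supply - state.demand_kw


if __name__ == "__main__":
    state = MicrogridState(demand_kw=120.0, battery_soc=0.6,
                           solar_kw=80.0, wind_kw=10.0, diesel_on=False)
    action = MicrogridAction(battery_kw=20.0, diesel_kw=0.0,
                             grid_kw=10.0, share_kw=0.0)
    print(power_balance(state, action))  # → 0.0
```

In this example the battery and a small grid import exactly cover the 30 kW that sun and wind cannot supply; a negative balance would be the kind of shortage the controller is trained to avoid.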

How the System Learns to Make Better Choices

Reinforcement learning works by trial and error. In the simulation, the agent tries different control actions hour by hour and receives a reward or penalty based on what happens. The reward combines three simple ideas: keep costs low, keep the lights on, and avoid letting available renewable energy go to waste. If its decisions lead to expensive diesel use, power shortages, or unused solar energy, the agent is penalized. If it manages to meet demand with more sun and wind, fewer emissions, and stable operation, it is rewarded. Over tens of thousands of training rounds, the agent gradually discovers strategies that balance these goals. Once trained, it can make decisions in real time, in just a few thousandths of a second.
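The reward described above can be written as a single score in which each undesirable outcome subtracts points. The sketch below assumes three hypothetical weights (`W_COST`, `W_SHORTAGE`, `W_CURTAIL`); the study's actual reward terms and weights are not given in this summary. The trial-and-error part is illustrated with tabular Q-learning (`choose_action`, `q_update`), the simplest textbook form of reinforcement learning, chosen here only for clarity; it is not necessarily the algorithm the researchers used.

```python
import random

# Hypothetical penalty weights; the study's actual reward terms are not given here.
W_COST = 1.0       # per unit of operating cost (diesel fuel, grid imports)
W_SHORTAGE = 10.0  # per kWh of unmet demand ("keep the lights on")
W_CURTAIL = 0.5    # per kWh of available sun or wind that went unused


def reward(cost: float, unmet_kwh: float, curtailed_kwh: float) -> float:
    """Score one hour of operation: every undesirable outcome lowers the reward."""
    return -(W_COST * cost + W_SHORTAGE * unmet_kwh + W_CURTAIL * curtailed_kwh)


def choose_action(q: dict, state, actions: list, epsilon: float = 0.1):
    """Epsilon-greedy trial and error: usually exploit, occasionally explore."""
    if random.random() < epsilon:
        return random.choice(actions)  # try something at random
    return max(actions, key=lambda a: q.get((state, a), 0.0))  # best known so far


def q_update(q: dict, state, action, r: float, next_state, actions: list,
             alpha: float = 0.1, gamma: float = 0.95) -> None:
    """Tabular Q-learning: move Q(s, a) toward r + gamma * max over a' of Q(s', a')."""
    best_next = max(q.get((next_state, a), 0.0) for a in actions)
    old = q.get((state, action), 0.0)
    q[(state, action)] = old + alpha * (r + gamma * best_next - old)
```

With these example weights, an hour that costs 50 units but leaves 4 kWh of solar unused scores -52, while an hour that costs only 30 units but leaves 5 kWh of demand unmet scores -80. Repeating `choose_action` and `q_update` over many simulated hours is what lets the agent learn that a shortage is worse than a slightly higher bill.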

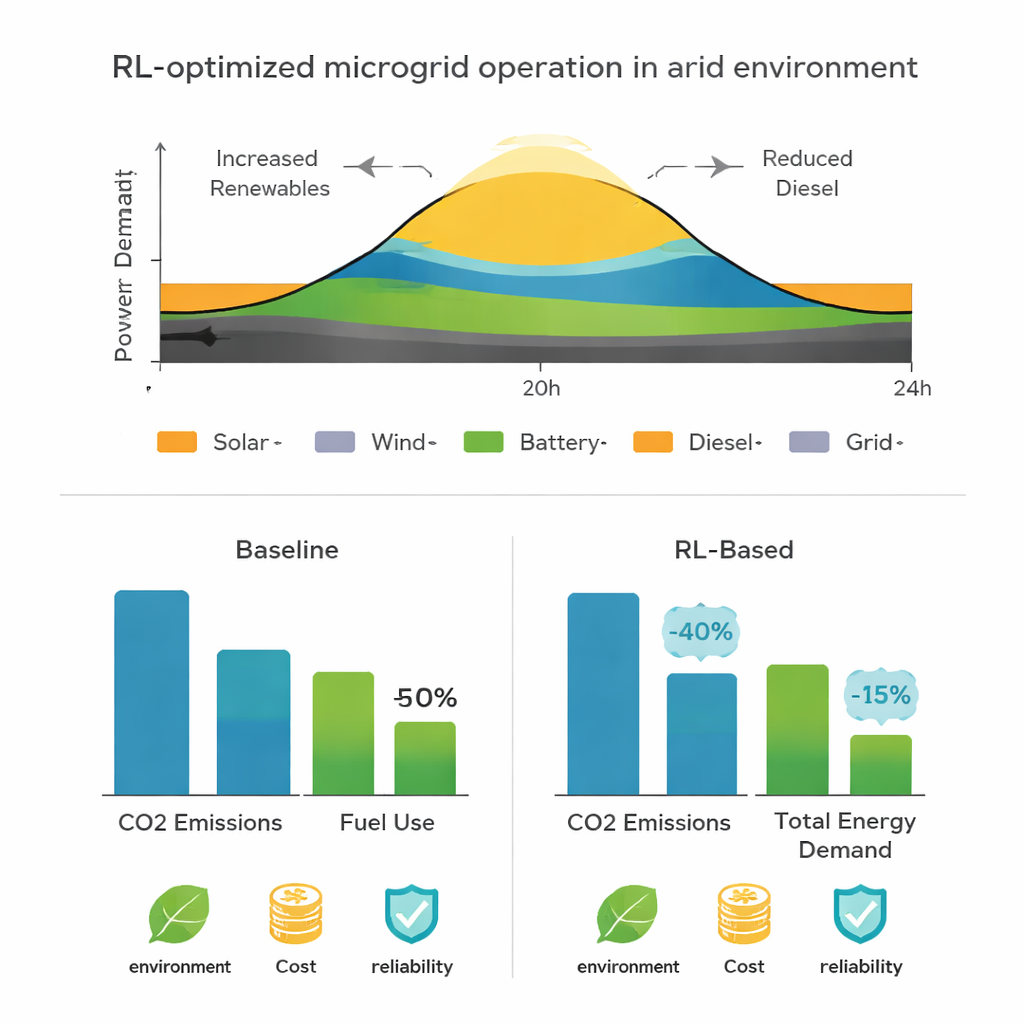

What Happens When the Desert Fights Back

To see whether this approach would truly help in a harsh climate, the team tested it under realistic and stressful conditions. The model reproduced Riyadh’s seasonal swings, with heavy cooling in summer and milder loads in winter. The learning-based controller accurately tracked both hourly and yearly energy use (explaining about 90–94% of the variation), which is crucial for anticipating peaks. It reduced energy losses over a typical day and shifted more of the supply to solar and wind, using batteries to smooth out gaps. When the researchers simulated events like a dust storm that suddenly cut solar power or a heat wave that pushed demand sharply higher, the system responded by discharging batteries, coordinating diesel use, and sharing surplus energy across microgrids—all without cutting off users.
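The phrase "explaining about 90–94% of the variation" most likely refers to the coefficient of determination, R², which compares prediction errors with how much the data vary around their average: R² = 1 − (sum of squared errors) / (sum of squared deviations from the mean). The summary does not name the statistic, so this reading is an assumption. A minimal sketch, using made-up hourly loads rather than the study's data:

```python
def r_squared(actual: list[float], predicted: list[float]) -> float:
    """Coefficient of determination: 1 - (sum of squared errors) / (total sum of squares)."""
    if len(actual) != len(predicted) or len(actual) < 2:
        raise ValueError("need two series of equal length with at least two points")
    mean = sum(actual) / len(actual)
    ss_tot = sum((y - mean) ** 2 for y in actual)  # spread of the data around its mean
    if ss_tot == 0:
        raise ValueError("actual values are all identical; R² is undefined")
    ss_res = sum((y - p) ** 2 for y, p in zip(actual, predicted))  # prediction errors
    return 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    actual = [100.0, 120.0, 140.0, 160.0]     # made-up hourly loads, kW
    predicted = [92.0, 124.0, 148.0, 156.0]   # made-up model estimates, kW
    print(round(r_squared(actual, predicted), 2))  # → 0.92
```

Here the squared errors add up to 160 against a total variation of 2000, so the errors account for 8% of the variation and the estimates "explain" the remaining 92%.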

Cleaner Air and Lower Bills

Beyond keeping the power flowing, the study looked at environmental impact using life‑cycle assessment focused on day‑to‑day operation. Compared with a traditional, rule‑based setup, the adaptive system cut carbon dioxide emissions by about 14%, reduced acid‑forming pollution by roughly 14%, and lowered total energy use by around 10%. These gains come mainly from running diesel generators less often and making better use of local renewable energy and storage. In simple terms, giving the microgrid a learning brain allowed it to squeeze more useful work out of every unit of clean power, rely less on fuel, and stay reliable even when the desert climate misbehaves.

Citation: Mohamed, M.A.S., Almazam, K., Alzahrani, M. et al. Adaptive reinforcement learning framework for sustainable microgrid optimization in arid urban environments. Sci Rep 16, 7356 (2026). https://doi.org/10.1038/s41598-026-37752-z

Keywords: microgrids, reinforcement learning, renewable energy, energy management, arid cities