Clear Sky Science · en

Autonomous path planning for intercostal robotic ultrasound imaging using reinforcement learning

Robots Helping Doctors See Through the Ribs

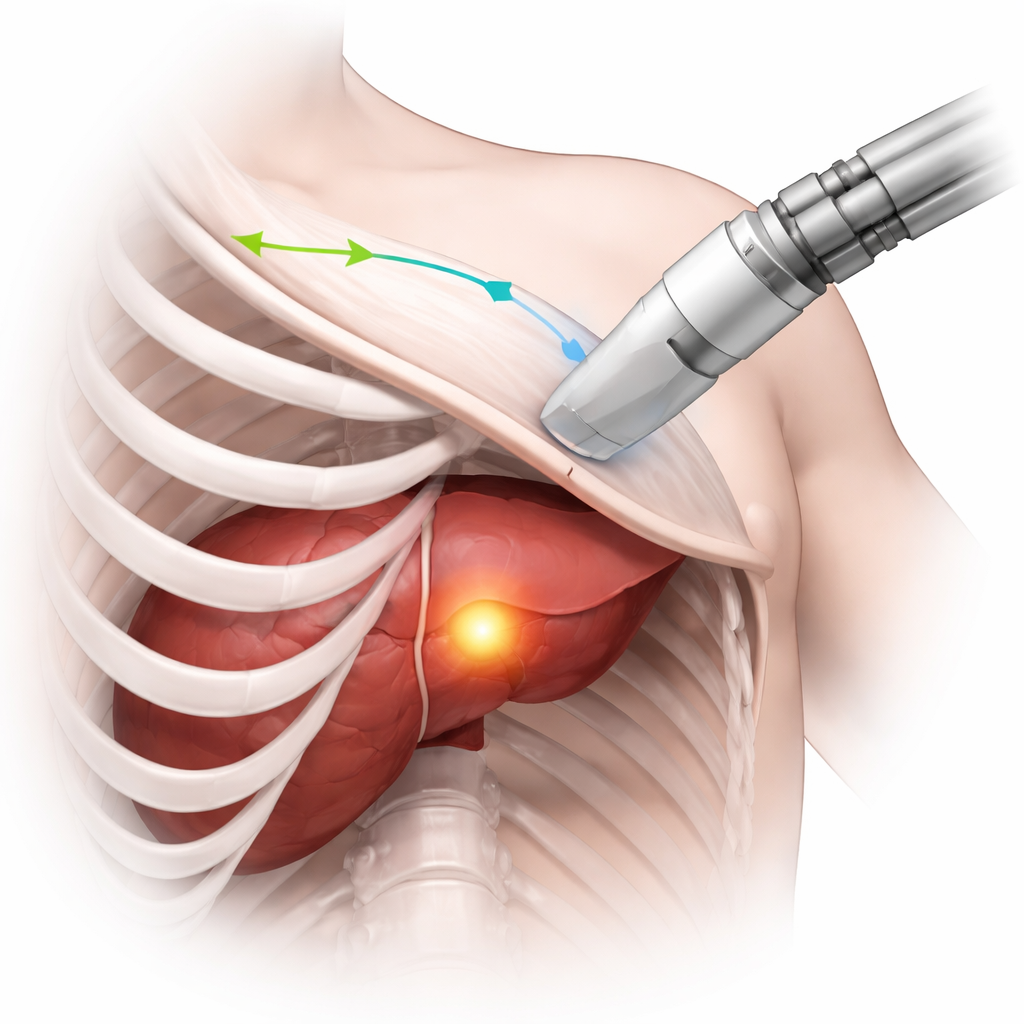

When doctors use ultrasound to monitor organs like the liver or heart, ribs often get in the way, casting dark shadows that hide critical details. Getting a clear view depends heavily on the skill and experience of the person holding the probe. This study explores how a robot, guided by artificial intelligence, can automatically plan an ultrasound scanning path between the ribs so that tumors and other targets are seen clearly and consistently, no matter who is operating the machine.

Why Seeing Between the Ribs Is So Hard

Ultrasound is popular because it is safe, affordable, and provides real-time images. But to image organs tucked behind the ribcage, the probe must be carefully steered through the narrow gaps between the ribs. If the sound waves hit bone, they are blocked, creating large black regions in the image where nothing can be seen. Human operators learn, through training and experience, how to angle and move the probe to avoid these shadows while keeping the area of interest in view. This is especially important in procedures like liver tumor ablation, where surgeons must repeatedly check that the entire tumor has been treated. The challenge is to turn this delicate, three-dimensional skill into something a robot can do on its own.

Teaching a Robot with Virtual Patients

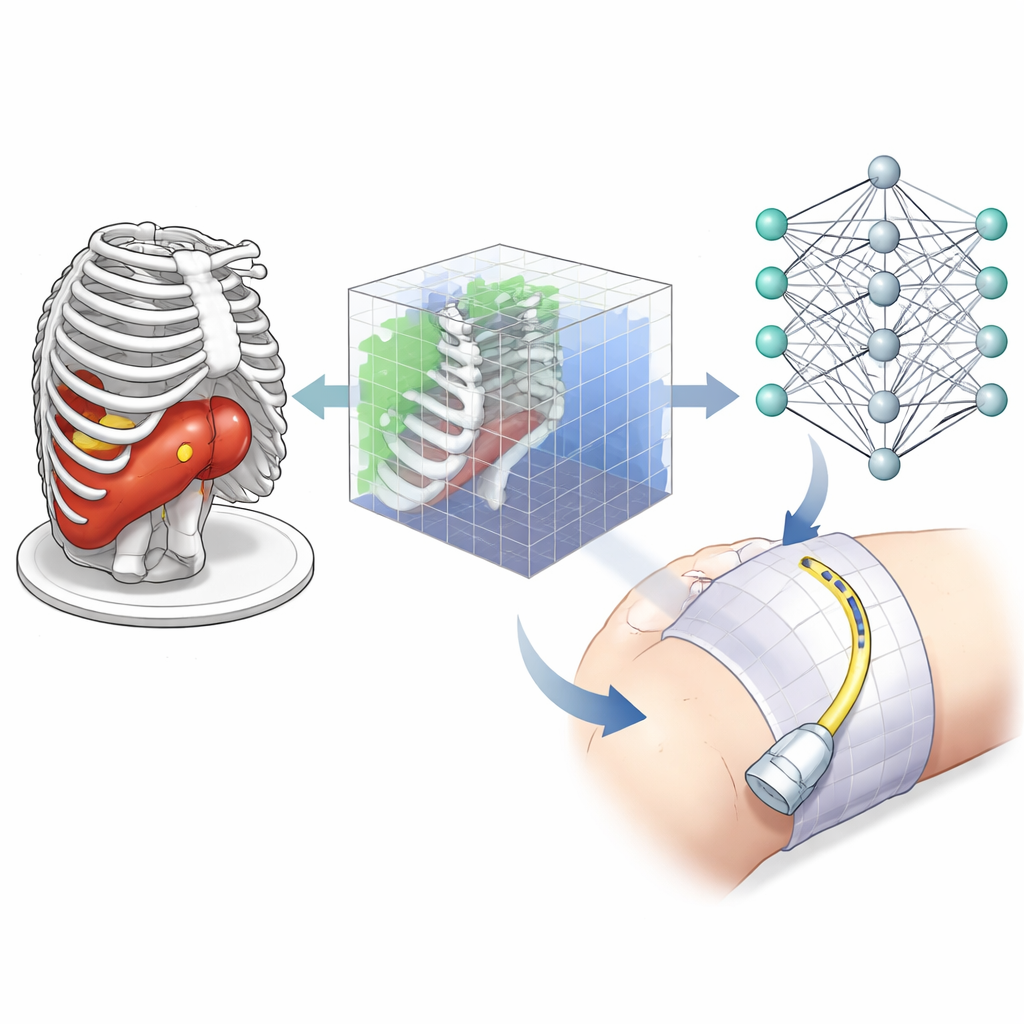

Instead of learning directly from noisy and variable ultrasound images, the researchers built a virtual training ground using computed tomography (CT) scans. CT offers a crisp three-dimensional map of bones, skin, and liver, and tumors can be added with different shapes and positions to create many realistic scenarios. In this simulator, a virtual ultrasound probe moves across the skin surface over the ribs, and the paths of the ultrasound beams are modeled as rays that pass through soft tissue but are blocked by bone. This simple but realistic model tells the system which parts of a tumor are visible, how much the sound is weakened as it travels, and where shadows appear.
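The ray model described above can be illustrated with a short sketch. This is not the authors' simulator. It assumes the CT volume has already been segmented into a labelled voxel grid, and the label values, the `cast_ray` function, and the fixed marching step are hypothetical choices made for this example. The distance travelled stands in for how much the sound is weakened.

```python
import numpy as np

# Hypothetical voxel labels for a segmented CT volume (assumption, not from the paper).
SOFT, BONE, TUMOR = 0, 1, 2

def cast_ray(labels, origin, direction, step=0.5, max_dist=100.0):
    """March one simulated ultrasound ray through a labelled voxel grid.

    Returns (tumor voxels seen, distance travelled, blocked_by_bone).
    The ray passes through soft tissue and tumor, and stops at the first bone voxel.
    """
    direction = np.asarray(direction, dtype=float)
    direction = direction / np.linalg.norm(direction)  # unit vector, input left untouched
    pos = np.asarray(origin, dtype=float)
    seen, dist = set(), 0.0
    while dist <= max_dist:
        idx = tuple(int(i) for i in np.floor(pos))
        inside = all(0 <= i < n for i, n in zip(idx, labels.shape))
        if not inside:
            return seen, dist, False   # ray left the volume without hitting bone
        if labels[idx] == BONE:
            return seen, dist, True    # everything behind this voxel is in shadow
        if labels[idx] == TUMOR:
            seen.add(idx)
        pos = pos + step * direction
        dist += step
    return seen, dist, False

# Toy volume: two tumor voxels, one of them hidden behind a "rib".
labels = np.zeros((6, 6, 6), dtype=int)
labels[4, 2, 2] = TUMOR
labels[4, 4, 4] = TUMOR
labels[2, 4, 4] = BONE

clear = cast_ray(labels, origin=(0.5, 2.5, 2.5), direction=(1, 0, 0))
shadowed = cast_ray(labels, origin=(0.5, 4.5, 4.5), direction=(1, 0, 0))
print(clear)
print(shadowed)
```

In this toy volume the first ray reaches its tumor voxel, while the second stops at the bone voxel and never sees the tumor behind it. A full probe would cast a fan of such rays and pool the results.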

How the Learning System Decides Where to Scan

The team used a form of artificial intelligence called reinforcement learning, in which an "agent" learns by trial and error to choose actions that lead to higher rewards. At each step, the agent sees a compact 3D representation of the scene around the tumor: which tiny volume elements contain tumor, which contain bone, and which are crossed by the simulated ultrasound rays. It can then move or tilt the virtual probe in small increments, or switch between an "explore" mode and a "recording" mode used for building the final 3D view. The reward it receives combines three goals: covering as much of the target volume as possible, keeping the probe close enough to reduce signal loss, and avoiding regions where rays are blocked by bone, which would create useless shadowed images.
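One way to picture a reward that balances these three goals is a weighted sum. The sketch below is only an illustration under stated assumptions: the `StepStats` fields, the weights, and the distance normalization are hypothetical and are not taken from the study, which may define and combine its terms differently.

```python
from dataclasses import dataclass

@dataclass
class StepStats:
    """Per-step quantities a simulator could report (all names are hypothetical)."""
    newly_covered: int       # target voxels seen for the first time in this step
    target_total: int        # total voxels in the target volume
    probe_to_target: float   # probe-to-target distance, in mm
    rays_blocked: int        # rays stopped by bone
    rays_total: int          # rays cast from the probe

def step_reward(s, w_cov=1.0, w_dist=0.1, w_shadow=0.5, max_dist=150.0):
    """Reward coverage, penalize distance and bone shadow (weights are assumptions)."""
    coverage = s.newly_covered / s.target_total
    distance = min(s.probe_to_target / max_dist, 1.0)  # clipped to [0, 1]
    shadow = s.rays_blocked / s.rays_total
    return w_cov * coverage - w_dist * distance - w_shadow * shadow

open_window = StepStats(newly_covered=50, target_total=200,
                        probe_to_target=75.0, rays_blocked=0, rays_total=64)
over_a_rib = StepStats(newly_covered=50, target_total=200,
                       probe_to_target=75.0, rays_blocked=48, rays_total=64)
print(step_reward(open_window), step_reward(over_a_rib))
```

With these illustrative weights, the same coverage gain earns less reward when most rays are blocked by bone, which nudges the agent toward the gaps between the ribs.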

Putting the Method to the Test

To see whether the learned strategy generalizes beyond its training examples, the researchers tested it on new CT scans and new tumor shapes that the agent had never encountered. In these trials, a scanning plan was considered successful if at least 95% of the target volume was imaged within a limited number of steps. Across small, medium, and large targets, the system achieved success rates of up to 95%, while maintaining a high percentage of shadow-free views and reasonable distances between probe and tumor. The method also worked when there were multiple targets to cover, such as residual tumor spots scattered in the liver, although performance dipped slightly as the task became more complex.
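The success criterion above can be written down directly. In this sketch, the 95% coverage threshold comes from the text, while the step budget of 100, the function names, and the sample coverage curves are hypothetical placeholders, since the summary only says the number of steps is limited.

```python
def is_success(coverage_per_step, threshold=0.95, max_steps=100):
    """True if cumulative coverage reaches the threshold within the step budget.

    coverage_per_step: cumulative fraction of the target imaged after each step.
    max_steps is a placeholder; the actual budget is not given in this summary.
    """
    return any(c >= threshold for c in coverage_per_step[:max_steps])

def success_rate(episodes, **kwargs):
    """Fraction of test episodes that count as successful."""
    return sum(is_success(e, **kwargs) for e in episodes) / len(episodes)

# Made-up coverage curves for three test episodes (illustration only).
episodes = [
    [0.20, 0.60, 0.96],   # reaches 95% on the third step
    [0.30, 0.50, 0.90],   # never reaches 95%
    [0.50, 0.97],         # reaches 95% on the second step
]
print(success_rate(episodes, max_steps=3))
print(success_rate(episodes, max_steps=2))
```

Tightening the step budget lowers the measured success rate, which is why the budget has to be fixed before results from different target sizes can be compared.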

From Simulation to the Operating Room

For now, the work focuses on planning the path rather than physically moving a real robot. The paths are generated on patient-specific CT scans or on generic CT "atlases" that can later be matched to an individual’s anatomy using existing registration techniques. In the future, this planning module is intended to be combined with robotic control, motion compensation for breathing, and more realistic ultrasound image simulation. The key takeaway is that this approach could make ultrasound monitoring during procedures like liver tumor treatment more reliable and less dependent on operator expertise, by letting a robot find shadow-free routes between the ribs that keep the whole target in view.

Citation: Bi, Y., Qian, C., Zhang, Z. et al. Autonomous path planning for intercostal robotic ultrasound imaging using reinforcement learning. Sci Rep 16, 6356 (2026). https://doi.org/10.1038/s41598-026-37702-9

Keywords: robotic ultrasound, reinforcement learning, liver tumor imaging, intercostal scanning, medical robotics