Infrared and visible image fusion algorithm based on NSCT and improved FT saliency detection

Seeing in the Dark and Through the Clutter

Modern cameras give us sharp, colorful views of the world, but they struggle in fog, darkness, or glare—exactly when we most need reliable vision for driving, surveillance, search‑and‑rescue, or drones. Infrared sensors, which capture heat instead of color, excel in these harsh conditions but produce blurry, low‑detail pictures. This paper presents a way to intelligently combine infrared and visible‑light images so that the final picture shows both crisp details and clearly highlighted people or objects, even in difficult scenes.

Why Two Eyes Are Better Than One

Visible‑light cameras record fine textures and rich backgrounds, but their performance collapses at night or in heavy shadow, and targets can blend into similarly colored surroundings. Infrared cameras do the opposite: they pick up warm bodies and heat‑emitting objects against dark backgrounds, day or night, yet lose much of the subtle structure in buildings, trees, and roads. Fusing these two kinds of images into one can, in principle, deliver the best of both worlds. However, many existing fusion methods either wash out contrast, blur object edges, or let noisy infrared patterns overwhelm the useful details from the visible‑light image.

The Core Idea: Let the Important Parts Stand Out

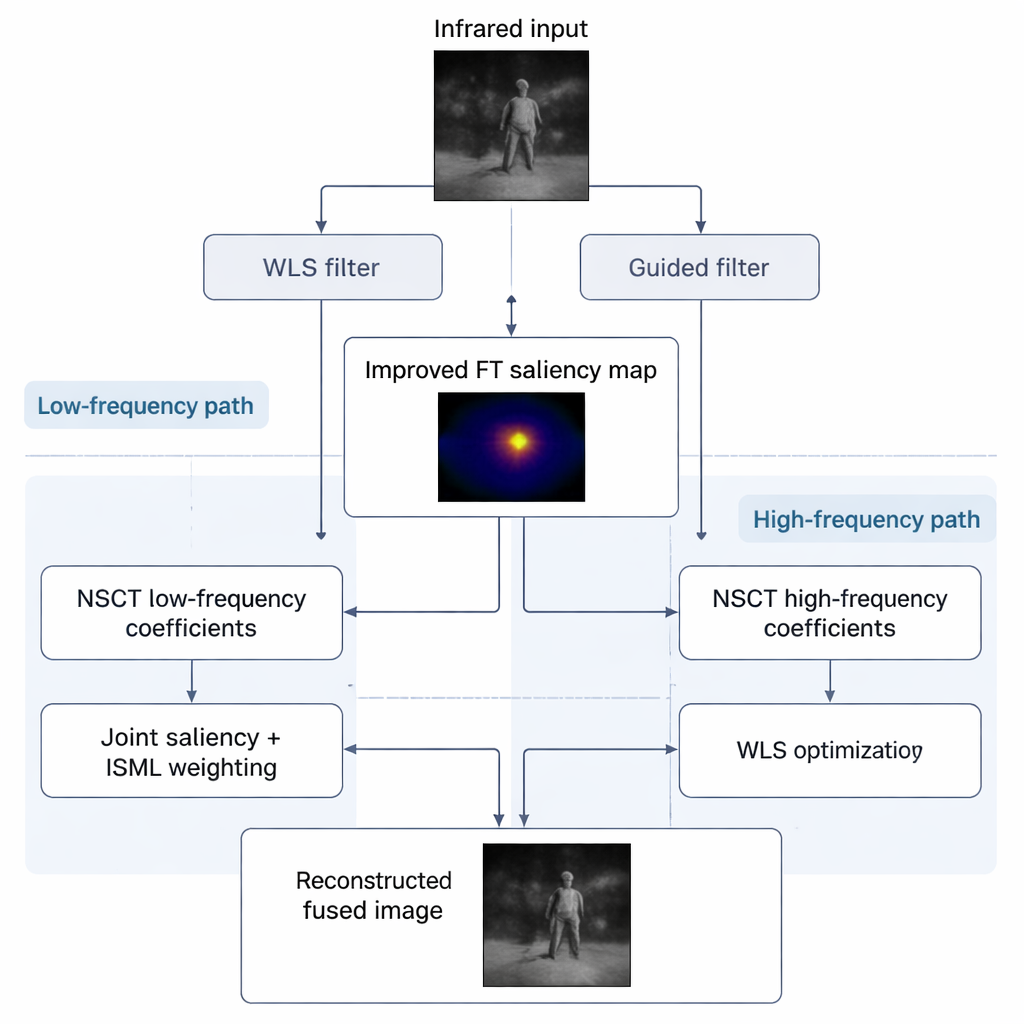

The authors approach fusion as a problem of conflict resolution between the two image types. They focus on three recurring issues: identifying which regions are truly important ("salient"), balancing overall brightness between hot infrared targets and bright visible backgrounds, and preserving delicate textures while suppressing infrared noise. To tackle these, they refine a popular technique called frequency‑tuned (FT) saliency detection, which attempts to mimic the human visual system by highlighting regions that naturally draw our attention. Instead of relying on a simple blur, they use a pair of smarter filters (one that smooths while keeping edges, and another that enhances contrast) to carve out a cleaner, sharper map of where the interesting infrared targets are.
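For readers who want to see the baseline idea in action, here is a minimal sketch of classic frequency‑tuned saliency on a grayscale image: each pixel's saliency is the distance between the image's global mean and a lightly blurred copy of that pixel. This is the standard starting point, not the authors' improved version. The box blur stands in for the usual Gaussian blur, and the function names, the toy 7x7 frame, and the use of plain Python lists are all assumptions made for illustration.

```python
def box_blur(img, radius=1):
    """Mean filter with clamped borders (a stand-in for FT's Gaussian blur)."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
            out[y][x] = total / (2 * radius + 1) ** 2
    return out


def ft_saliency(img, radius=1):
    """Classic FT saliency for grayscale: |global mean - blurred pixel|, scaled to [0, 1]."""
    h, w = len(img), len(img[0])
    mean = sum(sum(row) for row in img) / (h * w)
    blurred = box_blur(img, radius)
    sal = [[abs(mean - v) for v in row] for row in blurred]
    peak = max(max(row) for row in sal)
    if peak == 0:  # perfectly flat image: nothing stands out
        return sal
    return [[v / peak for v in row] for row in sal]


# Toy 7x7 "infrared" frame: cool background (20) with a warm 3x3 target (200).
ir = [[20.0] * 7 for _ in range(7)]
for y in range(2, 5):
    for x in range(2, 5):
        ir[y][x] = 200.0

saliency = ft_saliency(ir)
```

In this toy frame the centre of the warm block receives the highest saliency and the cool corners the lowest. Swapping `box_blur` for an edge‑preserving filter is the kind of change the paper describes, though the specific filters it uses are not named in this summary.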

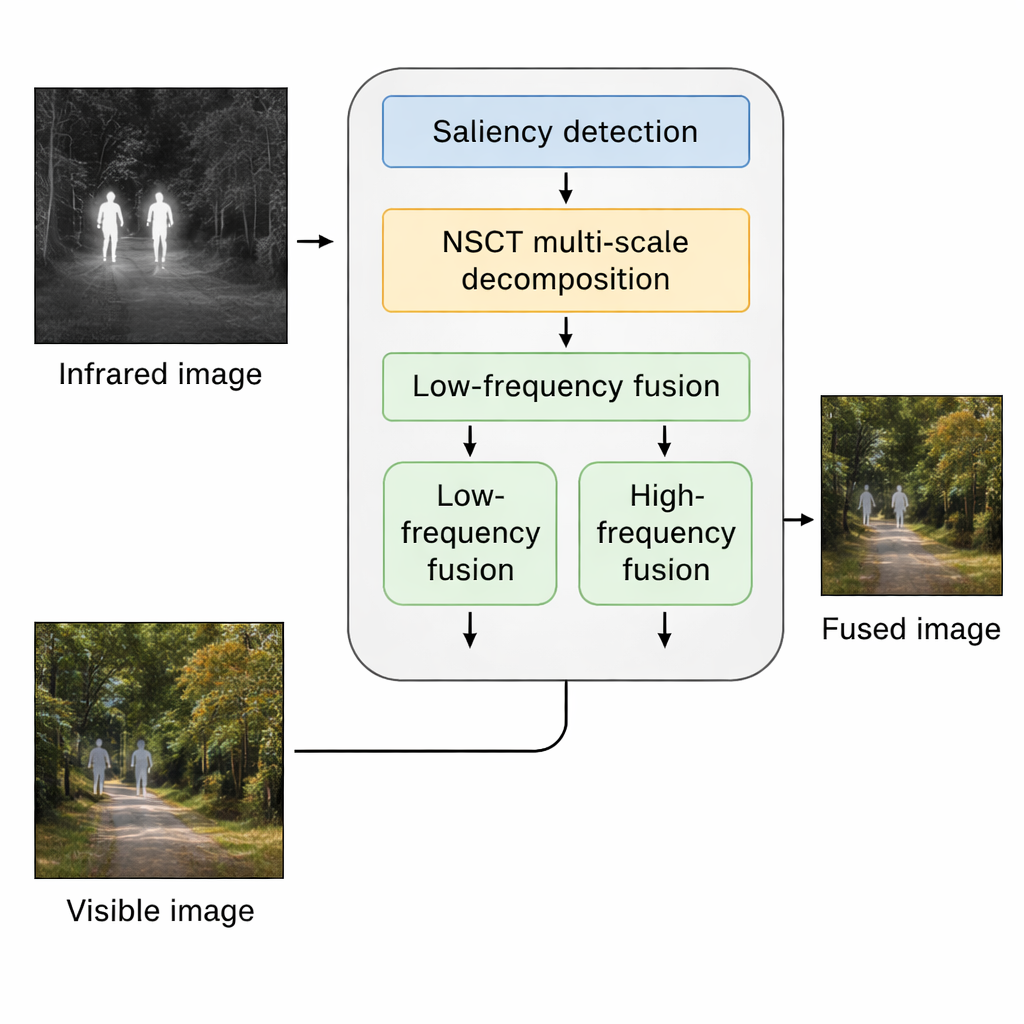

Peeling Apart Coarse Shapes and Fine Details

Once the algorithm knows where the key infrared targets lie, it breaks both the infrared and visible images into layers that separate coarse structures from fine details using a mathematical tool called the Non‑Subsampled Contourlet Transform (NSCT). The low‑frequency layers contain broad brightness patterns, such as the sky, roads, or walls, while the high‑frequency layers capture edges, textures, and small features. For the coarse layers, the method blends the two sources using both the improved infrared saliency map and a Laplacian‑based measure of how sharp local structures are. This helps avoid washed‑out images where either the warm objects overpower the scene or the visible background drowns out important targets.
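The exact blending rule is not given in this summary, so the following is only a sketch of how a saliency map and a Laplacian sharpness measure could jointly set a per‑pixel weight for the coarse layers. The weighting formula, the `alpha` parameter, and the function names are hypothetical, and the inputs are assumed to be low‑frequency layers that have already been produced by some decomposition.

```python
def laplacian_abs(img):
    """Absolute 4-neighbour Laplacian response with clamped borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            up = img[max(y - 1, 0)][x]
            down = img[min(y + 1, h - 1)][x]
            left = img[y][max(x - 1, 0)]
            right = img[y][min(x + 1, w - 1)]
            out[y][x] = abs(up + down + left + right - 4 * img[y][x])
    return out


def fuse_low(ir_low, vis_low, ir_saliency, alpha=0.5, eps=1e-9):
    """Blend coarse layers; the weight formula is an illustrative assumption.

    ir_saliency is expected in [0, 1]. alpha balances the saliency cue
    against the relative Laplacian sharpness of the infrared layer.
    """
    lap_ir = laplacian_abs(ir_low)
    lap_vis = laplacian_abs(vis_low)
    h, w = len(ir_low), len(ir_low[0])
    fused = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Share of local sharpness contributed by infrared (0.5 when both are flat).
            sharp = (lap_ir[y][x] + eps) / (lap_ir[y][x] + lap_vis[y][x] + 2 * eps)
            weight = alpha * ir_saliency[y][x] + (1 - alpha) * sharp
            fused[y][x] = weight * ir_low[y][x] + (1 - weight) * vis_low[y][x]
    return fused
```

Because the weight stays between 0 and 1, every fused pixel lies between the infrared and visible values, which is one simple way to keep either source from overpowering the other.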

Keeping Textures Sharp, Noise Under Control

The high‑frequency layers require a different strategy, because they are where both useful texture and distracting noise live. Here the method first picks, region by region, whichever sensor offers stronger local detail. Then it refines this initial choice with a weighted least‑squares procedure that leans toward the cleaner, more informative visible‑light textures while still allowing meaningful infrared patterns through. The result is a fused image where tree branches, building edges, and road markings look crisp, but speckled infrared artifacts are reduced.
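The first step described above, choosing the sensor with stronger local detail, can be sketched as a windowed "pick the more active source" rule. This is an illustrative version only: the activity measure (sum of absolute coefficients in a small window), the tie‑break toward the visible image, and the function names are assumptions, and the weighted least‑squares refinement is deliberately left out.

```python
def local_activity(coeffs, radius=1):
    """Sum of absolute coefficients in a (2r+1)x(2r+1) window, clamped at borders."""
    h, w = len(coeffs), len(coeffs[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += abs(coeffs[yy][xx])
            out[y][x] = total
    return out


def fuse_high(ir_high, vis_high, radius=1):
    """Keep, per pixel, the coefficient from the source with more local detail.

    Returns the fused layer and a boolean map marking where infrared was chosen.
    Ties go to the visible image (an assumption made for this sketch).
    """
    act_ir = local_activity(ir_high, radius)
    act_vis = local_activity(vis_high, radius)
    fused, from_ir = [], []
    for y in range(len(ir_high)):
        fused_row, mask_row = [], []
        for x in range(len(ir_high[0])):
            take_ir = act_ir[y][x] > act_vis[y][x]
            mask_row.append(take_ir)
            fused_row.append(ir_high[y][x] if take_ir else vis_high[y][x])
        fused.append(fused_row)
        from_ir.append(mask_row)
    return fused, from_ir
```

A hard per‑pixel choice like this tends to produce abrupt switches between sources, which is one reason a smoothing refinement step is useful afterwards.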

Better Pictures, Better Machine Decisions

The team tested their approach on several public datasets and their own low‑light images, comparing it to traditional techniques and modern deep‑learning methods. Visual inspection showed that the fused images had clearer backgrounds, higher contrast, and more conspicuous targets, especially in dim corridors, streets at night, and cluttered outdoor scenes. Objective measures of information content, sharpness, and contrast mostly favored the new method or showed it to be well balanced across metrics. Crucially, when these fused images were fed into a popular object‑detection system (YOLOv5s), detection accuracy, precision, and recall all improved noticeably. In plain terms, the algorithm does not just make nicer‑looking pictures; it also helps automated systems find people and objects more reliably. This suggests that smarter fusion of infrared and visible imagery could play a key role in safer autonomous driving, more effective surveillance, and more dependable robots operating in the dark or in visually complex environments.
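This summary does not name the exact objective measures used. As an illustration of what "information content, sharpness, and contrast" can mean in practice, the sketch below computes three measures commonly seen in image‑fusion evaluations: histogram entropy, average gradient, and standard deviation. Treating these three as the relevant metrics is an assumption, as are the function names.

```python
import math
from collections import Counter


def entropy(img):
    """Shannon entropy (bits) of the grey-level histogram: a proxy for information content."""
    pixels = [int(v) for row in img for v in row]
    n = len(pixels)
    return sum((c / n) * math.log2(n / c) for c in Counter(pixels).values())


def std_dev(img):
    """Population standard deviation of intensities: a proxy for global contrast."""
    pixels = [v for row in img for v in row]
    mean = sum(pixels) / len(pixels)
    return math.sqrt(sum((v - mean) ** 2 for v in pixels) / len(pixels))


def average_gradient(img):
    """Mean forward-difference gradient magnitude: a proxy for sharpness.

    Expects an image with at least two rows and two columns.
    """
    h, w = len(img), len(img[0])
    total = 0.0
    for y in range(h - 1):
        for x in range(w - 1):
            dx = img[y][x + 1] - img[y][x]
            dy = img[y + 1][x] - img[y][x]
            total += math.sqrt((dx * dx + dy * dy) / 2)
    return total / ((h - 1) * (w - 1))


flat = [[7] * 4 for _ in range(4)]               # no detail at all
split = [[0, 0, 255, 255] for _ in range(4)]     # one hard vertical edge
scores = {name: (entropy(im), std_dev(im), average_gradient(im))
          for name, im in (("flat", flat), ("split", split))}
```

A perfectly flat image scores zero on all three measures, while the two‑tone image carries one bit of entropy and much higher contrast and gradient, which matches the intuition that higher values indicate a more detailed picture.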

Citation: Fan, X., Kong, F., Shi, H. et al. Infrared and visible image fusion algorithm based on NSCT and improved FT saliency detection. Sci Rep 16, 7144 (2026). https://doi.org/10.1038/s41598-026-37670-0

Keywords: infrared-visible fusion, image saliency, multi-sensor imaging, night vision, computer vision