Clear Sky Science

Cognitive models facilitate real-time inference of latent motives

Why guessing hidden goals matters

Every day, you silently read the intentions of people around you—whether a driver is about to merge into your lane, a cyclist will stop, or a coworker is trying to help or compete. These split-second judgments rely on interpreting hidden motives from visible movements. Today’s artificial intelligence can be extremely accurate in prediction, but often acts as a “black box” that cannot explain why it made a decision. This study asks whether psychological models of human behavior can give AI a more humanlike sense of others’ motives, making it faster, more accurate, and easier to trust.

A simple game of chasing and dodging

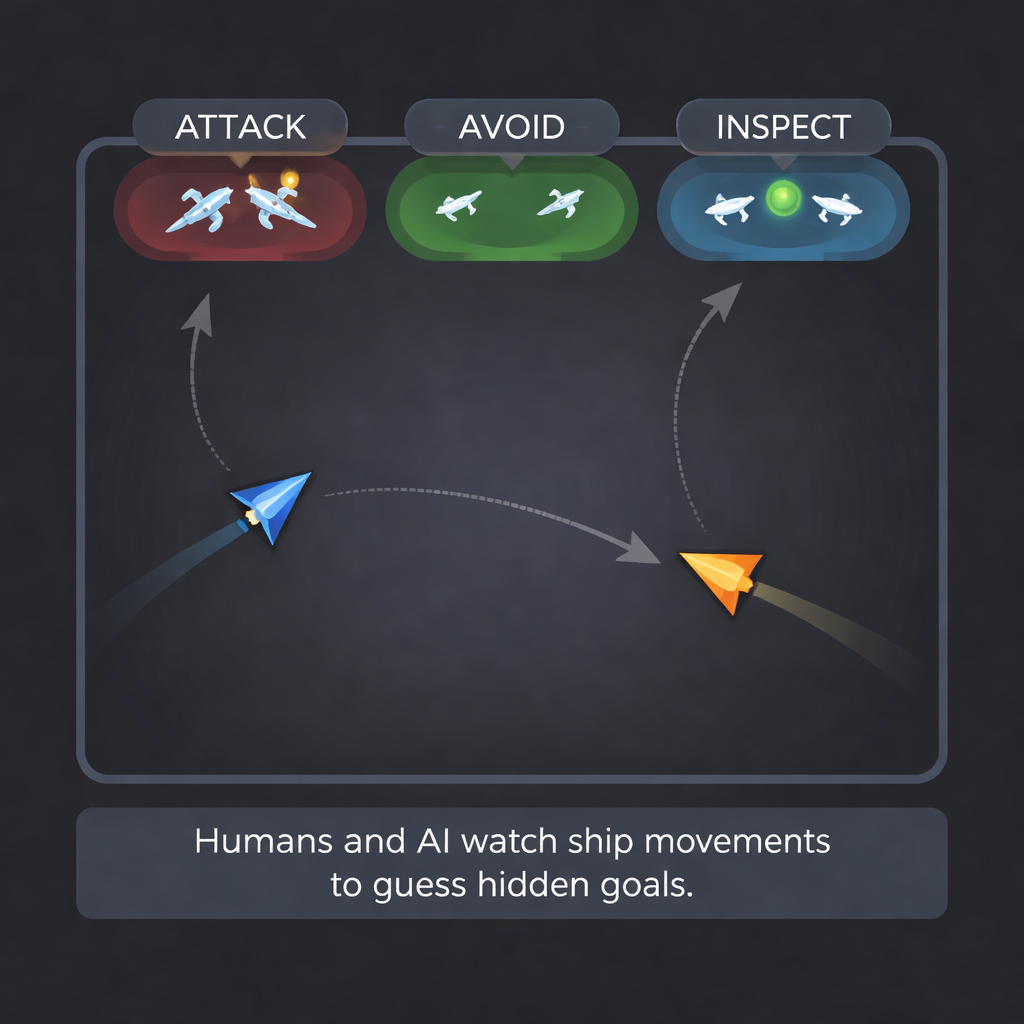

To explore this, the researchers built a stripped-down video game. In each 10-second round, a human player steered a triangular “ship” with a joystick while a computer-controlled ship moved according to one of several patterns. The human was secretly assigned one of three goals: Attack (collide with the other ship), Avoid (stay far away), or Inspect (stay nearby without colliding). The computer ship could behave aggressively, shyly, curiously, defensively, or just wander. These combinations created situations where the ships’ movements either aligned or conflicted; for example, an attacking human might chase a shy computer that kept trying to flee.

Measuring how well humans read hidden goals

The first step was to find out how well people themselves can read motives from motion. The team took gameplay from the eight best-performing ship pilots and turned each round into a short video. New volunteers watched these clips and had to guess the human player’s goal—attack, avoid, or inspect—after seeing only 1, 4, 7, or 10 seconds of movement. Across several groups, including participants with and without an autism diagnosis, people correctly identified the goal about two-thirds of the time. Accuracy rose as they saw more of the trial, and performance was similar across groups, giving a solid human benchmark for comparison.

A psychological blueprint for movement

Instead of feeding raw video-like data directly into a neural network, the authors built a cognitive model to capture the forces that might drive a person’s motion. Their “global-local objective pursuit” (GLOP) model assumes that a player balances several pulls at once: keeping a preferred distance from the opponent (too close feels dangerous, too far misses opportunities), staying in good positions on the screen rather than trapped in a corner, and matching or anticipating the other ship’s pace and direction. These factors are combined into a single “motivational” direction of movement, with extra terms to reflect how smoothly people move and how much randomness there is in their control.
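To make the idea of "combining several pulls into one direction" concrete, here is a minimal Python sketch. It is not the authors' GLOP equations: the function names, the weights (`w_dist`, `w_global`, `w_match`), the use of the screen center as a stand-in for "good positions," and the `inertia` smoothing term are all assumptions chosen for illustration. Random control noise is left out so the example stays deterministic.

```python
import math

def unit(dx, dy):
    """Return the unit vector for (dx, dy), or (0, 0) if it has no length."""
    n = math.hypot(dx, dy)
    return (dx / n, dy / n) if n > 0 else (0.0, 0.0)

def motivational_direction(player, opponent, opp_velocity, preferred_dist,
                           w_dist=1.0, w_global=0.5, w_match=0.5,
                           center=(0.0, 0.0)):
    """Blend three hypothetical pulls into a single unit heading."""
    # Local pull: approach when farther than the preferred distance,
    # retreat when closer (gap > 0 means too far, gap < 0 means too close).
    to_opp = (opponent[0] - player[0], opponent[1] - player[1])
    gap = math.hypot(*to_opp) - preferred_dist
    ux, uy = unit(*to_opp)
    local = (gap * ux, gap * uy)

    # Global pull: drift toward the screen center (assumed "good position").
    glob = (center[0] - player[0], center[1] - player[1])

    # Matching pull: follow the opponent's current velocity.
    vx = w_dist * local[0] + w_global * glob[0] + w_match * opp_velocity[0]
    vy = w_dist * local[1] + w_global * glob[1] + w_match * opp_velocity[1]
    return unit(vx, vy)

def smooth_heading(previous, target, inertia=0.5):
    """Mix the previous heading with the target; higher inertia = smoother turns."""
    return unit(inertia * previous[0] + (1 - inertia) * target[0],
                inertia * previous[1] + (1 - inertia) * target[1])
```

In this sketch, a player with a small `preferred_dist` heads toward the opponent (attack-like behavior), while a large `preferred_dist` pushes the player away (avoid-like behavior). That is the kind of interpretable parameter the article describes.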

Teaching AI to read minds from motion

To make this model useful in real time, the researchers simulated 100,000 game rounds using many different settings of the GLOP parameters. They then trained a recurrent neural network to take in sequences of ship positions and quickly estimate the hidden parameters—such as preferred distance or how strongly someone cares about global position. This network could recover several key parameters very accurately from just a few seconds of movement. Next, they trained a set of classifier networks to guess the player’s goal in three different ways: directly from raw position data, from simple summary statistics (like average distance and approach versus avoidance), or from the cognitive model’s inferred parameters. Finally, they built “ensemble” classifiers that combined these sources.
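The "simple summary statistics" mentioned above can be illustrated with a short Python sketch. The two features shown, mean distance and the fraction of time steps in which the ships got closer, are one plausible reading of "average distance and approach versus avoidance." The exact statistics used in the study are not given here, so the function name and feature definitions are assumptions.

```python
import math

def summary_stats(player_xy, opponent_xy):
    """Compute two hypothetical summary features from paired position sequences.

    player_xy, opponent_xy: equal-length lists of (x, y) tuples, one per time step.
    """
    # Distance between the two ships at every time step.
    dists = [math.dist(p, o) for p, o in zip(player_xy, opponent_xy)]
    mean_distance = sum(dists) / len(dists)

    # Step-to-step change in distance: negative means the ships moved closer.
    changes = [later - earlier for earlier, later in zip(dists, dists[1:])]
    if changes:
        approach_fraction = sum(1 for c in changes if c < 0) / len(changes)
    else:
        approach_fraction = 0.0  # a single frame carries no approach information

    return {"mean_distance": mean_distance,
            "approach_fraction": approach_fraction}

# Example: the player closes in on a stationary opponent.
stats = summary_stats([(0, 0), (1, 0), (2, 0)], [(4, 0), (4, 0), (4, 0)])
```

Features like these compress a whole trajectory into a few numbers, which may be part of why classifiers given summaries can be easier to train than ones fed raw positions.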

Beating the human benchmark

All of the AI classifiers matched or exceeded human performance, but the way information was prepared for them mattered. Networks that relied only on raw movement or only on model parameters performed similarly to people, at about 66% accuracy. Classifiers given simple summary statistics did better, and the best results came from combining those statistics with the cognitive model’s parameters, reaching about 72% accuracy. These model-informed systems also trained faster and more stably than those fed only raw data. When accuracy was tracked moment by moment during each round, the AI could update its guess about a player’s hidden goal in less than the time between screen refreshes, effectively inferring intent in real time.
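One common way to combine information sources is soft voting: average the class probabilities produced by separate classifiers and pick the most likely goal. The Python sketch below shows that technique only as an illustration. The article does not say how the study's ensemble was built, and the names `GOALS` and `soft_vote` and the example probabilities are made up.

```python
GOALS = ("attack", "avoid", "inspect")

def soft_vote(prob_sets, weights=None):
    """Average per-goal probabilities from several classifiers.

    prob_sets: list of probability lists, each ordered like GOALS.
    weights:   optional per-classifier weights (defaults to equal weighting).
    Returns (predicted_goal, averaged_probabilities).
    """
    if weights is None:
        weights = [1.0] * len(prob_sets)
    total = sum(weights)
    averaged = [
        sum(w * probs[i] for w, probs in zip(weights, prob_sets)) / total
        for i in range(len(GOALS))
    ]
    best = max(range(len(GOALS)), key=lambda i: averaged[i])
    return GOALS[best], averaged

# Hypothetical outputs from a statistics-based and a parameter-based classifier.
from_stats = [0.5, 0.3, 0.2]
from_params = [0.2, 0.6, 0.2]
goal, probs = soft_vote([from_stats, from_params])
```

Because the averaging is cheap, a combination step like this adds little delay to each updated guess.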

What this means for everyday AI

For a layperson, the takeaway is that weaving psychological theory into AI can help machines understand not just what people do, but why they do it. By translating messy movements into a small set of interpretable motives—like how close someone wants to be or how they trade safety against opportunity—the system becomes both more accurate and easier to explain. In future applications such as self-driving cars or human–AI teams, this kind of “cognitive front end” could help AI anticipate other agents’ intentions earlier and more reliably, potentially preventing collisions and misunderstandings while offering human-friendly explanations like “the other driver is likely trying to merge, not just drifting.”

Citation: Fitch, A.K., Kvam, P.D. Cognitive models facilitate real-time inference of latent motives. Sci Rep 16, 6444 (2026). https://doi.org/10.1038/s41598-026-37587-8

Keywords: theory of mind, cognitive modeling, intent inference, human–AI interaction, explainable AI