Clear Sky Science

Image processing pipeline for AI-driven nanoparticle megalibrary characterization

Why Tiny Particles Need Big Data Help

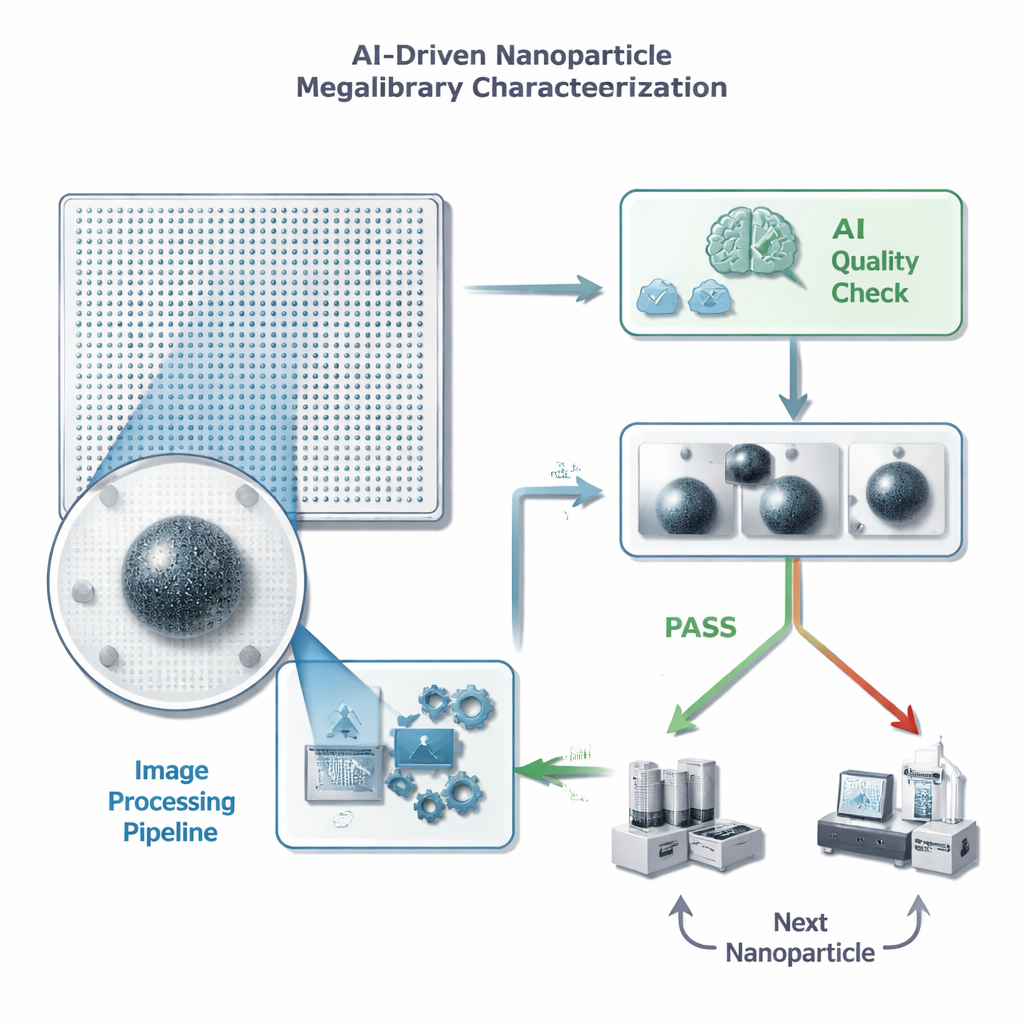

Modern materials science increasingly relies on making and testing huge numbers of tiny particles to discover better catalysts, batteries, and other advanced materials. New methods can now grow millions of different nanoparticles on a single chip, but checking the quality of each one through a microscope produces far more images than any human can reasonably review. This paper describes how researchers built an automated image-processing and AI pipeline that rapidly sorts “good” from “bad” nanoparticle images, cutting computing costs and speeding up experiments while keeping decisions highly reliable.

From Endless Images to Quick Decisions

Each nanoparticle in a “megalibrary” chip sits at a known position and can be imaged by an electron microscope. Before scientists invest time and expensive follow-up measurements in any one particle, they need a fast quality check: does the frame contain exactly one well-focused particle, free of distracting clutter or artifacts? The authors frame this as a simple pass/fail task for a machine-learning model, but with a strict time limit of less than half a second per image, because a single chip may hold millions of particles. They also emphasize that false positives are especially harmful: if the AI mistakenly passes a bad image, time and storage are wasted on useless detailed measurements, whereas occasionally missing a good particle is less damaging to overall progress.

Cleaning the View Before the AI Looks

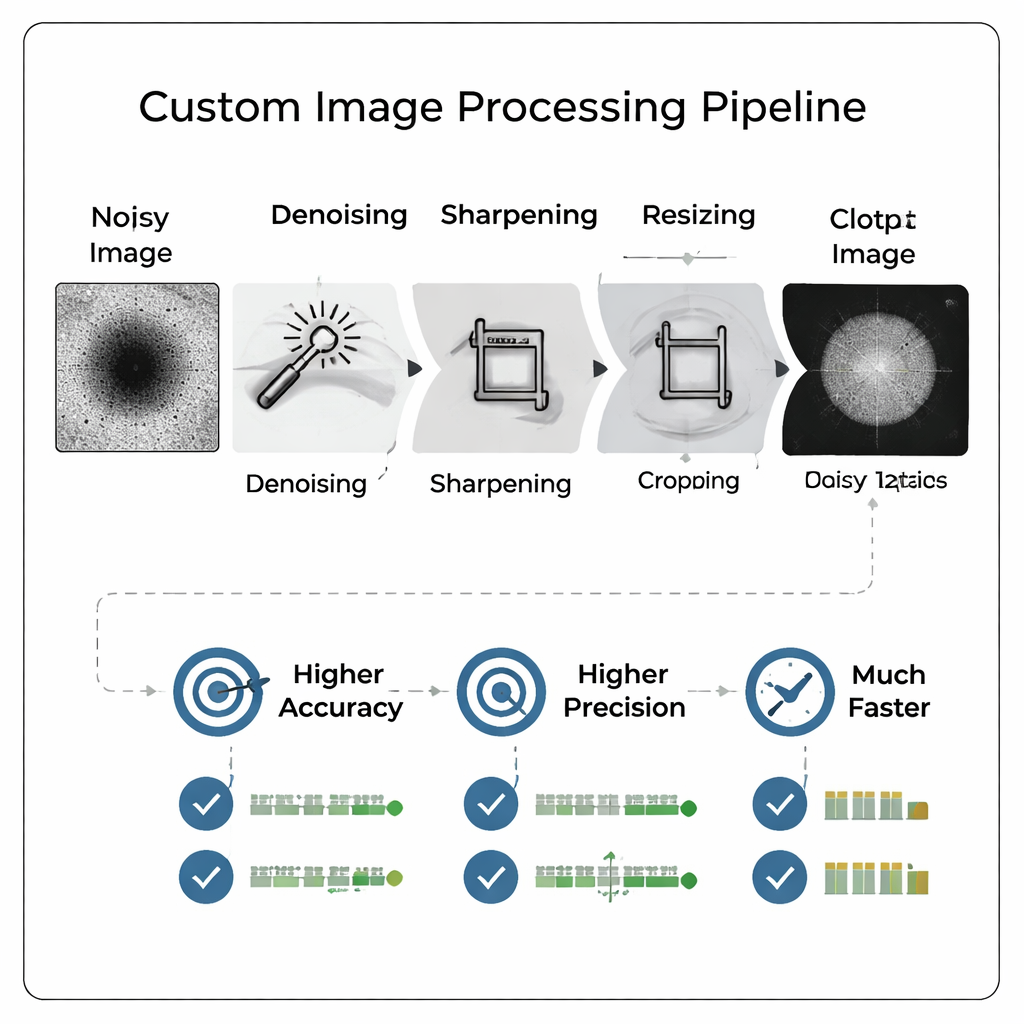

Instead of feeding raw, noisy microscope images directly into a large, complex neural network, the team designed a custom image-processing pipeline that “cleans up” the pictures first. The pipeline removes background noise, sharpens edges, crops tightly around the particle, and then shrinks the image to a much smaller size. Crucially, this preprocessing makes faint features easier to see and mimics the look of a higher-magnification image without re-imaging the sample. The result is a compact, high-contrast picture that can be fed to a relatively simple neural network, reducing both training time and storage needs while preserving the details that matter for quality judgments.
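The four steps described above (denoise, sharpen, crop, shrink) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' pipeline: their actual filters and parameters are not given here, so the mean-filter denoiser, unsharp-mask sharpening, half-range threshold crop (which assumes the particle appears brighter than the background), and nearest-neighbour resize are stand-ins chosen for simplicity, and every function name is hypothetical.

```python
import numpy as np


def box_blur(img: np.ndarray, k: int = 3) -> np.ndarray:
    """Mean filter over a k x k window (edge-padded); a simple stand-in denoiser."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)


def unsharp_mask(img: np.ndarray, amount: float = 1.0) -> np.ndarray:
    """Sharpen edges by adding back the detail that blurring removes."""
    return img + amount * (img - box_blur(img))


def crop_to_particle(img: np.ndarray, margin: int = 2) -> np.ndarray:
    """Crop to the bounding box of pixels above the half-range threshold.

    Hypothetical assumption: the particle is brighter than the background.
    """
    mask = img > (img.min() + img.max()) / 2
    if not mask.any():
        return img  # nothing stands out; leave the frame uncropped
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    r0, r1 = max(rows[0] - margin, 0), min(rows[-1] + margin + 1, img.shape[0])
    c0, c1 = max(cols[0] - margin, 0), min(cols[-1] + margin + 1, img.shape[1])
    return img[r0:r1, c0:c1]


def resize_nearest(img: np.ndarray, size: int) -> np.ndarray:
    """Nearest-neighbour resize to size x size (aspect ratio is not preserved)."""
    rows = (np.arange(size) * img.shape[0] / size).astype(int)
    cols = (np.arange(size) * img.shape[1] / size).astype(int)
    return img[np.ix_(rows, cols)]


def normalize(img: np.ndarray) -> np.ndarray:
    """Rescale intensities to [0, 1] to stretch contrast."""
    lo, hi = img.min(), img.max()
    return (img - lo) / (hi - lo) if hi > lo else np.zeros(img.shape)


def preprocess(raw: np.ndarray, size: int = 128) -> np.ndarray:
    img = box_blur(raw.astype(float))  # 1. suppress background noise
    img = unsharp_mask(img)            # 2. sharpen particle edges
    img = crop_to_particle(img)        # 3. crop tightly around the particle
    img = resize_nearest(img, size)    # 4. shrink to a small, fixed size
    return normalize(img)              # high-contrast input for the network
```

Applied to, say, a 512×512 frame, `preprocess(raw, size=128)` returns a 128×128 array. An analogue of the “downsized only” comparison would skip steps 1 to 3 and pass the raw frame straight to `resize_nearest`.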

Smarter Images Beat Bigger Models

The researchers rigorously compared many pipeline variants and resolutions, ultimately training 800 different models to see how image size and processing affect performance. They found that carefully processed images at modest resolutions (such as 128×128 pixels) let a small convolutional neural network outperform a previous, much larger model that had been discovered by an automated architecture search and trained on full 512×512 images. Accuracy improved by more than 13 percentage points, while recall, the ability to correctly catch good particles, rose by more than 18 percentage points. Precision, the key measure for avoiding wasted effort on bad particles, reached about 96 percent, and the combined performance metric the authors favor also improved.
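Accuracy, precision, and recall follow standard definitions based on counts of correct and incorrect pass/fail decisions. The sketch below computes them with “good image” treated as the positive class. The counts in the usage line are hypothetical and are not the paper's data. The F-beta score is included only as one common way to weight precision above recall; the summary does not name the authors' combined metric, so do not read it as theirs.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """Pass/fail counts, treating a "good image" as the positive class."""
    tp: int  # good images correctly passed
    fp: int  # bad images wrongly passed (the costly error here)
    tn: int  # bad images correctly rejected
    fn: int  # good images wrongly rejected

    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.tn + self.fn)

    def precision(self) -> float:
        # Of everything the model passed, what fraction was actually good?
        passed = self.tp + self.fp
        return self.tp / passed if passed else 0.0

    def recall(self) -> float:
        # Of all good images, what fraction did the model pass?
        good = self.tp + self.fn
        return self.tp / good if good else 0.0

    def f_beta(self, beta: float) -> float:
        # beta < 1 weights precision more heavily than recall.
        p, r = self.precision(), self.recall()
        b2 = beta * beta
        denom = b2 * p + r
        return (1 + b2) * p * r / denom if denom else 0.0


# Hypothetical counts, for illustration only.
c = Confusion(tp=96, fp=4, tn=80, fn=20)
print(c.accuracy(), c.precision(), round(c.recall(), 3), round(c.f_beta(0.5), 3))
```

With these made-up counts, precision is 0.96 while recall is only about 0.83. A precision-weighted score such as F0.5 penalizes the 4 false passes more heavily than the 20 missed good images, which matches the priority described earlier.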

Doing More with Far Less Data

One of the most striking results is that processing matters more than raw image size. When the team compared models trained on simple “downsized only” images with models trained on images from the full custom pipeline, the processed images consistently won, even when shrunk to sizes as small as 16×16 pixels. In fact, the best model using processed 16×16 images beat the best model using unprocessed 128×128 images on almost all metrics. The pipeline's benefit was also largest at lower microscope magnifications, where images are normally harder to interpret. Because lower-magnification images are faster to acquire, labs can scan chips more quickly without sacrificing decision quality.

Faster Decisions for Self-Driving Labs

By combining smart image processing with a lean AI model, the authors cut training times from many hours on a supercomputer to under a minute on a single graphics processor. Once trained, the system can process and classify a new image in about 75 milliseconds, well below the 500-millisecond target and far faster than a human reviewer. In practical terms, this enables rapid, reliable screening of nanoparticle megalibraries, helping researchers focus expensive instruments on the most promising candidates. As labs move toward more automated, “self-driving” discovery systems, approaches like this one, which clean up the data first and then apply streamlined AI, offer a powerful way to turn overwhelming image streams into actionable scientific insight.
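To put the per-image timings in perspective, the short calculation below multiplies a per-image time by a particle count. One million particles is an assumed, illustrative chip size (the text says only that a chip may hold millions). The estimate covers classification alone and ignores image acquisition and any parallel processing.

```python
def scan_hours(n_particles: int, seconds_per_image: float) -> float:
    """Hours needed to classify n_particles images one after another."""
    return n_particles * seconds_per_image / 3600


N = 1_000_000  # illustrative chip size (assumed; the text says "millions")
for label, t in [("500 ms target", 0.5), ("75 ms reported", 0.075)]:
    hours = scan_hours(N, t)
    print(f"{label}: {hours:.1f} h ({hours / 24:.1f} days)")
```

This prints 138.9 h (5.8 days) for the 500 ms target and 20.8 h (0.9 days) for 75 ms. Under these assumptions, the reported speed cuts classification of a million-particle chip from nearly a week to under a day.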

Citation: Day, A.L., Wahl, C.B., dos Reis, R. et al. Image processing pipeline for AI-driven nanoparticle megalibrary characterization. Sci Rep 16, 7675 (2026). https://doi.org/10.1038/s41598-026-37566-z

Keywords: nanoparticles, image processing, machine learning, materials discovery, electron microscopy