Clear Sky Science

Exploring anatomical similarity in zero-shot learning for bone abnormality detection

Why Smarter X-rays Matter

Broken bones are among the most common injuries, yet confirming a fracture on an X-ray still relies heavily on the trained eye of a radiologist. That expertise is valuable, but it is also time-consuming and in short supply in many hospitals and clinics around the world. This study asks a simple but powerful question: can an artificial intelligence system learn to spot bone problems in one body part—say the elbow—and then successfully find similar problems in other parts, like the wrist or fingers, without ever being retrained on those new regions?

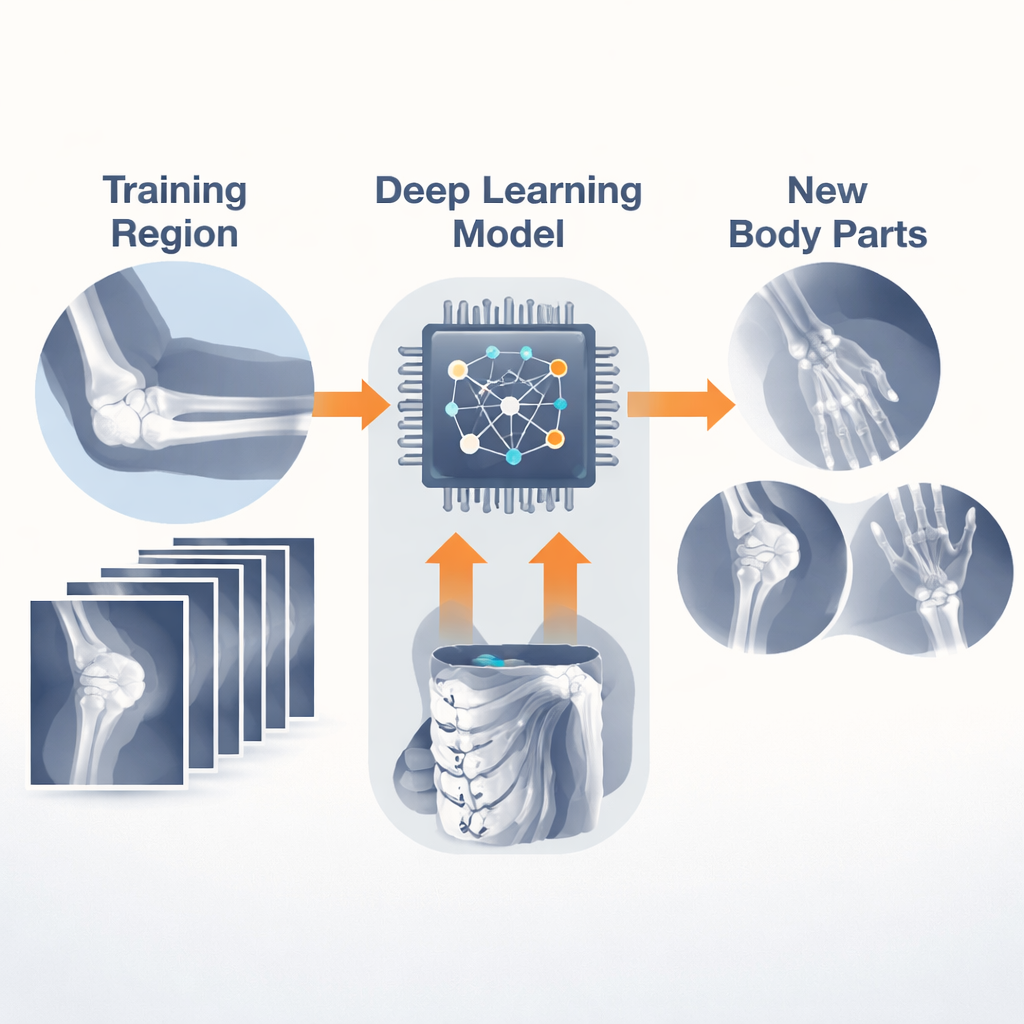

Teaching a Computer to Read Bones

To explore this idea, the researchers turned to a large public collection of upper-limb X-rays called the MURA dataset. Instead of focusing only on fractures, MURA labels each patient study as simply “normal” or “abnormal.” The team trained a compact deep learning model on X-rays from one specific region of the arm, such as the elbow or wrist, and then asked it to judge whether studies from other regions looked healthy or not. Importantly, the model never saw example images from these new regions during training—an approach known as “zero-shot” or out-of-domain learning.

Testing Every Combination of Body Parts

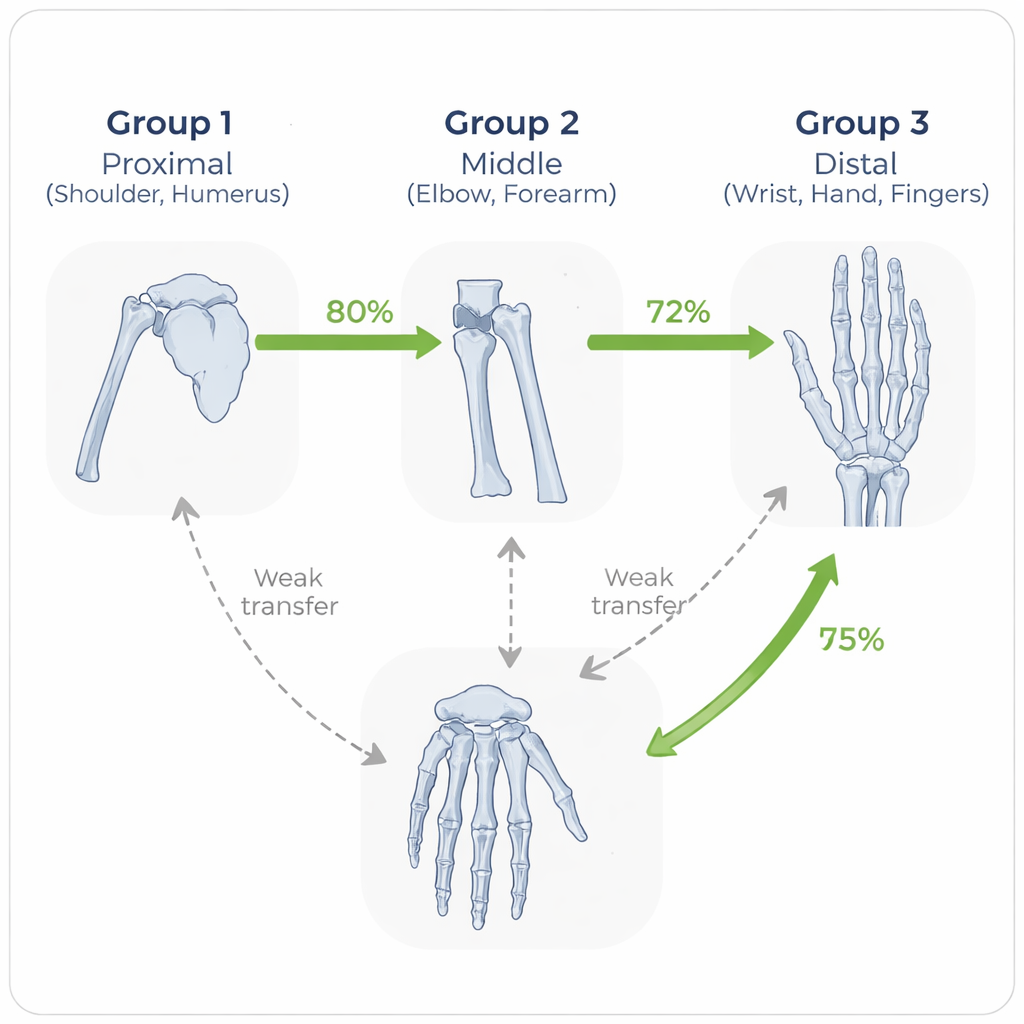

Rather than stopping at a few convenient tests, the authors systematically tried every possible train–test pairing across seven upper-limb regions: shoulder, humerus, elbow, forearm, wrist, hand, and finger. That gives 49 combinations, counting the cases where the model is tested on the same region it was trained on. They also treated each patient study, which can include several X-ray views, as a single decision unit by averaging the model’s confidence across its images, which is closer to how doctors look at a case. For each pairing, they calculated accuracy with confidence intervals, and they repeated key experiments with a second, more expressive neural network to see whether the trends held up regardless of model design.
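To make the study-level decision concrete, here is a minimal Python sketch of averaging per-image confidence into one verdict per study and then putting an interval around the resulting accuracy. It is an illustration under stated assumptions, not the authors' code: the 0.5 threshold, the function names, and the choice of a Wilson score interval are all assumptions, since the summary does not say which interval method or cutoff the paper used.

```python
from collections import defaultdict
from math import sqrt


def study_level_predictions(image_probs, threshold=0.5):
    """Average per-image abnormality probabilities within each study.

    image_probs: iterable of (study_id, prob_abnormal) pairs, one per image.
    threshold:   assumed decision cutoff (not taken from the paper).
    Returns {study_id: (mean_prob, predicted_label)} with label 1 = abnormal.
    """
    per_study = defaultdict(list)
    for study_id, prob in image_probs:
        per_study[study_id].append(prob)

    predictions = {}
    for study_id, probs in per_study.items():
        mean_prob = sum(probs) / len(probs)
        predictions[study_id] = (mean_prob, int(mean_prob >= threshold))
    return predictions


def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%).

    Assumption: the paper's interval method is not stated in this summary;
    Wilson is used here only as one common choice.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half = z * sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return centre - half, centre + half


# Toy inputs for illustration only; these are not values from the study.
toy_image_probs = [
    ("study_1", 0.9), ("study_1", 0.7),
    ("study_2", 0.2), ("study_2", 0.4), ("study_2", 0.3),
    ("study_3", 0.6), ("study_3", 0.2),
]
toy_labels = {"study_1": 1, "study_2": 0, "study_3": 1}

toy_preds = study_level_predictions(toy_image_probs)
n_correct = sum(toy_preds[s][1] == toy_labels[s] for s in toy_labels)
low, high = wilson_interval(n_correct, len(toy_labels))
print(f"accuracy={n_correct / len(toy_labels):.2f}, interval=({low:.2f}, {high:.2f})")
```

Averaging before thresholding means one ambiguous view cannot flip a study on its own, which matches the idea of judging the case as a whole rather than image by image.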

When Similar Bones Help Each Other

A striking pattern emerged: the model performed best when it was tested on the same body part it was trained on, and next best when training and testing parts were anatomically similar. For example, a model trained on forearm images transferred well to elbow images, and a wrist-trained model did relatively well on hand and finger studies. In contrast, performance dropped when the model had to jump between very different regions, such as from hand to humerus. By grouping bones into proximal (shoulder, humerus), middle (elbow, forearm), and distal (wrist, hand, finger) regions, the team showed that “within-group” transfers were consistently stronger than “between-group” ones.
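The grouping described above can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' analysis code: the names `GROUP_OF`, `transfer_kind`, and `summarize` are hypothetical, and treating same-region pairs as their own category (rather than as within-group transfers) is an assumption made here so that "transfer" always means a different body part.

```python
from collections import Counter
from itertools import product
from statistics import mean

# Regions and anatomical groups as listed in the article.
REGIONS = ["shoulder", "humerus", "elbow", "forearm", "wrist", "hand", "finger"]
GROUP_OF = {
    "shoulder": "proximal", "humerus": "proximal",
    "elbow": "middle", "forearm": "middle",
    "wrist": "distal", "hand": "distal", "finger": "distal",
}


def all_pairings(regions=REGIONS):
    """Every ordered (train_region, test_region) pair, same-region included."""
    return list(product(regions, repeat=2))


def transfer_kind(train, test):
    """Classify a pairing as same-region, within-group, or between-group."""
    if train == test:
        return "same-region"
    if GROUP_OF[train] == GROUP_OF[test]:
        return "within-group"
    return "between-group"


def summarize(accuracy):
    """Mean accuracy per transfer kind.

    accuracy: {(train_region, test_region): accuracy}, supplied by the caller
    from their own evaluation runs. Kinds with no entries are omitted.
    """
    buckets = {"same-region": [], "within-group": [], "between-group": []}
    for (train, test), acc in accuracy.items():
        buckets[transfer_kind(train, test)].append(acc)
    return {kind: mean(vals) for kind, vals in buckets.items() if vals}


# How the 49 pairings split across the three kinds.
kind_counts = Counter(transfer_kind(tr, te) for tr, te in all_pairings())
print(dict(kind_counts))
```

Passing a dictionary of measured accuracies to `summarize` would then give one average per category, which is the kind of comparison behind the "within-group" versus "between-group" finding.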

Beyond a Single Dataset or Network

To check that these observations were not quirks of one dataset or model, the researchers tested their trained systems on a second collection of X-rays called FracAtlas, which includes hand, shoulder, hip, and leg images from different hospitals. Without any fine-tuning, a model trained on MURA hand images did well on leg fractures but showed weaker performance on hip and shoulder. They also repeated some experiments with a different neural network architecture and saw similar cross-region patterns. Additional analyses varied the image resolution and examined where the model “looked” in the X-ray using heat maps, revealing that successful predictions often focused on clinically meaningful bone regions, while mistakes sometimes arose from distractions like labels or borders in the image.

What This Means for Real-World Care

For non-specialists and health systems with limited resources, the message is both encouraging and cautionary. The study shows that an AI tool trained on one well-labeled set of X-rays can meaningfully help evaluate other, similar body parts without requiring huge new datasets each time. However, its reliability falls off when the new regions differ too much from what it has seen before. In everyday terms, a system that learns fractures in the wrist can be a helpful assistant for the hand and fingers, but should not be blindly trusted for the shoulder or hip. Understanding these limits can guide more efficient data collection—prioritizing key anatomical groups—and support safer deployment of AI in clinics that have few radiologists, helping more patients receive timely, accurate assessments of bone injuries.

Citation: Kutbi, M., Shaban, K. & Khogeer, A. Exploring anatomical similarity in zero-shot learning for bone abnormality detection. Sci Rep 16, 6390 (2026). https://doi.org/10.1038/s41598-026-37516-9

Keywords: bone fracture detection, medical imaging AI, zero-shot learning, X-ray analysis, transfer learning