Clear Sky Science

A clinically applicable and generalizable deep learning model for anterior mediastinal tumors in CT images across multiple institutions

Why spotting rare chest tumors matters

Most of us will never hear the phrase “anterior mediastinal tumor” in a clinic, precisely because these growths—often involving the thymus gland in front of the heart—are rare. Yet when they do appear, they are hard to recognize and even harder to measure accurately on CT scans, tasks that typically demand specialists at major cancer centers. This study explores whether a carefully trained artificial intelligence (AI) system can help doctors across many hospitals reliably find and outline these elusive tumors on routine CT images, potentially improving diagnosis and treatment planning for patients who might otherwise slip through the cracks.

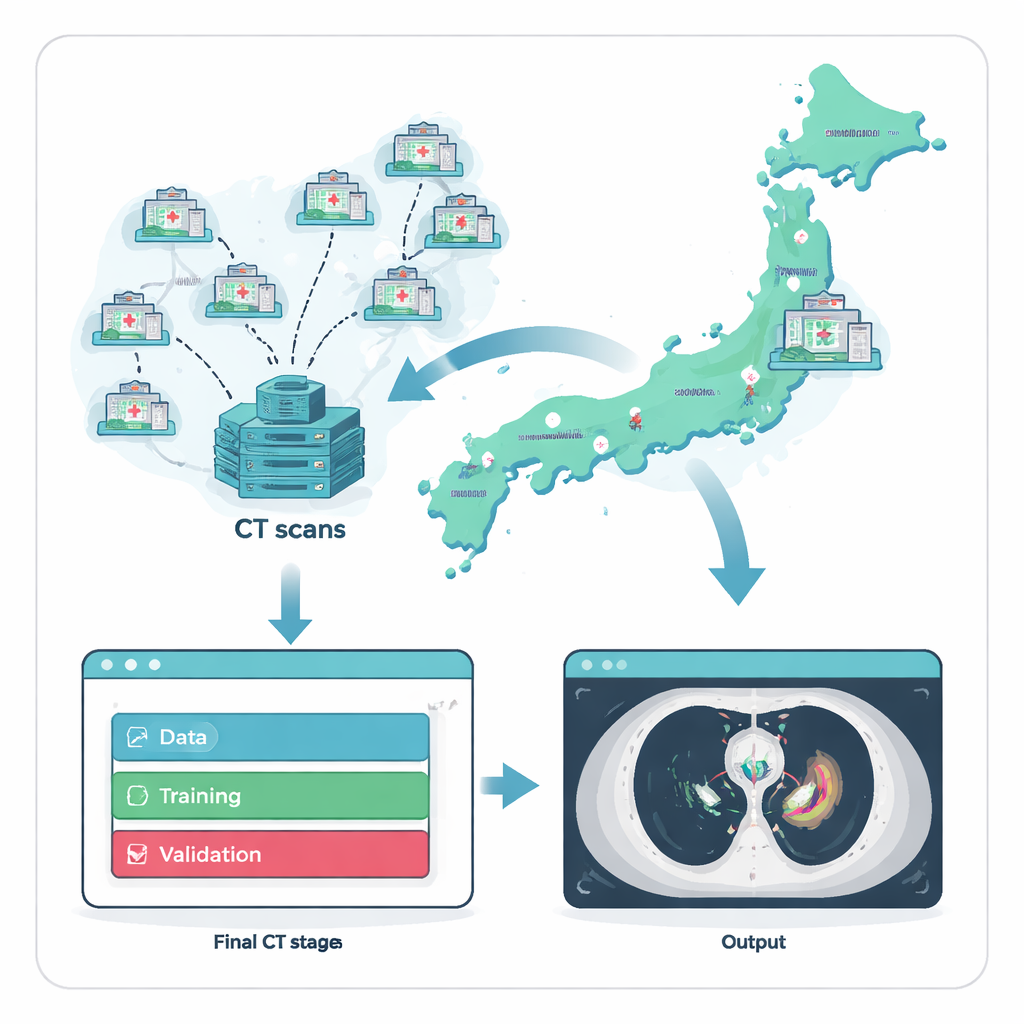

Gathering scarce cases from across a country

Because anterior mediastinal tumors are uncommon, the first hurdle is simply finding enough examples to train an AI system. The researchers tackled this by working with Japan’s National Cancer Center Hospital and 135 referring hospitals nationwide. Over two decades, they compiled 711 chest CT scans, each from a different adult patient whose tumor diagnosis had been confirmed under the microscope. To ensure a fair and realistic test, they split the data into three groups: a large set for training, a smaller set for tuning the model during development, and a fully separate external test set of 164 scans drawn from 121 hospitals that did not contribute any training images. This strict separation shows how the system is likely to perform when introduced into new hospitals it has never “seen” before.
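For readers who want to see what "no hospital appears on both sides" means in practice, here is a minimal sketch of a hospital-level split. It is an illustration only: the function name `split_by_hospital`, the `test_fraction` parameter, and the toy scan and hospital IDs are all assumptions, and the article does not describe how the study actually assigned hospitals to each group.

```python
import random
from collections import defaultdict


def split_by_hospital(scans, test_fraction=0.2, seed=0):
    """Split (scan_id, hospital_id) pairs so that no hospital is on both sides.

    Hypothetical helper for illustration; not the study's procedure.
    """
    by_hospital = defaultdict(list)
    for scan_id, hospital_id in scans:
        by_hospital[hospital_id].append(scan_id)

    # Shuffle whole hospitals, not individual scans.
    hospitals = sorted(by_hospital)
    random.Random(seed).shuffle(hospitals)
    n_test = max(1, round(len(hospitals) * test_fraction))

    test_hospitals = hospitals[:n_test]
    dev_hospitals = hospitals[n_test:]
    dev = [s for h in dev_hospitals for s in by_hospital[h]]
    test = [s for h in test_hospitals for s in by_hospital[h]]
    return dev, test


# Toy data: 50 made-up scans spread evenly over 10 made-up hospitals.
scans = [(f"scan{i}", f"hosp{i % 10}") for i in range(50)]
hospital_of = dict(scans)

dev, test = split_by_hospital(scans)
dev_hospitals = {hospital_of[s] for s in dev}
test_hospitals = {hospital_of[s] for s in test}
print(len(dev), len(test), dev_hospitals & test_hospitals)
```

Shuffling hospitals rather than scans is the design choice that matters: a scan-level shuffle would let images from the same institution leak into both groups and make the test look easier than a real deployment.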

Turning scans into trustworthy teaching material

An AI model is only as good as the examples it learns from, so the team invested heavily in expert labeling. For every CT scan, specialists traced the exact boundaries of tumors in the front part of the chest. A thoracic surgeon or radiology technologist drew the initial outlines, which were then checked by two experienced diagnostic radiologists. Any disagreements were settled by discussion, creating a high-quality reference that reflects how experts would interpret the images in practice. Clinicians then used a commercial no-code AI platform to build and train a three-dimensional model that mimics these expert outlines, which let medical staff steer the development process directly without writing computer code.

How the AI sees tumors in three dimensions

The heart of the system is a 3D version of a neural network architecture known as U-Net, designed to analyze whole CT volumes rather than single slices. It takes a stack of chest images and predicts, for every tiny volume element, whether it belongs to tumor or normal tissue, effectively painting a 3D mask over the tumor. During training, the model was exposed to random rotations, rescaling, and cropping of images so it would become robust to slight differences in patient position and scanner settings. The researchers then measured how closely the model’s predicted tumor regions matched the expert drawings, using standard overlap scores that reward both accurate boundary placement and complete coverage of the tumor volume.
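The overlap scores mentioned above can be made concrete with a small sketch. It assumes each 3D mask is stored as a Python set of voxel coordinates `(z, y, x)`, which is a simplification chosen to keep the example dependency-free; real pipelines typically use array libraries, and the helper names `dice_and_iou` and `cube` are hypothetical.

```python
def dice_and_iou(predicted, reference):
    """Return (Dice, IoU) for two masks given as sets of voxel coordinates."""
    overlap = len(predicted & reference)
    total = len(predicted) + len(reference)
    union = total - overlap
    # Two empty masks agree perfectly by convention.
    dice = 2 * overlap / total if total else 1.0
    iou = overlap / union if union else 1.0
    return dice, iou


def cube(x_start, size=4):
    """Toy 'tumor': a size**3 block of voxels starting at x = x_start."""
    return {
        (z, y, x)
        for z in range(size)
        for y in range(size)
        for x in range(x_start, x_start + size)
    }


expert = cube(0)  # reference outline
model = cube(1)   # prediction shifted by one voxel along x

dice, iou = dice_and_iou(model, expert)
print(f"Dice {dice:.2f}, IoU {iou:.2f}")  # Dice 0.75, IoU 0.60
```

For a single pair of masks the two scores are linked by Dice = 2·IoU / (1 + IoU), so Dice is always at least as large as IoU. Averages taken over many scans need not satisfy that relation exactly.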

Performance across many hospitals and tumor types

On the external test set from 121 independent hospitals, the AI model showed strong agreement with expert segmentations. On average, its overlap score (Dice) was 0.82, with a related measure called Intersection over Union at 0.72. Precision and recall were both around 0.82–0.85, meaning that most of the tissue the model marked as tumor really was tumor (precision) and that it captured most of the true tumor tissue (recall). Importantly, these results held up across different scanner manufacturers, tumor sizes, and tumor types, suggesting the system can cope with the variety found in real-world clinics. When evaluated as a detector, asking simply whether it finds each lesion at all, the model reached a sensitivity of about 0.87 even under a strict matching rule, with well under one false alarm per scan on average, a profile that is particularly attractive for cancer screening support.
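The detector-style evaluation can be sketched as follows. The article does not say which matching rule the study used, so this example assumes one common choice: a predicted lesion counts as a hit only if its Intersection over Union with a true lesion reaches a threshold (`iou_threshold`, set to 0.5 here as an assumption). Lesions are again toy sets of voxel coordinates, and `detection_stats` and `block` are hypothetical names.

```python
def lesion_iou(a, b):
    """IoU between two lesions given as sets of voxel coordinates."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def detection_stats(scans, iou_threshold=0.5):
    """Return (sensitivity, false alarms per scan).

    scans: list of (predicted_lesions, true_lesions); each lesion is a set.
    Each true lesion is greedily paired with its best unused prediction.
    """
    found = total_true = false_alarms = 0
    for predicted, truth in scans:
        total_true += len(truth)
        used = set()
        for true_lesion in truth:
            best_index, best_score = None, 0.0
            for i, pred_lesion in enumerate(predicted):
                score = lesion_iou(pred_lesion, true_lesion)
                if i not in used and score > best_score:
                    best_index, best_score = i, score
            if best_index is not None and best_score >= iou_threshold:
                used.add(best_index)
                found += 1
        # Every prediction left unmatched counts as a false alarm.
        false_alarms += len(predicted) - len(used)
    sensitivity = found / total_true if total_true else float("nan")
    per_scan = false_alarms / len(scans) if scans else float("nan")
    return sensitivity, per_scan


def block(start, stop):
    """Toy lesion: a one-voxel-thick run of voxels along x."""
    return {(0, 0, x) for x in range(start, stop)}


toy_scans = [
    ([block(1, 10)], [block(0, 10)]),   # good overlap: hit
    ([block(8, 12)], [block(0, 10)]),   # poor overlap: miss plus false alarm
    ([block(0, 10), block(20, 28), block(50, 53)],
     [block(0, 10), block(20, 30)]),    # two hits, one stray prediction
]
sensitivity, false_alarms_per_scan = detection_stats(toy_scans)
print(f"sensitivity {sensitivity:.2f}, false alarms per scan {false_alarms_per_scan:.2f}")
```

In this toy setup, three of the four true lesions are found and two predictions go unmatched across three scans. Raising `iou_threshold` makes the rule stricter, which can only lower sensitivity and raise the false-alarm count for the same predictions.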

Where the system helps and where humans stay crucial

A closer look at successes and failures revealed a clear pattern: the AI performed best on larger tumors and tended to struggle with very small or faint lesions, either partially missing them or mistaking nearby structures such as blood vessels or fluid collections for tumor. This aligns with everyday experience in radiology, where tiny or low-contrast findings are the easiest to overlook. The authors argue that the tool is therefore best used in a “human-in-the-loop” setting. It can serve as an efficient first reader that flags likely tumors and outlines their boundaries, supplying ready-made volumes for tasks such as treatment planning and surgery, while radiologists focus their attention on double-checking small, subtle, or ambiguous areas.

What this means for patients and future tools

To a layperson, the core message is that an AI system trained on a rare but serious group of chest tumors can reliably help doctors find and outline these cancers on CT scans, even in hospitals that never contributed data to its training. By delivering accurate 3D tumor maps and keeping false alarms low, the model could speed up diagnosis, support more precise radiation and surgical planning, and provide an extra safety net against missed lesions. At the same time, the work highlights that AI is not a replacement for expert judgment—especially for the smallest and faintest tumors—but a promising assistant that becomes more powerful as clinicians, imaging data, and simple-to-use AI platforms are brought together.

Citation: Takemura, C., Miyake, M., Kobayashi, K. et al. A clinically applicable and generalizable deep learning model for anterior mediastinal tumors in CT images across multiple institutions. Sci Rep 16, 6774 (2026). https://doi.org/10.1038/s41598-026-37504-z

Keywords: anterior mediastinal tumors, deep learning in CT imaging, medical image segmentation, cancer diagnosis support, radiology artificial intelligence