Clear Sky Science

An explainable deep learning framework for few shot crop disease detection in rice and sugarcane using CNN based feature extraction

Why spotting sick leaves matters

Rice and sugarcane feed billions of people and support many farming communities. When their leaves fall prey to disease, whole harvests can shrink, food prices can climb, and farmers can lose their livelihoods. Yet early diagnosis is hard: problems often start as small spots or color changes that busy farmers may overlook, and experts are not always nearby. This study introduces a computer-based system that can learn from just a handful of leaf photos, flag diseases automatically, and even show people exactly what in the image led to its diagnosis, helping farmers act sooner and with more confidence.

Smart eyes for the field

The researchers focus on two staple crops: rice and sugarcane. They draw on two public image collections of leaves, one captured in real sugarcane fields using many different smartphones, and a smaller, more controlled set of rice leaf photos. Each picture shows either a healthy leaf or one with a specific disease, such as brown spots, rust-colored pustules, or yellow streaks. By building on these shared datasets rather than private collections, the team aims for methods that other groups can test, reuse, and eventually embed in real farming tools ranging from smartphone apps to connected sensors in smart fields.

Teaching machines with very few examples

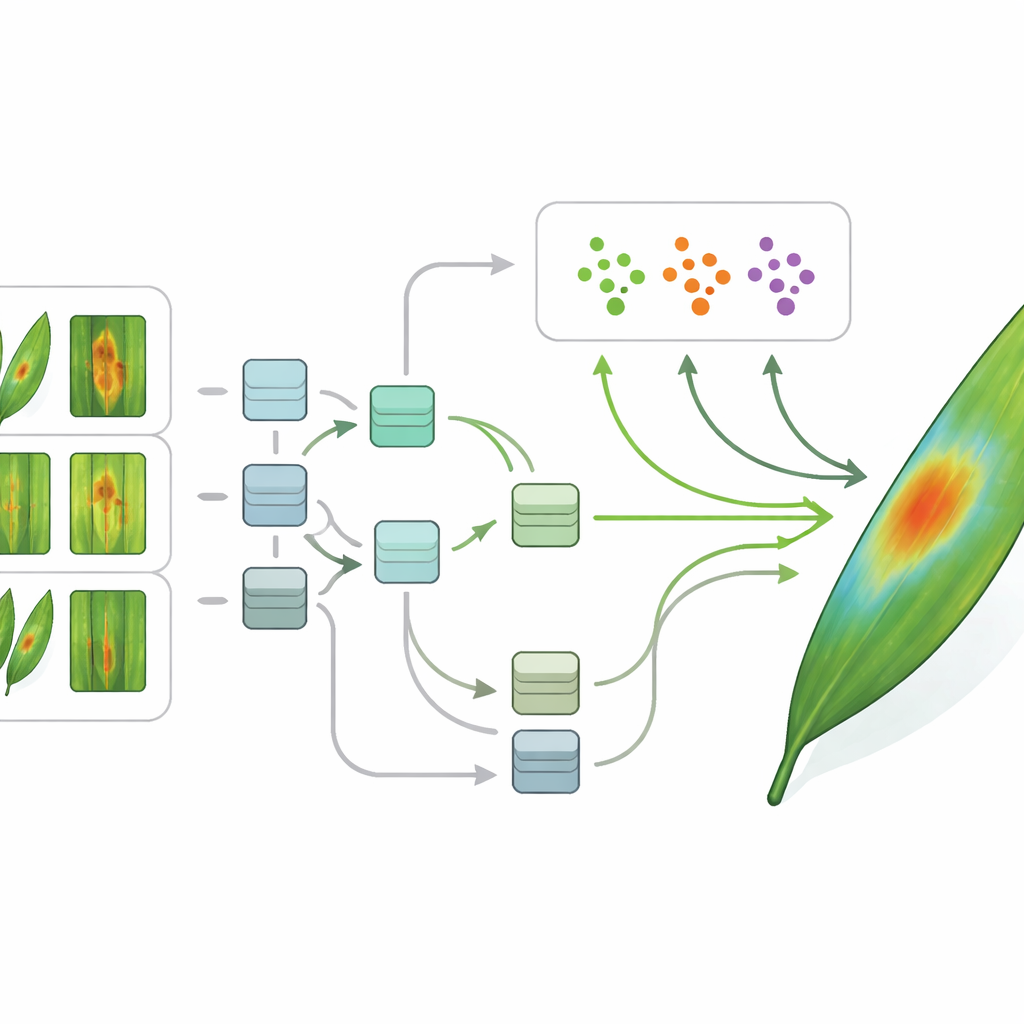

Modern artificial intelligence can be remarkably good at recognizing plant diseases, but it usually demands thousands of labeled images for every condition—a tall order in agriculture, especially for new or rare outbreaks. To sidestep this hurdle, the authors use "few-shot" learning, a family of techniques designed to learn from only a handful of examples. Their framework starts with standard image-processing steps: cleaning, resizing, and normalizing each photo so that the computer sees a consistent view. A type of deep learning model called a convolutional neural network then turns each leaf image into a compact set of numerical features that capture shapes, colors, and textures relevant to disease.
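The resizing and normalizing steps described above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the function names, the nearest-neighbour resizing rule, the target size, and the assumption of 8-bit grayscale pixels (0 to 255) are all hypothetical choices made for the example.

```python
# Illustrative preprocessing sketch (assumed details, not the paper's code).
# An image is represented as a list of rows, each row a list of pixel values.

def resize_nearest(image, out_h, out_w):
    """Resize a 2-D pixel grid using nearest-neighbour sampling."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]

def normalize(image, max_value=255.0):
    """Scale pixel values from [0, max_value] into [0.0, 1.0]."""
    return [[pixel / max_value for pixel in row] for row in image]

def preprocess(image, size=(4, 4)):
    """Resize first, then normalize, so every photo has the same shape and range."""
    out_h, out_w = size
    return normalize(resize_nearest(image, out_h, out_w))

if __name__ == "__main__":
    tiny_leaf = [[0, 255], [255, 0]]  # made-up 2x2 "photo"
    for row in preprocess(tiny_leaf):
        print(row)
```

Whatever the exact method, the point is that every photo reaches the neural network with the same dimensions and value range, regardless of which phone took it.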

Making the diagnosis understandable

On top of these features, the team trains two few-shot methods: Prototypical Networks and Model-Agnostic Meta-Learning. Prototypical Networks learn a kind of "center" for each disease in feature space and assign new leaves to the closest center; Model-Agnostic Meta-Learning learns how to adapt quickly to new tasks with only a few training steps. Crucially, the authors combine these methods with explainable AI tools. Using heatmap-style techniques, the system can highlight which parts of a leaf image most influenced its decision, such as a cluster of dark spots, a yellow stripe along the midrib, or the absence of obvious lesions in a healthy plant. This makes the model’s reasoning visible, allowing agronomists to check whether the computer is focusing on biologically meaningful signs of disease rather than on background clutter.
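The "closest center" idea behind Prototypical Networks can be sketched as follows. This is a simplified illustration under stated assumptions: the feature vectors are made-up two-dimensional numbers standing in for CNN outputs, the class labels are hypothetical, and squared Euclidean distance is assumed as the distance measure. It omits the training of the feature extractor entirely.

```python
# Nearest-prototype classification sketch (assumed details, not the paper's code).

def compute_prototypes(support):
    """Average the feature vectors of each class.

    support: dict mapping a class label to a list of feature vectors.
    Returns a dict mapping each label to its mean vector (the "center").
    """
    prototypes = {}
    for label, vectors in support.items():
        dim = len(vectors[0])
        prototypes[label] = [
            sum(vec[i] for vec in vectors) / len(vectors) for i in range(dim)
        ]
    return prototypes

def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def classify(query, prototypes):
    """Return the label whose prototype lies closest to the query vector."""
    return min(prototypes, key=lambda label: squared_distance(query, prototypes[label]))

if __name__ == "__main__":
    # Two labeled examples per class: a "2-shot" setting with invented features.
    support = {
        "healthy": [[0.0, 0.0], [0.2, 0.0]],
        "brown_spot": [[1.0, 1.0], [1.0, 0.8]],
    }
    protos = compute_prototypes(support)
    print(classify([0.9, 1.0], protos))
```

Because each class is summarized by a simple average, adding a new disease only requires a few labeled photos to compute one more center.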

How well the system performs

To judge whether their approach is truly useful, the researchers compare it with several well-known deep learning models that have been used for plant disease detection. They split each dataset into training and test portions and measure how often each method correctly identifies the disease type. On sugarcane leaves photographed in the field, the new framework correctly classifies about 92 percent of test images, beating standard architectures such as VGG, ResNet, Xception, and EfficientNet. On the rice dataset, it performs even better, correctly identifying around 98 percent of test images. Statistical tools that look at the balance between false alarms and missed cases show that the new method behaves like an excellent medical screener rather than a random guesser.
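The two kinds of measurement mentioned above, overall accuracy and the balance between false alarms and missed cases, can be illustrated with a short sketch. The labels and predictions here are invented for the example and are not results from the study; the function names are hypothetical, and treating one disease as "positive" against all other classes is an assumed convention.

```python
# Evaluation sketch with made-up labels (not data from the study).

def accuracy(y_true, y_pred):
    """Fraction of test images whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def detection_rates(y_true, y_pred, positive):
    """Return (true positive rate, false positive rate) for one class.

    True positive rate: share of truly diseased leaves that were caught.
    False positive rate: share of other leaves wrongly flagged (false alarms).
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    tpr = tp / (tp + fn) if (tp + fn) else 0.0
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    return tpr, fpr

if __name__ == "__main__":
    y_true = ["rust", "rust", "healthy", "healthy", "rust"]
    y_pred = ["rust", "healthy", "healthy", "rust", "rust"]
    print(accuracy(y_true, y_pred))
    print(detection_rates(y_true, y_pred, positive="rust"))
```

A good screener keeps the true positive rate high while keeping the false positive rate low; a random guesser would tend to have the two rates roughly equal.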

What this means for farmers

Put simply, the study shows that a computer can learn to spot multiple rice and sugarcane diseases accurately from only a small number of example images, and it can also point to the very spots and streaks on the leaf that drove its verdict. This blend of data efficiency and transparency is key for real-world use: it lowers the barrier to building tools for new crops and emerging diseases, and it gives farmers and experts visual evidence they can trust. With further testing in real fields and friendlier user interfaces, such explainable, few-shot systems could become everyday partners in smart agriculture, helping to protect harvests while reducing unnecessary pesticide use.

Citation: El-Behery, H., Attia, AF. & Rezk, N.G. An explainable deep learning framework for few shot crop disease detection in rice and sugarcane using CNN based feature extraction. Sci Rep 16, 8272 (2026). https://doi.org/10.1038/s41598-026-37501-2

Keywords: crop disease detection, rice and sugarcane, deep learning, explainable AI, smart farming