Clear Sky Science

Automated weed segmentation with knowledge based labeling for machine learning applications

Why smarter weed control matters

Weeds quietly steal a large share of the world’s food. They crowd out crops, lower yields, and drive farmers to spray more herbicide, which is costly for both wallets and the environment. This study shows how drones and clever image analysis can automatically map weeds in wheat fields, without anyone having to painstakingly label plants by hand. That kind of automation could speed up the development of tools for more precise spraying, cutting chemical use while keeping harvests high.

From blanket spraying to pinpoint targeting

Across the globe, fields without effective weed control can lose between one-fifth and nearly all of their potential yield. In places like Canada’s prairie provinces, herbicide costs already run into hundreds of millions of dollars each year, and herbicide-resistant weeds are spreading. New “precision agriculture” tools aim to spray only where weeds actually occur, instead of blanket treating entire fields. To do that, machines first need accurate weed maps, and modern approaches rely on machine learning models that look at every pixel of an image. The barrier is that these models demand huge, carefully labeled training datasets—usually created by humans drawing outlines around weeds, one image at a time. This study asks: can we skip that manual labeling step entirely?

A drone’s-eye view of wheat and weeds

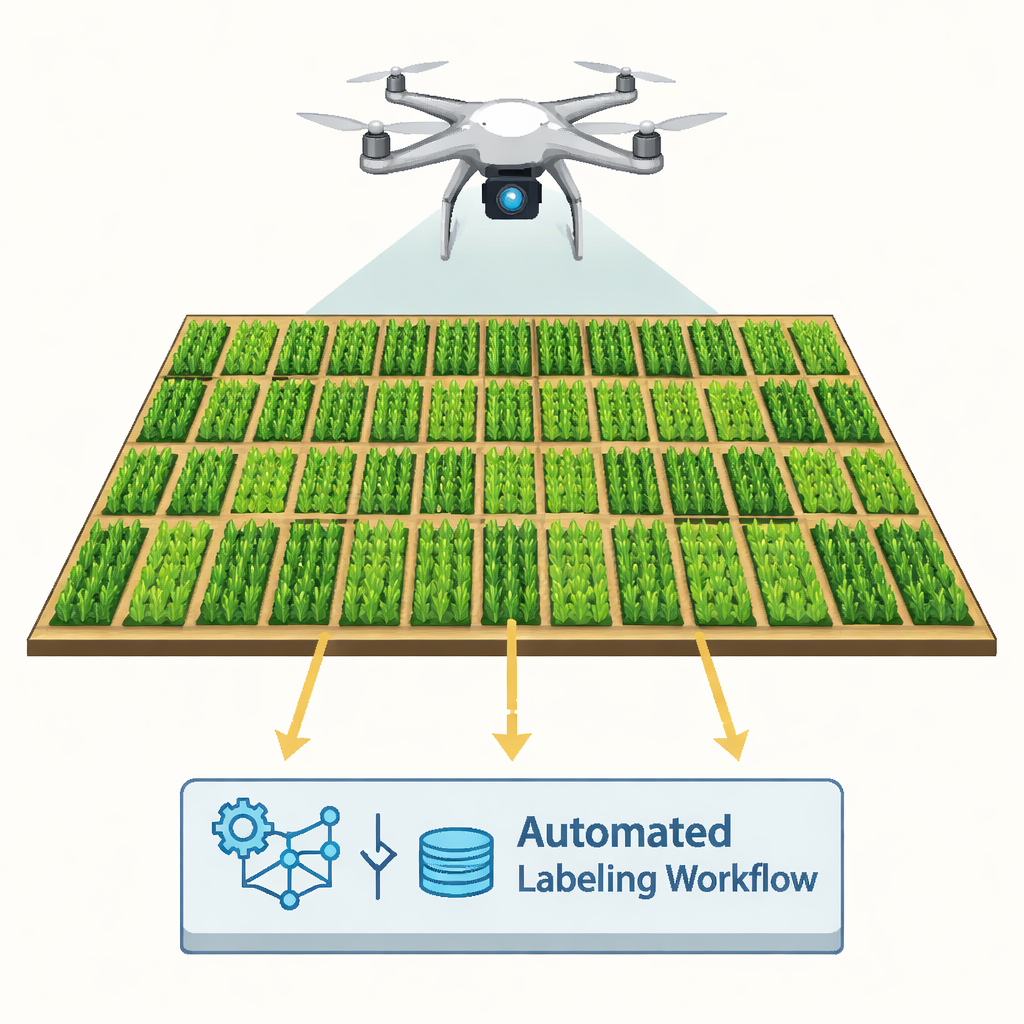

The researchers worked at a 2,000-square-meter experimental wheat field near Saskatoon, Canada. Wheat was planted in straight rows, and strips of several weed species—including kochia, wild oat, wild mustard, and false cleavers—were deliberately seeded between the crop rows. A drone equipped with a high-resolution RGB camera flew 10 meters above the ground, capturing imagery so detailed that each pixel represented less than a millimeter on the field surface. These images were stitched into a single “orthophoto,” essentially a precise map-like picture of the field, which became the input for an automated computer workflow.

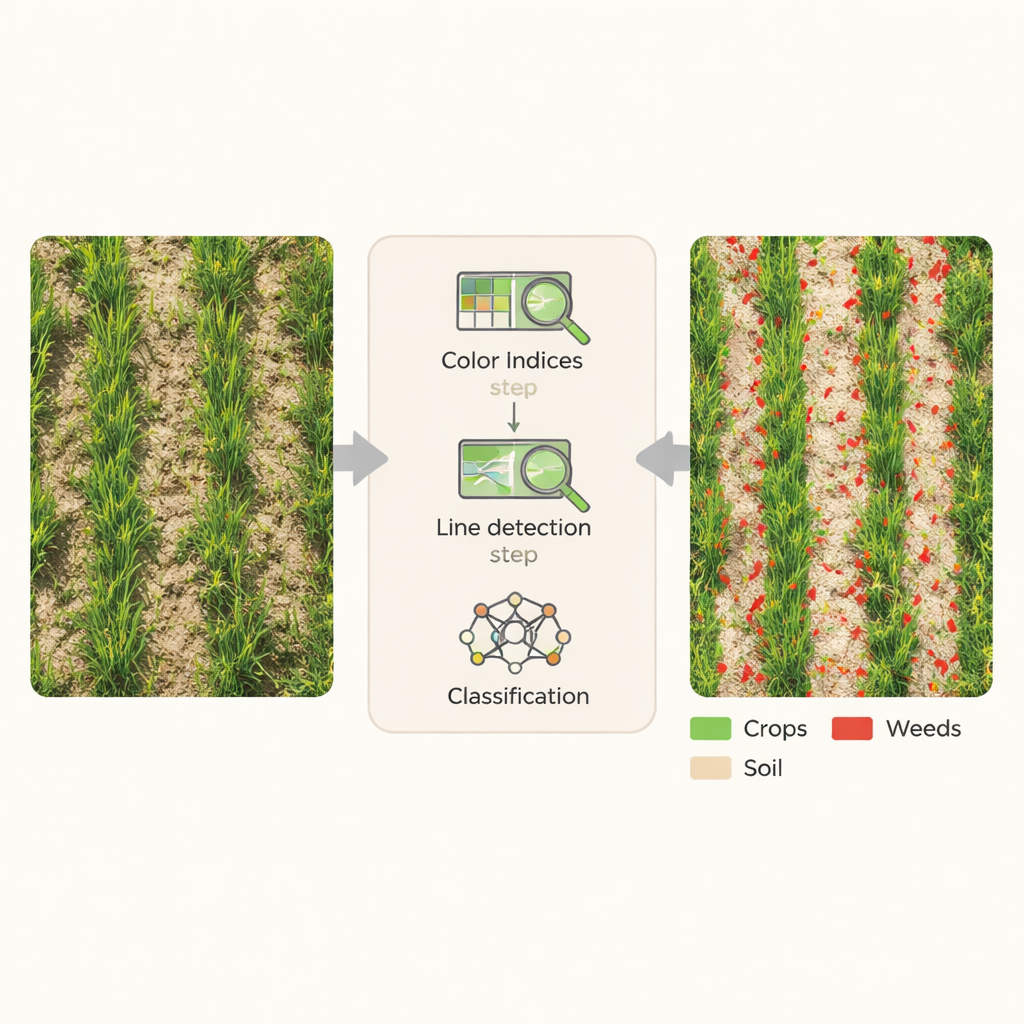

Turning color and shape into automatic labels

Instead of training a deep learning model with thousands of hand-labeled examples, the team built a knowledge-based pipeline inside specialized image-analysis software. First, they enhanced the image using simple color formulas that emphasize green plants against brown soil. Indices such as the Excess Green Index and the Color Index of Vegetation Extraction were combined to cleanly separate vegetation from bare ground. Next, the system looked for long, thin, line-like features that match the shape and orientation of wheat leaves and rows. By scanning the image at many angles and applying convolution filters (mathematical sliding windows that highlight repeating structures), the workflow could pinpoint where crop rows lay and, by contrast, where weeds were likely located between or within those rows.
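To make the color-formula idea concrete, here is a minimal sketch of the Excess Green Index in plain Python. It is an illustration, not the study's pipeline: the function names, the tiny synthetic image, and the threshold of 0.1 are all assumptions chosen for the example. The index is computed on chromatic coordinates (each channel divided by the sum of the three), so that ExG = 2g - r - b.

```python
def excess_green(r, g, b):
    """Excess Green index, ExG = 2g - r - b, on chromatic coordinates."""
    total = r + g + b
    if total == 0:
        return 0.0  # pure black pixel carries no color information
    return (2 * g - r - b) / total


def vegetation_mask(image, threshold=0.1):
    """Return a nested list of booleans (True = vegetation).

    image     -- nested list of (R, G, B) tuples
    threshold -- illustrative cut-off, NOT a value taken from the study
    """
    return [[excess_green(*px) > threshold for px in row] for row in image]


# Tiny synthetic 2x2 image: two greenish "plant" pixels, two brownish "soil" pixels.
image = [
    [(60, 140, 50), (120, 90, 60)],
    [(125, 95, 65), (55, 150, 45)],
]
mask = vegetation_mask(image)
print(mask)
```

Green pixels score well above zero while the brownish pixels here score exactly zero, which is why a single cut-off can separate plants from soil. A real workflow would typically pick that cut-off automatically from the image histogram rather than hard-coding it.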

From pixels to weed maps with no hand-drawing

Once crop rows and plant-covered areas were identified, the software applied automatic thresholding to sort every pixel into one of three classes: crop, weed, or bare soil. Chessboard-style segmentation and distance-to-row calculations helped refine these decisions, especially in tricky spots where weeds grew inside the crop rows. Importantly, all of these steps ran from a fixed set of rules—based on agronomic knowledge about how wheat and weeds look and where they grow—without using any manually labeled training samples. The image was processed in small tiles for efficiency, then reassembled into a single, fully classified map of the entire field.
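The rule-based sorting into three classes can be sketched as follows. This is a deliberately simplified toy, assuming that crop rows are perfectly vertical and that their column positions are already known; `classify`, `row_columns`, and `half_width` are hypothetical names introduced for illustration. The study detects rows from the image itself and uses additional refinements for weeds growing inside the rows, which this distance rule alone would miss.

```python
def classify(mask, row_columns, half_width=1):
    """Label each pixel 'soil', 'crop', or 'weed'.

    mask        -- nested list of booleans (True = vegetation)
    row_columns -- column indices of crop-row center lines (assumed known
                   and vertical for this sketch)
    half_width  -- hypothetical tolerance, in pixels, around each row
    """
    labels = []
    for line in mask:
        out = []
        for col, is_plant in enumerate(line):
            if not is_plant:
                out.append("soil")
                continue
            # Distance from this pixel to the nearest crop-row center line.
            distance = min(abs(col - c) for c in row_columns)
            out.append("crop" if distance <= half_width else "weed")
        labels.append(out)
    return labels


# Two image lines with crop rows at columns 0 and 6 and one plant in between.
mask = [
    [True, False, False, True, False, False, True],
    [True, False, False, False, False, False, True],
]
labels = classify(mask, row_columns=[0, 6], half_width=1)
for line in labels:
    print(line)
```

The vegetation pixel at column 3 sits three pixels from either row, so it is labeled as weed, while the plants on the row lines are labeled as crop.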

How accurate is “no-training” weed mapping?

To test the method, the team compared their automated map with thousands of random check points in the field imagery, as well as with human estimates of weed cover and counts. Overall, the workflow correctly labeled 87% of the points, and a statistical measure of agreement known as kappa was 0.81, considered strong. Weed detection specifically had a user's accuracy of 76%, meaning that about three-quarters of the points mapped as weed were confirmed as weed, with most errors occurring where dense crop and weed canopies overlapped. Still, automated weed cover and counts closely tracked human field ratings and visual assessments, with relationships strong enough to give confidence that the system captures real biological patterns, not just image noise.
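For readers unfamiliar with these accuracy measures, the sketch below shows how overall accuracy, kappa, and user's accuracy are derived from a confusion matrix. The counts are invented for illustration and are not the study's data; the function name and the row/column convention (rows = mapped class, columns = reference class) are assumptions of this example.

```python
def accuracy_metrics(matrix):
    """Return (overall accuracy, Cohen's kappa, user's accuracy per class).

    matrix[i][j] = number of check points mapped as class i whose
    reference (true) class is j.
    """
    k = len(matrix)
    n = sum(sum(row) for row in matrix)
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(matrix[i][j] for i in range(k)) for j in range(k)]

    # Share of check points whose mapped class matches the reference class.
    overall = sum(matrix[i][i] for i in range(k)) / n
    # Agreement expected by chance, from the row and column totals.
    expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / (n * n)
    kappa = (overall - expected) / (1 - expected)
    # User's accuracy: of everything mapped as class i, how much is correct.
    users = [matrix[i][i] / row_totals[i] for i in range(k)]
    return overall, kappa, users


# Invented counts for three classes in the order: crop, weed, soil.
matrix = [
    [45, 5, 0],
    [10, 35, 5],
    [0, 0, 50],
]
overall, kappa, users = accuracy_metrics(matrix)
print(round(overall, 3), round(kappa, 3), [round(u, 2) for u in users])
```

Kappa is lower than overall accuracy because it discounts the agreement that would occur by chance alone, which makes it a stricter summary when classes are unevenly represented.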

What this means for future farms

This work demonstrates that high-quality weed maps can be produced from drone images using expert rules instead of hand-labeled training sets. On a standard desktop computer, the 2,000-square-meter field was fully processed in about 20 minutes. The resulting labeled maps can directly support tasks like evaluating herbicide performance, guiding variable-rate sprayers, or feeding more advanced machine and deep learning models with ready-made training data. For farmers and researchers alike, such automated labeling offers a path toward faster, cheaper, and more sustainable weed management, bringing precision agriculture closer to everyday practice.

Citation: Ha, T., Aldridge, K., Johnson, E. et al. Automated weed segmentation with knowledge based labeling for machine learning applications. Sci Rep 16, 6220 (2026). https://doi.org/10.1038/s41598-026-37475-1

Keywords: precision agriculture, weed mapping, drone imagery, automated labeling, crop monitoring