Clear Sky Science

Deep learning for construction waste detection using ConvNeXt V2 EMA attention and WIoU v3 loss

Why smarter sorting of building debris matters

Every new building, renovation, or demolition produces mountains of rubble: broken concrete, bricks, tiles, wood, foam, and more. Much of this material could be recycled, yet it often ends up buried in landfills because sorting it by hand is slow, expensive, and error‑prone. This study explores how an advanced form of artificial intelligence can automatically recognize different types of construction waste in images, a key step toward automated sorting that could help cities cut pollution, save raw materials, and move closer to true circular use of building resources.

Rubble, resources, and a growing global problem

Construction and demolition waste is now one of the world’s fastest‑growing waste streams, with about a billion tons generated each year. These piles of debris consume land, risk polluting soil and water, and squander materials that required energy and emissions to produce in the first place. Today, treatment still relies heavily on landfilling and stockpiling. Automated vision systems that can quickly tell concrete from brick, tile from wood, or foam from gypsum board could dramatically improve recycling rates. Yet real‑world construction sites are chaotic: objects overlap, are covered in dust, and share similar colors and textures, making reliable automatic recognition a tough challenge.

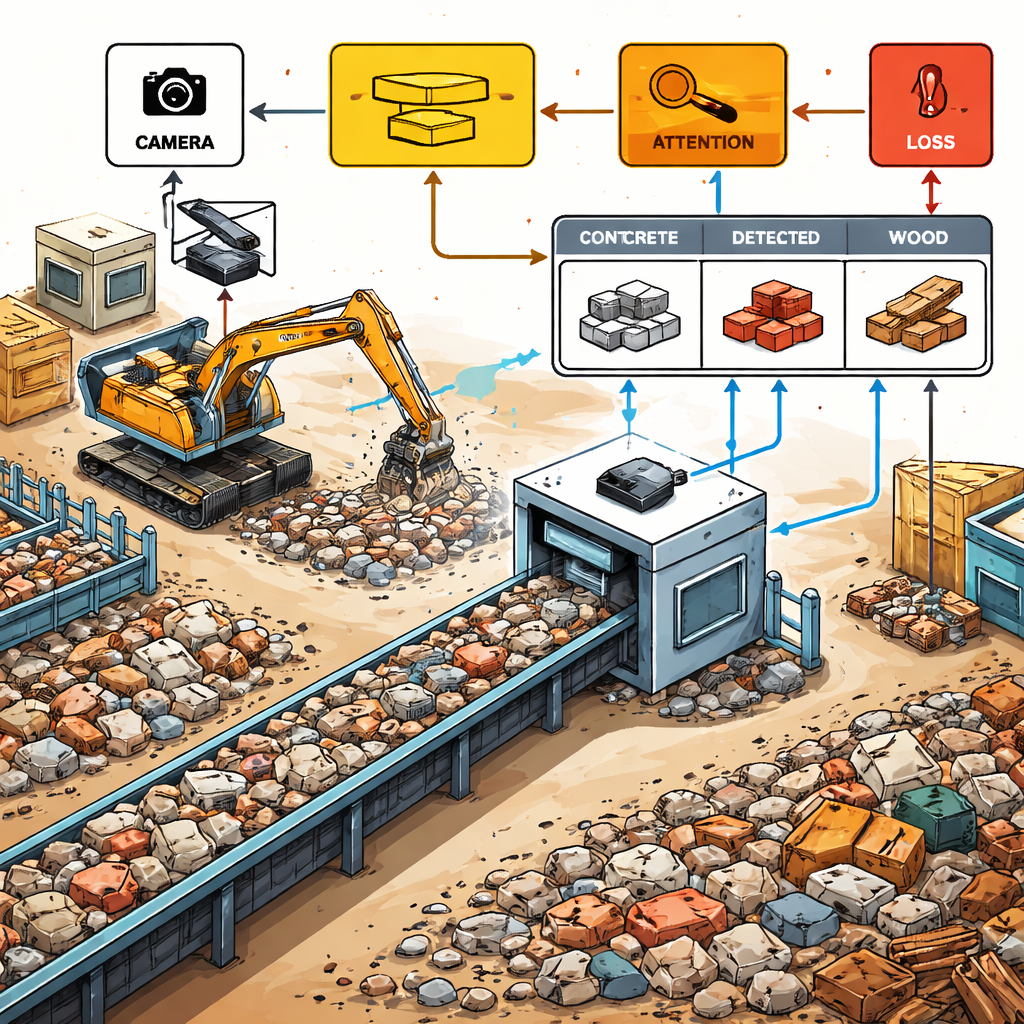

A new digital "eye" for waste on the conveyor belt

The authors present a tailored object‑detection system called YOLO‑CEW, built on top of the popular YOLO family of real‑time vision models. They train it on a specialized dataset of 1,774 images taken at a recycling facility in Cyprus, containing over 11,000 labeled pieces of construction and demolition waste across six common categories: concrete, brick, tile, gypsum board, wood, and foam. The images are divided into separate training, validation, and test sets, so that over‑fitting can be detected and the final scores reflect images the model never saw during training. The model is also trained several times with different random seeds to check that the results are robust. The goal is to keep the system fast enough for moving conveyor belts while sharply improving how accurately it finds and labels each piece of debris.
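A minimal Python sketch of such a data protocol is shown below. It is illustrative only: the 80/10/10 ratio, the seed value, and the helper name `split_dataset` are hypothetical, because the summary does not report the paper's actual proportions or seeds. The split is made once; repeated runs would then vary only the training seed (which controls weight initialization and data order) on the same three subsets.

```python
import random


def split_dataset(image_ids, seed, train_frac=0.8, val_frac=0.1):
    """Shuffle image IDs reproducibly and cut them into disjoint subsets.

    The 80/10/10 default is a hypothetical choice, not the paper's ratio.
    """
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)  # same seed -> same split every time
    n_train = int(len(ids) * train_frac)
    n_val = int(len(ids) * val_frac)
    train = ids[:n_train]                # used to fit the weights
    val = ids[n_train:n_train + n_val]   # used for tuning and early stopping
    test = ids[n_train + n_val:]         # held out until the final evaluation
    return train, val, test


# 1,774 images, the size of the construction-waste dataset in the study.
train, val, test = split_dataset(range(1774), seed=0)  # seed 0 is hypothetical
print(len(train), len(val), len(test))
```

Keeping the test images out of both training and tuning is what allows the final scores to stand in for performance on rubble the model has never seen.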

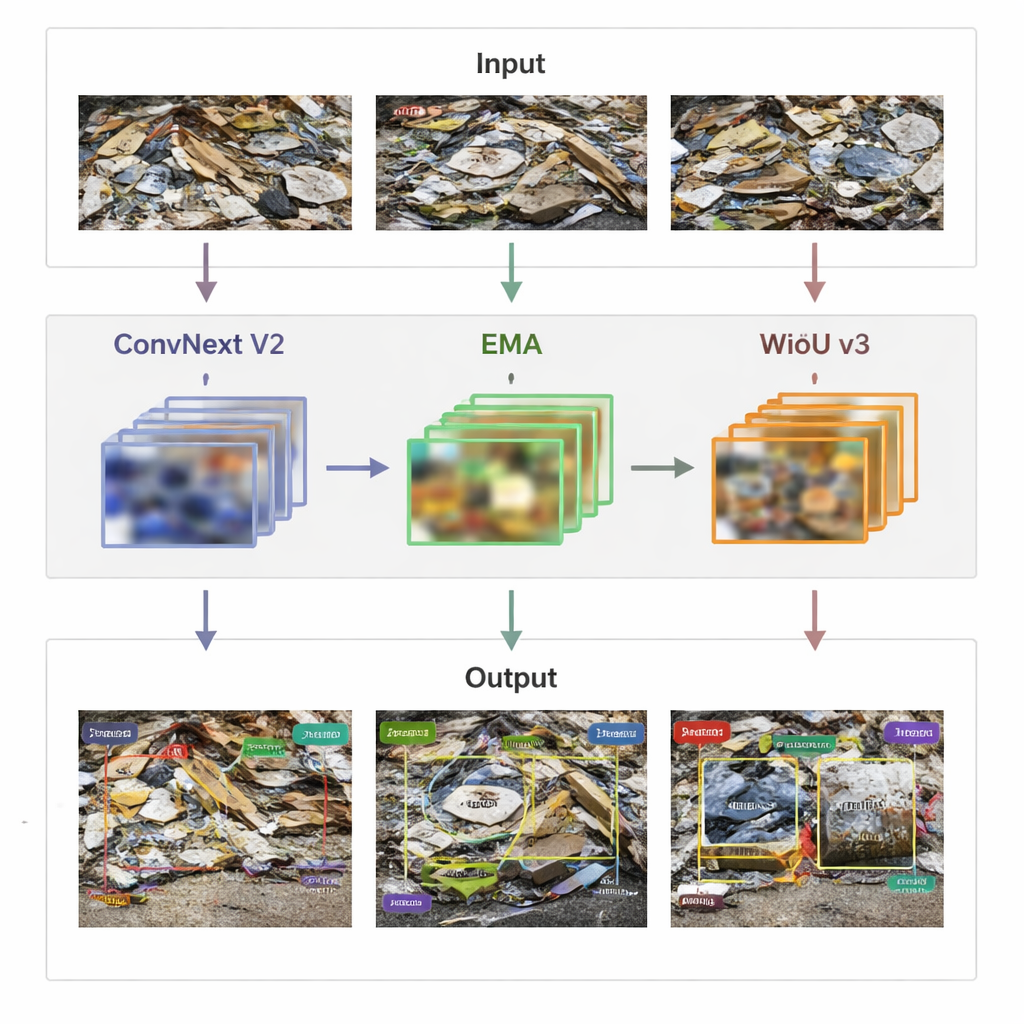

How the upgraded AI looks closer and learns from its mistakes

YOLO‑CEW improves on the baseline YOLOv8 model in three key ways. First, it swaps in a newer feature‑extracting backbone called ConvNeXt V2 at selected stages, which is better at capturing subtle visual differences, such as the fine patterns that distinguish tiles from concrete, without slowing the system too much. Second, it adds an Efficient Multi‑scale Attention (EMA) module that teaches the network to focus on the most informative regions at different scales, boosting its ability to find both large slabs and small, partially hidden fragments while ignoring distracting background clutter. Third, it trains the bounding boxes with a different loss function, WIoU v3, which down‑weights very poor bounding‑box guesses and concentrates learning on ordinary‑quality boxes rather than extreme outliers, helping the model tighten its boxes around real objects instead of being misled by noisy samples.
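To make the third change more concrete, here is a minimal pure-Python sketch of the Wise-IoU v3 idea as introduced in the original Wise-IoU paper; it is not the YOLO-CEW training code. Assumptions: boxes are `(x1, y1, x2, y2)` tuples; the running mean of the IoU loss, which real implementations update with momentum during training, is simply passed in as `mean_l_iou`; and `alpha=1.9`, `delta=3.0` are assumed illustrative hyperparameters, since the summary does not state the paper's settings.

```python
import math


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union


def wiou_v1(pred, gt):
    """WIoU v1: the IoU loss scaled up by centre-point distance.

    Returns (weighted loss, plain IoU loss).
    """
    l_iou = 1.0 - iou(pred, gt)
    px, py = (pred[0] + pred[2]) / 2, (pred[1] + pred[3]) / 2
    gx, gy = (gt[0] + gt[2]) / 2, (gt[1] + gt[3]) / 2
    # Width/height of the smallest box enclosing both; in the original
    # formulation this term is detached from the gradient.
    wg = max(pred[2], gt[2]) - min(pred[0], gt[0])
    hg = max(pred[3], gt[3]) - min(pred[1], gt[1])
    r_wiou = math.exp(((px - gx) ** 2 + (py - gy) ** 2) / (wg ** 2 + hg ** 2))
    return r_wiou * l_iou, l_iou


def focusing_coefficient(l_iou, mean_l_iou, alpha=1.9, delta=3.0):
    """WIoU v3 non-monotonic gain r = beta / (delta * alpha ** (beta - delta)).

    alpha and delta are assumed illustrative values, not the paper's settings.
    """
    beta = l_iou / mean_l_iou  # "outlier degree": loss relative to the running mean
    return beta / (delta * alpha ** (beta - delta))


def wiou_v3(pred, gt, mean_l_iou, alpha=1.9, delta=3.0):
    """WIoU v3 loss for one predicted box; mean_l_iou is supplied by the caller."""
    loss_v1, l_iou = wiou_v1(pred, gt)
    return focusing_coefficient(l_iou, mean_l_iou, alpha, delta) * loss_v1


# Gain for boxes ranging from better than average (beta = 0.5) to a severe outlier (beta = 10).
for l in (0.05, 0.15, 0.3, 1.0):
    print(l, round(focusing_coefficient(l, mean_l_iou=0.1), 3))
```

With these assumed settings the gain peaks for boxes somewhat worse than average (at beta = 1/ln(alpha), about 1.56), equals 1 at beta = delta, and falls off steeply for extreme outliers, which is how very poor guesses end up contributing little to training.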

Putting the model to the test in realistic conditions

On the construction‑waste dataset, YOLO‑CEW achieves a precision of 96.84%, a recall of 95.95%, and an overall detection score, the mean average precision at 50% box overlap (mAP@50), of 98.13%, all higher than the original YOLOv8 baseline. In practical terms, the higher recall means it misses fewer objects, and the higher precision means it raises fewer false alarms. The model is especially strong on challenging classes such as tiles and foam, although some confusion involving brick and concrete remains when dust blurs boundaries. Importantly, the system still runs at about 128 frames per second, far above what real‑time monitoring requires, so it is suitable for use on active recycling lines. Statistical tests using a bootstrap procedure indicate that these gains are unlikely to be due to chance. Comparisons with several other YOLO variants show YOLO‑CEW consistently leading in accuracy while keeping a favorable balance between speed and performance.
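For readers curious how scores like these are usually computed and checked, here is a simplified single-class sketch in pure Python. It is a generic illustration under stated assumptions, not the paper's evaluation code: detections are matched in order of confidence to the best still-unmatched ground-truth box with IoU of at least 0.5, average precision uses all-point interpolation (common toolkits often use 101-point interpolation instead), and the bootstrap resamples hypothetical per-image scores from two models evaluated on the same test images. mAP@50 would be the mean of this per-class AP over all six waste categories.

```python
import random


def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union


def average_precision_50(detections, ground_truth):
    """AP at IoU 0.5 for one class.

    detections: list of (image_id, confidence, box)
    ground_truth: dict mapping image_id -> list of boxes
    """
    n_gt = sum(len(boxes) for boxes in ground_truth.values())
    if n_gt == 0:
        return 0.0
    used = {img: [False] * len(boxes) for img, boxes in ground_truth.items()}
    hits = []
    # The most confident detections claim ground-truth boxes first.
    for img, _, box in sorted(detections, key=lambda d: d[1], reverse=True):
        best, best_iou = None, 0.5
        for j, gt in enumerate(ground_truth.get(img, [])):
            overlap = box_iou(box, gt)
            if not used[img][j] and overlap >= best_iou:
                best, best_iou = j, overlap
        if best is None:
            hits.append(False)  # false alarm: no free box overlaps enough
        else:
            used[img][best] = True
            hits.append(True)
    # Precision and recall after each detection in the ranked list.
    precisions, recalls, tp = [], [], 0
    for k, hit in enumerate(hits, start=1):
        tp += int(hit)
        precisions.append(tp / k)
        recalls.append(tp / n_gt)
    # All-point interpolation: make precision non-increasing, then sum the steps.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def paired_bootstrap_p_value(baseline, improved, n_resamples=10_000, seed=0):
    """One-sided bootstrap: share of resamples where the new model is not better.

    baseline, improved: per-image scores on the same test images, same order.
    """
    rng = random.Random(seed)
    n = len(baseline)
    not_better = 0
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]  # resample images with replacement
        if sum(improved[i] - baseline[i] for i in idx) / n <= 0:
            not_better += 1
    return not_better / n_resamples


# Toy example: two ground-truth boxes in one image, three ranked detections.
gt = {"img0": [(0, 0, 10, 10), (20, 20, 30, 30)]}
dets = [("img0", 0.9, (0, 0, 10, 10)),
        ("img0", 0.8, (50, 50, 60, 60)),  # false alarm
        ("img0", 0.7, (20, 20, 30, 30))]
print(average_precision_50(dets, gt))
```

A small bootstrap p-value means that, across many resampled versions of the test set, the newer model almost never fails to beat the baseline on average.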

Beyond one plant: adapting to other waste streams

To see if their approach generalizes, the researchers also test YOLO‑CEW on a separate public trash‑detection dataset that covers common household materials such as plastic, glass, and cardboard. Even though it was not designed specifically for this new setting, the model still outperforms the standard YOLOv8 in precision, recall, and overall detection quality. This suggests that the improvements (better feature extraction, smarter attention, and more careful handling of poor training examples) could be reused in other recycling and environmental‑monitoring tasks, from household waste sorting to litter detection by drones.

What this means for cleaner, smarter cities

For non‑specialists, the takeaway is that YOLO‑CEW acts like a sharper‑eyed camera system for construction debris. It can watch a moving stream of rubble, pick out each object, and label the material it is made of with high reliability and speed. This should make it easier to design automated lines where machines sort and route materials for reuse instead of burial. Challenges remain, such as extreme clutter, dust, and rarely seen materials, but the study shows that carefully tuned deep‑learning models can help turn today’s piles of “waste” into tomorrow’s resource streams, supporting greener building practices and smarter cities.

Citation: Han, D., Ma, M., Li, X. et al. Deep learning for construction waste detection using ConvNeXt V2 EMA attention and WIoU v3 loss. Sci Rep 16, 6441 (2026). https://doi.org/10.1038/s41598-026-37473-3

Keywords: construction waste, recycling AI, object detection, smart cities, deep learning