Clear Sky Science

Energy-efficient intrusion detection with a protocol-aware transformer–spiking hybrid model

Why smarter, leaner cyber defense matters

As our homes, offices, and cities fill with connected devices, the networks that link them have become both indispensable and vulnerable. Intrusion detection systems watch this digital traffic for signs of attack, but many modern tools are either too power-hungry for small devices or miss the rare, subtle break-ins that cause the most damage. This paper introduces a new kind of intrusion detector that borrows ideas from both language models and brain-inspired computing to spot threats more accurately while using less energy, making it better suited for the next generation of always-connected, resource‑limited hardware.

Today’s defenses hit a wall

Conventional intrusion detection first relied on fixed signatures, like looking for known fingerprints of malware. That approach breaks down when attackers change tactics or invent new tricks. Machine learning and, more recently, deep learning improved matters by learning patterns directly from network data. However, these models still stumble in three crucial areas: they require substantial computation and power; they often behave like black boxes that are hard to interpret; and they tend to overlook rare but dangerous attack types buried in overwhelmingly normal traffic. Transformer models, the same family of algorithms that power many advanced language tools, have raised accuracy by capturing long‑range patterns in network connections. Yet they are computationally heavy, making them a poor fit for low‑power sensors and edge devices in the Internet of Things.

A brain-inspired hybrid approach

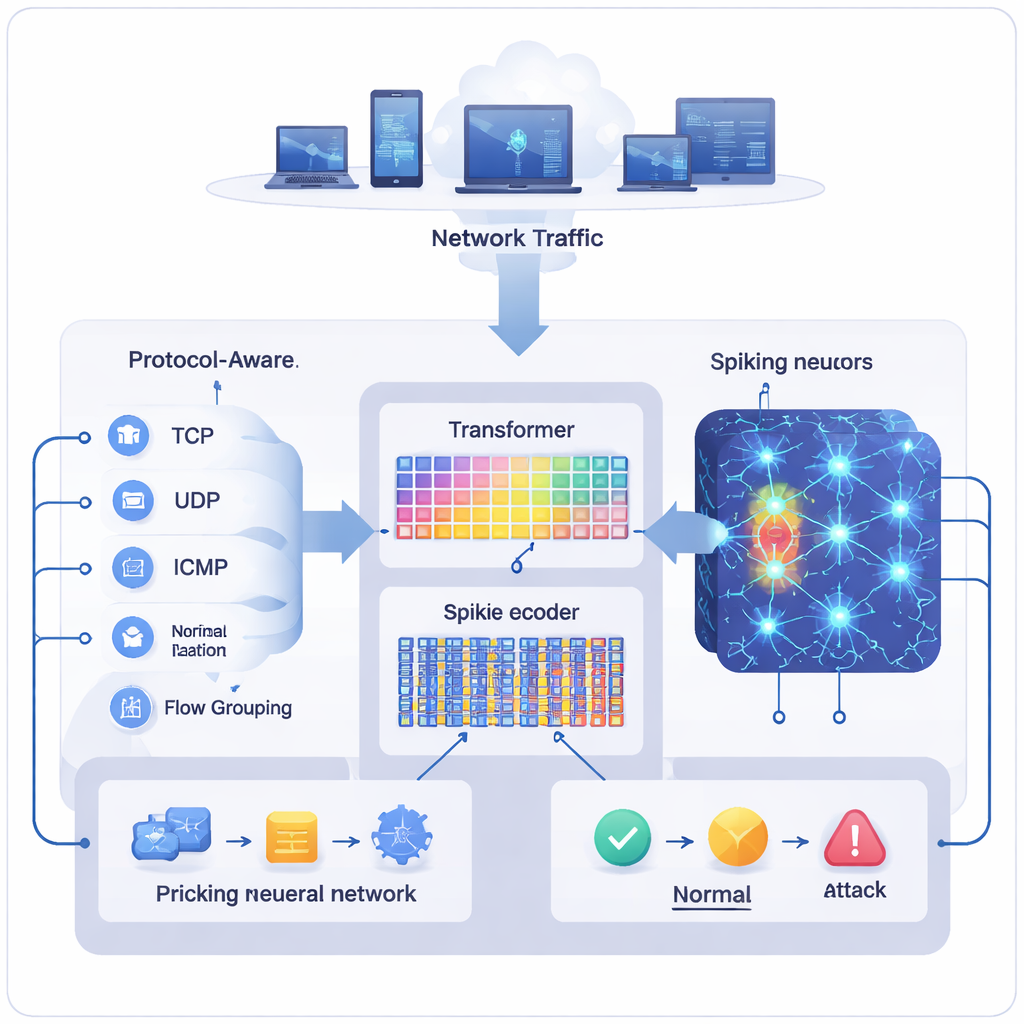

The authors propose a hybrid model called the Transformer‑Augmented Spiking Neural Network (TASNN) that combines a compact transformer with a spiking neural network, a class of models that process information as brief electrical pulses much like biological neurons. The transformer side specializes in understanding context: how a connection’s protocol, service, and recent activity relate to one another over short “pseudo‑flows” of traffic. The spiking side excels at energy‑efficient, event‑driven computation, waking up only when meaningful changes occur. Between them, the system uses specialized preprocessing to treat different network protocols fairly, reconstructs short interaction patterns even from tabular log data, and encodes features into sparse spike trains so that most neurons remain quiet unless something suspicious appears.
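To make the "event-driven" idea concrete, here is a minimal sketch of a single leaky integrate-and-fire neuron, a common textbook spiking model. The summary does not say which neuron model TASNN uses, so the function name `lif_spikes` and the `threshold` and `leak` values are illustrative assumptions, not the authors' implementation.

```python
def lif_spikes(inputs, threshold=1.0, leak=0.9):
    """Return a 0/1 spike train for a sequence of input currents.

    Hypothetical parameters: the membrane potential decays by `leak`
    each step and the neuron fires once it reaches `threshold`.
    """
    potential = 0.0
    spikes = []
    for current in inputs:
        # Leak part of the stored potential, then add the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = 0.0  # reset after firing
        else:
            spikes.append(0)
    return spikes


# Steady, low-level input never reaches the threshold: the neuron stays silent.
quiet = lif_spikes([0.05] * 10)

# A short burst of strong input pushes the potential over the threshold once.
burst = lif_spikes([0.05, 0.05, 0.8, 0.9, 0.05, 0.05])
```

Under these assumed settings the neuron does no "work" on the quiet stream and fires only during the burst, which is the property that makes spiking models attractive for low-power hardware.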

Teaching the model what really matters

Much of TASNN’s strength comes from how it prepares and filters data before making a decision. Instead of normalizing all traffic together, it scales features separately for TCP, UDP, and ICMP records so that no single protocol dominates the learning process. It also groups related records into short, flow-like sequences, capturing signals such as sudden shifts in byte counts or bursts of unusual flags that often accompany scans or break-in attempts. These engineered cues are then turned into spikes that fire only when a value changes enough to be noteworthy. An attention mechanism inside the transformer highlights which fields (such as duration, protocol type, or port roles) are most influential, while a gating mechanism uses this attention to decide how much spiking activity to allow. A feature-selection stage cross-checks transformer attention against the number of spikes each feature triggers, pruning away inputs that add cost without improving decisions.
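The protocol-specific scaling and change-triggered spikes described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: it uses a plain z-score within each protocol group and a fixed change threshold, and the names `normalize_per_protocol`, `delta_spikes`, the record fields, and all numbers are hypothetical rather than taken from the paper.

```python
from collections import defaultdict
from statistics import mean, pstdev


def normalize_per_protocol(records, feature):
    """Z-score one feature separately within each protocol group."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec["protocol"]].append(rec[feature])
    # Fall back to a scale of 1.0 when a group has zero spread.
    stats = {p: (mean(v), pstdev(v) or 1.0) for p, v in groups.items()}
    return [
        (rec[feature] - stats[rec["protocol"]][0]) / stats[rec["protocol"]][1]
        for rec in records
    ]


def delta_spikes(values, threshold):
    """Emit 1 only when a value moves more than `threshold` away from
    the last value that caused a spike (or from the first value)."""
    if not values:
        return []
    spikes = [0]
    reference = values[0]
    for v in values[1:]:
        if abs(v - reference) > threshold:
            spikes.append(1)
            reference = v
        else:
            spikes.append(0)
    return spikes


# Made-up records: TCP byte counts are far larger than UDP ones.
records = [
    {"protocol": "tcp", "bytes": 100},
    {"protocol": "tcp", "bytes": 120},
    {"protocol": "tcp", "bytes": 5000},
    {"protocol": "udp", "bytes": 10},
    {"protocol": "udp", "bytes": 14},
]
scaled = normalize_per_protocol(records, "bytes")

# A mostly flat signal with two abrupt shifts yields exactly two spikes.
spike_train = delta_spikes([1.0, 1.1, 1.05, 3.0, 3.1, 0.9], threshold=0.5)
```

Because each protocol is scaled against its own mean and spread, the small UDP values are not flattened by the much larger TCP ones, and the spike encoder stays silent while the signal is steady.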

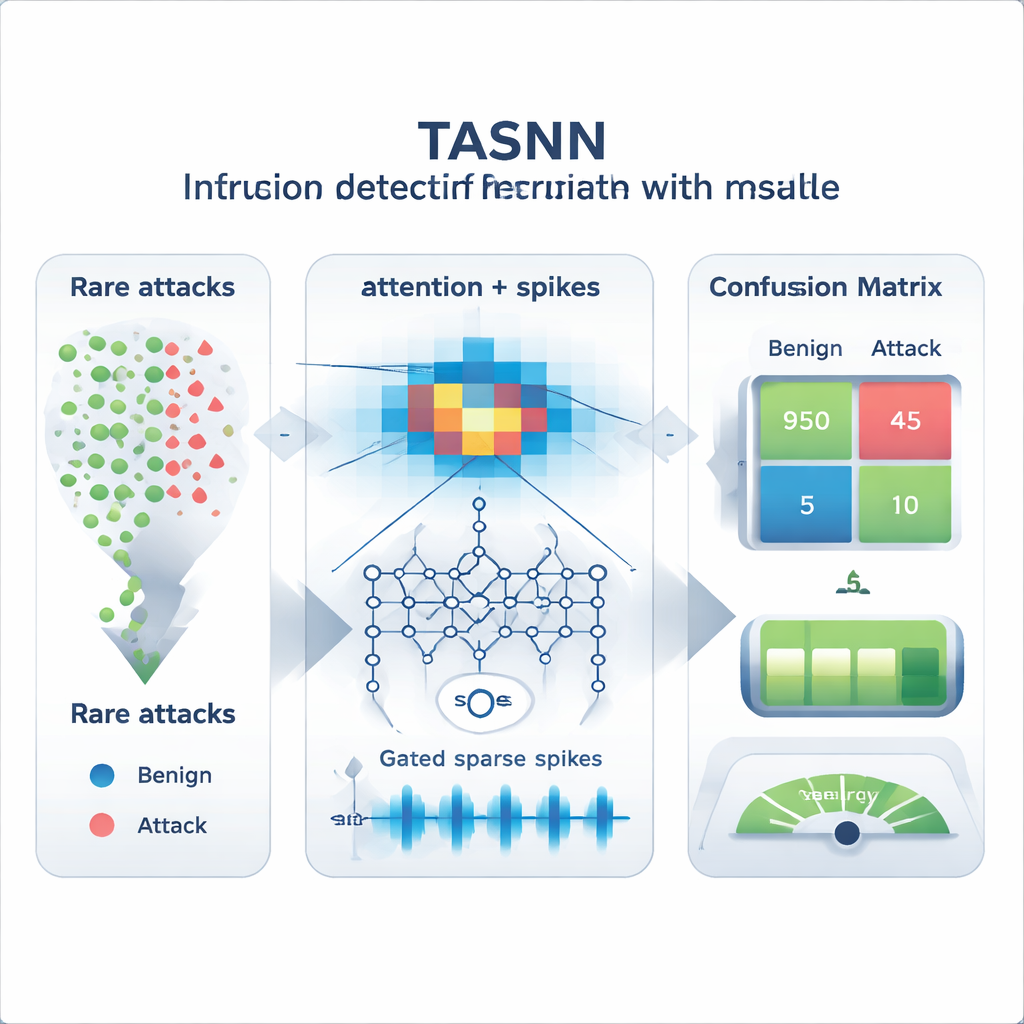
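The attention-based gating and feature pruning described above can be illustrated with a toy rule. The summary gives no formulas, so everything here is an assumption made for illustration: the functions `gate_spike_counts` and `prune_features`, the thresholds, and the attention weights and spike counts are all invented.

```python
def gate_spike_counts(spike_counts, attention):
    """Scale each feature's spike count by its attention weight
    relative to the most-attended feature (an assumed gating rule)."""
    top = max(attention.values())
    return {
        name: spike_counts[name] * (attention[name] / top)
        for name in spike_counts
    }


def prune_features(attention, spike_counts, min_attention=0.05, max_spikes=10):
    """Drop features that are costly (many spikes) yet barely attended to."""
    return [
        name
        for name in attention
        if not (attention[name] < min_attention and spike_counts[name] > max_spikes)
    ]


# Hypothetical attention weights and spike counts for five features.
attention = {
    "duration": 0.30,
    "protocol_type": 0.25,
    "port_role": 0.25,
    "src_bytes": 0.18,
    "urgent_flag": 0.02,
}
spike_counts = {
    "duration": 12,
    "protocol_type": 4,
    "port_role": 6,
    "src_bytes": 9,
    "urgent_flag": 40,
}

gated = gate_spike_counts(spike_counts, attention)
kept = prune_features(attention, spike_counts)
```

In this toy data, `urgent_flag` fires often but receives almost no attention, so it is the one feature pruned. The other features pass through with their spiking activity scaled down in proportion to how much the transformer attends to them.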

Better at catching the rare and doing more with less

The researchers evaluated TASNN on several standard intrusion datasets, including NSL‑KDD, its harder KDDTest+21 split, and portions of CICIDS‑2017. Across different ways of splitting the data into training and testing sets, the hybrid model consistently achieved higher overall accuracy and stronger macro‑averaged scores than traditional machine learning, convolutional networks, and transformer‑only baselines. In plain terms, it remained good at classifying common traffic while markedly improving detection of rare attacks that earlier systems frequently mislabel as normal. At the same time, simulations of spiking activity showed that neurons fired only about one to two spikes per sample on average, with decisions reached in just a few milliseconds. Compared to a similar non‑spiking model, this translated into roughly 22 percent less energy use, a promising sign for battery‑powered or neuromorphic hardware.
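A short worked example shows why macro-averaged scores matter when attacks are rare. The labels and counts below are invented for illustration and are not results from the paper. The code compares plain accuracy with macro-averaged F1 for a detector that labels every record as normal.

```python
def f1_for_label(y_true, y_pred, label):
    """F1 score for one class, treating it as the positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1, so rare classes count equally."""
    labels = sorted(set(y_true))
    return sum(f1_for_label(y_true, y_pred, lab) for lab in labels) / len(labels)


# Hypothetical test set: 95 normal records and 5 rare attacks.
y_true = ["normal"] * 95 + ["rare_attack"] * 5

# A lazy detector that calls everything normal.
y_pred = ["normal"] * 100

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
macro = macro_f1(y_true, y_pred)
```

The lazy detector scores 95 percent accuracy while missing every attack, but its macro-averaged F1 falls below 0.5 because the rare class contributes a score of zero. This is why a gain in macro-averaged scores signals better handling of rare attacks rather than just common traffic.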

What this means for everyday network security

For non‑specialists, the key takeaway is that TASNN acts like a more observant yet frugal security guard for digital networks. It pays attention to the right details for each kind of traffic, remembers short bursts of unusual behavior, and reacts only when changes truly matter, instead of constantly running at full blast. The result is an intrusion detector that is better at catching both common and rare attacks while conserving computational resources, bringing high‑end cyber defense closer to the tiny, energy‑constrained devices that now anchor our digital lives.

Citation: Karthik, M.G., Keerthika, V., Mantena, S.V. et al. Energy-efficient intrusion detection with a protocol-aware transformer–spiking hybrid model. Sci Rep 16, 7095 (2026). https://doi.org/10.1038/s41598-026-37367-4

Keywords: intrusion detection, cybersecurity, spiking neural networks, transformer models, energy-efficient AI