A novel reinforcement learning-based approach for short-term load and price forecasting in energy markets

Why predicting tomorrow’s power matters

Every time you flip a light switch, a vast network of power plants, markets, and computers works behind the scenes to keep electricity both available and affordable. If grid operators can accurately predict how much power people will use and how prices will move in the next few hours, they can avoid blackouts, reduce waste, and save money for everyone. This article explores a new way to make those short‑term predictions using techniques originally developed for learning to play games and control robots.

Smarter guesses for a shifting energy world

Electricity demand and prices can swing wildly from hour to hour. Heatwaves, cold snaps, holidays, and fuel costs all push the system in different directions. Traditional forecasting tools, such as simple trend lines or even standard machine‑learning models, often treat the problem as a one‑way fit to past data. They struggle when conditions change quickly or when many factors interact in complex ways. The authors argue that modern power grids, especially those with growing shares of renewable energy, need forecasting tools that can adapt on the fly and learn directly from their successes and mistakes.

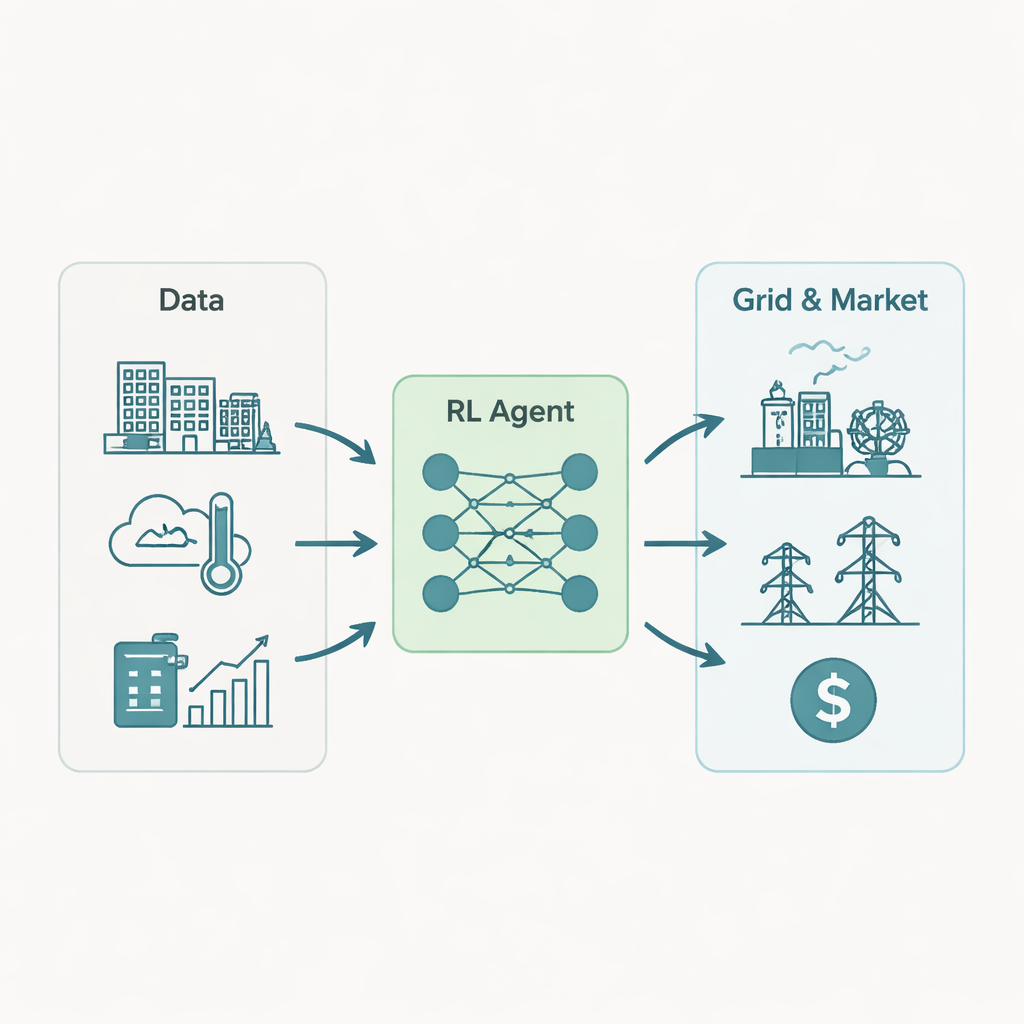

A learning agent in the power market

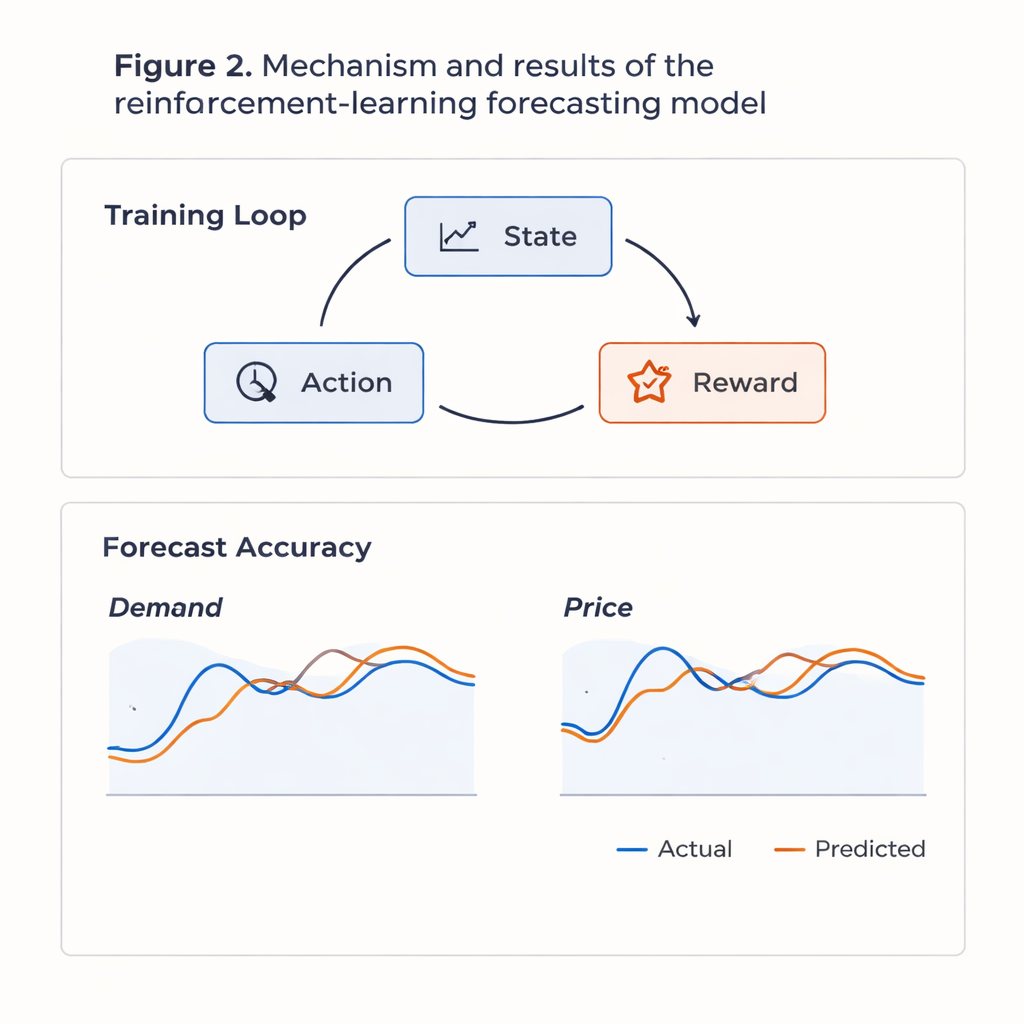

The researchers recast forecasting as a decision‑making game. At each hour, a computer “agent” sees the current situation: recent power demand, past prices, temperature, humidity, day of the week, holidays, and fuel costs. It then chooses an action: its best guess for the next hour’s electricity demand and price. Once the actual values arrive, the agent receives a score based on how far off it was: large errors are penalized and small errors are rewarded. Over time, the system searches for a strategy that maximizes its long‑term score, not just the accuracy of a single step. To manage the many inputs involved, the authors use a deep reinforcement learning setup built on a Deep Q‑Network, a type of neural network that estimates how good each possible action is in each situation.
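
For readers who want to see the moving parts, the loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it swaps the Deep Q‑Network for a small lookup table of scores, and it assumes the forecast is picked from a discrete grid of candidate values and that the score is the negative percentage error. The names `reward`, `select_action`, and `q_update`, and the parameters `alpha`, `gamma`, and `epsilon`, are hypothetical.

```python
import numpy as np

def reward(forecast, actual):
    """Score a forecast: 0 is perfect, more negative means a larger error.

    Assumption: the score is the negative absolute percentage error.
    """
    return -abs(forecast - actual) / abs(actual)

def select_action(q_values, epsilon, rng):
    """Pick the index of a candidate forecast.

    With probability epsilon the agent explores at random; otherwise it
    picks the candidate with the highest estimated score.
    """
    if rng.random() < epsilon:
        return int(rng.integers(len(q_values)))
    return int(np.argmax(q_values))

def q_update(q_table, state, action, r, next_state, alpha=0.1, gamma=0.9):
    """One tabular Q-learning step (a stand-in for training a Deep Q-Network).

    The target mixes the immediate score with the discounted best score
    expected from the next situation, so the agent values long-term results.
    """
    target = r + gamma * np.max(q_table[next_state])
    q_table[state, action] += alpha * (target - q_table[state, action])
    return q_table
```

In a Deep Q‑Network, the table is replaced by a neural network that maps the observed situation to one estimated score per candidate action, but the explore, score, and update cycle keeps the same shape.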

From raw data to reliable forecasts

To test their approach, the team turned to real‑world data from the PJM Interconnection, a major U.S. power market covering parts of the Midwest and East Coast. They used about three years of hourly records (2021–2023), including market prices, electricity demand, weather observations, and fuel price indices. Before training, they cleaned the data, filled in rare missing values, removed unusual outliers, and scaled everything into comparable ranges. They also used statistical techniques to compress the large set of input features while keeping most of the useful variation. The learning agent was then trained in repeated passes over this history, gradually shifting from random trial‑and‑error to exploiting the patterns it had discovered.
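
The preparation steps in this paragraph can be illustrated with a short Python sketch. The summary does not say which specific techniques the team used, so every choice here is an assumption made for illustration: linear interpolation for missing values, clipping at three standard deviations in place of removing outliers, min‑max scaling, and principal component analysis for the compression step. All function names are hypothetical.

```python
import numpy as np

def fill_missing(x):
    """Linearly interpolate NaN entries in a 1-D hourly series."""
    x = np.asarray(x, dtype=float).copy()
    idx = np.arange(len(x))
    missing = np.isnan(x)
    x[missing] = np.interp(idx[missing], idx[~missing], x[~missing])
    return x

def clip_outliers(x, k=3.0):
    """Clip values lying more than k standard deviations from the mean."""
    mu, sigma = x.mean(), x.std()
    return np.clip(x, mu - k * sigma, mu + k * sigma)

def min_max_scale(x):
    """Rescale a series to the range [0, 1]; assumes it is not constant."""
    lo, hi = x.min(), x.max()
    return (x - lo) / (hi - lo)

def pca_compress(features, keep_variance=0.95):
    """Project features onto the fewest principal components that
    retain at least keep_variance of the total variance."""
    centered = features - features.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = np.cumsum(s**2) / np.sum(s**2)
    n_components = int(np.searchsorted(explained, keep_variance) + 1)
    return centered @ vt[:n_components].T
```

Each series would pass through the first three functions in turn, and the resulting columns would then be stacked into a matrix for `pca_compress`.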

How well the learning agent performed

When pitted against widely used forecasting methods—including ARIMA (a traditional time‑series model), LSTM neural networks, and the popular XGBoost algorithm—the reinforcement learning system came out ahead. On held‑out test data that the model had never seen, it cut average percentage errors in demand and price by roughly 15–20 percent compared with these baselines. The forecasts tracked both winter and summer daily cycles closely and followed general price swings, though the model still struggled with the sharpest, rare price spikes and unusual holiday behavior. Analysis of the learned strategy showed that the agent implicitly discovered an economically sensible pattern: after observing very high prices, it tended to expect slightly lower demand next hour, mimicking real‑world demand response without being explicitly told about it.
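
To make the headline comparison concrete, here is a small Python sketch of how an average percentage error and a reduction relative to a baseline could be computed. It assumes the error measure is the mean absolute percentage error and that the 15–20 percent figure is a relative reduction; the summary does not spell out either point. The function names are hypothetical, and no figures from the study are reproduced.

```python
import numpy as np

def mape(actual, forecast):
    """Mean absolute percentage error, in percent."""
    actual = np.asarray(actual, dtype=float)
    forecast = np.asarray(forecast, dtype=float)
    return float(np.mean(np.abs((actual - forecast) / actual)) * 100.0)

def relative_reduction(baseline_error, model_error):
    """Percentage by which model_error falls below baseline_error."""
    return (baseline_error - model_error) / baseline_error * 100.0
```

Under this reading, a baseline with a 5 percent average error and a new model with a 4 percent average error would correspond to a 20 percent relative reduction.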

What this means for everyday energy use

For non‑specialists, the key message is that this learning‑based approach can help grid operators run power systems more smoothly and cheaply. More accurate short‑term forecasts allow generators and market operators to schedule plants more efficiently, integrate renewable sources with fewer surprises, and reduce the risk of sudden price spikes or shortages. While the method is data‑hungry and computationally intensive, and still needs refinement for extreme events, it points toward a future in which electricity markets are guided by adaptive, self‑improving tools that continuously learn from the ever‑changing behavior of consumers, weather, and fuel costs.

Citation: Wu, Y., Ma, Y. & Aliev, H. A novel reinforcement learning-based approach for short-term load and price forecasting in energy markets. Sci Rep 16, 5141 (2026). https://doi.org/10.1038/s41598-026-37366-5

Keywords: smart grid forecasting, electricity price prediction, reinforcement learning, energy demand forecasting, deep Q-network