Clear Sky Science · en

RiemannInfer: improving transformer inference through Riemannian geometry

Why this matters for everyday AI users

Modern chatbots and AI assistants can solve math problems, write essays, and even explain medical topics, yet we still don’t really know how they reach their conclusions or how to make them reason more reliably. This paper introduces “RiemannInfer,” a new way to look inside large language models (LLMs) by treating their internal activity as motion on a curved geometric surface. That perspective offers a more intuitive picture of how these systems think and, in the authors’ experiments, makes their reasoning a few percentage points more accurate while keeping or slightly improving speed.

Turning AI thinking into a geometric journey

Inside an LLM such as GPT-4 or Llama, each word in a sentence is represented by a high‑dimensional vector, and layers of “attention” decide how strongly words influence each other. The authors observe that these hidden states can be viewed as points in a vast space whose overall shape encodes the model’s understanding of language. Instead of treating reasoning as a series of probability calculations over text, they reinterpret it as a path‑finding problem: the model travels from an initial state (the question) to a final state (the answer) along a route in this space. Using tools from Riemannian geometry, the mathematics of curved surfaces, they construct a curved manifold that captures how attention patterns bend and stretch this internal landscape.

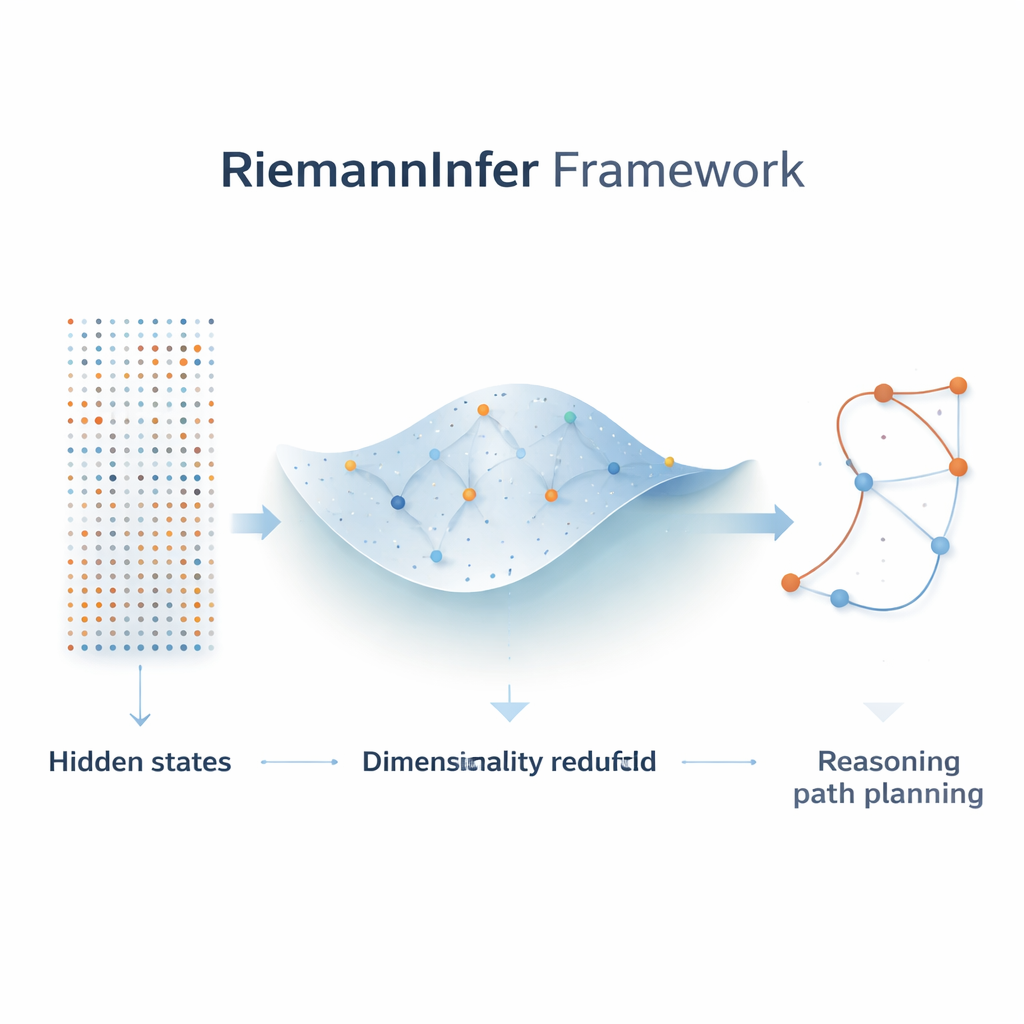

Compressing complexity without losing the big picture

Because the raw internal space of an LLM is enormous, the first step in RiemannInfer is to shrink it while preserving its essential structure. The authors combine techniques from topology, which studies how points connect, with a popular dimensionality‑reduction algorithm called UMAP. Before reducing the number of dimensions, they analyze the “shape” of the cloud of hidden states to ensure that key connectivity patterns survive the compression. The result is a lower‑dimensional space where important relationships between tokens—such as which words pay strong attention to each other—are largely preserved. This compact geometric map makes it feasible to do precise calculations on distances, shortest paths, and curvature.
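The idea of checking that connectivity survives compression can be illustrated with a very small sketch. It is not the authors' pipeline: the `count_components` helper, the toy `states`, the `radius` value, and the coordinate-dropping projections are all assumptions made for illustration, and the projection is only a stand-in for UMAP. The sketch links points that lie closer than a chosen radius and counts the resulting clusters before and after reduction.

```python
import math

def count_components(points, radius):
    """Count clusters formed when points closer than `radius` are linked."""
    parent = list(range(len(points)))

    def find(i):
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if math.dist(points[i], points[j]) < radius:
                parent[find(i)] = find(j)
    return len({find(i) for i in range(len(points))})

# Toy "hidden states" (hypothetical): two well-separated clusters in 4-D.
states = [
    (0.0, 0.0, 0.1, 0.0), (0.2, 0.1, 0.0, 0.1), (0.1, 0.2, 0.1, 0.0),
    (5.0, 5.0, 0.0, 0.1), (5.1, 4.9, 0.1, 0.0), (4.9, 5.2, 0.0, 0.0),
]

# Stand-in reductions (NOT UMAP): keep only two of the four coordinates.
good_reduction = [p[:2] for p in states]  # keeps the informative axes
bad_reduction = [p[2:] for p in states]   # keeps only the noisy axes

before = count_components(states, radius=1.0)
after_good = count_components(good_reduction, radius=1.0)
after_bad = count_components(bad_reduction, radius=1.0)

print(before, after_good, after_bad)
```

In this toy case the first reduction keeps the two clusters apart, while the second merges them into one, which is the kind of structural loss a topology check is meant to catch before distances and paths are computed on the compressed space.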

Building a curved map from attention

The core innovation is to translate attention weights into a geometric measure of distance. When the model pays strong attention between two tokens, RiemannInfer treats them as being close together on the manifold; weak attention places them farther apart. From these relationships, the authors define a metric—a mathematical rule that determines lengths and angles—and use it to compute geodesics, the curved equivalent of straight lines, as well as curvature, which measures how sharply the space bends. Multi‑head attention naturally becomes a blend of multiple metrics, each capturing a different aspect of linguistic structure, such as grammar or meaning. With this construction, model decisions can be interpreted as choosing particular paths through a landscape whose peaks and valleys reflect where information is dense or sparse.
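A minimal sketch of the attention-to-distance idea follows. The article does not give the exact formula, so the mapping used here, the negative logarithm of the symmetrised attention weight, is an assumption, as are the function names `attention_to_distance` and `blend_heads`, the toy matrices, and the equal head weights. A negative-log mapping also does not guarantee the triangle inequality, so this is a dissimilarity that illustrates the intuition rather than a true Riemannian metric.

```python
import math

def attention_to_distance(attn, eps=1e-9):
    """Turn a square attention matrix into a symmetric distance matrix.

    Assumption: d(i, j) = -log(symmetrised attention). The source only says
    that strong attention means "close" and weak attention means "far".
    """
    n = len(attn)
    dist = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            # Attention is directional, so average both directions first.
            sym = 0.5 * (attn[i][j] + attn[j][i])
            dist[i][j] = -math.log(max(sym, eps))
    return dist

def blend_heads(head_distances, head_weights):
    """Weighted average of per-head distance matrices (weights are hypothetical)."""
    total = sum(head_weights)
    n = len(head_distances[0])
    return [
        [
            sum(w * d[i][j] for w, d in zip(head_weights, head_distances)) / total
            for j in range(n)
        ]
        for i in range(n)
    ]

# Toy attention matrices for three tokens and two heads (each row sums to 1).
head_a = [[0.6, 0.3, 0.1],
          [0.3, 0.6, 0.1],
          [0.1, 0.1, 0.8]]
head_b = [[0.5, 0.1, 0.4],
          [0.1, 0.8, 0.1],
          [0.4, 0.1, 0.5]]

d_a = attention_to_distance(head_a)
d_b = attention_to_distance(head_b)
blended = blend_heads([d_a, d_b], [0.5, 0.5])
```

In head A, tokens 0 and 1 attend to each other more strongly than tokens 0 and 2, so `d_a[0][1]` comes out smaller than `d_a[0][2]`. Head B sees the opposite pattern, and the blended matrix sits between the two views.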

Planning low‑effort reasoning paths

Once the manifold is built, the authors reformulate reasoning as finding an “easy” path from question to answer—one that minimizes the total work done along the way. They borrow an analogy from mountain climbing: climbing a steep, jagged route requires more energy than following a smoother ridge to the same summit. In the LLM setting, curvature plays the role of steepness, and the model’s reasoning work corresponds to how much its internal uncertainty is reduced along a path. Using approximate formulas for geodesics and curvature, combined with an efficient graph‑search algorithm (Dijkstra’s algorithm), RiemannInfer quickly identifies routes that minimize this work, effectively guiding the model toward more efficient chains of thought.
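The path-planning step can be sketched with a standard Dijkstra search over a small graph of hidden states. The cost model here is an assumption chosen to mirror the climbing analogy: each step costs its length multiplied by `1 + alpha * curvature` at the node being entered. The function name `lowest_work_path`, the `alpha` parameter, and the toy graph are hypothetical and are not taken from the paper, whose actual work formula is not given in this summary.

```python
import heapq

def lowest_work_path(edges, curvature, start, goal, alpha=1.0):
    """Dijkstra's algorithm over a graph of hidden states.

    edges: dict mapping node -> list of (neighbour, length) pairs
    curvature: dict mapping node -> non-negative curvature estimate
    Assumed step cost: length * (1 + alpha * curvature[neighbour]).
    Costs must stay non-negative for Dijkstra's algorithm to be valid.
    """
    best = {start: 0.0}
    parent = {}
    queue = [(0.0, start)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == goal:
            break
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry, a cheaper route was already found
        for nxt, length in edges.get(node, []):
            step = length * (1.0 + alpha * curvature.get(nxt, 0.0))
            new_cost = cost + step
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                parent[nxt] = node
                heapq.heappush(queue, (new_cost, nxt))
    if goal not in best:
        return None, float("inf")
    # Walk back from the goal to recover the route.
    path = [goal]
    while path[-1] != start:
        path.append(parent[path[-1]])
    return path[::-1], best[goal]

# Toy landscape: a short but sharply curved route and a longer, smoother ridge.
edges = {
    "question": [("steep", 1.0), ("ridge1", 1.0)],
    "steep": [("answer", 1.0)],
    "ridge1": [("ridge2", 1.0)],
    "ridge2": [("answer", 1.0)],
}
curvature = {"steep": 2.0, "ridge1": 0.2, "ridge2": 0.2, "answer": 0.0}

smooth_path, smooth_cost = lowest_work_path(edges, curvature, "question", "answer")
flat_path, flat_cost = lowest_work_path(edges, curvature, "question", "answer", alpha=0.0)
```

With the curvature penalty switched on, the search prefers the three-step ridge route over the two-step steep one. Setting `alpha=0.0` ignores curvature and falls back to the shortest route by length alone, which shows how the geometry changes the chosen path.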

What the experiments show for real models

The authors test RiemannInfer on several state‑of‑the‑art LLMs, including GPT‑4o, Llama‑3‑405B, and DeepSeek‑V2‑400B, across demanding math and reasoning benchmarks such as GSM8K, MATH500, StrategyQA, and AGIEval. In every case, wrapping these models with the RiemannInfer framework boosts their accuracy by a few percentage points—small numbers that are nonetheless meaningful at the frontier of performance—while keeping or slightly improving speed. A comparison with a simpler, purely linear method shows that ignoring the curved geometry of the hidden states dramatically hurts performance, underlining the importance of the manifold view.

Big picture: giving AI reasoning a physical feel

To a lay reader, the key takeaway is that the authors have turned the opaque inner workings of large language models into something more tangible: a journey across a curved landscape where good reasoning corresponds to following smooth, low‑effort trails. By grounding attention patterns and reasoning steps in geometric and physical concepts—distance, curvature, and work—RiemannInfer offers both a practical way to improve results and a conceptual bridge between AI and the physics of continuous spaces. While the current methods are approximate and many details remain to be refined, this framework points toward future AI systems whose thought processes can be analyzed, optimized, and perhaps even designed using the language of geometry and physics.

Citation: Mao, R., Zhang, Z., Yang, M. et al. RiemannInfer: improving transformer inference through Riemannian geometry. Sci Rep 16, 6636 (2026). https://doi.org/10.1038/s41598-026-37328-x

Keywords: large language models, geometric deep learning, Riemannian manifold, attention mechanisms, reasoning efficiency