Clear Sky Science

Performance evaluation of generative pre-trained transformer on the National Veterinary Licensing Examination in Japan

Why smarter vet exams matter to everyone

Behind every visit to the animal hospital lies years of rigorous training and a high‑stakes national exam. In Japan, aspiring veterinarians must pass the National Veterinary Licensing Examination (NVLE), which tests everything from basic biology to complex clinical judgment. This study asked a timely question: can today’s advanced AI language models, the same kind that power popular chatbots, solve this demanding exam in Japanese—and what might that mean for veterinary education and animal care?

Testing AI on a real vet licensing exam

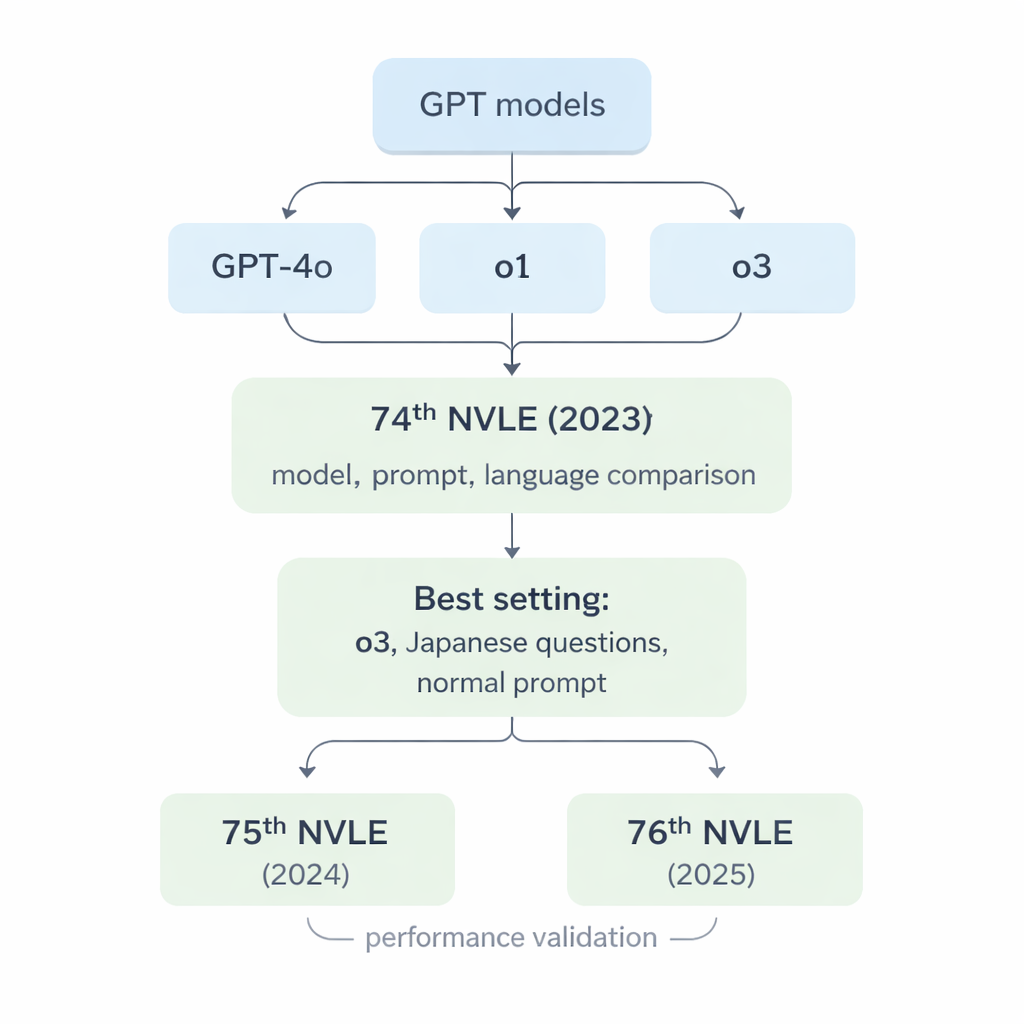

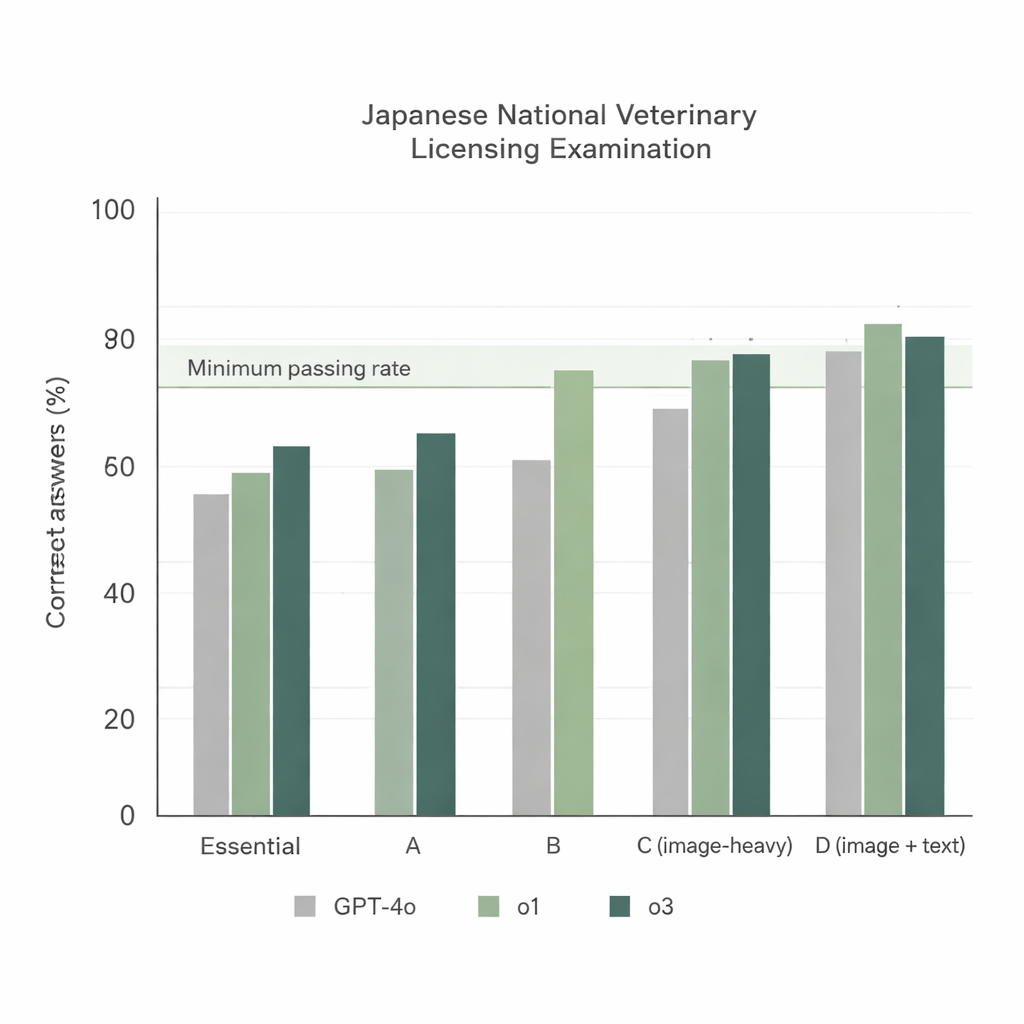

The researchers focused on three generations of large language models from OpenAI: GPT‑4o, o1, and o3. These systems are built to read and generate human‑like text, but they were never specifically trained for veterinary medicine. To put them to the test, the team used Japan’s 74th NVLE (2023) as a benchmark. The exam is divided into five sections, including text‑only questions and image‑based questions that show X‑rays, photos, or diagrams. All questions are multiple‑choice with five options, just like the real exam taken by students. The models were fed each question through a standardized computer script and were required to respond only with the chosen option number, with no chance to “explain” or negotiate their way to credit.
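As a rough illustration of that setup, the sketch below formats one five-option question and accepts a reply only if it is a bare option number. It is not the authors' script: the function names `build_prompt` and `parse_answer` and the instruction wording are assumptions made for this example, and the call to the model itself is left out.

```python
import re
from typing import List, Optional


def build_prompt(question: str, options: List[str]) -> str:
    """Format one five-option question (wording is illustrative only)."""
    if len(options) != 5:
        raise ValueError("expected exactly five options")
    lines = [question]
    lines += [f"{i}. {text}" for i, text in enumerate(options, start=1)]
    lines.append("Answer with the option number only (1-5).")
    return "\n".join(lines)


def parse_answer(reply: str) -> Optional[int]:
    """Return the chosen option if the reply is a single digit 1-5, else None."""
    match = re.fullmatch(r"\s*([1-5])\s*", reply)
    return int(match.group(1)) if match else None
```

Rejecting anything other than a bare number mirrors the rule that the model gets no credit for an explanation. A reply such as "I think 3" would be treated as unanswered in this sketch.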

Which AI model came out on top?

When the three models tackled the 74th NVLE using the simplest setup—Japanese questions and a straightforward instruction prompt—two clear trends emerged. First, all models performed strongly on text‑based sections, but o1 and o3 consistently outscored GPT‑4o. Second, performance dropped for image‑heavy sections, yet o1 and o3 still stayed above the official minimum passing rate, whereas GPT‑4o fell short in one of these sections. Overall, GPT‑4o answered about 78% of questions correctly, while o1 reached about 92% and o3 about 93%. Because o3 slightly edged out o1 in total score, the researchers chose o3 for the rest of the experiments.
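The section-by-section comparison described above amounts to computing an accuracy per exam section and checking it against a passing rate. The following is a minimal sketch under assumptions: the record layout, the function names, and the `pass_rate` argument are hypothetical, and the official passing rate is not stated in this summary, so it must be supplied by the caller.

```python
from collections import defaultdict


def section_accuracy(records):
    """records: iterable of (section, predicted_option, correct_option)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for section, predicted, correct in records:
        totals[section] += 1
        hits[section] += int(predicted == correct)
    # Fraction of correct answers in each section.
    return {section: hits[section] / totals[section] for section in totals}


def sections_passed(accuracy, pass_rate):
    """Map each section to True if its accuracy meets the given pass rate."""
    return {section: acc >= pass_rate for section, acc in accuracy.items()}
```

Reporting results per section rather than only overall is what reveals cases like the one noted above, where a model with a reasonable total score still falls short in a single image-heavy section.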

Do prompts or translations really help?

Much has been written about “prompt engineering”—crafting elaborate instructions to coax better answers from AI—and about translating local exam questions into English to align with models’ training data. The study directly tested these ideas with the o3 model, comparing a basic solving prompt versus a more detailed, optimized prompt, and Japanese questions versus versions first translated into English by the same model. Surprisingly, none of these changes made a meaningful difference: o3 passed comfortably under all six combinations, and the simplest approach (original Japanese text with the basic prompt) worked just as well as the more complicated setups. This suggests that, at least for these veterinary questions, the latest models already understand Japanese reliably and do not require intricate prompting to perform at a high level.

How stable is performance on newer exams?

To see whether the strong results were a fluke, the team next gave o3 the 75th (2024) and 76th (2025) NVLEs, again using only the original Japanese questions and the basic prompt. The model achieved overall scores above 92% on both exams and exceeded the passing threshold in every section, including the image‑heavy areas. Most questions received the same answer across three independent runs, showing that o3’s responses were generally stable even when some randomness was allowed. When the researchers looked closely at the model’s mistakes, they found that errors clustered in two areas: practical veterinary knowledge (such as Japanese veterinary laws) and clinical medicine, which demand country‑specific rules and multi‑step reasoning rather than simple fact recall.
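One simple way to quantify that kind of run-to-run stability is the share of questions that received an identical answer in every run. The sketch below assumes each run is stored as a list of chosen option numbers in question order. The function names are hypothetical, and the study may have measured stability differently.

```python
def run_agreement(runs):
    """Fraction of questions answered identically in every run.

    runs: list of equal-length answer lists, one list per run.
    """
    per_question = list(zip(*runs))
    unanimous = sum(1 for answers in per_question if len(set(answers)) == 1)
    return unanimous / len(per_question)


def unstable_questions(runs):
    """Zero-based indices of questions whose answer changed between runs."""
    return [i for i, answers in enumerate(zip(*runs)) if len(set(answers)) > 1]
```

For example, with three runs over four questions where only the third question differs in one run, `run_agreement` gives 0.75 and `unstable_questions` returns `[2]`.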

What this means—and what it doesn’t

The study concludes that cutting‑edge GPT‑style models can now pass Japan’s veterinary licensing exam in Japanese, without translation tricks or complex prompts. For veterinary schools and students, this opens the door to using AI as a study partner, question generator, or explainer of exam topics. For the public, it signals that AI is becoming a powerful tool for organizing and sharing veterinary knowledge. However, the authors stress that these systems are not ready to replace veterinarians or make medical decisions on their own. The models can still misunderstand images, struggle with nuanced clinical judgment, and sometimes invent facts. Used carefully, they may become valuable assistants in veterinary education and information support—but the responsibility for animal health will remain firmly in human hands.

Citation: Kako, T., Kato, D., Iguchi, T. et al. Performance evaluation of generative pre-trained transformer on the National Veterinary Licensing Examination in Japan. Sci Rep 16, 4306 (2026). https://doi.org/10.1038/s41598-026-37300-9

Keywords: veterinary licensing exams, large language models, artificial intelligence in medicine, GPT performance, Japanese veterinary education