Clear Sky Science

Aerospace aluminum surface defect detection method based on Multi-Scale Convolution and attention mechanism

Why tiny flaws in metal really matter

From airplane wings to smartphone frames, aluminum parts must be nearly perfect. Microscopic scratches, bubbles in paint, or tiny pits on a metal surface can grow into cracks that threaten safety, shorten product lifetimes, or force costly recalls. Inspecting every part by eye is slow and error-prone, and even many automated cameras still miss the smallest flaws. This study explores a new artificial-intelligence method that can spot extremely small defects on aluminum surfaces more reliably and at industrial speeds.

Hidden dangers on smooth metal

Aluminum profiles are the long bars and panels used in aircraft fuselages, wings, fuel tanks, and many other structures. Although they may look smooth, their surfaces can contain a wide variety of problems: leaks in protective layers, regions that do not conduct electricity properly, orange-peel textures, scratches, dirt spots, paint bubbles, streaks from jets of paint, pits, and corner leaks. These flaws are often only a few pixels wide in a high-resolution image and can blend into reflections or background noise. Traditional inspection, whether done by humans or by older machine-vision systems, struggles to distinguish such tiny marks from harmless texture, especially when lighting and backgrounds are complex.

Teaching a camera to look once, but carefully

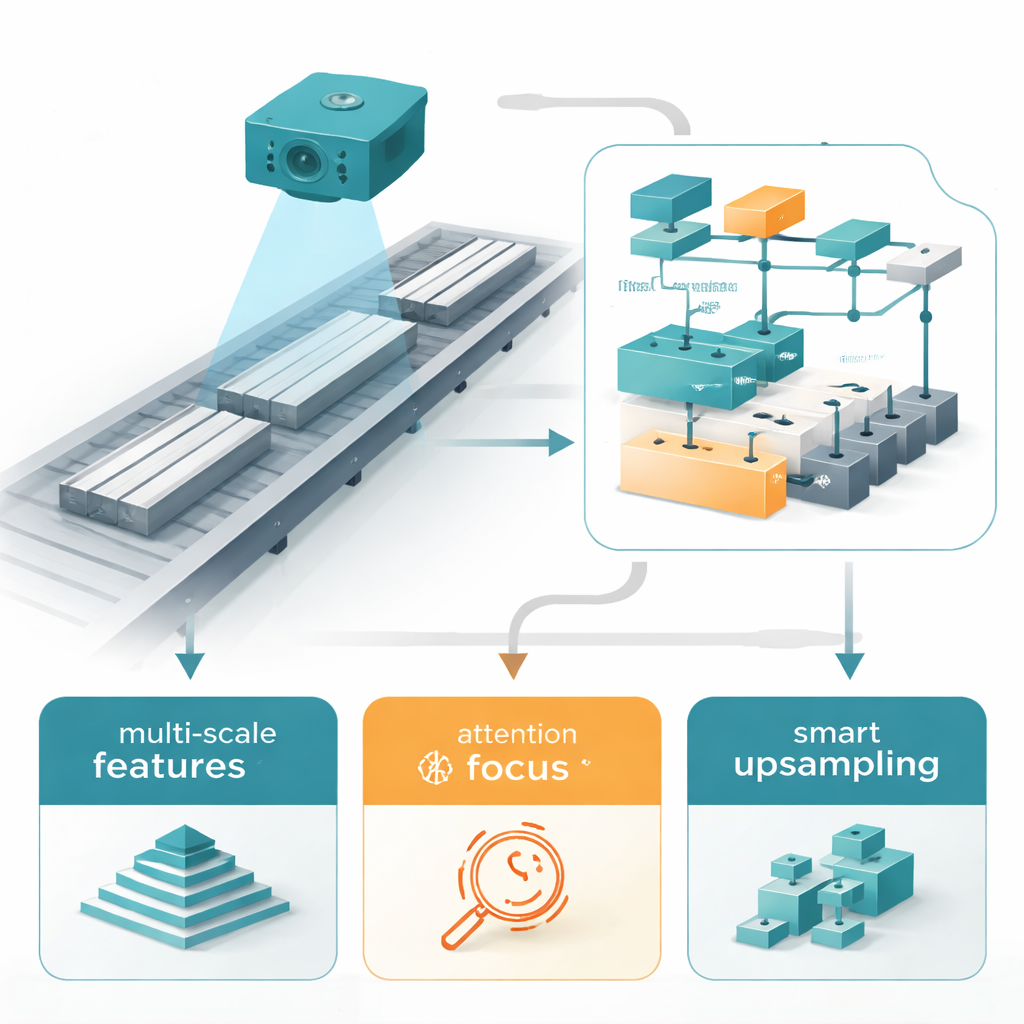

In recent years, object-detection systems based on deep learning—especially the YOLO (“You Only Look Once”) family—have become popular in factories for finding defects. YOLOv11, a recent version, is already fast and accurate for many tasks, but still tends to miss very small flaws on aluminum. The authors build on the lightweight YOLOv11n variant and rework its inner layers to pay more attention to fine detail without slowing it too much. Their approach combines three main ideas: a smarter way to capture patterns at several sizes at once, a way for the network to focus on the most informative pixels, and a more careful method for enlarging small patterns so the model does not lose them during processing.

Seeing details at many scales

The first innovation is a redesigned feature-extraction module, called C3k2-DWR-DRB, which replaces a standard block in YOLOv11n. In everyday terms, this block lets the network look at the same image patch with multiple “zoom levels” at once: very close up for micro-scratches, a bit wider for paint bubbles, and wider still for stains or color changes. It uses dilated convolutions, which are filters whose sampling points are spaced apart so they cover a wider area without adding weights, and a technique that merges several filter paths into a single efficient one. As a result, the model can see both fine textures and larger shapes without becoming heavy or slow. Shallow layers focus on hairline scratches, while deeper layers track broad defects like oil stains, improving recognition of both small and large flaws in one unified system.
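The idea of merging filter paths can be illustrated in one dimension. The sketch below is a simplified illustration, not the authors' C3k2-DWR-DRB module: the signal, the kernel values, and the helper names `conv1d` and `expand_dilated` are assumptions chosen for the example. It shows that a dilated filter is equivalent to a wider filter with zeros between its taps, so two parallel branches can be folded into one filter that gives the same output.

```python
def conv1d(signal, kernel, dilation=1):
    """'Valid' 1D cross-correlation; taps are spaced `dilation` samples apart."""
    span = dilation * (len(kernel) - 1) + 1  # effective receptive field
    return [
        sum(w * signal[i + j * dilation] for j, w in enumerate(kernel))
        for i in range(len(signal) - span + 1)
    ]


def expand_dilated(kernel, dilation):
    """Rewrite a dilated kernel as a dense kernel with zeros between the taps."""
    dense = [0.0] * (dilation * (len(kernel) - 1) + 1)
    for j, w in enumerate(kernel):
        dense[j * dilation] = w
    return dense


# Hypothetical 1D "feature row" and two hypothetical filter branches.
signal = [0.0, 1.0, 4.0, 2.0, 7.0, 3.0, 5.0, 1.0, 0.0]
wide_branch = [0.1, 0.2, 0.4, 0.2, 0.1]  # dense 5-tap filter
dilated_branch = [1.0, -2.0, 1.0]        # 3 taps, dilation 2: also spans 5 samples

# Training-style view: run both branches and add their outputs.
two_paths = [
    a + b
    for a, b in zip(conv1d(signal, wide_branch),
                    conv1d(signal, dilated_branch, dilation=2))
]

# Inference-style view: fold both branches into one dense 5-tap filter.
merged_kernel = [
    a + b for a, b in zip(wide_branch, expand_dilated(dilated_branch, 2))
]
one_path = conv1d(signal, merged_kernel)

print(all(abs(a - b) < 1e-9 for a, b in zip(two_paths, one_path)))
```

Because convolution is linear, the single merged filter reproduces the sum of the two branches while needing only one pass over the data.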

Helping the model pay attention where it counts

Next, the researchers add an attention module called SimAM near the end of the network. Rather than introducing many new parameters, SimAM estimates how important each tiny region of the feature map is by measuring how different it is from its surroundings. Areas that stand out—such as a faint bubble or speck of dirt—are boosted, while uniform background regions are toned down. This makes the detector more sensitive to genuine defects and less likely to be distracted by reflections or harmless texture, which in turn reduces missed detections and false alarms.
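The weighting rule can be sketched for a single channel of a feature map. The code below follows the published SimAM formulation as it is commonly implemented; the function name `simam_channel`, the toy 3×3 map, and the regularizer value `lam=1e-4` are assumptions for illustration, and the authors' exact configuration may differ.

```python
import math


def simam_channel(channel, lam=1e-4):
    """Parameter-free attention for one channel given as a 2D list of floats.

    Needs at least two positions. Returns (weights, reweighted_channel).
    """
    values = [v for row in channel for v in row]
    n = len(values) - 1
    mean = sum(values) / len(values)
    # Squared deviation from the channel mean: large where a position stands out.
    sq_dev = [[(v - mean) ** 2 for v in row] for row in channel]
    variance = sum(d for row in sq_dev for d in row) / n
    # Importance score passed through a sigmoid to give a weight in (0, 1).
    weights = [
        [1.0 / (1.0 + math.exp(-(d / (4.0 * (variance + lam)) + 0.5))) for d in row]
        for row in sq_dev
    ]
    output = [
        [v * w for v, w in zip(row_v, row_w)]
        for row_v, row_w in zip(channel, weights)
    ]
    return weights, output


# Hypothetical feature map: uniform background with one strong response.
feature_map = [
    [1.0, 1.0, 1.0],
    [1.0, 5.0, 1.0],
    [1.0, 1.0, 1.0],
]
weights, reweighted = simam_channel(feature_map)
print(weights[1][1] > weights[0][0])  # the outlier gets the larger weight
```

No trainable weights appear anywhere in the function, which is why this kind of attention adds essentially nothing to the model size.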

Rebuilding tiny patterns without blurring them

A third key element is the CARAFE upsampling operator, which replaces the usual “stretching” methods used in many neural networks. Standard techniques, like nearest-neighbor or bilinear interpolation, can blur away the very details that matter most for small flaws. CARAFE instead learns how to reassemble features based on local context, effectively deciding how each new pixel should be formed from its neighbors. This content-aware reconstruction creates sharper, more informative maps of small targets, making bubbles, pits, and specks easier for the detector to lock onto.
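The difference between fixed interpolation and content-aware reassembly can be sketched in one dimension. This is a toy illustration under loud assumptions, not CARAFE itself: in CARAFE the reassembly kernels are predicted by a small learned branch, whereas here a hand-written similarity rule (`toy_kernel`, with the hypothetical constant `SHARPNESS`) stands in for that branch. Only the reassembly step, a softmax-normalized weighted sum over each local neighborhood, mirrors the operator described above.

```python
import math

SHARPNESS = 8.0  # hypothetical constant; CARAFE learns its kernels instead


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_kernel(window, shift):
    """Stand-in for a learned kernel predictor: prefer neighbors that
    resemble the center value and lie close to the new sample position."""
    centre = window[1]
    logits = [
        -SHARPNESS * abs(v - centre) - abs((j - 1) - shift)
        for j, v in enumerate(window)
    ]
    return softmax(logits)


def reassemble_2x(features):
    """Content-aware 2x upsampling: each output is a kernel-weighted sum."""
    padded = [features[0]] + list(features) + [features[-1]]
    upsampled = []
    for i in range(len(features)):
        window = padded[i:i + 3]
        for shift in (-0.25, 0.25):  # two new samples per source position
            kernel = toy_kernel(window, shift)
            upsampled.append(sum(w * v for w, v in zip(kernel, window)))
    return upsampled


def linear_2x(features):
    """Fixed linear interpolation at the same sample positions, for contrast."""
    padded = [features[0]] + list(features) + [features[-1]]
    upsampled = []
    for i in range(len(features)):
        left, centre, right = padded[i:i + 3]
        upsampled.append(0.75 * centre + 0.25 * left)
        upsampled.append(0.75 * centre + 0.25 * right)
    return upsampled


edge = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]  # hypothetical sharp defect boundary
blurred = linear_2x(edge)
sharp = reassemble_2x(edge)
print([round(v, 2) for v in blurred[4:8]])
print([round(v, 2) for v in sharp[4:8]])
```

In this toy case the fixed interpolation smears the boundary across intermediate values, while the content-dependent kernels keep it close to a clean step.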

Putting the method to the test

To evaluate their system, the authors used a public industrial dataset of aluminum surface images from an online competition and carefully rechecked all the defect labels. They also expanded the dataset with small rotations, flips, and scaling so the model would see defects under varied conditions. On this benchmark, their improved YOLOv11n model reached a mean average precision (mAP) of 79.4% at a commonly used overlap threshold and a recall of 76.6%. In other words, it finds roughly three out of four true defects, more than the original YOLOv11n, while keeping the model compact. It showed especially strong gains on difficult small and “extremely small” targets like paint bubbles and dirt spots, and it maintained real-time speed at around 178 frames per second on a powerful graphics card.
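To make the recall figure concrete, the sketch below counts how many labeled defects are matched by a predicted box. It is an illustration only: the boxes are invented, the function names `iou` and `recall_at_iou` are hypothetical, and the overlap threshold of 0.5 is an assumption, since the summary does not state which threshold was used.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def recall_at_iou(ground_truth, predictions, threshold=0.5):
    """Fraction of labeled boxes matched by a prediction with IoU >= threshold.

    Predictions are assumed to be sorted by confidence; each labeled box
    can be matched at most once.
    """
    matched = set()
    for pred in predictions:
        best_idx, best_overlap = None, threshold
        for idx, gt in enumerate(ground_truth):
            if idx in matched:
                continue
            overlap = iou(pred, gt)
            if overlap >= best_overlap:
                best_idx, best_overlap = idx, overlap
        if best_idx is not None:
            matched.add(best_idx)
    return len(matched) / len(ground_truth) if ground_truth else 0.0


# Invented example: one larger defect, one tiny defect, one missed defect.
labels = [(10, 10, 20, 20), (40, 40, 44, 44), (70, 70, 90, 90)]
detections = [(11, 11, 21, 21), (41, 41, 45, 45), (0, 0, 5, 5)]

print(round(iou(detections[0], labels[0]), 3))  # 10x10 box shifted by one pixel
print(round(iou(detections[1], labels[1]), 3))  # 4x4 box shifted by one pixel
print(round(recall_at_iou(labels, detections), 3))
```

The same one-pixel offset that still counts as a hit on the 10×10 box falls below the threshold on the 4×4 box, which is one reason very small defects are so hard to score well on.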

What this means for everyday technology

For non-specialists, the takeaway is that the authors have built a smarter, leaner “eye” for factories: a camera-and-algorithm system that can notice almost invisible flaws on aluminum surfaces in real time. By cleverly combining multi-scale analysis, attention, and careful upsampling, their method improves both accuracy and reliability without demanding huge computing resources. If further tested under harsher real-world conditions and adapted to low-power hardware, this approach could help make airplanes, vehicles, electronics, and other metal-based products safer and more reliable, while reducing waste and inspection costs.

Citation: Zhang, R., Cai, S., He, Z. et al. Aerospace aluminum surface defect detection method based on Multi-Scale Convolution and attention mechanism. Sci Rep 16, 6428 (2026). https://doi.org/10.1038/s41598-026-37293-5

Keywords: aluminum surface defects, industrial inspection, deep learning detection, YOLO object detection, aerospace materials