Clear Sky Science

A deep learning ensemble framework for multi-subtype renal tumor classification using contrast-enhanced CT

Why spotting kidney tumors early matters

Kidney cancer can be silent for years, showing few symptoms until it has already spread. Yet with modern imaging, many kidney masses are discovered by chance during scans for back pain or other problems. The central challenge then becomes: is the mass a dangerous cancer that needs surgery, or a harmless growth that can simply be watched? This study explores how artificial intelligence can help doctors read CT scans more accurately, reducing unnecessary operations while still catching aggressive tumors in time.

Five kinds of kidney lumps, one hard decision

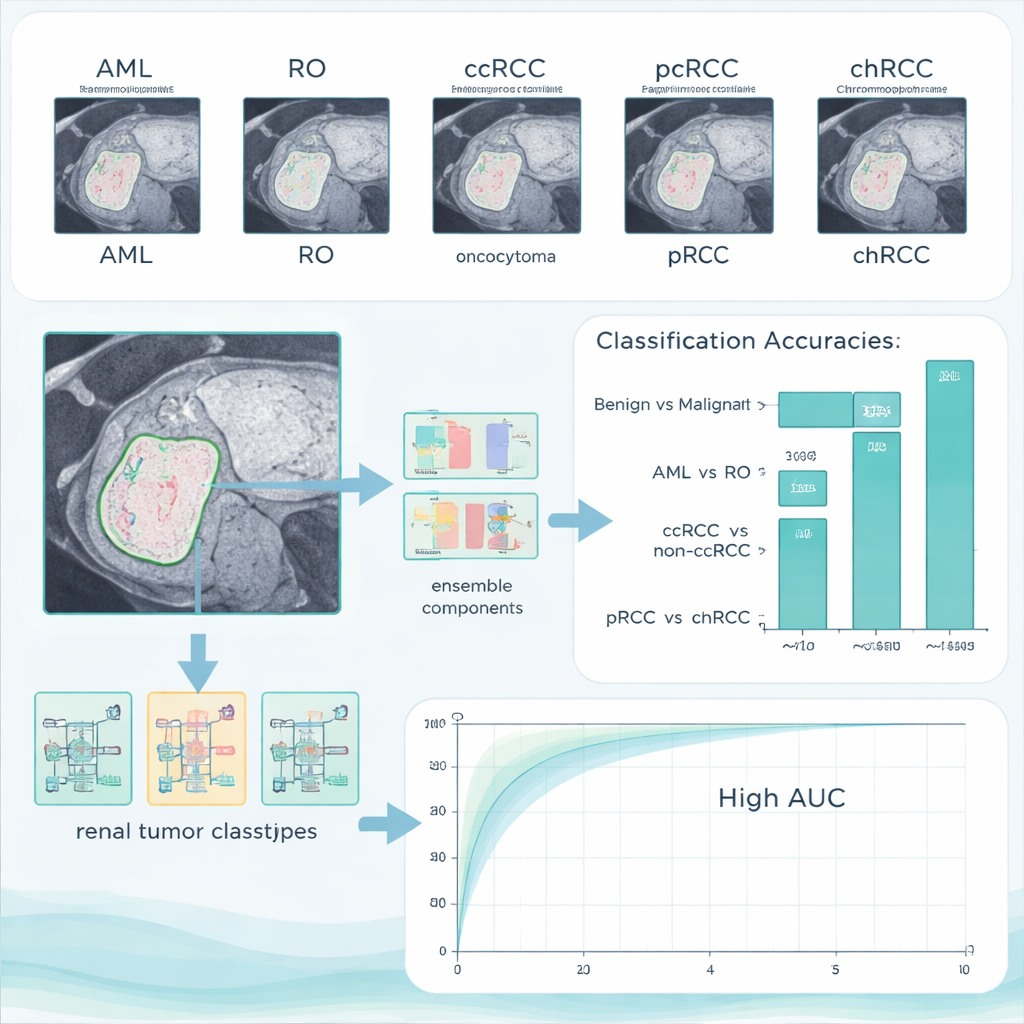

Not all kidney tumors are alike. Some, such as angiomyolipoma (AML) and renal oncocytoma (RO), are benign and may never threaten a person’s life. Others, grouped under renal cell carcinoma (RCC), are malignant and can spread to other organs. Among these cancers, clear cell RCC (ccRCC) is the most common and the most likely to metastasize; papillary RCC (pRCC) and chromophobe RCC (chRCC) are generally less aggressive but still serious. On ordinary scans, however, these subtypes can look surprisingly similar, so doctors often rely on biopsy or surgery for a firm diagnosis. The authors set out to see whether a sophisticated computer system could reliably sort these five tumor types using only contrast-enhanced CT images.

Turning CT scans into teachable patterns

The team collected contrast-enhanced CT scans from 280 patients whose kidney tumors had already been confirmed by tissue analysis. Expert radiologists outlined each tumor by hand, slice by slice, to provide precise “ground truth” regions for the computer to learn from. The study used only one CT phase, the portal-venous phase common in routine care, emphasizing that the method should work with standard hospital imaging. The dataset included five labeled groups: 84 ccRCC, 36 pRCC, 48 chRCC, 72 AML, and 40 RO cases, spread across a wide age range and both sexes. The authors then split the cases into training, validation, and test sets by patient, ensuring that images from the same individual never appeared in more than one set.
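The patient-level split described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `split_by_patient`, the 70/15/15 ratios, and the toy patient IDs are hypothetical, since the article does not report the split sizes or the authors' code.

```python
import random
from collections import defaultdict


def split_by_patient(slice_records, fractions=(0.7, 0.15, 0.15), seed=0):
    """Assign whole patients (never individual slices) to train/val/test.

    slice_records: list of (patient_id, slice_name) pairs.
    fractions: hypothetical train/val ratios; the rest goes to test.
    """
    # Group every slice under the patient it came from.
    by_patient = defaultdict(list)
    for patient_id, slice_name in slice_records:
        by_patient[patient_id].append(slice_name)

    # Shuffle patient IDs reproducibly, then cut the list into three parts.
    patient_ids = sorted(by_patient)
    random.Random(seed).shuffle(patient_ids)
    n_train = int(len(patient_ids) * fractions[0])
    n_val = int(len(patient_ids) * fractions[1])
    groups = {
        "train": patient_ids[:n_train],
        "val": patient_ids[n_train:n_train + n_val],
        "test": patient_ids[n_train + n_val:],
    }
    return {
        name: {pid: by_patient[pid] for pid in ids}
        for name, ids in groups.items()
    }


# Toy data: 20 made-up patients with 3 slices each.
records = [(f"patient_{p:02d}", f"slice_{s}") for p in range(20) for s in range(3)]
splits = split_by_patient(records)
```

Because the shuffle operates on patient IDs rather than slices, all slices from one person land in the same set. A real study with small classes would likely also balance tumor types across the sets, which this sketch does not attempt.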

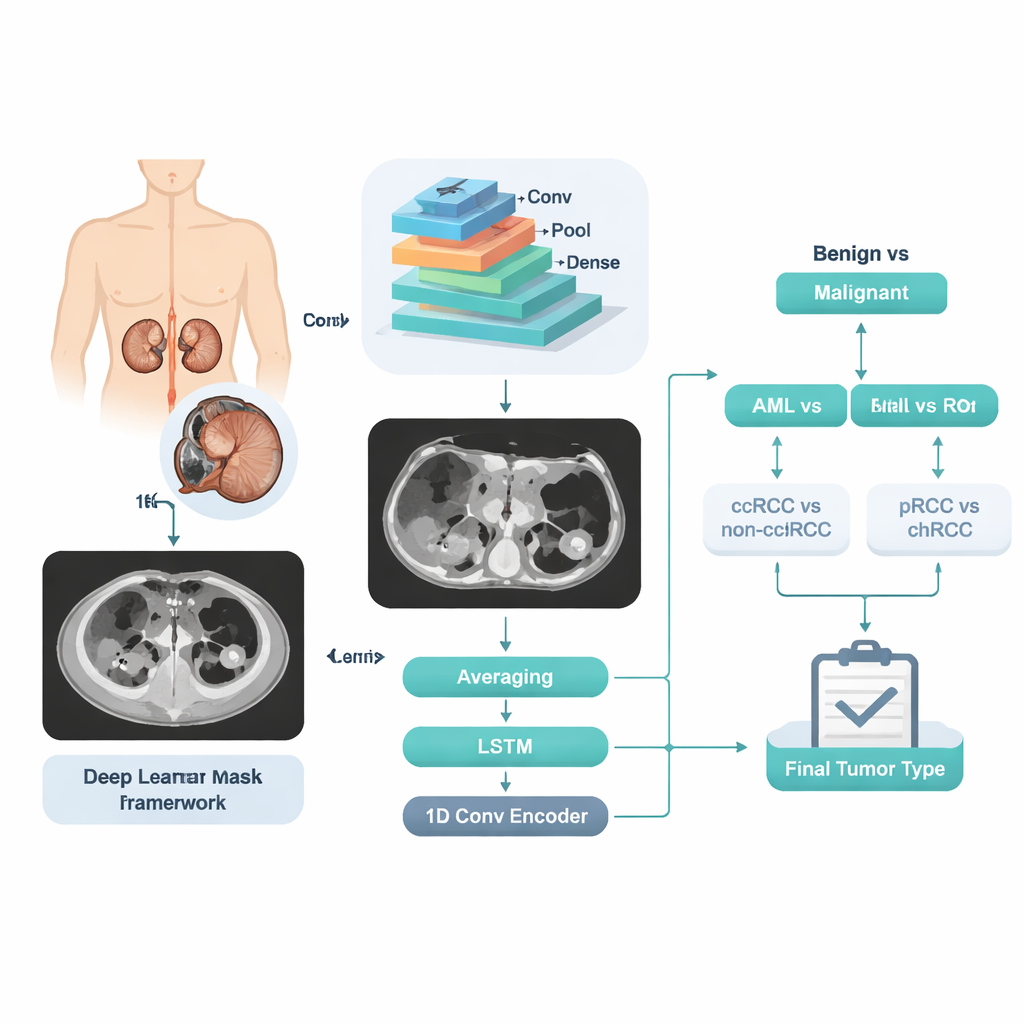

A step‑by‑step digital second opinion

Instead of asking the computer to jump directly from an image to one of five labels, the researchers designed a stepwise decision pipeline that mirrors a doctor’s reasoning. First, the system decides whether a tumor is benign or malignant. If benign, a second decision separates AML from RO. If malignant, another decision splits ccRCC from the other RCC types, followed by a final step that distinguishes pRCC from chRCC. At each step, a powerful image-analysis engine called a convolutional neural network examines many slices from the same patient. Its internal numerical “features” are then processed in three different ways: by simple averaging of slice-level predictions, by a sequence-aware model that looks at how the tumor changes across slices, and by a compact encoding network that summarizes the whole stack into a single signature. The three opinions are blended into one final probability for that stage.
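The stepwise logic above can be written as a small decision cascade. The sketch below is not the authors' implementation: the function names, the stage keys, the equal blending weights, and the 0.5 threshold are all assumptions, because the article says only that the three opinions are "blended". The probabilities are made-up numbers standing in for real network outputs.

```python
from statistics import mean


def blend(slice_avg_prob, sequence_prob, encoder_prob, weights=(1 / 3, 1 / 3, 1 / 3)):
    """Combine the three stage-level probabilities into one.

    Equal weights are an assumption; the article does not give the rule.
    """
    probs = (slice_avg_prob, sequence_prob, encoder_prob)
    return sum(w * p for w, p in zip(weights, probs))


def classify(stage_probs, threshold=0.5):
    """Walk the four-stage decision tree and return one of five labels.

    stage_probs holds one blended probability per stage (hypothetical keys):
      "malignant"      -> P(malignant)
      "aml_vs_ro"      -> P(AML), used only if the tumor is judged benign
      "ccrcc_vs_other" -> P(ccRCC), used only if judged malignant
      "prcc_vs_chrcc"  -> P(pRCC), used only if judged non-ccRCC
    """
    if stage_probs["malignant"] < threshold:
        return "AML" if stage_probs["aml_vs_ro"] >= threshold else "RO"
    if stage_probs["ccrcc_vs_other"] >= threshold:
        return "ccRCC"
    return "pRCC" if stage_probs["prcc_vs_chrcc"] >= threshold else "chRCC"


# Made-up per-slice malignancy probabilities for one patient.
slice_probs = [0.9, 0.8, 0.7]
p_malignant = blend(mean(slice_probs), sequence_prob=0.85, encoder_prob=0.75)

label = classify({
    "malignant": p_malignant,
    "aml_vs_ro": 0.5,
    "ccrcc_vs_other": 0.2,
    "prcc_vs_chrcc": 0.9,
})
```

With these toy numbers the blended malignancy probability clears the threshold, the ccRCC stage does not, and the final stage favors pRCC. Each stage only has to solve a two-way problem, which is the main appeal of a cascade over a single five-way classifier.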

How well the AI system performed

On their main test set, the combined system reached 96.4% accuracy in separating benign from malignant tumors, with no benign cases wrongly labeled as cancer and only a small number of cancers missed. When asked to tell the two benign types apart, it achieved 100% accuracy. The more subtle tasks (distinguishing ccRCC from the other RCC types, and papillary from chromophobe cancers) were harder, but the system still reached accuracies above 90%. Importantly, the authors also tested their trained model on an entirely different public dataset collected elsewhere. Performance remained high, suggesting that the method is not just memorizing one hospital’s images but can generalize to new patients and scanners.
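To make the terms concrete, accuracy counts all correct calls, while "no benign cases wrongly labeled as cancer" corresponds to a specificity of 100% and "cancers missed" lowers sensitivity. The sketch below computes the three measures for a benign-versus-malignant stage. The function name `binary_metrics` and the ten toy labels are invented for illustration and are not the study's data.

```python
def binary_metrics(true_labels, predicted_labels, positive="malignant"):
    """Return accuracy, sensitivity, and specificity for a two-class task.

    Assumes both classes occur in true_labels; otherwise a ratio is undefined.
    """
    pairs = list(zip(true_labels, predicted_labels))
    tp = sum(t == positive and p == positive for t, p in pairs)  # cancers caught
    tn = sum(t != positive and p != positive for t, p in pairs)  # benign cleared
    fp = sum(t != positive and p == positive for t, p in pairs)  # benign flagged
    fn = sum(t == positive and p != positive for t, p in pairs)  # cancers missed
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


# Toy example: 6 malignant and 4 benign cases, with one cancer missed.
truth = ["malignant"] * 6 + ["benign"] * 4
predicted = ["malignant"] * 5 + ["benign"] + ["benign"] * 4
metrics = binary_metrics(truth, predicted)
```

In this toy case the accuracy is 0.9, the specificity is 1.0 because no benign case is flagged, and the sensitivity is 5/6 because one cancer is missed.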

What this could mean for patients

In plain terms, this research shows that an AI “assistant” can read kidney CT scans in a way that closely matches, and in some respects surpasses, current manual methods for separating harmless from dangerous tumors and identifying important cancer subtypes. If further validated, such a system could help radiologists avoid unnecessary biopsies and surgeries for benign tumors while giving more confidence in early treatment decisions for aggressive cancers. For patients, that could mean fewer invasive procedures, faster answers, and more personalized care based on the exact nature of their kidney tumor.

Citation: Abdeltawab, H., Alksas, A., Ghazal, M. et al. A deep learning ensemble framework for multi-subtype renal tumor classification using contrast-enhanced CT. Sci Rep 16, 6657 (2026). https://doi.org/10.1038/s41598-026-37283-7

Keywords: kidney cancer, renal tumor imaging, deep learning, CT scan, computer-aided diagnosis