Clear Sky Science

SSG–CAM: enhancing visual interpretability through refined second-order gradients and evolutionary multi-layer fusion

Why Seeing Inside AI Matters

Modern image-recognition systems can spot tumors, traffic signs, or tiny parasites in blood cells with superhuman speed—but they rarely show us exactly why they made a decision. This "black box" behavior is especially troubling in medicine and safety-critical fields, where a wrong guess can have serious consequences. The paper introduces a new way to make deep-learning models visually explain themselves more clearly and reliably, helping humans see which parts of an image truly drove the AI’s choice.

From Fuzzy Heatmaps to Sharper Explanations

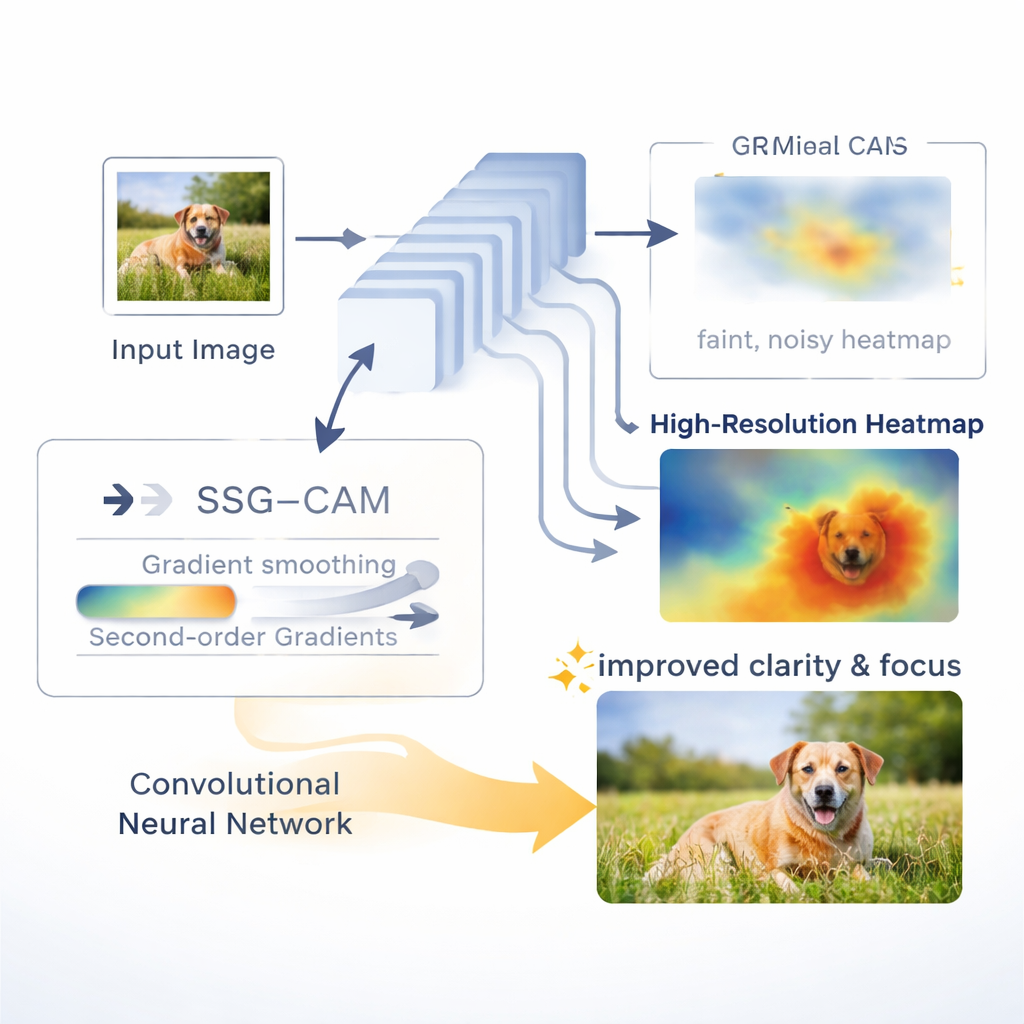

A popular family of tools called class activation maps, or CAMs, turns a neural network's inner workings into colorful heatmaps overlaid on the original image. Bright regions show where the model "looked" when deciding, for example, that an image contains a bird or a diseased cell. Existing CAM methods often rely on simple first-order gradient signals, which measure how much the model's output changes when an internal feature changes slightly. These signals can be noisy or become "saturated," meaning they stop changing even when the image details still matter. As a result, heatmaps may light up large chunks of background, miss fine details, or give inconsistent explanations from one layer to another.
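
The classic Grad-CAM recipe is a standard example of the first-order approach: each channel's gradient is averaged into a single weight, and the weighted feature maps are summed into a heatmap. The sketch below is a minimal pure-Python illustration that assumes the activations and gradients have already been extracted from a network as 2-D lists. It is not SSG–CAM itself and not any library's API; the toy numbers are hypothetical.

```python
def grad_cam(activations, gradients):
    """Grad-CAM-style heatmap from first-order gradients, normalized to [0, 1].

    activations[k] and gradients[k] are 2-D lists (same shape) for channel k:
    the feature map and the gradient of the class score with respect to it.
    """
    height, width = len(activations[0]), len(activations[0][0])
    heatmap = [[0.0] * width for _ in range(height)]
    for act, grad in zip(activations, gradients):
        # Channel weight = spatial average of its gradient (global average pooling).
        weight = sum(sum(row) for row in grad) / (height * width)
        for i in range(height):
            for j in range(width):
                heatmap[i][j] += weight * act[i][j]
    # ReLU: keep only regions that push the class score up.
    heatmap = [[max(v, 0.0) for v in row] for row in heatmap]
    peak = max(max(row) for row in heatmap)
    if peak > 0:
        heatmap = [[v / peak for v in row] for row in heatmap]
    return heatmap


# Two hypothetical 2x2 channels: the first supports the class, the second opposes it.
acts = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
print(grad_cam(acts, grads))  # [[1.0, 0.0], [0.0, 0.0]]
```

Because each channel collapses to one averaged number, any saturation or noise in the raw gradients flows straight into the final map, which is the weakness described above.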

A Smoother, Second Look at What the Network Sees

The authors propose Smooth Second-Order Gradient CAM, or SSG–CAM. Instead of relying only on first-order gradients, SSG–CAM also examines how those gradients themselves change: the second-order information. This extra sensitivity helps reveal which features the network's decision truly hinges on, reducing the risk that important evidence is washed out. To tame random noise, SSG–CAM smooths the gradients with a Gaussian filter, much as a slight camera blur removes speckles while preserving shapes. Finally, it combines the smoothed first- and second-order signals in a way that emphasizes strong, reliable responses and suppresses weak or inconsistent ones, producing cleaner, more focused heatmaps.
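
The smoothing step can be sketched as an ordinary separable Gaussian blur applied to both gradient maps. This is a minimal illustration under stated assumptions: the first- and second-order gradient maps are taken as already computed (for example by differentiating twice with an autograd framework), and the fusion rule in `fuse_gradients` (keep positive parts, add with a weight `alpha`, then square so strong responses dominate weak ones) is a hypothetical stand-in, since the paper's exact formula is not given here.

```python
import math


def gaussian_kernel(radius, sigma):
    """1-D Gaussian weights, normalized to sum to 1."""
    weights = [math.exp(-(x * x) / (2 * sigma * sigma)) for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def smooth(grid, radius=1, sigma=1.0):
    """Separable 2-D Gaussian blur; edge pixels are repeated (clamped)."""
    kernel = gaussian_kernel(radius, sigma)
    h, w = len(grid), len(grid[0])

    def clamp(v, lo, hi):
        return max(lo, min(v, hi))

    # Blur along each row, then along each column of the row-blurred result.
    rows = [[sum(kernel[t + radius] * grid[i][clamp(j + t, 0, w - 1)]
                 for t in range(-radius, radius + 1))
             for j in range(w)] for i in range(h)]
    return [[sum(kernel[t + radius] * rows[clamp(i + t, 0, h - 1)][j]
                 for t in range(-radius, radius + 1))
             for j in range(w)] for i in range(h)]


def fuse_gradients(first, second, alpha=0.5, radius=1, sigma=1.0):
    """HYPOTHETICAL fusion: smooth both maps, keep positive parts,
    add them with weight `alpha`, then square to favor strong responses."""
    s1 = smooth(first, radius, sigma)
    s2 = smooth(second, radius, sigma)
    return [[(max(a, 0.0) + alpha * max(b, 0.0)) ** 2 for a, b in zip(r1, r2)]
            for r1, r2 in zip(s1, s2)]


# Toy 3x3 gradient maps, assumed to come from an autograd framework.
first = [[0.0, 0.2, 0.0], [0.1, 1.0, -0.3], [0.0, 0.2, 0.0]]
second = [[0.0, -0.1, 0.0], [0.0, 0.8, 0.0], [0.0, 0.1, 0.0]]
fused = fuse_gradients(first, second)
```

Squaring is one simple way to widen the gap between strong and weak responses; other monotone emphases would fit the description equally well.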

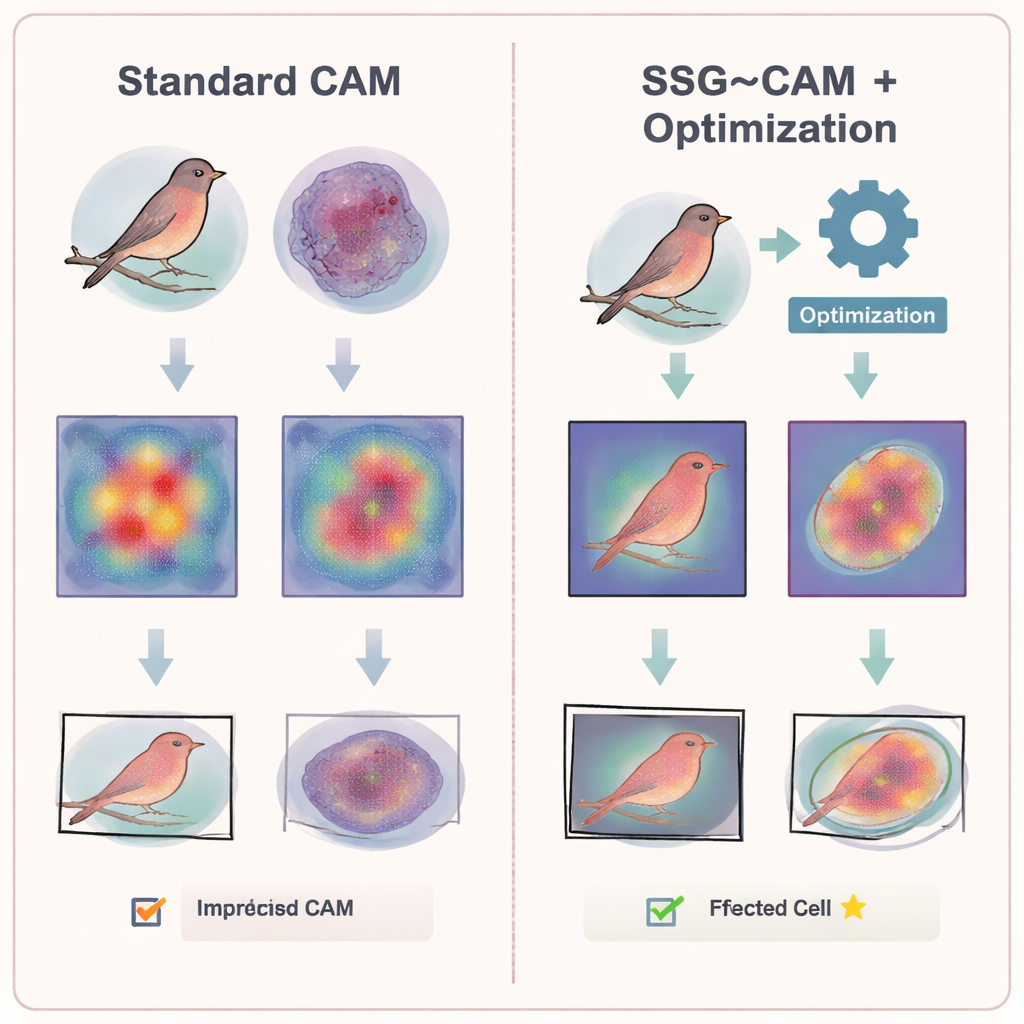

Letting Algorithms Pick the Best Layers

Deep networks do not think in a single step: early layers capture edges and textures, while deeper layers encode whole objects or concepts. Many CAM methods try to merge information from several layers, but they often rely on hand-picked layers or fixed rules. The study shows that naïvely stacking all layers together can actually hurt performance, adding low-level noise that blurs the final explanation. To solve this, the authors pair SSG–CAM with an optimization strategy called differential evolution, creating the DE–SSG–CAM framework. This algorithm automatically searches across combinations of feature layers and a few key settings, aiming to find the mixture that best matches real object shapes in a small labeled set. Once found, these settings can be reused, giving strong multi-layer explanations without costly manual tuning.
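
The layer search can be illustrated with textbook differential evolution (the DE/rand/1/bin variant). This is a minimal sketch under assumptions not taken from the paper: each candidate is a vector of per-layer fusion weights in [0, 1] (the extra "key settings" the authors also tune are omitted), the fitness is a soft IoU between the fused heatmap and a labeled mask, and the toy layer maps and all function names are hypothetical.

```python
import random


def soft_iou(pred, target):
    """Soft IoU between two equal-length lists of values in [0, 1]."""
    inter = sum(min(p, t) for p, t in zip(pred, target))
    union = sum(max(p, t) for p, t in zip(pred, target))
    return inter / union if union > 0 else 0.0


def fuse(layer_maps, weights):
    """Weighted average of flattened per-layer heatmaps."""
    total = sum(weights) or 1.0  # avoid dividing by zero when all weights are 0
    n = len(layer_maps[0])
    return [sum(w * m[i] for w, m in zip(weights, layer_maps)) / total for i in range(n)]


def differential_evolution(fitness, dim, pop_size=12, gens=40, f=0.6, cr=0.9, seed=0, init=None):
    """DE/rand/1/bin that maximizes `fitness` over the box [0, 1]^dim."""
    rng = random.Random(seed)
    pop = [[rng.random() for _ in range(dim)] for _ in range(pop_size)]
    if init is not None:
        pop[0] = list(init)  # seed one known candidate, e.g. uniform weights
    scores = [fitness(x) for x in pop]
    for _ in range(gens):
        for i in range(pop_size):
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            forced = rng.randrange(dim)  # ensure at least one gene comes from the mutant
            trial = []
            for d in range(dim):
                if d == forced or rng.random() < cr:
                    mutant = pop[a][d] + f * (pop[b][d] - pop[c][d])
                    trial.append(min(1.0, max(0.0, mutant)))  # clip to bounds
                else:
                    trial.append(pop[i][d])
            score = fitness(trial)
            if score >= scores[i]:  # greedy selection keeps the better vector
                pop[i], scores[i] = trial, score
    best = max(range(pop_size), key=lambda k: scores[k])
    return pop[best], scores[best]


# Toy data: three hypothetical layer heatmaps over a 6-pixel image.
mask = [0, 0, 1, 1, 0, 0]
layers = [
    [0.9, 0.8, 0.7, 0.9, 0.8, 0.9],  # shallow layer: lights up background too
    [0.1, 0.2, 0.9, 0.8, 0.1, 0.0],  # middle layer: close to the object
    [0.0, 0.3, 1.0, 0.6, 0.3, 0.0],  # deep layer: coarse but on target
]


def layer_fitness(weights):
    return soft_iou(fuse(layers, weights), mask)


uniform = [1.0, 1.0, 1.0]
best_w, best_score = differential_evolution(layer_fitness, dim=3, init=uniform)
```

Because DE only replaces a candidate when the trial scores at least as well, seeding the population with uniform weights guarantees the search never returns a mixture worse than simply averaging every layer.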

Putting the Method to the Test

The researchers put SSG–CAM and DE–SSG–CAM through a series of demanding tests. On standard image benchmarks, the new method made weakly supervised object localization—drawing boxes around objects using only image-level labels—more accurate than several popular CAM variants. It also improved weakly supervised semantic segmentation, which asks the model to label every pixel without providing detailed training masks. In an "image perturbation" experiment, the team blurred out the regions highlighted by each method. When they removed areas selected by SSG–CAM, the network’s accuracy dropped the most, indicating that these highlighted regions were truly critical to the model’s decision, not just decorative hotspots.
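
The perturbation test boils down to removing what a heatmap ranks highest and measuring how far the model's score falls. The sketch below assumes a toy linear "classifier" and replaces the top-k pixels with a constant baseline, a simplification of the blurring described above; the function names and numbers are hypothetical.

```python
def model_score(pixels, weights):
    """Stand-in classifier: a linear class score over flattened pixels."""
    return sum(w * p for w, p in zip(weights, pixels))


def perturbation_drop(pixels, heatmap, weights, k, baseline=0.0):
    """Replace the k highest-ranked pixels with `baseline`; return the score drop."""
    top_k = sorted(range(len(pixels)), key=lambda i: heatmap[i], reverse=True)[:k]
    perturbed = list(pixels)
    for i in top_k:
        perturbed[i] = baseline
    return model_score(pixels, weights) - model_score(perturbed, weights)


pixels = [1.0] * 6
weights = [0.0, 0.1, 2.0, 1.5, 0.1, 0.0]   # the toy model relies on pixels 2 and 3
faithful = [0.0, 0.1, 0.9, 0.8, 0.1, 0.0]  # heatmap that highlights those pixels
decoy = [1.0, 0.9, 0.0, 0.1, 0.8, 1.0]     # heatmap that highlights background
print(perturbation_drop(pixels, faithful, weights, k=2))  # about 3.5
print(perturbation_drop(pixels, decoy, weights, k=2))     # 0.0
```

A larger drop means the highlighted pixels mattered more to the model, which is the logic behind reading SSG–CAM's larger accuracy drop as evidence of a more faithful explanation.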

Finding Tiny Parasites in Blood Cells

The most striking application comes from biomedical imaging. The authors used their approach to locate malaria parasites inside red blood cell images, a task where the infected regions can be minuscule and irregular. Using only image-level infection labels for training, DE–SSG–CAM produced pseudo-masks that lined up closely with expert-drawn outlines, reaching a mean Intersection over Union of 62.38%—a strong result for such a challenging, weakly labeled problem. The framework also transferred well to a different network type, ResNet34, showing that the technique is not tied to a single architecture and can adapt across designs.
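
Mean Intersection over Union compares a predicted mask with an expert mask: for each class, the overlapping area is divided by the combined area, and the per-class scores are averaged. The sketch below assumes two classes (background and parasite), averages over both, and turns a heatmap into a pseudo-mask with a hypothetical fixed threshold of 0.5; the summary does not say exactly how the paper thresholds or averages.

```python
def to_pseudo_mask(heatmap, threshold=0.5):
    """Binarize a [0, 1] heatmap: 1 = parasite, 0 = background."""
    return [[1 if v >= threshold else 0 for v in row] for row in heatmap]


def mean_iou(pred, target, num_classes=2):
    """Average over classes of (intersection area) / (union area)."""
    ious = []
    for c in range(num_classes):
        inter = union = 0
        for prow, trow in zip(pred, target):
            for p, t in zip(prow, trow):
                if p == c and t == c:
                    inter += 1
                if p == c or t == c:
                    union += 1
        if union > 0:  # skip classes absent from both masks
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0


heatmap = [[0.9, 0.6, 0.1], [0.2, 0.7, 0.0]]  # hypothetical CAM output
expert = [[1, 0, 0], [0, 1, 0]]               # hypothetical expert outline
pseudo = to_pseudo_mask(heatmap)              # [[1, 1, 0], [0, 1, 0]]
print(mean_iou(pseudo, expert))               # (3/4 + 2/3) / 2, about 0.708
```

Here the background scores 3/4 and the parasite 2/3; because tiny parasites cover few pixels, even one or two misplaced pixels move the parasite IoU sharply, which is why a mean IoU above 60% is notable for this task.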

What This Means for Everyday Users

For non-specialists, the key message is that these methods make AI’s "reasoning" more visible and trustworthy. SSG–CAM offers sharper, less noisy heatmaps that better match what humans would consider the true object or lesion, while DE–SSG–CAM automatically learns how to combine information from different network depths. Together, they move visual explanations a step closer to something doctors, engineers, and regulators can rely on when asking, "Why did the model say this image shows disease—or danger?"

Citation: Chen, Z., Zhang, Y.J., Pan, L. et al. SSG–CAM: enhancing visual interpretability through refined second-order gradients and evolutionary multi-layer fusion. Sci Rep 16, 6848 (2026). https://doi.org/10.1038/s41598-026-37278-4

Keywords: explainable AI, class activation maps, deep learning visualization, medical image analysis, object localization