Clear Sky Science

Intelligent generation of product bionic image forms via multimodal emotion-weighted quantification

Why Friendlier Robots Matter

Many of us are beginning to share our homes and workplaces with talking speakers, chatbots, and simple robots. Yet these products often feel cold or generic, more like tools than companions. This study explores how to systematically design robots that feel friendlier and more emotionally appealing by borrowing visual cues from nature—specifically, from a famously friendly dog breed—while using modern artificial intelligence to keep the process controllable and grounded in real user feelings.

Turning Feelings Into Design Clues

Instead of asking designers to rely on intuition alone, the researchers first set out to measure how people emotionally respond to different shapes and images. They focused on a struggling commercial companion robot and asked users what kind of look they most wanted. From many descriptive words, “friendly” emerged as the top priority. To understand what “friendly” actually looks like, they showed people images of popular cat and dog breeds and asked which animal best matched that feeling. The clear winner was the Samoyed, a fluffy white dog known for its round face and smiling expression.

Reading Eyes, Faces, and Voices

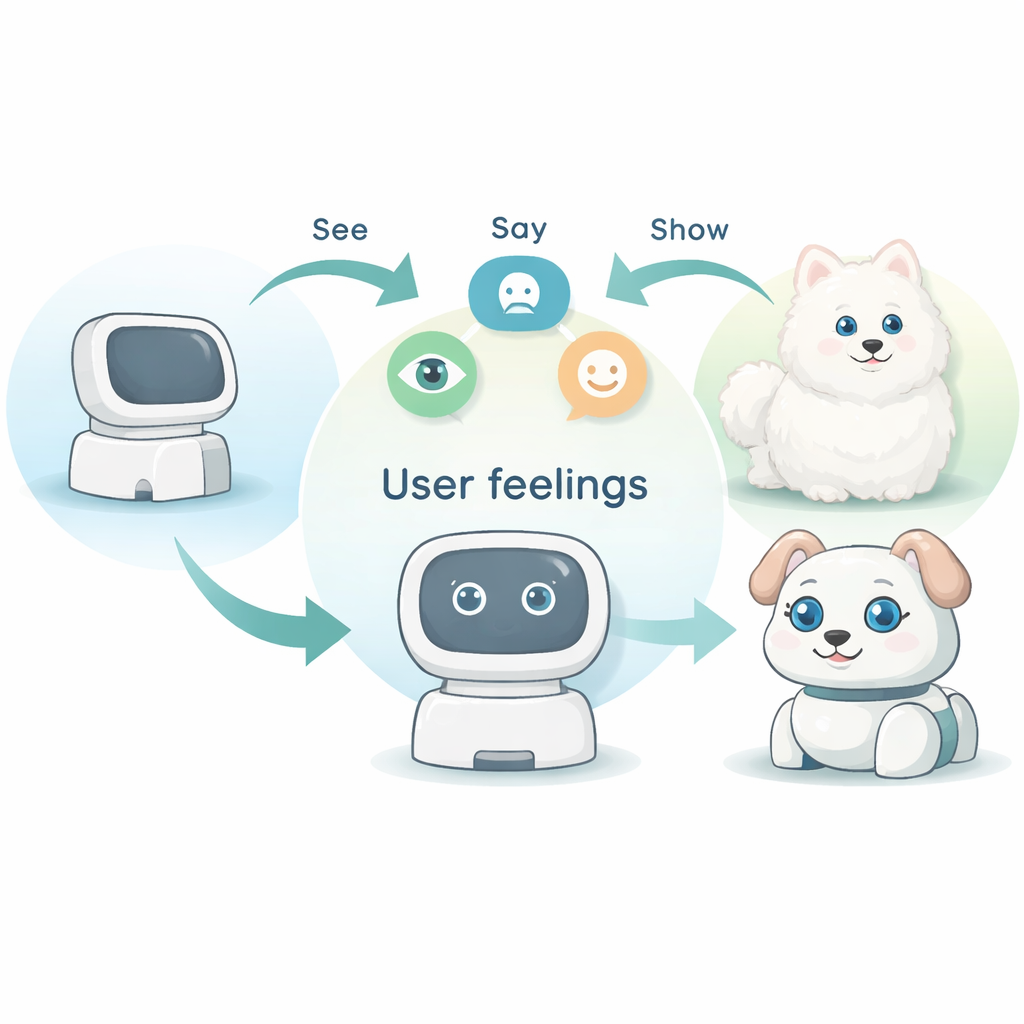

Next, the team built a detailed experiment they call BI-MEC, which looks at how people react to images on three levels at once: where their eyes move, how their facial expressions change, and what they say out loud. Participants viewed pictures of Samoyeds at different ages while an eye-tracker recorded which parts of the dog they focused on. At the same time, specialized software analyzed tiny changes in their faces and the tone of their voices to estimate emotions such as joy, calmness, interest, or boredom. The researchers then combined these signals with a psychology-based emotion dictionary to calculate a single “emotional score” for each picture, indicating how strongly and positively it made people feel.
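One way to picture how several signals could collapse into a single number is a weighted sum. The sketch below is only an illustration under stated assumptions: the channel names, weights, valence values, and sample readings are all hypothetical and do not come from the study, whose actual formula is not described here.

```python
# Hypothetical sketch: fuse three signal channels into one score per image.
# Every name and number below is an illustrative assumption, not study data.

# Assumed valence lookup standing in for a psychology-based emotion dictionary
# (positive = pleasant, negative = unpleasant).
VALENCE = {"joy": 1.0, "interest": 0.6, "calmness": 0.4, "boredom": -0.5}

# Assumed relative weight of each channel; the weights sum to 1.
CHANNEL_WEIGHTS = {"gaze": 0.3, "face": 0.4, "voice": 0.3}


def expected_valence(emotion_probs):
    """Average valence of one channel, given its emotion probabilities."""
    return sum(VALENCE[emotion] * p for emotion, p in emotion_probs.items())


def emotional_score(gaze_share, face_probs, voice_probs):
    """Weighted sum of gaze attention and face/voice valence for one image."""
    return (
        CHANNEL_WEIGHTS["gaze"] * gaze_share
        + CHANNEL_WEIGHTS["face"] * expected_valence(face_probs)
        + CHANNEL_WEIGHTS["voice"] * expected_valence(voice_probs)
    )


# Made-up readings for a single picture.
score = emotional_score(
    gaze_share=0.8,                           # share of viewing time on the face
    face_probs={"joy": 0.7, "calmness": 0.3},
    voice_probs={"joy": 0.5, "calmness": 0.5},
)
print(round(score, 3))
```

Changing the assumed weights shifts how much each channel counts, which is the kind of tuning a real study would have to justify with data.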

Distilling a “Friendly Dog” Into Simple Lines

When the researchers compared emotional scores and eye-tracking heat maps, one Samoyed image clearly stood out as the most uplifting. The hottest areas on the heat map showed that people mainly stared at the face, especially the eyes, mouth, ears, and surrounding fur, while the body shape drew far less attention. Using this information, the team created an “image stimulus graphic”: a very simplified line drawing that preserved only the most emotionally important features, such as big eyes, upright ears, and a tongue, all in proportions that felt friendly. A follow-up online test confirmed that most people could correctly match this stripped-down drawing to a Samoyed among many dog photos, showing that the essential “friendly dog” signal had been captured.

Letting AI Blend Dogs and Robots

With a simplified Samoyed drawing and a matching line version of the companion robot, the researchers turned to an AI system called StyleGAN. This tool is excellent at learning how visual features can be smoothly mixed and morphed. They trained StyleGAN on enhanced sets of robot and dog‑inspired line images and then used a slider-like control in the system’s inner “latent space” to blend between the two. Unlike another AI method they tested (CycleGAN), which produced distorted and unusable shapes, StyleGAN generated a series of designs that gradually shifted from pure dog to pure robot while keeping clean outlines and recognizable features.
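The "slider" idea can be sketched as linear interpolation between two points in latent space. This is a minimal illustration, not the researchers' code: the three-number vectors are made-up stand-ins (real StyleGAN latent codes are far longer), and rendering each blended code would require a trained generator, which is omitted here.

```python
# Hypothetical sketch of latent-space blending with plain Python lists.
# The vectors are tiny made-up stand-ins for real latent codes.

def lerp(z_a, z_b, t):
    """Linear interpolation: t=0 returns z_a, t=1 returns z_b."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


def blend_path(z_a, z_b, steps):
    """Evenly spaced latent codes from z_a to z_b, including both ends."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [lerp(z_a, z_b, i / (steps - 1)) for i in range(steps)]


z_dog = [0.0, 2.0, -1.0]    # assumed latent code for the dog line drawing
z_robot = [4.0, 0.0, 1.0]   # assumed latent code for the robot line drawing

path = blend_path(z_dog, z_robot, steps=5)
for code in path:
    print(code)
```

Each code along the path would yield one design in the dog-to-robot series; the intermediate codes correspond to the hybrid forms.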

Friendlier Forms, Measurable Impact

From the StyleGAN results, the team selected two intermediate designs that looked both clearly robotic and clearly influenced by the Samoyed’s friendly face. These were turned into polished 3D models and compared with the original product design in user tests. People rated all three on friendliness, overall beauty, and sense of innovation. The best new design achieved a friendliness score about 22.6 percent higher than the original, while also scoring better on aesthetics and originality. In practical terms, this shows that carefully measured human emotions, distilled into simple visual cues and then amplified by AI, can produce product forms that people feel closer to—without leaving everything to guesswork or trend-chasing.
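A figure like "22.6 percent higher" is most naturally read as a relative gain over the original design's score, although the summary does not spell out the calculation. The sketch below shows that reading with made-up ratings chosen only to illustrate the arithmetic; they are not the study's numbers.

```python
# Hypothetical sketch: relative improvement of a new rating over a baseline.
# The ratings used below are illustrative, not results from the study.

def relative_gain_percent(baseline, new):
    """Percentage by which `new` exceeds `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (new - baseline) / baseline * 100.0


# A made-up rating that rises from 5.0 to 6.0 on some scale.
gain = relative_gain_percent(5.0, 6.0)
print(f"{gain:.1f} percent")
```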

What This Means for Everyday Products

For non-experts, the main message is that the “face” a product shows the world no longer has to come only from a designer’s hunch. By tracking how real users look, react, and speak, and then feeding these signals into advanced image‑generation tools, companies can create devices—from robots to appliances—that better match our desire for warmth, safety, and connection. This study’s dog‑inspired companion robot is just one example of a broader shift: designs that are both emotionally tuned to human feelings and efficiently generated with the help of AI.

Citation: Chen, X., Lin, L., Yang, M. et al. Intelligent generation of product bionic image forms via multimodal emotion-weighted quantification. Sci Rep 16, 6221 (2026). https://doi.org/10.1038/s41598-026-37257-9

Keywords: companion robots, emotion-driven design, bio-inspired products, AI-generated shapes, user-centered design