Clear Sky Science

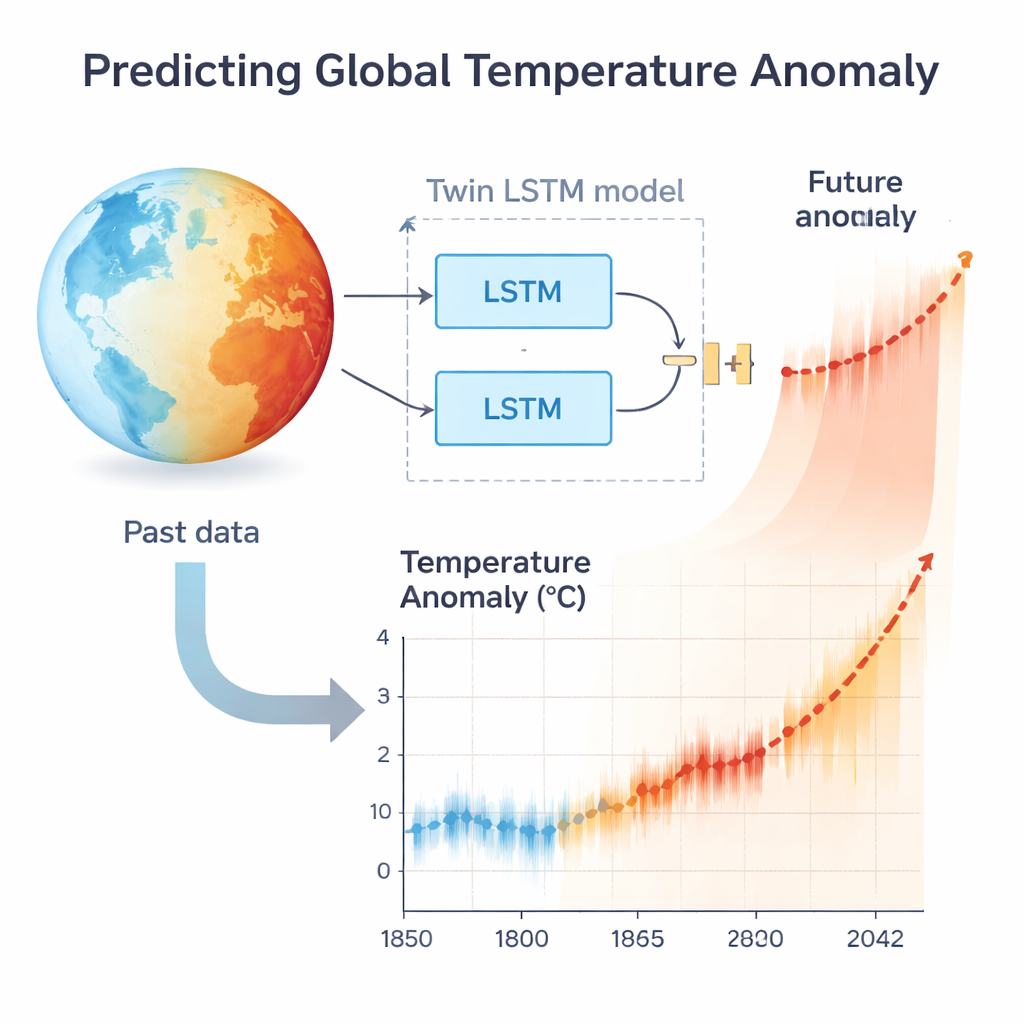

Global temperature anomaly prediction by using additive twin LSTM networks

Why a Warmer World Matters to You

Global warming can sound abstract, but its effects are anything but: rising seas, harsher heat waves, shifting storms, and pressure on food and water supplies. To prepare for what is coming, scientists need not just snapshots of today’s climate, but reliable estimates of how fast temperatures will climb in the decades ahead. This article explores a new way to use artificial intelligence to forecast how much warmer the planet is likely to become, and what that means for our near future.

From Raw Thermometers to Big-Picture Trends

Instead of working with weather reports from a single city, the researchers use a global record known as the Berkeley Earth temperature anomaly dataset. A “temperature anomaly” is simply how much warmer or cooler a given period is compared with a chosen historical baseline. Because month‑to‑month readings are noisy and heavily influenced by local quirks, the team relies on five‑year averages spanning 170 years, from the mid‑1800s to 2022. Smoothing the data this way reduces random bumps and better reveals the underlying warming trend that reflects the planet’s long‑term response to greenhouse gases and other influences.
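
The two ideas in this section, measuring against a baseline and smoothing with a running average, can be sketched in a few lines of Python. This is only an illustration: the function names, the toy readings, and the choice of a trailing window are assumptions, not details taken from the study or from the Berkeley Earth processing pipeline.

```python
from statistics import mean

def anomalies(temps, baseline):
    """Subtract the baseline-period mean from every reading."""
    ref = mean(baseline)
    return [t - ref for t in temps]

def moving_average(values, window):
    """Trailing moving average; yields len(values) - window + 1 points."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    running = sum(values[:window])
    out = [running / window]
    for i in range(window, len(values)):
        running += values[i] - values[i - window]  # slide the window by one
        out.append(running / window)
    return out

# Toy readings (hypothetical, not Berkeley Earth data)
temps = [14.0, 14.5, 13.5, 14.75, 15.25, 15.0]
baseline = temps[:4]   # pretend the first four readings form the baseline
anoms = anomalies(temps, baseline)
smoothed = moving_average(anoms, window=3)
```

With monthly data, a five-year average would correspond to `window=60`. The smoothed series is shorter than the raw one because the first few points lack a full window behind them.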

Teaching a Neural Network to Remember the Climate

To capture that trend and project it forward, the authors turn to a kind of artificial neural network called Long Short‑Term Memory, or LSTM. LSTMs are designed to handle sequences—such as words in a sentence or temperatures over time—by deciding which pieces of past information to keep and which to forget. Traditional LSTM and related models have done well at short‑term prediction, such as guessing the next data point. But when their own guesses are fed back as input to forecast many steps into the future, small errors pile up and the long‑range outlook can drift badly away from reality.
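
To make the keep-or-forget idea concrete, here is a minimal sketch of one step of a standard LSTM cell, reduced to a single input and a single hidden unit so it runs on plain Python numbers. The weights are untrained placeholders chosen for illustration, and the parameter layout is an assumption of this sketch rather than the configuration used in the paper.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell.

    p maps each gate name to (input weight, recurrent weight, bias).
    """
    def pre(name):
        w, u, b = p[name]
        return w * x + u * h_prev + b

    f = sigmoid(pre("forget"))        # how much of the old memory to keep
    i = sigmoid(pre("input"))         # how much new information to write
    g = math.tanh(pre("candidate"))   # the new information itself
    o = sigmoid(pre("output"))        # how much of the memory to expose
    c = f * c_prev + i * g            # updated cell memory
    h = o * math.tanh(c)              # updated hidden state, always in (-1, 1)
    return h, c

# Hypothetical, untrained weights
params = {
    "forget": (0.5, 0.1, 1.0),
    "input": (0.6, -0.2, 0.0),
    "candidate": (1.0, 0.3, 0.0),
    "output": (0.4, 0.2, 0.0),
}

h, c = 0.0, 0.0
for x in [0.1, 0.2, 0.3]:   # a short toy input sequence
    h, c = lstm_step(x, h, c, params)
```

When the forget gate is close to 1 and the input gate close to 0, the cell memory passes through a step almost unchanged, which is what lets an LSTM carry information across long stretches of a sequence.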

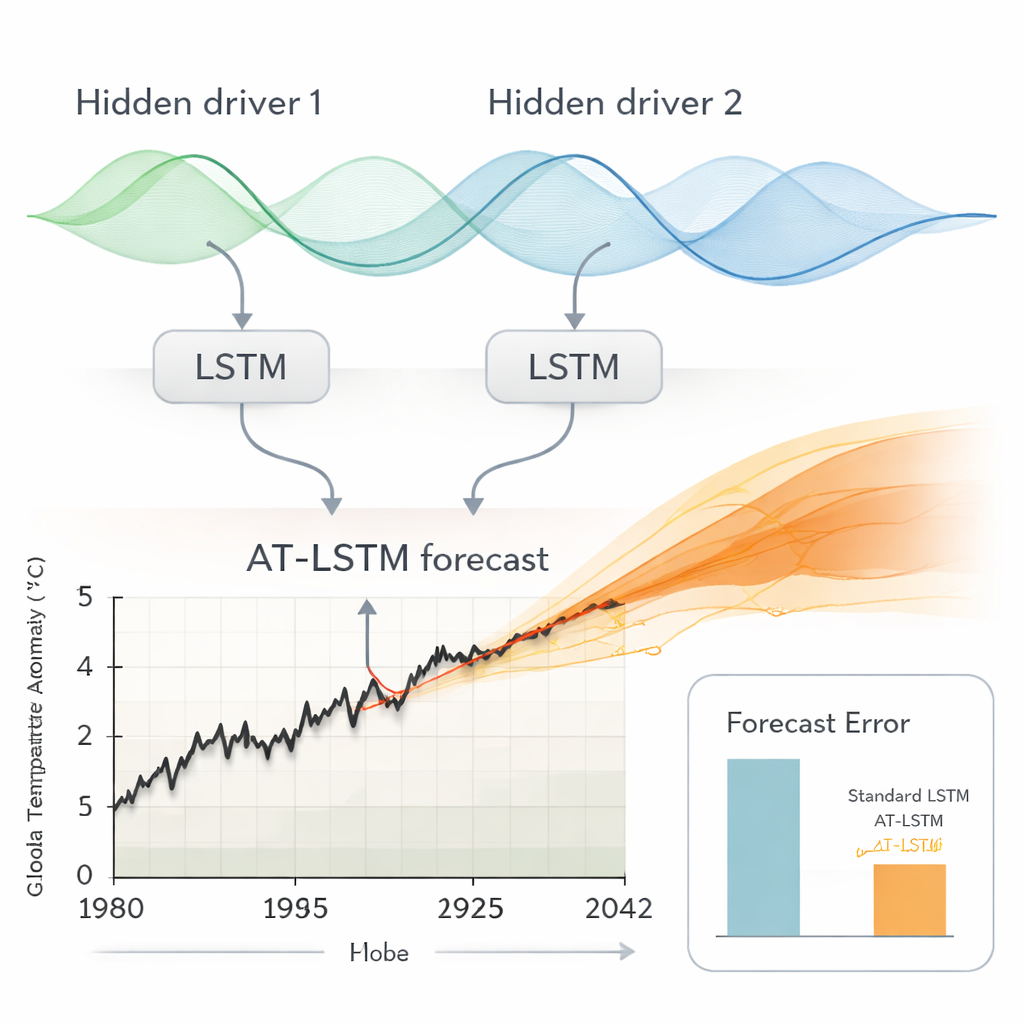

Splitting Climate Signals into Twin Streams

The central innovation of this work is an Additive Twin LSTM (AT‑LSTM). Instead of one LSTM trying to mimic every twist and turn of the climate record, the model uses two parallel LSTM branches. Each branch is free to focus on different hidden drivers in the data, for example slow warming from greenhouse gases versus faster ups‑and‑downs tied to natural climate swings. The outputs of these twin branches are then added together and passed through a final “decoder” network that turns their combined signal into a temperature anomaly forecast. This twin design aligns with how climate scientists think about multiple, partly independent processes in the Earth system. It also stretches the useful range of the network’s internal signals, helping it stay more stable over long forecasting horizons.
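
The additive structure described above can be sketched as a forward pass in plain Python. Everything here is a simplified assumption made for illustration: each branch is a one-unit LSTM, the decoder is a single linear map rather than a full network, and the weights are untrained placeholders. It is not the authors' implementation.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def run_lstm(seq, p):
    """Run a scalar LSTM over seq and return its final hidden state.

    p maps gate names "f", "i", "g", "o" to (input weight, recurrent weight, bias).
    """
    h = c = 0.0
    for x in seq:
        z = {k: w * x + u * h + b for k, (w, u, b) in p.items()}
        f, i, o = sigmoid(z["f"]), sigmoid(z["i"]), sigmoid(z["o"])
        c = f * c + i * math.tanh(z["g"])
        h = o * math.tanh(c)
    return h

def twin_forecast(seq, branch_a, branch_b, decoder):
    """Add the two branch outputs, then decode the sum into one prediction."""
    combined = run_lstm(seq, branch_a) + run_lstm(seq, branch_b)
    weight, bias = decoder
    return weight * combined + bias

# Hypothetical branch weights: one with a long memory, one more reactive
slow = {"f": (0.0, 0.0, 3.0), "i": (0.2, 0.0, -1.0), "g": (1.0, 0.0, 0.0), "o": (0.0, 0.0, 1.0)}
fast = {"f": (0.0, 0.0, -1.0), "i": (0.8, 0.0, 1.0), "g": (2.0, 0.0, 0.0), "o": (0.0, 0.0, 1.0)}

seq = [0.2, 0.4, 0.1, 0.5]
forecast = twin_forecast(seq, slow, fast, decoder=(1.0, 0.0))
```

Each branch output is bounded between -1 and 1, so their sum can range between -2 and 2. That wider range is one plausible reading of the "stretched" internal signal mentioned in the text, though the article itself does not spell out the mechanism.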

Putting the Model Through Its Paces

To see whether AT‑LSTM truly improves long‑term forecasting, the authors carry out a two‑stage test. First, they train the model on both synthetic benchmark series—clean, computer‑generated curves that mimic different types of warming paths—and on the historical Berkeley data. They compare how well various neural‑network designs reproduce both their training data and a separate “test” portion of each series that the models never saw during training. Many models, including some hybrids that mix LSTMs with convolutional layers, look impressive by these standard measures. However, reproducing past data is not the same as reliably peering into the future.

Judging Models by How They Forecast, Not Just Fit

The second stage is closer to real‑world use. Starting from the last observed point in the test set, each model uses its own previous prediction as the next input, stepping forward 240 months (20 years) without ever being corrected by real data. This setup reveals how quickly errors snowball. Across a suite of architectures, AT‑LSTM typically shows the smallest average forecast errors and the highest statistical scores when judged on this long‑horizon task. For the global temperature anomaly record in particular, the model’s typical error over a simulated 20‑year forecast window is about 0.07 °C, markedly lower than that of many competing deep‑learning approaches.
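
The feed-back loop in this test is easy to sketch. The Python below rolls a one-step predictor forward for 240 steps and scores the result with root-mean-square error. The predictor is a hypothetical stand-in for a trained network: it extrapolates the recent trend and adds a tiny fixed bias, so the way errors compound is easy to see. The series and the bias value are invented for illustration.

```python
import math

def recursive_forecast(one_step, history, steps):
    """Roll a one-step predictor forward, feeding each prediction back in."""
    window = list(history)
    preds = []
    for _ in range(steps):
        nxt = one_step(window)
        preds.append(nxt)
        window = window[1:] + [nxt]   # slide the window; no real data enters
    return preds

def rmse(pred, truth):
    """Root-mean-square error between two equal-length sequences."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(pred))

def biased_trend(window):
    """Hypothetical model: continue the last step's trend, plus a 0.00001 bias."""
    return window[-1] + (window[-1] - window[-2]) + 1e-5

# Toy "true" series rising by 0.01 per step (not real climate data)
history = [0.0, 0.01]
truth = [0.01 * k for k in range(2, 242)]

preds = recursive_forecast(biased_trend, history, steps=240)
first_error = preds[0] - truth[0]
last_error = preds[-1] - truth[-1]
overall = rmse(preds, truth)
```

In this toy setup the first prediction is off by only 0.00001, yet by step 240 the gap has grown to roughly 0.29, because each biased prediction also distorts the trend that the next prediction relies on. A model judged only on one-step accuracy would look nearly perfect here.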

What the Forecast Says About Our Near Future

Armed with this better‑behaved model, the authors generate 20‑year projections for global temperature anomalies from 2022 to 2042. To capture the uncertainty in how the model learns, they train 40 versions of AT‑LSTM and find that every one of them points to continued warming. By 2042, the ensemble of forecasts clusters between about 1.05 °C and 1.67 °C above the historical baseline, with an average of 1.415 °C and an estimated uncertainty of ±0.073 °C. These numbers align closely with projections from mainstream climate models and with warnings from organizations such as the Intergovernmental Panel on Climate Change. In plain language, if current patterns continue, we are likely to approach or cross the widely discussed 1.5 °C threshold within the next couple of decades. That prospect underscores the urgency of cutting greenhouse‑gas emissions and pursuing other climate‑mitigation strategies.
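
Summarizing an ensemble like this comes down to a few statistics over the final-year forecasts. The sketch below shows one way to compute them. The input values are hypothetical placeholders rather than the study's outputs, and using the sample standard deviation as the spread is an assumption, since the article does not say how the ±0.073 °C figure was derived.

```python
from statistics import mean, stdev

def summarize_ensemble(finals):
    """Summarize final-year forecasts from independently trained models."""
    return {
        "min": min(finals),
        "max": max(finals),
        "mean": mean(finals),
        "spread": stdev(finals),   # sample standard deviation (an assumption)
    }

def crossing_fraction(finals, threshold=1.5):
    """Share of ensemble members at or above a warming threshold."""
    return sum(f >= threshold for f in finals) / len(finals)

# Hypothetical values standing in for real model outputs, in °C
finals = [1.2, 1.4, 1.5, 1.6]
summary = summarize_ensemble(finals)
share_above = crossing_fraction(finals)
```

Retraining the same architecture many times from different random starting points and comparing the results is a common way to gauge how much of a forecast depends on the luck of training rather than on the data.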

Citation: Keles, C., Baran, B. & Alagoz, B.B. Global temperature anomaly prediction by using additive twin LSTM networks. Sci Rep 16, 6456 (2026). https://doi.org/10.1038/s41598-026-37255-x

Keywords: climate change, global warming, temperature anomaly, neural networks, climate forecasting