Clear Sky Science

When LLMs speak ZigBee: exploring low-latency and reasoning models for network traffic generation

Smart homes need believable practice runs

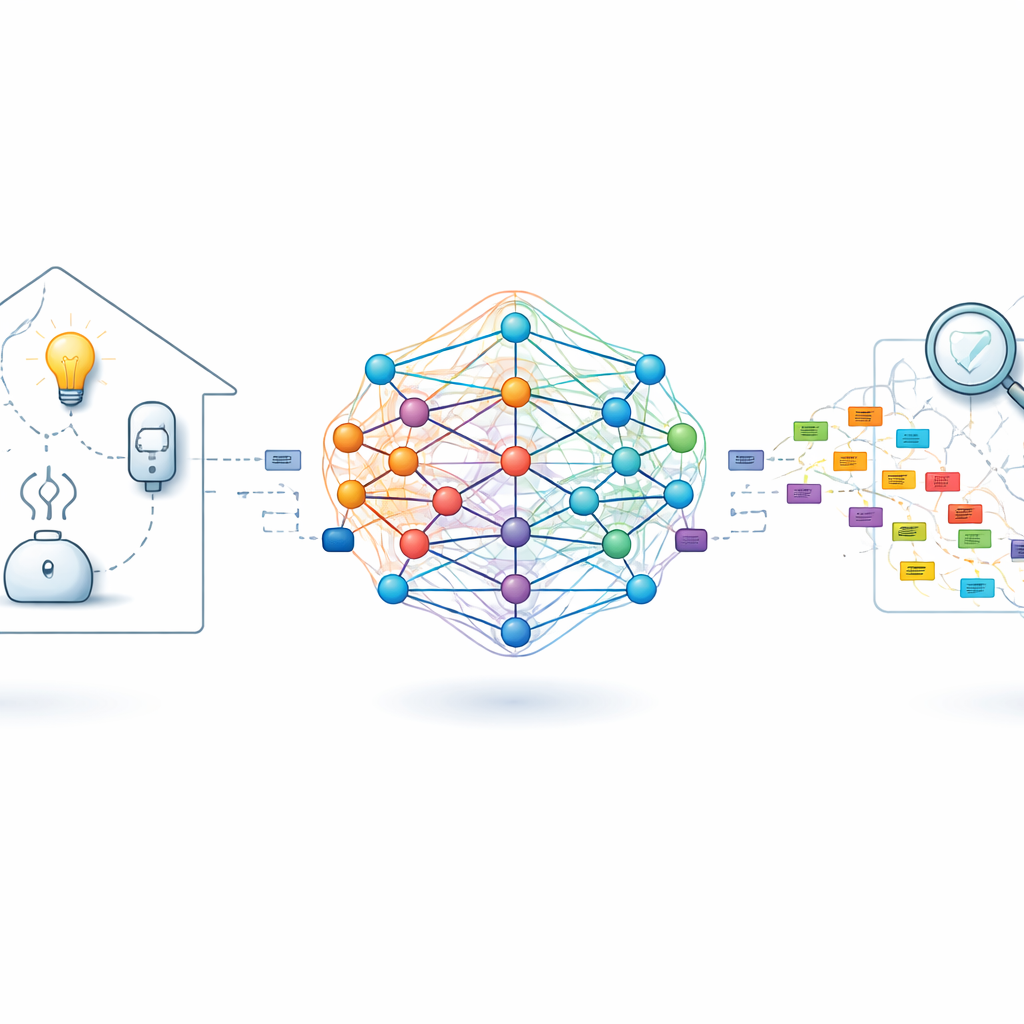

As our homes fill with smart lights, plugs, and sensors, the invisible chatter between them becomes both a convenience and a potential weak point. Engineers who build and secure these systems need safe ways to “rehearse” how networks behave under real-world conditions, including rare glitches and cyberattacks. This paper explores whether modern AI language models—the same kind used for chatbots—can be repurposed to generate realistic smart‑home network traffic, giving researchers a powerful new testbed without having to record every possible scenario from real houses.

From human language to device conversations

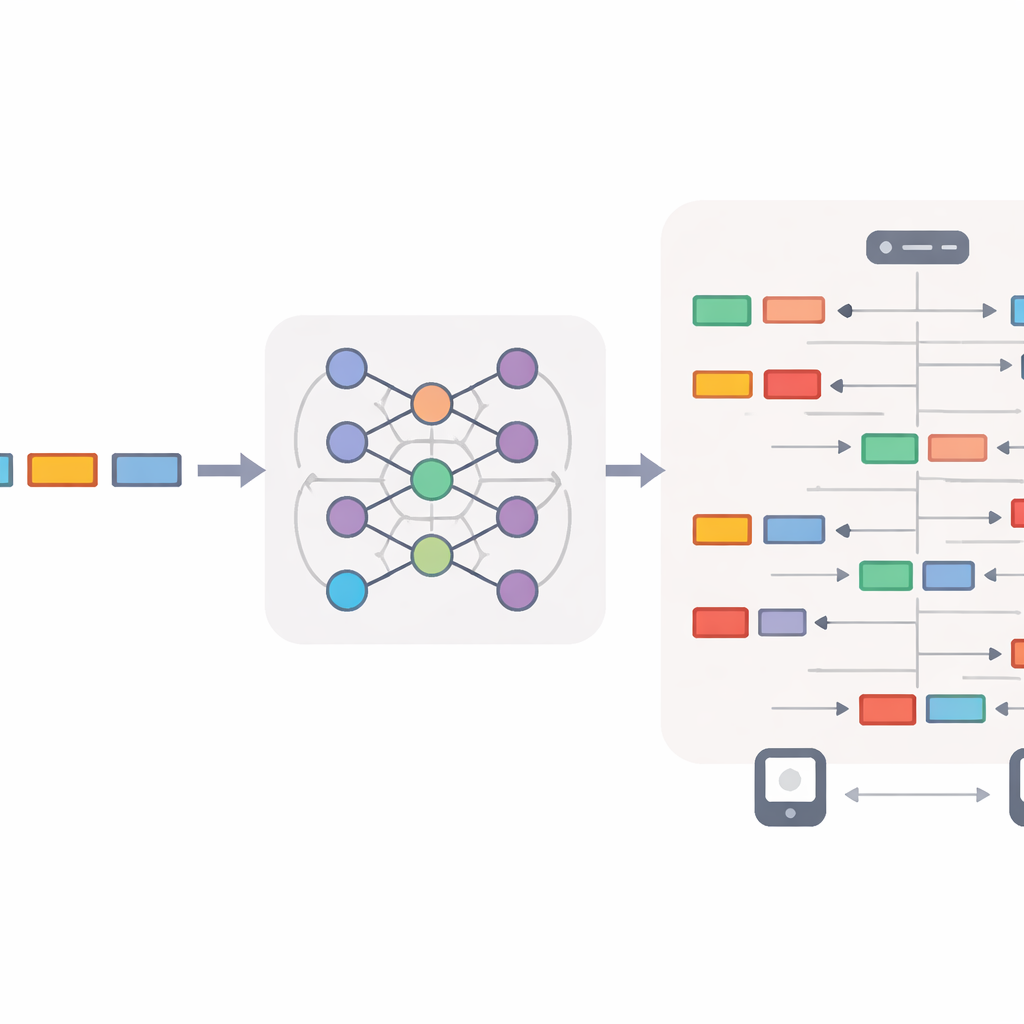

The study focuses on ZigBee, a popular wireless standard used in smart bulbs, plugs, and motion sensors. Instead of generating ordinary text, the authors feed sample ZigBee packets—time-stamped records of who talked to whom, using which protocol fields—into large language models (LLMs) from OpenAI, specifically GPT‑4.1 and GPT‑5. These models treat each packet as a structured “sentence” and learn patterns in how devices and the central hub communicate over time. The goal is not just to mimic basic statistics like average packet size, but to produce new traffic that obeys ZigBee’s strict rules, uses valid device addresses, and preserves realistic timing and request–response patterns.
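To make the idea of a packet as a structured "sentence" concrete, here is a minimal sketch of how one packet record might be flattened into a single text line and parsed back. The field set, the names `Packet`, `to_line`, and `from_line`, and the comma-separated layout are all assumptions chosen for illustration; the summary does not state the exact format the authors used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    """Hypothetical, simplified view of one ZigBee packet."""
    timestamp: float  # seconds since the start of the capture
    src: str          # short address as hex text, e.g. "0x1a2b"
    dst: str          # "0x0000" is the coordinator (hub) address in ZigBee
    frame_type: str   # illustrative label such as "data" or "ack"
    seq: int          # sequence number, an 8-bit counter (0..255)
    length: int       # frame length in bytes


def to_line(p: Packet) -> str:
    """Serialize a packet as one comma-separated 'sentence'."""
    return f"{p.timestamp:.6f},{p.src},{p.dst},{p.frame_type},{p.seq},{p.length}"


def from_line(line: str) -> Packet:
    """Parse a line produced by to_line (or by a model imitating it)."""
    ts, src, dst, frame_type, seq, length = line.strip().split(",")
    return Packet(float(ts), src, dst, frame_type, int(seq), int(length))


if __name__ == "__main__":
    bulb_to_hub = Packet(1.5, "0x1a2b", "0x0000", "data", 7, 45)
    print(to_line(bulb_to_hub))
```

A fixed column order like this keeps every line the same shape, which makes it easy to detect when a generated line has a missing or extra field.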

Two experiments: one‑way talk and full dialogue

To test this idea, the researchers design two main experiments using a large, real smart‑home dataset called ZigBeeNet, which contains about 25 million packets collected from 15 devices over 20 days. In the first experiment, they study one‑way communication from a single smart bulb to the hub, teaching the LLM using only the first ten minutes of real traffic as examples. In the second, they move to a more lifelike setting where the bulb and hub exchange messages in both directions, including broadcasts from the hub. In both cases, a small set of example packets is shown to the model inside the prompt (few‑shot learning), and the model is asked to produce longer stretches of completely new traffic that can be converted back into standard packet capture files and inspected with common network tools.
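The few-shot setup described above can be sketched roughly as follows: keep only lines from the first ten minutes of a capture, cap the number of examples, and wrap them in an instruction. The function name `build_few_shot_prompt`, the CSV column layout, the example cap, and the prompt wording are hypothetical; the authors' actual prompts are not reproduced in this summary.

```python
def build_few_shot_prompt(lines, window_s=600.0, max_examples=20,
                          minutes_to_generate=5):
    """Assemble a few-shot prompt from timestamped CSV packet lines.

    Each line is assumed to start with a timestamp in seconds, followed
    by the remaining fields. Only lines inside the first `window_s`
    seconds (ten minutes by default) are eligible as examples.
    """
    in_window = [ln for ln in lines if float(ln.split(",", 1)[0]) < window_s]
    examples = in_window[:max_examples]

    header = (
        "You generate ZigBee traffic as CSV lines.\n"
        "Columns: timestamp_s,src,dst,frame_type,seq,length\n"
    )
    task = (
        f"Continue with {minutes_to_generate} minutes of new traffic in the "
        "same format. Do not repeat the examples verbatim."
    )
    return header + "Examples:\n" + "\n".join(examples) + "\n" + task


if __name__ == "__main__":
    capture = [
        "0.100000,0x1a2b,0x0000,data,1,45",
        "599.900000,0x1a2b,0x0000,data,2,45",
        "600.000000,0x1a2b,0x0000,data,3,45",  # outside the ten-minute window
    ]
    print(build_few_shot_prompt(capture))
```

The resulting string would then be sent to whichever model is under test; that call is left out here because it depends on the provider's client library.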

Guiding the model with rules and human checks

Because a misplaced field or out‑of‑order timestamp can break the illusion of realism, the team builds a careful prompting and feedback setup. First, they filter and export the real packets, then craft prompts that spell out allowed device addresses, message types, and time formats. In an initial phase, a human expert reviews the model’s output, looking for issues such as invalid addresses, impossible sequence numbers, or gaps in time. Instead of manually fixing packets, the team translates these findings into stricter prompt rules, for example forbidding a device from sending to itself or requiring packet counts to stay within a realistic range. Once the rules are stable, the prompts are “frozen” and reused unchanged so later experiments are fully automatic and reproducible.
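The kinds of checks mentioned here (invalid addresses, a device sending to itself, impossible sequence numbers, timestamps out of order) lend themselves to a small automated pass that flags problems and maps each one to a candidate prompt rule. The sketch below is an illustration under assumptions: the tuple layout, the names `find_violations` and `rules_for`, and the rule wording are hypothetical and are not the authors' tooling, which relied on human review in the initial phase.

```python
# Hypothetical mapping from a detected problem to a stricter prompt rule.
RULE_TEXT = {
    "unknown source address": "Use only the listed device addresses as source.",
    "unknown destination address": "Use only the listed device addresses as destination.",
    "sends to itself": "A device must never send a packet to itself.",
    "sequence number out of range": "Sequence numbers must stay between 0 and 255.",
    "timestamp goes backwards": "Timestamps must never decrease.",
}


def find_violations(rows, allowed_addresses):
    """Return (row_index, problem) pairs for generated packets.

    `rows` is a list of (timestamp, src, dst, seq) tuples in output order.
    """
    problems = []
    previous_ts = None
    for i, (ts, src, dst, seq) in enumerate(rows):
        if src not in allowed_addresses:
            problems.append((i, "unknown source address"))
        if dst not in allowed_addresses:
            problems.append((i, "unknown destination address"))
        if src == dst:
            problems.append((i, "sends to itself"))
        if not 0 <= seq <= 255:
            problems.append((i, "sequence number out of range"))
        if previous_ts is not None and ts < previous_ts:
            problems.append((i, "timestamp goes backwards"))
        previous_ts = ts
    return problems


def rules_for(problems):
    """Collect the distinct prompt rules suggested by a list of problems."""
    return sorted({RULE_TEXT[name] for _, name in problems})


if __name__ == "__main__":
    generated = [
        (0.0, "0x1a2b", "0x0000", 1),
        (0.5, "0x1a2b", "0x1a2b", 2),   # self-send
        (0.4, "0x9999", "0x0000", 300),  # several problems at once
    ]
    for rule in rules_for(find_violations(generated, {"0x1a2b", "0x0000"})):
        print(rule)
```

Feeding the resulting rules back into the prompt, rather than patching individual packets, matches the workflow described above: the fix lives in the instructions, so it carries over to every later run.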

Putting LLMs up against older generators

To see whether LLMs truly add value, the authors compare GPT‑4.1 and GPT‑5 to two classic deep‑learning approaches: recurrent neural networks (RNNs) and generative adversarial networks (GANs), both adapted to generate ZigBee‑like sequences. They evaluate all models along many dimensions: how closely inter‑arrival times match real traffic, whether packets decode cleanly in standard tools, whether protocol rules and device roles are respected, how often packets repeat, and how often they exactly copy training examples. The results show that both GPT models produce almost perfectly decodable and protocol‑compliant traffic with low divergence from real timing patterns, while RNNs struggle with long‑term ordering and GANs often create unrealistically dense or semantically invalid traffic, especially for two‑way communication and longer durations.
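Three of the evaluation dimensions listed above (timing similarity, repetition, and exact copying) can be illustrated with a few lines of standard-library Python. This is a sketch under assumptions: the summary only says the models show "low divergence" in timing, so the choice of Jensen-Shannon divergence over binned inter-arrival times is a stand-in rather than the authors' stated metric, and all function names and bin edges are hypothetical.

```python
import math


def inter_arrivals(timestamps):
    """Gaps between consecutive packet timestamps."""
    return [b - a for a, b in zip(timestamps, timestamps[1:])]


def histogram(values, edges):
    """Normalized histogram; bins are [edges[i], edges[i+1]), last bin closed."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    return [c / total for c in counts] if total else [0.0] * len(counts)


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits (0 = identical, 1 = disjoint)."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def duplicate_rate(lines):
    """Fraction of generated lines that repeat an earlier generated line."""
    return 1 - len(set(lines)) / len(lines) if lines else 0.0


def copy_rate(generated, training):
    """Fraction of generated lines that exactly match a training example."""
    seen = set(training)
    return sum(ln in seen for ln in generated) / len(generated) if generated else 0.0


if __name__ == "__main__":
    edges = [0.0, 0.5, 1.0, 2.0]  # illustrative bin edges in seconds
    real = histogram(inter_arrivals([0.0, 0.4, 1.1, 1.5, 2.9]), edges)
    synthetic = histogram(inter_arrivals([0.0, 0.3, 0.9, 1.6, 2.8]), edges)
    print(js_divergence(real, synthetic))
```

A low divergence alone does not show that traffic is valid; it only compares timing distributions, which is why the study pairs it with decodability and protocol-compliance checks.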

When more “thinking” does not help

The study also asks whether giving the reasoning‑oriented GPT‑5 more internal “think time” improves network realism. Dialing GPT‑5’s hidden reasoning effort from low to high, the authors find that greater effort makes the model slower and more verbose but does not bring its traffic closer to reality, and sometimes moves it further away. GPT‑4.1, a faster non‑reasoning model, consistently matches or outperforms GPT‑5 on key quality metrics while using fewer computational resources. Over extended 30‑minute simulations, both LLMs maintain correct ZigBee behavior, but classical RNN and GAN baselines drift badly in timing and protocol correctness.

What this means for safer smart homes

For non‑specialists, the main message is that modern language models can learn not only human conversations but also the “language” of smart‑home devices, generating believable, rule‑following traffic on demand. The work shows that a relatively fast, low‑latency model like GPT‑4.1 can already serve as a high‑fidelity traffic generator for testing and security evaluation, potentially reducing the need to capture sensitive real‑world data. It also highlights that more complex, heavy‑weight reasoning is not always better: for tightly structured technical tasks, simpler, efficient models may be the smarter choice. As the authors release their code and data, this approach could help researchers worldwide stress‑test smart‑home systems, improve intrusion detection, and explore new network designs in a safe, synthetic playground.

Citation: Keleşoğlu, N., Sobczak, Ł. & Domańska, J. When LLMs speak ZigBee: exploring low-latency and reasoning models for network traffic generation. Sci Rep 16, 8036 (2026). https://doi.org/10.1038/s41598-026-37246-y

Keywords: smart home IoT, ZigBee traffic generation, large language models, network security testing, synthetic network data