Clear Sky Science · en

A hierarchical fusion framework for vehicle to grid energy management using predictive intelligence and learning based pricing

Why your car could help keep the lights on

Most people think of an electric car as a cleaner way to get from A to B. This paper explores a bigger idea: what if millions of parked electric vehicles (EVs) could quietly help run the power grid? By timing when cars charge and even letting them send power back, the authors show how smart software can cut electricity costs, ease strain on the grid, and make better use of solar and wind energy.

Cars, plugs, and a two-way street

The starting point is a concept called vehicle‑to‑grid, or V2G. Instead of just drawing power, an EV can also act like a small battery for the grid, charging when electricity is cheap and plentiful and discharging when demand is high. That sounds simple, but in practice it is a juggling act: drivers need their cars ready, prices change hour by hour, and solar and wind power rise and fall with the weather. Today, most systems handle these pieces separately, which leads to missed savings and unnecessary stress on power lines.

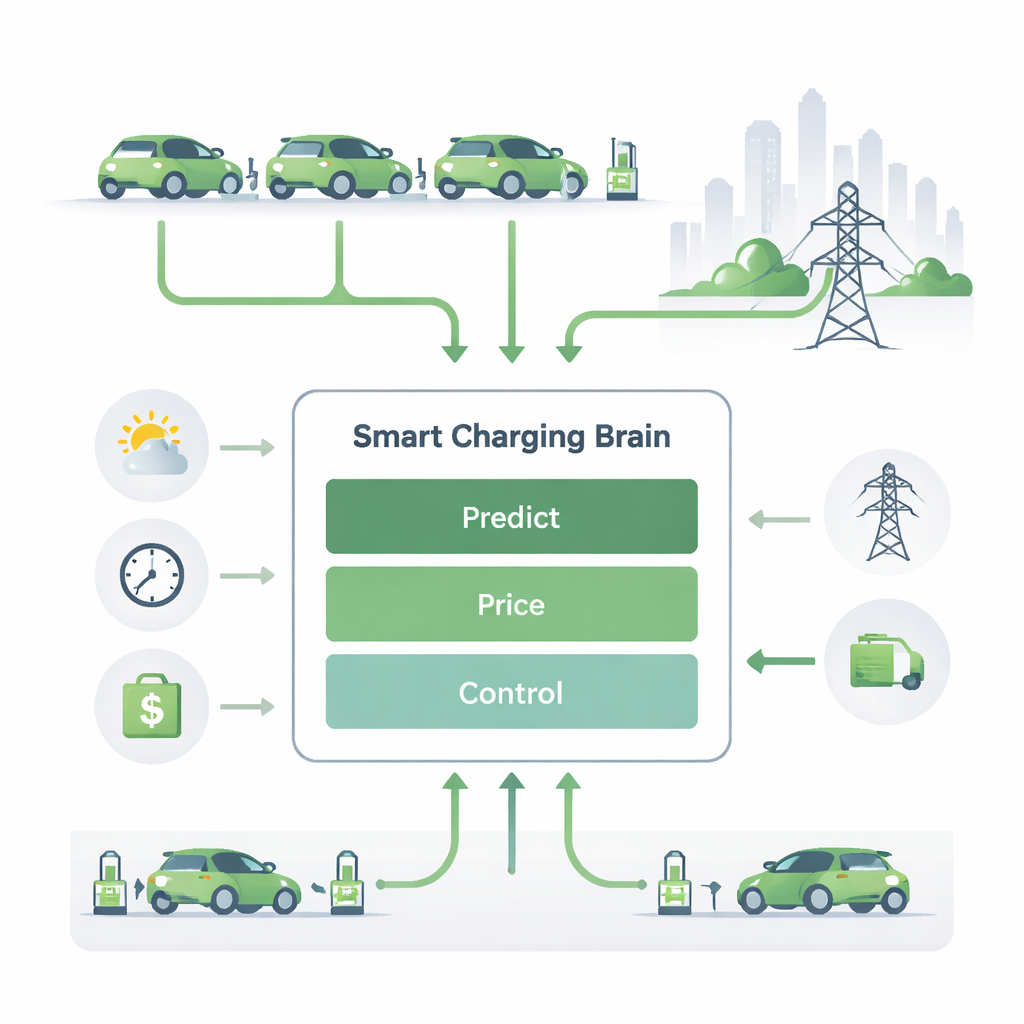

Letting machines look ahead

The first building block in the proposed framework is an artificial intelligence module that looks into the near future. It learns from past patterns of grid demand, weather, renewable output, electricity prices, and driver habits to predict when power will be cheap or expensive, and when cars are likely to be plugged in. Using these forecasts, it draws up a charging plan: fill batteries during low‑demand, low‑price hours, feed power back when demand and prices spike, and otherwise leave the car idle. In simulations, this predictive approach smooths out charging peaks, reduces stress on equipment, and still gets batteries filled on time.
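To make the charging plan concrete, here is a minimal Python sketch of how a price forecast could be turned into an hour-by-hour schedule. It is an illustration, not the authors' method: the function name `plan_from_forecast`, the example prices, and the rule "charge in the cheapest hours, discharge in the priciest" are all assumptions, and the sketch ignores battery level and departure times.

```python
from typing import List


def plan_from_forecast(prices: List[float], n_charge: int, n_discharge: int) -> List[str]:
    """Label each hour 'charge', 'discharge', or 'idle' from forecast prices.

    Hypothetical helper: charges in the n_charge cheapest hours and
    discharges in the n_discharge most expensive hours.
    """
    # Hour indices sorted from cheapest to most expensive forecast price.
    order = sorted(range(len(prices)), key=lambda h: prices[h])
    plan = ["idle"] * len(prices)
    for h in order[:n_charge]:
        plan[h] = "charge"
    for h in order[len(order) - n_discharge:]:
        # Never overwrite an hour already reserved for charging.
        if plan[h] == "idle":
            plan[h] = "discharge"
    return plan


# Made-up forecast for six hours, in currency units per kWh.
forecast = [0.10, 0.08, 0.30, 0.25, 0.12, 0.40]
print(plan_from_forecast(forecast, n_charge=2, n_discharge=2))
```

A real planner would also have to check that the battery is full by the time the driver needs the car, which this sketch leaves out.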

Turning prices into signals, not surprises

The second piece uses ideas from economics to set prices that nudge everyone in a helpful direction. Here, EV owners, grid operators, and the power market are treated as players in a game. Each car can place a simple “bid” for when it wants to charge or sell energy, based on its battery level and current prices. The pricing layer then adjusts rates in real time so that, when the grid is under pressure, selling power from cars becomes more attractive, and when the grid is relaxed, charging becomes cheap. This approach rewards drivers for flexibility, discourages everyone from charging at the same time, and keeps overall demand within safe limits.
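The idea of prices that respond to grid pressure can be sketched in a few lines of Python. This is a deliberately simple stand-in, not the game-theoretic model in the paper: the linear rule, the names `adjust_price` and `simple_bid`, and every threshold and number below are assumptions chosen for illustration.

```python
def adjust_price(base_price: float, load_kw: float, target_kw: float,
                 sensitivity: float = 0.5, floor: float = 0.5, cap: float = 2.0) -> float:
    """Raise the price when load exceeds the target, lower it when load is below."""
    stress = (load_kw - target_kw) / target_kw           # e.g. 0.2 = 20% over target
    multiplier = 1.0 + sensitivity * stress
    multiplier = min(cap, max(floor, multiplier))        # keep prices within set bounds
    return base_price * multiplier


def simple_bid(battery_level: float, price: float, base_price: float) -> str:
    """Hypothetical per-car bid from battery level (0 to 1) and the current price."""
    if battery_level > 0.6 and price > base_price:
        return "sell"      # plenty of charge and power is valuable
    if battery_level < 0.9 and price < base_price:
        return "charge"    # room in the battery and power is cheap
    return "wait"


base = 0.20
stressed = adjust_price(base, load_kw=1200, target_kw=1000)   # grid under pressure
relaxed = adjust_price(base, load_kw=800, target_kw=1000)     # grid relaxed
print(round(stressed, 3), simple_bid(0.8, stressed, base))
print(round(relaxed, 3), simple_bid(0.3, relaxed, base))
```

The floor and cap stop the price from swinging to extremes, which is one simple way to keep signals from turning into surprises.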

Teaching the system to learn from experience

The third layer is a learning‑by‑doing controller based on reinforcement learning, a branch of artificial intelligence also used to train game‑playing programs and robots. The controller “sees” the current state of each car and the grid (battery level, demand, price, and time) and must choose to charge, discharge, or wait. It receives rewards for helpful choices, such as charging when power is cheap or discharging during shortages, and penalties for wasteful actions. Over many simulated days, it discovers strategies that save money and support the grid, even when conditions change unexpectedly, such as a sudden drop in wind power.
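The reward-and-penalty loop can be illustrated with tabular Q-learning, one standard reinforcement learning method. The summary does not say which algorithm the authors use, so treat this Python sketch as an assumption throughout: the simplified state (a battery bucket and a price level), the reward values, and the names `reward` and `q_update` are all hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, Tuple

ACTIONS = ("charge", "discharge", "wait")
State = Tuple[int, str]   # (battery bucket 0-4, price level "low" or "high")


def reward(state: State, action: str) -> float:
    """Illustrative rewards: pay for helpful choices, penalize wasteful ones."""
    battery, price = state
    if action == "charge":
        return 1.0 if price == "low" else -1.0
    if action == "discharge":
        if battery == 0:
            return -2.0                      # nothing left to give back
        return 1.0 if price == "high" else -1.0
    return 0.0                               # waiting is neutral


def q_update(q: DefaultDict[Tuple[State, str], float], state: State, action: str,
             r: float, next_state: State, alpha: float = 0.1, gamma: float = 0.9) -> None:
    """Standard Q-learning update toward reward plus discounted best next value."""
    best_next = max(q[(next_state, a)] for a in ACTIONS)
    q[(state, action)] += alpha * (r + gamma * best_next - q[(state, action)])


q: DefaultDict[Tuple[State, str], float] = defaultdict(float)
s, s_next = (2, "low"), (3, "high")
for _ in range(2):                           # same experience seen twice
    q_update(q, s, "charge", reward(s, "charge"), s_next)
print(round(q[(s, "charge")], 3))
```

Repeated over many simulated days and many different states, updates like this gradually raise the scores of actions that tend to pay off, which is how the controller's strategy emerges.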

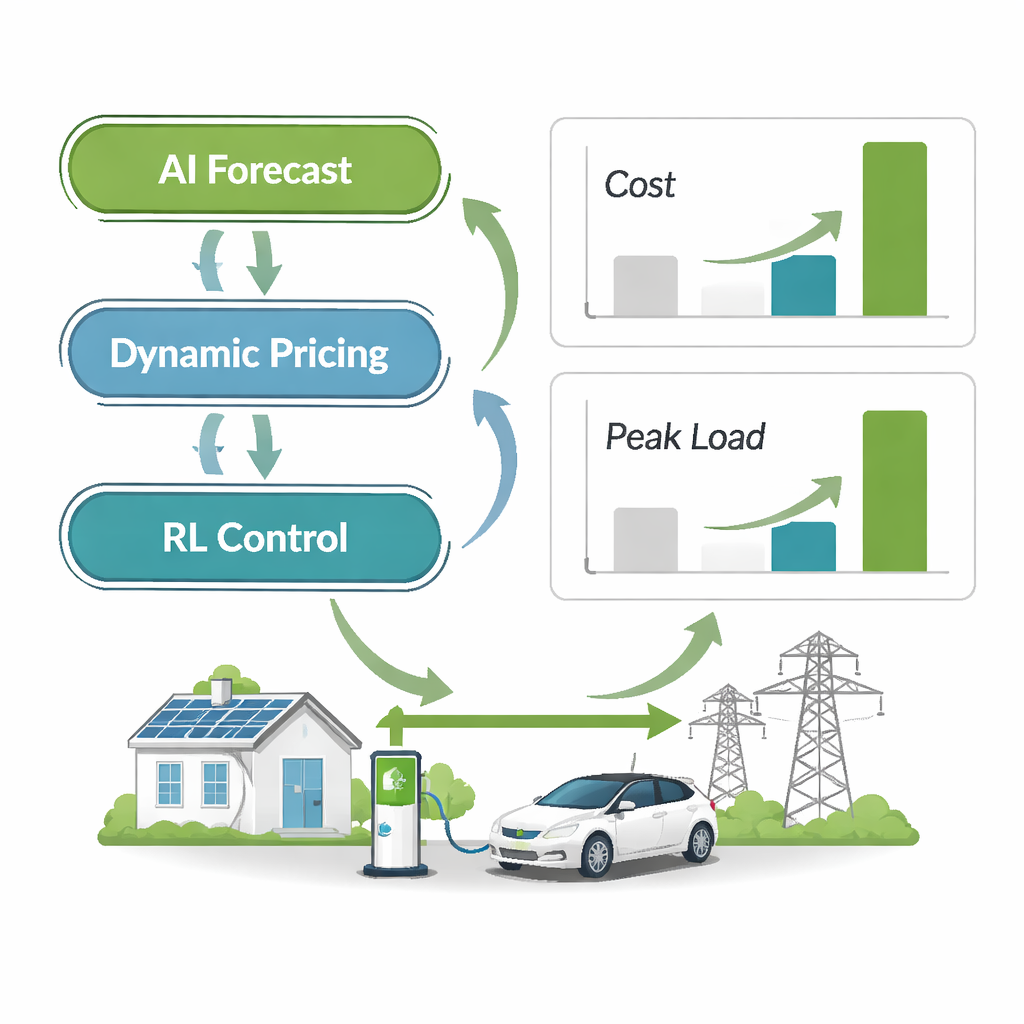

Stacking brains instead of choosing one

The key advance of this work is that these three methods do not run in isolation. The forecasting layer limits the range of prices the game‑theory module is allowed to set, so prices remain realistic. Those prices, in turn, become part of what the learning controller uses to decide its next move. This “hierarchical fusion” creates a single, coordinated decision pipeline instead of three competing systems. When tested against other popular approaches, including advanced forecasting alone, multi‑agent learning, and standard optimization techniques, the fused system consistently delivered lower charging costs and smoother grid loads, while keeping driver waiting times short.
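The way the three layers hand results to one another can be sketched as a short Python pipeline. Everything here is an assumption made for illustration: the 25% margin around the forecast, the function names, and the fixed rule in `decide`, which merely stands in for the learned controller.

```python
from typing import Tuple


def bounded_price(proposed: float, forecast_price: float, margin: float = 0.25) -> float:
    """Layer 1 constrains layer 2: clamp the proposed price near the forecast."""
    low = forecast_price * (1.0 - margin)
    high = forecast_price * (1.0 + margin)
    return min(high, max(low, proposed))


def decide(battery_level: float, price: float, forecast_price: float) -> str:
    """Stand-in for the learned controller: a fixed rule instead of a trained policy."""
    if price > forecast_price and battery_level > 0.5:
        return "discharge"
    if price < forecast_price and battery_level < 0.9:
        return "charge"
    return "wait"


def pipeline_step(battery_level: float, forecast_price: float,
                  proposed_price: float) -> Tuple[float, str]:
    """One pass: forecast -> bounded price -> controller action."""
    price = bounded_price(proposed_price, forecast_price)
    return price, decide(battery_level, price, forecast_price)


# The pricing layer proposes 0.50, but the forecast of 0.20 caps what is allowed.
print(pipeline_step(battery_level=0.8, forecast_price=0.20, proposed_price=0.50))
```

The point of the sketch is the ordering: each stage consumes the output of the stage before it, so no layer acts on information the others have not vetted.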

What it means for drivers and the grid

For a layperson, the takeaway is straightforward: with the right software, parked electric cars can quietly earn money and help keep the grid stable, without drivers having to think about it. The study shows that combining prediction, smart pricing, and adaptive control can cut bills, reduce peaks in electricity use, and make better use of clean energy. The results are based on simulations, and more work is needed on real‑world trials and battery wear. Even so, the framework points to a future where your car is not just transportation but also a small, intelligent power plant that cooperates with millions of others to support a more reliable and sustainable energy system.

Citation: Nandagopal, V., Bhaskar, K., Periakaruppan, S. et al. A hierarchical fusion framework for vehicle to grid energy management using predictive intelligence and learning based pricing. Sci Rep 16, 6019 (2026). https://doi.org/10.1038/s41598-026-37243-1

Keywords: vehicle-to-grid, smart charging, electric vehicles, dynamic pricing, reinforcement learning