Clear Sky Science

MRI neuroimaging-based Alzheimer’s disease stage classification using deep neural network with convolutional block attention module and GAN-style noise injection

Why early brain scans matter

Alzheimer’s disease slowly steals memory and independence, often long before symptoms are obvious. Families, doctors, and patients all want a way to spot the illness early, when treatments and lifestyle changes can do the most good. This study describes a new computer system that reads routine brain scans and sorts people into four stages of Alzheimer’s-related memory loss, reaching about 98 percent accuracy in the authors’ cross-validation tests. Such a system could give clinicians a faster, cheaper, and more consistent second opinion.

A closer look inside the brain

The researchers focus on MRI scans, which show detailed pictures of brain structure without surgery or ionizing radiation. They use data from a large international project called the Alzheimer’s Disease Neuroimaging Initiative (ADNI), in which volunteers between 55 and 90 years old regularly undergo memory testing and brain imaging. From these scans, the team extracts 2D slices of the brain and sorts them into four groups: people with no dementia, and those with very mild, mild, or moderate dementia. This reflects how Alzheimer’s typically progresses in the real world, where small changes in memory and thinking gradually worsen over time.

Teaching a computer to see subtle changes

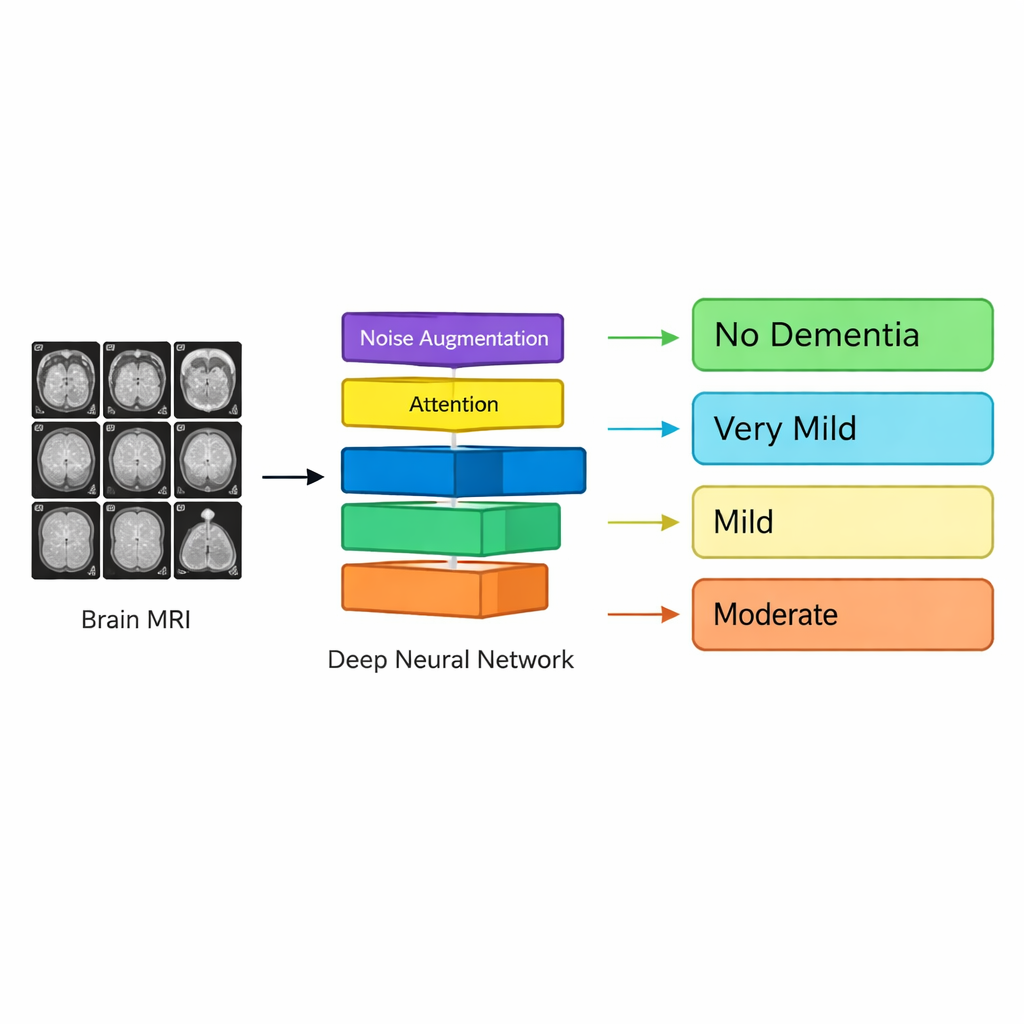

Instead of asking human experts to hand-pick brain regions and features, the authors train a deep-learning system—similar in spirit to those used for face recognition or self-driving cars—to learn directly from the images. Their model, called Neuro_CBAM-ADNet, is a type of convolutional neural network that excels at recognizing patterns in pictures. As the MRI image passes through the network, it is processed by stacked layers that detect edges, textures, and more complex shapes until the system can distinguish patterns that correlate with different dementia stages, many of which are too subtle for the naked eye.
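To make the idea of a layer that "detects edges" concrete, here is a minimal sketch of a single convolution filter in plain Python. It is not the authors' architecture: the toy image, the Sobel-like kernel, and the function names are illustrative assumptions. A real network learns many such kernels from data and stacks them in layers.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (both lists of rows), stride 1, no padding.

    Like CNN layers, this computes a cross-correlation (the kernel is not flipped).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Hypothetical 5x6 "slice": dark tissue (0) on the left, bright tissue (1) on the right.
toy_slice = [[0, 0, 0, 1, 1, 1] for _ in range(5)]

# Sobel-like kernel that responds where brightness increases from left to right.
vertical_edge = [[-1, 0, 1],
                 [-2, 0, 2],
                 [-1, 0, 1]]

edge_map = relu(conv2d_valid(toy_slice, vertical_edge))
for row in edge_map:
    print(row)  # each row is [0.0, 4.0, 4.0, 0.0]: strong response only at the boundary
```

The filter stays silent over uniform regions and fires where dark meets bright. Deeper layers combine many such responses into textures and shapes.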

Helping the computer focus on what matters

A key innovation is an “attention” mechanism, the convolutional block attention module (CBAM) named in the paper’s title, which steers the network toward the most informative parts of the scan. In practical terms, the model learns which locations in the image and which of its internal features matter most as Alzheimer’s progresses, such as areas related to memory and thinking, while down-weighting less relevant background. The researchers also tackle a common problem in medical data: some stages of disease are much rarer than others, so a model trained on the raw data might become biased toward the majority class. To counter this, they generate extra training images for underrepresented groups by adding carefully controlled noise to existing scans (the “GAN-style noise injection” of the title), mimicking the natural variability found in real patients without distorting the underlying anatomy.
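The class-balancing idea can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the authors' procedure: it uses simple zero-mean Gaussian noise, a hypothetical noise level `sigma`, pixel values scaled to the range 0 to 1, and made-up function names. The summary does not give the exact noise model or settings used in the paper.

```python
import random
from collections import Counter


def add_noise(image, sigma, rng):
    """Return a noisy copy of `image` (list of rows), clipped to [0, 1]."""
    return [[min(1.0, max(0.0, px + rng.gauss(0.0, sigma))) for px in row]
            for row in image]


def balance_with_noise(images, labels, sigma=0.02, seed=0):
    """Top up every minority class with noisy copies until all classes match the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_images, out_labels = list(images), list(labels)
    for cls, n in counts.items():
        # Only original images of this class are used as sources for new samples.
        pool = [img for img, lab in zip(images, labels) if lab == cls]
        for _ in range(target - n):
            out_images.append(add_noise(rng.choice(pool), sigma, rng))
            out_labels.append(cls)
    return out_images, out_labels


# Hypothetical imbalanced toy set of 2x2 "slices" with mid-gray pixels.
labels = (["no dementia"] * 6 + ["very mild"] * 3 +
          ["mild"] * 2 + ["moderate"] * 1)
images = [[[0.5, 0.5], [0.5, 0.5]] for _ in labels]

balanced_images, balanced_labels = balance_with_noise(images, labels)
print(Counter(balanced_labels))  # every class now has 6 samples
```

A small `sigma` keeps the added variation subtle, in the spirit of not distorting the anatomy. In practice such augmentation is usually applied only to the training portion of the data, so that test images are never noisy copies of training images.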

Putting the system to the test

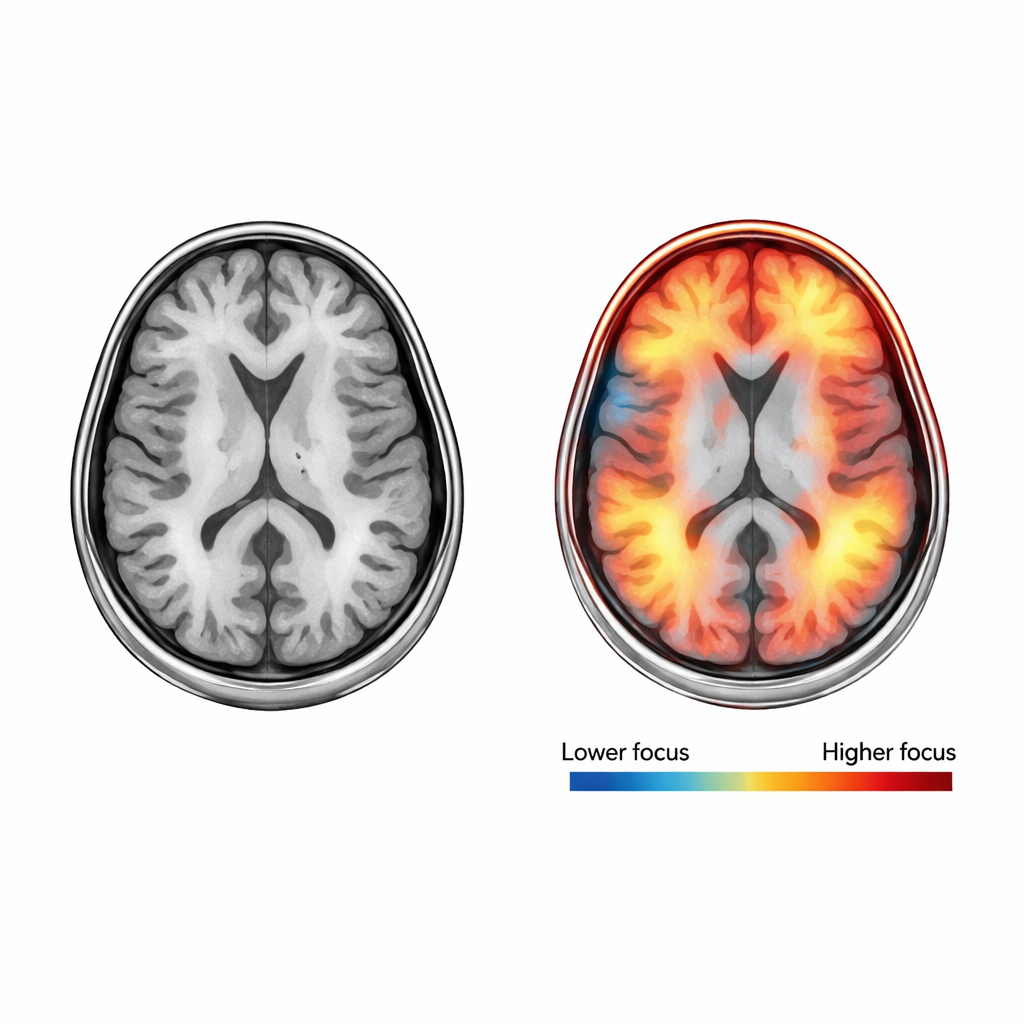

To check how reliably their system works, the team repeatedly trains and tests it on different subsets of the data, a process called cross-validation. Across five independent rounds, Neuro_CBAM-ADNet correctly classifies the dementia stage about 98 percent of the time, with similarly high scores for sensitivity (catching affected cases), precision (avoiding false alarms), and a combined measure called the F1-score. The system is particularly strong at telling apart clearly different groups, such as moderate dementia versus no dementia, and most mistakes occur between neighboring stages like no dementia and very mild dementia, where even specialists often disagree. Additional tools called Grad-CAM heatmaps show where in the brain the model is “looking” when it makes each decision, offering visual clues that can be compared with known disease markers.
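The evaluation terms above can be made concrete with a short plain-Python sketch. It shows one way to split data into five folds and to compute accuracy, sensitivity (recall), precision, and F1-score from predictions. The stratified split, the macro averaging across classes, the function names, and the toy labels are all assumptions for illustration; the summary does not say which variants the authors used.

```python
from collections import defaultdict


def stratified_folds(labels, k=5):
    """Assign sample indices to k folds so each class is spread evenly across folds."""
    by_class = defaultdict(list)
    for idx, lab in enumerate(labels):
        by_class[lab].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    return folds


def macro_scores(y_true, y_pred):
    """Accuracy plus precision, recall, and F1 averaged equally over classes."""
    classes = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    precisions, recalls, f1s = [], [], []
    for c in classes:
        tp = sum(t == c and p == c for t, p in pairs)  # correctly flagged as c
        fp = sum(t != c and p == c for t, p in pairs)  # false alarms for c
        fn = sum(t == c and p != c for t, p in pairs)  # missed cases of c
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    n = len(classes)
    return {
        "accuracy": sum(t == p for t, p in pairs) / len(pairs),
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,
    }


# Toy predictions: one "no dementia" slice is mistaken for the neighboring stage.
y_true = ["none"] * 4 + ["very mild"] * 4 + ["mild"] * 2
y_pred = ["none"] * 3 + ["very mild"] * 5 + ["mild"] * 2
scores = macro_scores(y_true, y_pred)
print({name: round(value, 3) for name, value in scores.items()})

# Each of the 5 folds would serve once as the held-out test set.
demo_folds = stratified_folds(["a"] * 10 + ["b"] * 5, k=5)
```

In this toy case a single confusion between neighboring stages lowers recall for one class and precision for the other, which is why the article reports several measures rather than accuracy alone.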

What this means for patients and doctors

In plain terms, this work shows that a well-designed AI system can read brain scans and sort people into four stages of Alzheimer’s-related decline with a level of consistency that rivals, and in some cases exceeds, earlier approaches. It does this while pointing to the brain regions driving its decisions, which may build trust among clinicians. Although the tool still needs broader testing across different hospitals and scanners, it suggests a future in which routine MRI exams, combined with transparent AI, could help flag early brain changes, support more confident diagnoses, and guide treatment decisions before the disease has progressed too far.

Citation: Kumar, S., Shastri, S., Mansotra, V. et al. MRI neuroimaging-based Alzheimer’s disease stage classification using deep neural network with convolutional block attention module and GAN-style noise injection. Sci Rep 16, 6946 (2026). https://doi.org/10.1038/s41598-026-37226-2

Keywords: Alzheimer’s disease, brain MRI, deep learning, early diagnosis, medical imaging AI