Clear Sky Science

Different BI-RADS breast cancer diagnosis using MobileNetV1 and vision transformer based on explainable artificial intelligence (XAI)

Seeing Cancer Risk Sooner

Breast cancer is most treatable when it is found early, yet reading mammograms is difficult, time‑pressured work. This study describes a new artificial intelligence (AI) system designed not only to spot signs of cancer on mammograms with very high accuracy, but also to show doctors which areas of the breast image most influenced its decisions. By combining two complementary image‑analysis networks, one focused on fine detail and one on the overall picture, the system aims to give radiologists fast, reliable, and transparent second opinions.

Why Reading Mammograms Is So Hard

Mammograms are X‑ray images of the breast used to check for early signs of cancer. Radiologists assign each exam a BI‑RADS score, a standardized scale that ranges from normal findings to clearly cancerous ones. In dense breasts, where there is a lot of glandular tissue, suspicious spots can be hidden or look similar to harmless structures. Many previous computer‑aided tools either focused only on simple yes‑or‑no cancer decisions, struggled with the full range of BI‑RADS categories, or worked like a black box, leaving doctors unsure why a particular decision was made.

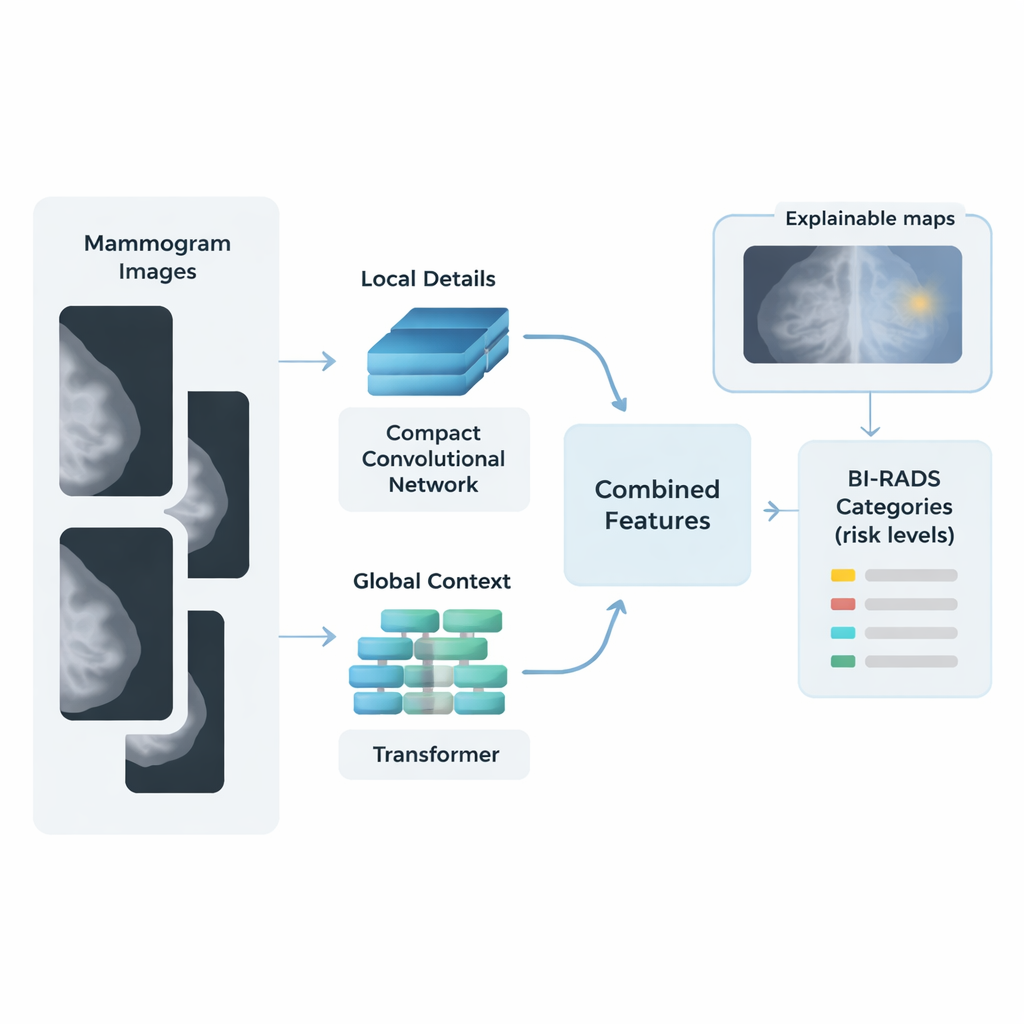

Blending Two Ways of “Looking” at an Image

The researchers built a hybrid AI framework that mimics how a careful human reader might scan a mammogram: first by examining small details, then by considering the big picture. One part of the system, based on a compact network called MobileNetV1, concentrates on local details such as tiny calcifications and sharp lesion borders. A second part, a vision transformer, breaks the image into patches and analyzes how patterns relate across the entire breast, capturing overall tissue structure and subtle distortions. The features from these two “streams” are then merged into a single, rich description of each image.
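This summary does not spell out exactly how the two streams are merged. A common choice, and the one assumed in the minimal Python sketch below, is to scale each stream's feature vector to unit length and then place the two side by side (concatenation). The sizes used (1,024 pooled MobileNetV1 features and 768 vision‑transformer features) are typical defaults chosen for illustration, and the random arrays stand in for real network outputs.

```python
import numpy as np

def l2_normalize(features: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Scale each row (one image's feature vector) to unit length."""
    norms = np.linalg.norm(features, axis=1, keepdims=True)
    return features / np.maximum(norms, eps)

def fuse_streams(local_feats: np.ndarray, global_feats: np.ndarray) -> np.ndarray:
    """Concatenate per-image local (CNN) and global (ViT) feature vectors.

    local_feats:  shape (n_images, d_local), e.g. pooled MobileNetV1 features
    global_feats: shape (n_images, d_global), e.g. a vision-transformer embedding
    Returns shape (n_images, d_local + d_global).
    Assumption: normalize-then-concatenate is an illustrative fusion rule,
    not necessarily the one used in the study.
    """
    if local_feats.shape[0] != global_feats.shape[0]:
        raise ValueError("both streams must describe the same images")
    # Normalizing each stream first keeps one stream from dominating
    # simply because its raw values happen to be larger.
    return np.concatenate([l2_normalize(local_feats), l2_normalize(global_feats)], axis=1)

rng = np.random.default_rng(0)
local = rng.normal(size=(4, 1024))   # stand-in for MobileNetV1 output (hypothetical)
global_ = rng.normal(size=(4, 768))  # stand-in for vision-transformer output (hypothetical)
fused = fuse_streams(local, global_)
print(fused.shape)  # (4, 1792)
```

Concatenation keeps every feature from both streams, leaving it to the later compression and classification steps to decide which ones matter.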

Cleaning, Balancing, and Simplifying the Data

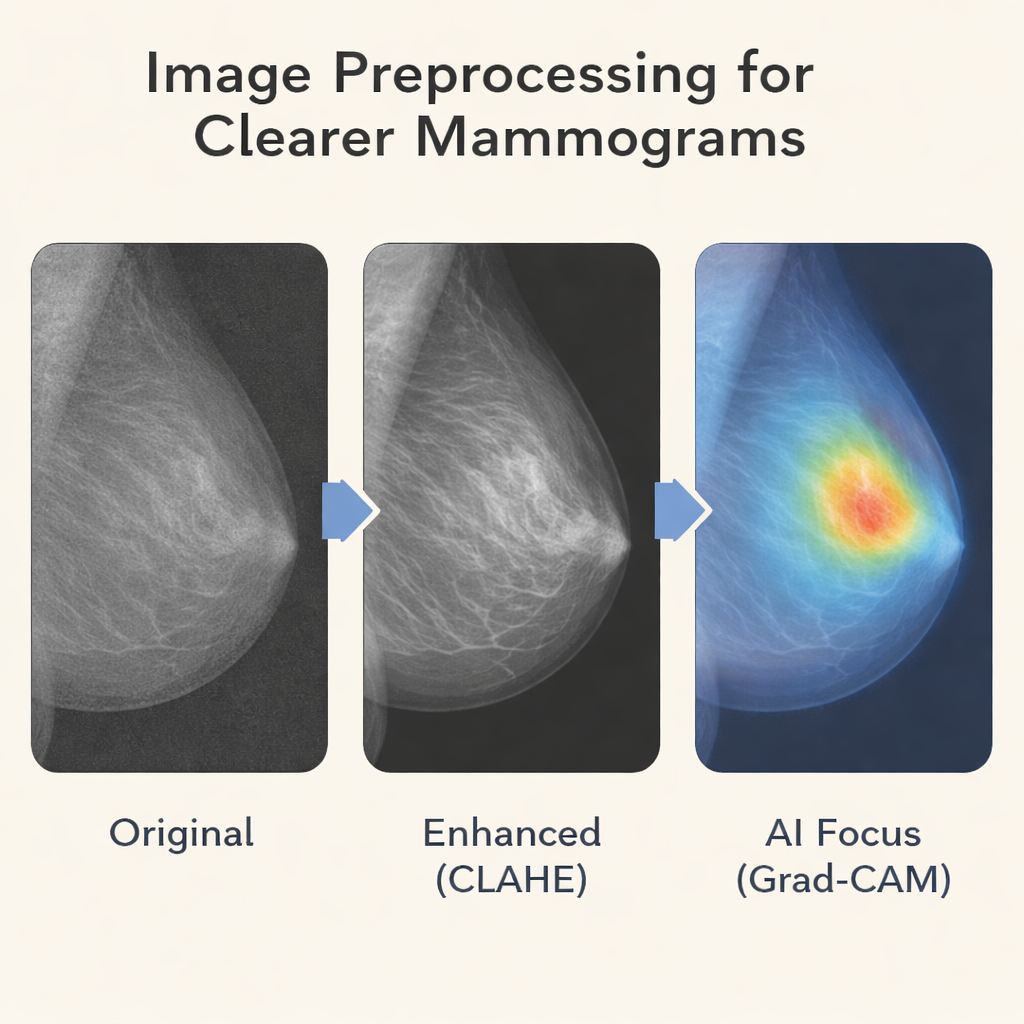

Before images enter the AI pipeline, they go through several preparation steps. The team improves contrast using a method that brightens subtle structures without exaggerating noise, making faint spots easier to see. Images are resized and normalized so the system sees them in a consistent way. Because some BI‑RADS categories, such as clearly malignant cases, are relatively rare, the authors use data‑augmentation tricks like small rotations and flips and apply class‑aware training so that less common categories still carry weight during learning. After the two streams extract features, a statistical technique called principal component analysis (PCA) compresses them, keeping the combinations of features that vary most from image to image and discarding redundant ones, which cuts down complexity.
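Two of these steps are easy to show in a few lines. The hedged sketch below assumes that "class‑aware training" means weighting each category inversely to how often it appears, using the rule n_samples / (n_classes * count) that scikit-learn calls class_weight="balanced", and it implements PCA directly with NumPy's singular value decomposition. The label counts and feature matrix are invented stand‑ins, not the study's data, and the contrast‑enhancement and augmentation steps are not shown.

```python
import numpy as np

def balanced_class_weights(labels: np.ndarray) -> dict:
    """Weight each class by n_samples / (n_classes * n_in_class).

    Rare classes get weights above 1 and common classes below 1, so each
    class contributes roughly equally to a weighted training loss.
    """
    classes, counts = np.unique(labels, return_counts=True)
    n_samples, n_classes = labels.size, classes.size
    return {int(c): n_samples / (n_classes * k) for c, k in zip(classes, counts)}

def pca_fit_transform(X: np.ndarray, n_components: int):
    """Project rows of X onto the top principal components via SVD.

    Returns the projected data and the fraction of total variance that
    each kept component explains.
    """
    Xc = X - X.mean(axis=0)  # PCA operates on mean-centred data
    # Rows of Vt are the principal directions, ordered by singular value.
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = (S ** 2) / np.sum(S ** 2)
    return Xc @ Vt[:n_components].T, explained[:n_components]

rng = np.random.default_rng(0)
# Made-up counts for four BI-RADS groups (hypothetical, for illustration only).
labels = np.array([0] * 50 + [1] * 30 + [2] * 15 + [3] * 5)
weights = balanced_class_weights(labels)
print(weights[3])  # 5.0: the rarest group gets the largest weight

X = rng.normal(size=(100, 50))  # stand-in for fused feature vectors
Z, explained = pca_fit_transform(X, n_components=10)
print(Z.shape)  # (100, 10)
```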

From Features to Risk Scores, With Explanations

For the final step, instead of relying on a heavy, opaque neural‑network classifier, the authors use many simple logistic regression models combined in a “bagging” ensemble, in which each model is trained on a different random resample of the training images. Each model offers a straightforward way to connect image features to BI‑RADS risk levels, and their majority vote provides stability and resistance to overfitting on the relatively modest dataset. Tested on more than 6,000 mammograms from the King Abdulaziz University Breast Cancer dataset, the hybrid system achieved over 99% accuracy, sensitivity, and specificity across the four key BI‑RADS categories it targeted: normal, probably benign, suspicious, and malignant.
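The bagging idea can be sketched from scratch with NumPy: fit several multinomial logistic regressions, each on a bootstrap resample (drawn with replacement) of the training set, then let them vote. This is an illustrative reconstruction, not the authors' code; the number of models, the learning rate, and the assumption that inputs are standardized (as centred PCA output would roughly be) are choices made for this example. The toy data are synthetic clusters, and the printed accuracy is measured on training data, so it says nothing about the study's results.

```python
import numpy as np

def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=1, keepdims=True)  # shift each row for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def fit_logreg(X, y, n_classes, lr=0.1, epochs=500, l2=1e-3):
    """Multinomial logistic regression fitted by full-batch gradient descent."""
    n, d = X.shape
    W = np.zeros((d, n_classes))
    b = np.zeros(n_classes)
    Y = np.eye(n_classes)[y]  # one-hot targets
    for _ in range(epochs):
        G = (softmax(X @ W + b) - Y) / n  # gradient of mean cross-entropy w.r.t. logits
        W -= lr * (X.T @ G + l2 * W)      # small L2 penalty on the weights
        b -= lr * G.sum(axis=0)
    return W, b

def fit_bagged_logreg(X, y, n_classes, n_models=15, seed=0):
    """Fit each model on its own bootstrap resample of the rows of X."""
    rng = np.random.default_rng(seed)
    models = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))  # sample rows with replacement
        models.append(fit_logreg(X[idx], y[idx], n_classes))
    return models

def predict_majority(models, X, n_classes):
    """Each model casts one hard vote per image; the most common class wins."""
    votes = np.stack([np.argmax(X @ W + b, axis=1) for W, b in models])  # (n_models, n_samples)
    counts = np.stack([(votes == c).sum(axis=0) for c in range(n_classes)])  # (n_classes, n_samples)
    return counts.argmax(axis=0)  # ties go to the lower class index

# Toy usage: four well-separated clusters standing in for four BI-RADS groups.
rng = np.random.default_rng(1)
centers = np.array([[0.0, 0.0], [4.0, 0.0], [0.0, 4.0], [4.0, 4.0]])
y = rng.integers(0, 4, size=400)
X = centers[y] + rng.normal(scale=0.5, size=(400, 2))
X = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize, as this sketch assumes
models = fit_bagged_logreg(X, y, n_classes=4)
pred = predict_majority(models, X, n_classes=4)
print((pred == y).mean())
```

In practice a library implementation is the more likely choice, for example scikit-learn's BaggingClassifier wrapping LogisticRegression. Note that BaggingClassifier averages predicted probabilities when the base model provides them, rather than counting hard votes as this sketch does.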

Letting Doctors See What the AI Sees

To make its decisions understandable, the system employs explainable AI techniques known as Grad‑CAM and Grad‑CAM++. These produce colored heatmaps overlaid on the mammogram, highlighting the regions that most influenced the predicted BI‑RADS score. In malignant cases, the highlighted areas typically align with masses or clusters of calcifications noted by expert radiologists; in normal images, there is little or no focused activation. This visual feedback helps clinicians judge whether the model is paying attention to medically meaningful features and can reveal why certain borderline cases—such as dense tissue that mimics a lesion—are difficult even for experts.
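Grad‑CAM's core computation is short: it averages the gradient of the predicted class score over each feature map of a chosen network layer to get that map's importance weight, adds up the maps using those weights, and keeps only the positive part. The sketch below assumes the activations and gradients have already been extracted with a deep‑learning framework's automatic differentiation; which layer the authors used, and the upsampling of the map to mammogram size, are not covered. Grad‑CAM++ replaces the simple gradient average with a weighting built from higher‑order gradient terms and is not shown.

```python
import numpy as np

def grad_cam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Compute a Grad-CAM heatmap for one layer and one target class.

    activations: feature maps A^k, shape (K, H, W)
    gradients:   d(class score)/dA^k, same shape (assumed supplied by autograd)
    Returns an (H, W) map scaled to [0, 1].
    """
    # alpha_k = spatial mean of the gradient for channel k (channel importance)
    alphas = gradients.mean(axis=(1, 2))  # shape (K,)
    # Weighted sum over channels, then ReLU to keep only positive evidence
    cam = np.maximum(np.tensordot(alphas, activations, axes=1), 0.0)  # shape (H, W)
    peak = cam.max()
    return cam / peak if peak > 0 else cam

A = np.zeros((2, 3, 3))
A[0, 1, 1] = 1.0  # channel 0 responds at the centre
A[1, 0, 0] = 1.0  # channel 1 responds in a corner
# Channel 0 raises the class score; channel 1 lowers it.
dA = np.stack([np.ones((3, 3)), -np.ones((3, 3))])
heat = grad_cam(A, dA)
print(heat)  # only the centre cell is highlighted
```

The ReLU step is why normal images can show little activation: regions whose features only push the score down are zeroed out rather than highlighted.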

What This Could Mean for Patients

The study shows that, on a single clinical dataset, this dual‑stream, explainable AI system can classify mammograms into multiple risk levels with accuracy comparable to, and in some respects exceeding, many previous methods. While it still needs to be tested on more diverse populations and different hospitals, the approach points toward AI tools that are not only highly accurate, but also fast enough for busy clinics and transparent enough to earn the trust of radiologists and patients. In practice, such systems could act as an extra pair of expert eyes—flagging subtle findings, reducing missed cancers, and supporting clearer, more confident conversations about breast‑cancer risk.

Citation: Abdelsabour, I., Elgarayhi, A., Sallah, M. et al. Different BI-RADS breast cancer diagnosis using MobileNetV1 and vision transformer based on explainable artificial intelligence (XAI). Sci Rep 16, 7190 (2026). https://doi.org/10.1038/s41598-026-37199-2

Keywords: breast cancer, mammography, artificial intelligence, vision transformer, explainable AI