Clear Sky Science

End-to-end emergency response protocol for tunnel accidents augmentation with reinforcement learning

Why smarter tunnel rescues matter

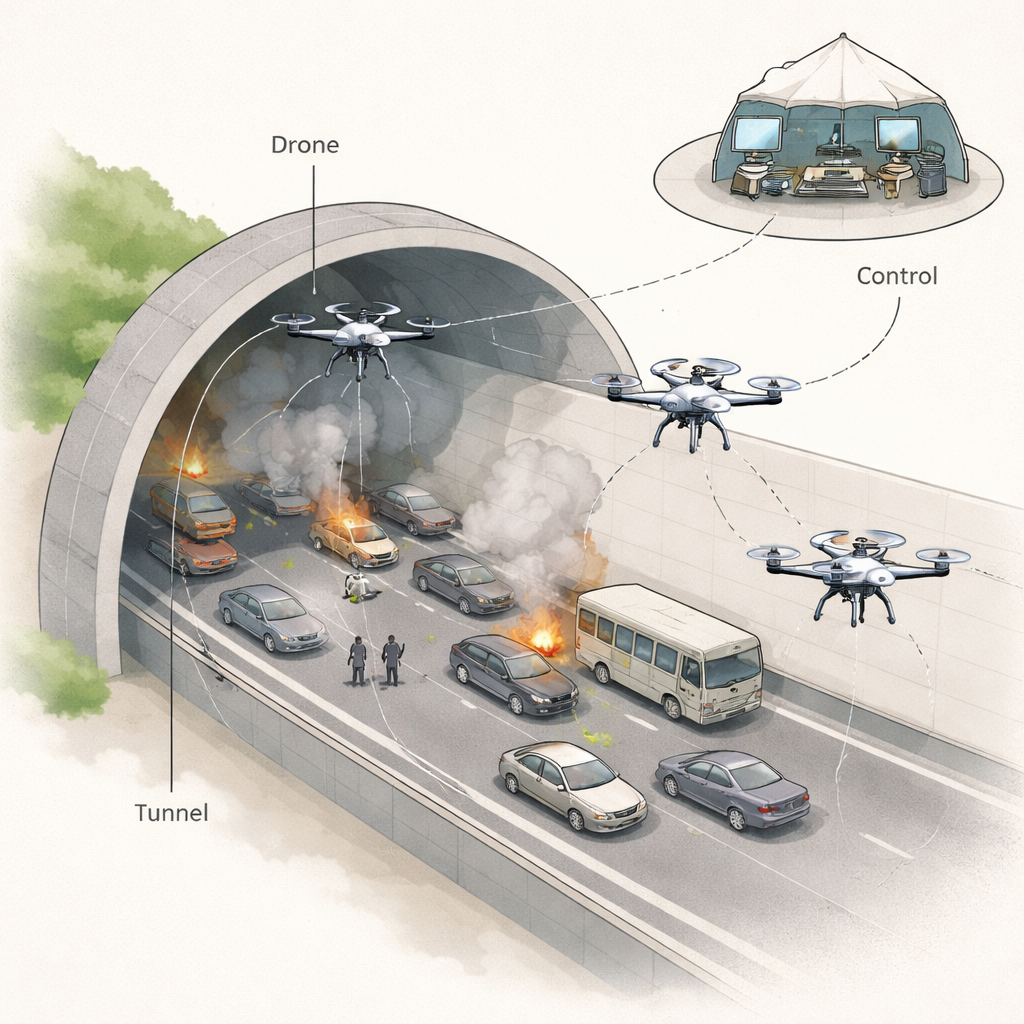

When disaster strikes in a road tunnel—whether from a crash, fire, or structural collapse—people can be trapped in a long, smoky, maze‑like tube with very few exits. Human rescuers must rush in just as visibility drops, temperatures rise, and debris blocks the way. This study explores how small flying robots, or drones, guided by a clever learning strategy, could become fast, reliable helpers in these dangerous situations, finding victims and charting safe paths while keeping human teams out of the worst hazards.

Dangerous underground bottlenecks

Modern cities rely on tunnels for highways, trains, and utilities, but the same enclosed design that makes them efficient also makes accidents inside them unusually deadly. Fires spread smoke quickly, toxic gases build up, and narrow passages can clog with crashed vehicles or falling concrete. Traditional rescue teams often enter with limited information, guessing where to go while their radios struggle to work through thick rock and concrete. Past disasters in China and Japan, among others, have shown how hard it is to reach victims in time, highlighting the need for tools that can see and think ahead in ways humans cannot.

Teaching drones to explore and search

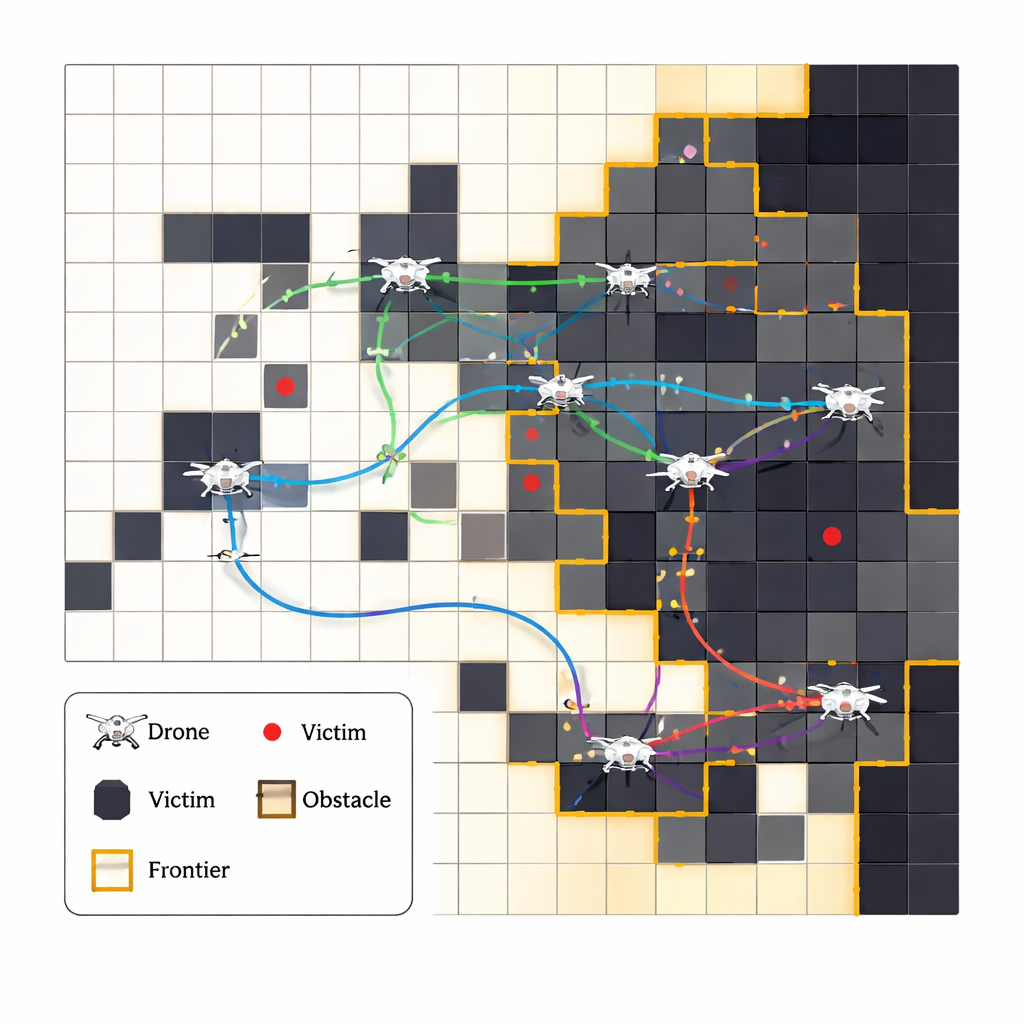

The authors propose a system where multiple autonomous drones work together to explore a damaged tunnel, build a live map, and locate trapped people. Instead of following a fixed, pre‑programmed route, each drone learns from experience using a method called reinforcement learning: it tries actions, sees what happens, and gradually discovers which choices tend to lead to faster rescues and fewer mistakes. The tunnel is represented as a grid of cells, and the drones focus on “frontiers” where known space meets unknown space, steadily pushing that boundary outward. At every step, they choose among a small set of grid movements, updating their internal tables of which moves worked best in similar situations before.
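The learning loop described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes a standard tabular Q-learning update (the summary mentions learned tables but does not name the exact rule), a four-move action set, and hypothetical values for the learning rate `alpha`, the discount `gamma`, and the exploration rate `epsilon`. The helper names `frontier_cells`, `choose_action`, and `q_update` are invented for this example.

```python
import random
from collections import defaultdict

# Assumed action set: four grid moves (the article only says "a small set").
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def frontier_cells(known_free, unknown):
    """Return known free cells that border at least one unknown cell."""
    return {
        (r, c)
        for (r, c) in known_free
        if any((r + dr, c + dc) in unknown for dr, dc in ACTIONS.values())
    }


def choose_action(q_table, state, epsilon, rng):
    """Epsilon-greedy: explore with probability epsilon, else take the best known move."""
    if rng.random() < epsilon:
        return rng.choice(list(ACTIONS))
    return max(ACTIONS, key=lambda a: q_table[(state, a)])


def q_update(q_table, state, action, reward, next_state, alpha=0.5, gamma=0.9):
    """Standard tabular Q-learning update; alpha and gamma are illustrative values."""
    best_next = max(q_table[(next_state, a)] for a in ACTIONS)
    td_target = reward + gamma * best_next
    q_table[(state, action)] += alpha * (td_target - q_table[(state, action)])
    return q_table[(state, action)]


if __name__ == "__main__":
    q = defaultdict(float)  # unseen (state, action) pairs start at 0.0
    rng = random.Random(0)
    q_update(q, state=(0, 0), action="right", reward=1.0, next_state=(0, 1))
    print(choose_action(q, (0, 0), epsilon=0.0, rng=rng))
    print(frontier_cells({(0, 0), (0, 1)}, unknown={(0, 2)}))
```

A table like this needs only a dictionary lookup and a little arithmetic per step, which is the kind of lightweight computation that suits a small onboard computer.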

Getting many robots to cooperate without chatter

Making several drones search the same tunnel at once raises a new challenge: how do they avoid flying into each other or repeatedly scanning the same area, especially when communication may be unreliable? Instead of giving them a central boss or constant radio chatter, the researchers design a simple scoring system that quietly encourages good group behavior. A drone earns a large reward when it discovers a new victim, but it is penalized if it wastes time revisiting the same place, collides with another drone, or “fails” by depleting its battery. Over time, this pushes each drone to favor unexplored regions and steer clear of its teammates, so a kind of cooperation emerges naturally from the shared consequences, even though each one technically learns on its own.
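The scoring system above might look something like the following sketch. The four events come from the description, but every numeric weight here is a hypothetical placeholder, and the names `StepOutcome` and `step_reward` are invented for illustration; the article does not state the actual values used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepOutcome:
    """What happened to one drone during one step (hypothetical structure)."""
    found_new_victim: bool = False
    revisited_cell: bool = False
    collided: bool = False
    battery_depleted: bool = False


# Assumed weights: only the signs and rough ordering follow the article.
REWARD_VICTIM = 100.0
PENALTY_REVISIT = -1.0
PENALTY_COLLISION = -50.0
PENALTY_BATTERY = -100.0


def step_reward(outcome: StepOutcome) -> float:
    """Sum the reward and penalties for everything that happened this step."""
    reward = 0.0
    if outcome.found_new_victim:
        reward += REWARD_VICTIM
    if outcome.revisited_cell:
        reward += PENALTY_REVISIT
    if outcome.collided:
        reward += PENALTY_COLLISION
    if outcome.battery_depleted:
        reward += PENALTY_BATTERY
    return reward


if __name__ == "__main__":
    print(step_reward(StepOutcome(found_new_victim=True)))
    print(step_reward(StepOutcome(revisited_cell=True, collided=True)))
```

Because each drone computes this score from its own observations, no messages need to pass between drones for the penalties to discourage overlap and collisions.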

Borrowing tricks from wolves to avoid getting stuck

Pure trial‑and‑error learning can sometimes get stuck in safe but second‑best habits—like always choosing a familiar corridor rather than trying a risky shortcut. To keep the drones curious, the team borrows ideas from a mathematical model of how grey wolves hunt in packs. This “Grey Wolf Optimization” component nudges the drones to occasionally imitate the best‑performing search patterns seen so far, while still leaving room for exploration. In practice, it shapes which new actions are tried, helping the learning process jump out of dead ends and adapt when the tunnel changes—for example, if part of the route suddenly becomes blocked by fire or debris.
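For readers curious about the wolf-inspired step, here is a sketch of the textbook Grey Wolf Optimizer position update, in which a candidate moves toward the three best solutions found so far (conventionally called alpha, beta, and delta). How the authors couple this update to the drones' learned tables is not described in this summary, so the code shows only the generic update on a numeric vector. The function name `gwo_step` and the example values are assumptions.

```python
import random


def gwo_step(position, alpha, beta, delta, a, rng):
    """One standard Grey Wolf Optimizer update for a single candidate.

    position, alpha, beta, delta: equal-length lists of floats.
    a: control parameter, usually decayed from 2 to 0 over the run;
       larger values allow wider exploration around the leaders.
    """
    new_position = []
    for d, x in enumerate(position):
        candidates = []
        for leader in (alpha, beta, delta):
            r1, r2 = rng.random(), rng.random()
            A = 2.0 * a * r1 - a          # step coefficient in [-a, a]
            C = 2.0 * r2                  # leader weighting in [0, 2]
            dist = abs(C * leader[d] - x)
            candidates.append(leader[d] - A * dist)
        # Average the three leader-guided proposals.
        new_position.append(sum(candidates) / 3.0)
    return new_position


if __name__ == "__main__":
    rng = random.Random(42)
    leaders = ([1.0, 2.0], [2.0, 4.0], [3.0, 6.0])
    # Early in a run (a = 2) the move is exploratory; at a = 0 it
    # collapses onto the average of the three leaders.
    print(gwo_step([10.0, -5.0], *leaders, a=2.0, rng=rng))
    print(gwo_step([10.0, -5.0], *leaders, a=0.0, rng=rng))
```

Shrinking `a` over time is what shifts the search from wide exploration toward imitation of the best patterns seen so far.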

Testing the approach in virtual disasters

Because it is not safe to test unproven strategies during real tunnel emergencies, the researchers build detailed computer simulations that mimic narrow corridors, dead ends, obstacles, and scattered victims. They compare their learning‑based system against several other methods, including pure random wandering and standalone optimization without learning. Across both single‑drone and multi‑drone tests, their approach finds victims faster, explores more of the tunnel with fewer wasted steps, and avoids collisions more reliably. Importantly, it does this using lightweight, table‑based calculations instead of power‑hungry deep‑learning networks, meaning it could realistically run on small onboard computers during an actual emergency.

What this could mean for future rescues

The study shows that swarms of relatively simple drones, guided by carefully designed learning rules and a few ideas borrowed from nature, could become valuable partners for firefighters and rescue crews in tunnel disasters. By rapidly mapping smoky, shifting environments and homing in on likely victim locations without constant human control, such systems could shave precious minutes off response times while reducing the risks faced by first responders. Although the work so far relies on simulations and idealized sensors, it lays a practical foundation for future real‑world systems that must work under tight time, energy, and computing limits in some of the most challenging rescue settings on Earth.

Citation: ur Rehman, H.M.R., Gul, M.J., Younas, R. et al. End-to-end emergency response protocol for tunnel accidents augmentation with reinforcement learning. Sci Rep 16, 6226 (2026). https://doi.org/10.1038/s41598-026-37191-w

Keywords: tunnel emergency response, search and rescue drones, multi-agent reinforcement learning, robotic disaster management, autonomous exploration