Clear Sky Science · en

Categorical framework for quantum-resistant zero-trust AI security

Why securing AI needs a new kind of lock

As artificial intelligence spreads into hospitals, factories, and homes, the models powering these systems are becoming prime targets for hackers. At the same time, future quantum computers threaten to break many of the encryption schemes that protect today’s data. This paper introduces a new way to shield AI models that is designed to withstand both clever human attackers and tomorrow’s quantum machines, while still running on small, inexpensive devices.

Building a “never trust” fortress around AI

The authors start from a security philosophy called “zero trust.” Instead of assuming that anything inside a company network is safe, zero trust treats every access attempt as suspicious. In the proposed design, outside clients must pass through an ESP32-based broker and then an ESP32-based security agent before they can reach AI models on a protected local network. Every request is checked for who is asking, what model they want, when they are asking, and from where. Access is narrow, time-limited, and tied to specific roles, so even if one part of the system is compromised, attackers cannot freely move sideways to other models or data.
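The per-request check described above can be sketched as a deny-by-default function. This is a minimal illustration in Python, not the authors' ESP32 code. The `Grant` and `Request` fields, the role and model names, and the CIDR-based network check are all hypothetical choices. They show how "who, what, when, and where" combine: access is allowed only when a single narrow, unexpired grant matches every attribute.

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    """One narrow, time-limited permission tied to a role (hypothetical schema)."""
    role: str
    model: str            # the single model this grant covers
    network: str          # allowed source network in CIDR form, e.g. "10.0.5.0/24"
    expires_at: datetime  # the grant is void at or after this instant


@dataclass(frozen=True)
class Request:
    client_id: str
    role: str
    model: str
    source_ip: str
    time: datetime


def authorize(req: Request, grants: list[Grant], known_clients: set[str]) -> bool:
    """Deny by default; allow only if who, what, when and where all match one grant."""
    if req.client_id not in known_clients:              # who: known identity
        return False
    for g in grants:
        if (g.role == req.role                          # who: role
                and g.model == req.model                # what: exact model
                and req.time < g.expires_at             # when: not expired
                and ipaddress.ip_address(req.source_ip)
                in ipaddress.ip_network(g.network)):    # where: source network
            return True
    return False


now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
grants = [Grant("radiologist", "xray-classifier", "10.0.5.0/24", now + timedelta(minutes=15))]
clients = {"clinic-tablet-7"}

print(authorize(Request("clinic-tablet-7", "radiologist", "xray-classifier", "10.0.5.23", now), grants, clients))  # True
print(authorize(Request("clinic-tablet-7", "radiologist", "ecg-model", "10.0.5.23", now), grants, clients))        # False: other model
```

Because a grant names exactly one model and expires on its own, a stolen credential opens only one narrow door for a short window, which is the lateral-movement limit the paragraph describes.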

Locks that survive quantum computers

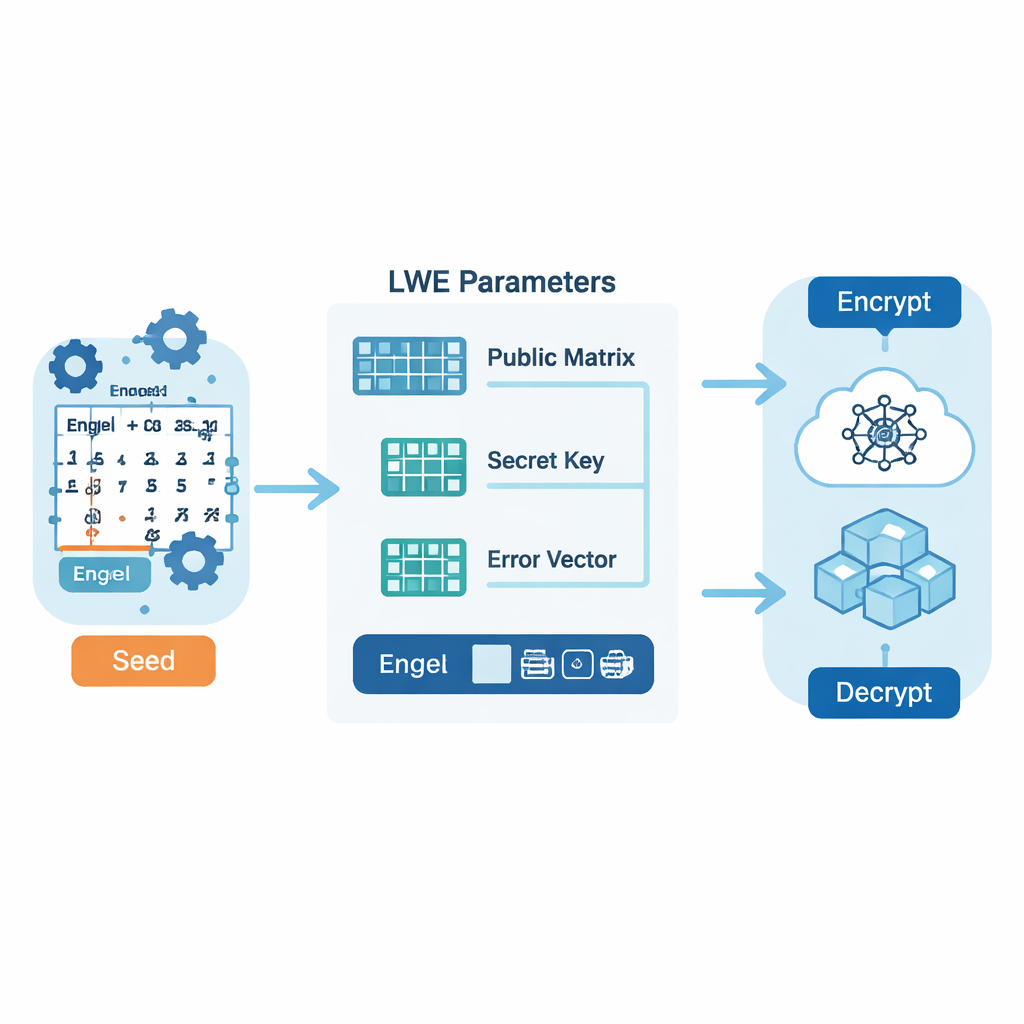

To protect the messages flowing through this zero-trust gateway, the system relies on a family of techniques known as lattice-based post-quantum cryptography. Instead of today’s familiar number-theory puzzles, such as factoring large numbers, these schemes hide information in high‑dimensional grids of numbers (lattices), where the underlying problems are believed to be hard even for quantum computers. A key technical twist in this work is how the authors generate the “random-looking” numbers that drive encryption. Rather than using a conventional random-number generator, they start from a secret real number and expand it into a long sequence of integers using a method called an Engel expansion, then further stir that sequence with a chaotic map. According to the authors, the resulting stream is structured enough to store and reproduce efficiently, yet unpredictable enough to withstand known attacks.
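Here is a minimal sketch of the two-stage idea, under stated assumptions. The Engel expansion itself is standard: a number x in (0, 1] is written as 1/a_1 + 1/(a_1·a_2) + 1/(a_1·a_2·a_3) + ... with non-decreasing positive integers a_k. The remaining pieces are hypothetical stand-ins, because the summary does not give the paper's exact map or parameters: the secret seed (√2 - 1 to 80 digits), the logistic map with r = 3.99, the mixing rule, and the output modulus q = 3329 (borrowed from ML-KEM). This toy generator is not a vetted cryptographic RNG.

```python
from fractions import Fraction
from math import ceil, isqrt


def secret_seed(digits: int = 80) -> Fraction:
    """Hypothetical secret real number: sqrt(2) - 1, truncated to `digits` decimal places."""
    scale = 10 ** digits
    return Fraction(isqrt(2 * scale * scale) - scale, scale)


def engel_expansion(x: Fraction, max_terms: int) -> list[int]:
    """Terms a_k with x = 1/a_1 + 1/(a_1 a_2) + ..., computed exactly for 0 < x <= 1."""
    if not (0 < x <= 1):
        raise ValueError("x must lie in (0, 1]")
    terms: list[int] = []
    u = x
    while u > 0 and len(terms) < max_terms:
        a = ceil(1 / u)   # smallest integer a with 1/a <= u
        terms.append(a)
        u = u * a - 1     # remainder, rescaled back into [0, 1)
    return terms


def chaotic_stream(terms: list[int], q: int = 3329, r: float = 3.99, rounds: int = 8) -> list[int]:
    """Stir Engel terms through a logistic map and emit one value in [0, q) per term.

    The injection rule and parameters are illustrative assumptions, not the paper's.
    """
    x = 0.5
    out: list[int] = []
    for a in terms:
        x = (x + (a % 2**32) / 2**32) % 1.0   # inject the term as a fractional offset
        if x == 0.0:
            x = 0.5                           # avoid the map's fixed point at 0
        for _ in range(rounds):
            x = r * x * (1.0 - x)             # logistic map, chaotic for r near 4
        out.append(int(x * q) % q)
    return out


print(engel_expansion(secret_seed(), 7))  # [3, 5, 5, 16, 18, 78, 102]
print(chaotic_stream(engel_expansion(secret_seed(), 32))[:5])
```

The same seed always yields the same stream, so the values can be regenerated on demand rather than stored. This is the "reproduce efficiently" property. Whether the output is unpredictable enough depends on the real map and parameters, which this sketch does not claim to match.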

Turning deep math into a security blueprint

What sets this framework apart is its use of a branch of mathematics called category theory to describe the entire security workflow. Instead of focusing on low-level code, category theory treats each cryptographic operation—like key generation, encryption, or shuffling random sequences—as a kind of arrow between abstract objects, and treats security policies as higher-level mappings between these arrows. By organizing the system in this way, the authors can express important guarantees—such as “decrypting after encrypting gets you back the original message,” or “changing a parameter does not silently weaken security”—as simple diagram rules. This provides a rigorous checklist that helps ensure the design remains sound even as components are swapped or upgraded.
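To make the "arrows and diagram rules" idea concrete, this sketch treats each operation as an arrow with a typed source and target. Composition is allowed only when the types line up, and a helper checks on sample inputs that "encrypt, then decrypt" agrees with the identity arrow on plaintexts. The toy XOR cipher and all names (`Arrow`, `then`, `identity`, `commutes`) are hypothetical illustrations, not the paper's scheme. Sampling inputs only tests the law, whereas the categorical framework is meant to state such laws formally.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable


@dataclass(frozen=True)
class Arrow:
    src: str                  # source object, e.g. "Plaintext"
    dst: str                  # target object, e.g. "Ciphertext"
    fn: Callable[[Any], Any]

    def then(self, other: "Arrow") -> "Arrow":
        """Compose self followed by other; defined only when the types match."""
        if self.dst != other.src:
            raise TypeError(f"cannot compose {self.src}->{self.dst} with {other.src}->{other.dst}")
        return Arrow(self.src, other.dst, lambda x: other.fn(self.fn(x)))


def identity(obj: str) -> Arrow:
    return Arrow(obj, obj, lambda x: x)


def commutes(f: Arrow, g: Arrow, samples: Iterable[Any]) -> bool:
    """Check on sample inputs that two parallel arrows agree (the diagram commutes)."""
    if (f.src, f.dst) != (g.src, g.dst):
        return False
    return all(f.fn(s) == g.fn(s) for s in samples)


# Toy cipher for illustration only: XOR with a fixed keystream (NOT secure, NOT the paper's scheme).
KEY = bytes([0x3A, 0x91, 0x5C, 0x07])


def xor_stream(data: bytes) -> bytes:
    return bytes(b ^ KEY[i % len(KEY)] for i, b in enumerate(data))


encrypt = Arrow("Plaintext", "Ciphertext", xor_stream)
decrypt = Arrow("Ciphertext", "Plaintext", xor_stream)
samples = [b"", b"hello", b"model-request:xray-classifier"]

print(commutes(encrypt.then(decrypt), identity("Plaintext"), samples))  # True
```

Trying `encrypt.then(encrypt)` raises `TypeError`, which mirrors how the categorical view rules out ill-typed pipelines before any data flows through them.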

Making strong security work on tiny hardware

In addition to theory, the paper reports a full implementation on low-cost ESP32 microcontrollers acting as the broker and agent in front of AI services. Despite performing quantum-resistant encryption, the devices remain highly efficient: encryption takes about 11 milliseconds, decryption under 3 milliseconds, and memory use leaves over 90% of the free heap available after cryptographic operations. Power measurements show a steady baseline around 300 milliwatts with brief peaks under 500 milliwatts during intensive lattice computations, levels suitable for battery-powered sensors. In testing, the system blocked all of more than 1,000 unauthorized access attempts, and requests passing through the secure gateway still received AI responses in under a second, with most of that time spent running the models themselves rather than on encryption.
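The reported figures also support some back-of-envelope arithmetic. Assume the sub-500 mW peak bounds power draw throughout each operation. Then one encryption costs at most about 11 ms × 0.5 W = 5.5 mJ of whole-device energy, and one decryption at most about 1.5 mJ. Separately, zero misses in 1,000 attempts does not prove a zero miss rate. Assuming independent, representative attempts, the exact one-sided 95% upper bound is 1 - 0.05^(1/n). This is close to the "rule of three" 3/n, or about 0.3%. The sketch below only recomputes these bounds from the quoted numbers and adds no new measurements.

```python
# Figures quoted in the summary (bounds as stated there).
PEAK_POWER_W = 0.5     # peaks "under 500 milliwatts"
ENCRYPT_S = 0.011      # encryption "about 11 milliseconds"
DECRYPT_S = 0.003      # decryption "under 3 milliseconds"
BLOCKED_TRIALS = 1000  # "more than 1,000" unauthorized attempts (lower bound used)


def energy_mj(duration_s: float, power_w: float = PEAK_POWER_W) -> float:
    """Upper bound on whole-device energy for one operation: power x duration, in millijoules."""
    return duration_s * power_w * 1000.0


def zero_failure_upper_bound(n: int, confidence: float = 0.95) -> float:
    """Exact one-sided upper confidence bound on a failure rate after 0 failures in n independent trials."""
    return 1.0 - (1.0 - confidence) ** (1.0 / n)


enc_mj = energy_mj(ENCRYPT_S)
dec_mj = energy_mj(DECRYPT_S)
miss_bound = zero_failure_upper_bound(BLOCKED_TRIALS)

print(f"encrypt <= {enc_mj:.1f} mJ, decrypt <= {dec_mj:.1f} mJ")  # encrypt <= 5.5 mJ, decrypt <= 1.5 mJ
print(f"95% upper bound on miss rate: {miss_bound:.4f} (rule of three: {3 / BLOCKED_TRIALS:.4f})")  # 0.0030 (rule of three: 0.0030)
```

These are whole-device figures during each operation, including the roughly 300 mW baseline, so the extra energy attributable to cryptography alone is smaller.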

Preparing for future upgrades without breaking safety

The same mathematical framework also supports “crypto‑agility”: the ability to swap out one cryptographic building block for another—say, replacing today’s lattice scheme with a future standard—without redesigning the rest of the system from scratch. In the categorical view, each cryptographic algorithm is a pluggable module, and safe transitions between them are expressed as structured mappings that preserve security goals like confidentiality and integrity. This reduces the amount of code that must change and the amount of re‑testing required when new post‑quantum standards or hardware optimizations arrive.
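Crypto-agility can be sketched as a registry of interchangeable modules behind one interface. The gateway looks up a scheme by name from configuration, and a module is admitted only if it passes a conformance check. In this hypothetical Python sketch, the two plugged-in schemes are toy hash-based stream ciphers built from the standard `hashlib` module, standing in for real post-quantum schemes. Names such as `Cipher`, `register`, `gateway_send`, and the scheme labels are illustrative. The round-trip test checks only correctness (decrypt undoes encrypt), not confidentiality or integrity, which the paper's framework addresses at the design level.

```python
import hashlib
from typing import Protocol


class Cipher(Protocol):
    name: str
    def encrypt(self, key: bytes, data: bytes) -> bytes: ...
    def decrypt(self, key: bytes, data: bytes) -> bytes: ...


class Sha256Stream:
    """Toy stand-in: XOR with a SHA-256 counter-mode keystream (unauthenticated, illustrative only)."""
    name = "toy-sha256-v1"

    def _stream(self, key: bytes, n: int) -> bytes:
        out, counter = b"", 0
        while len(out) < n:
            out += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
            counter += 1
        return out[:n]

    def encrypt(self, key: bytes, data: bytes) -> bytes:
        return bytes(a ^ b for a, b in zip(data, self._stream(key, len(data))))

    decrypt = encrypt  # XOR stream: the same operation undoes itself


class Shake256Stream:
    """Second toy stand-in: XOR with a SHAKE-256 keystream (unauthenticated, illustrative only)."""
    name = "toy-shake256-v2"

    def encrypt(self, key: bytes, data: bytes) -> bytes:
        return bytes(a ^ b for a, b in zip(data, hashlib.shake_256(key).digest(len(data))))

    decrypt = encrypt


REGISTRY: dict[str, Cipher] = {}


def register(scheme: Cipher) -> None:
    """Admit a module only if decrypt(encrypt(m)) == m holds on test vectors."""
    key = b"conformance-test-key"
    for msg in (b"a", b"model-request", bytes(range(256))):
        if scheme.decrypt(key, scheme.encrypt(key, msg)) != msg:
            raise ValueError(f"{scheme.name} fails decrypt(encrypt(m)) == m")
    REGISTRY[scheme.name] = scheme


def gateway_send(config: dict, key: bytes, payload: bytes) -> bytes:
    """Gateway logic never names a concrete scheme; it looks one up from configuration."""
    return REGISTRY[config["scheme"]].encrypt(key, payload)


register(Sha256Stream())
register(Shake256Stream())
key = b"hypothetical-session-key"
ct_v1 = gateway_send({"scheme": "toy-sha256-v1"}, key, b"infer:xray-classifier")
# Swapping schemes is a configuration change only; gateway_send is untouched.
ct_v2 = gateway_send({"scheme": "toy-shake256-v2"}, key, b"infer:xray-classifier")
print(REGISTRY["toy-shake256-v2"].decrypt(key, ct_v2))  # b'infer:xray-classifier'
```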

What this means for everyday users

For non-specialists, the practical message is that strong, future‑proof security for AI does not have to be reserved for big data centers. By combining zero‑trust checks, quantum‑resistant math, and careful reasoning about how all the parts fit together, the authors show that even small, cheap chips can act as trustworthy gatekeepers for powerful AI models. In testing, their prototype rejected every unauthorized request and kept delays low, and its design can evolve as cryptographic best practices change. If adopted widely, approaches like this could help ensure that the AI services people rely on, from smart farming to medical monitoring, remain safe even as attackers and computing technology grow more sophisticated.

Citation: Cherkaoui, I., Clarke, C., Horgan, J. et al. Categorical framework for quantum-resistant zero-trust AI security. Sci Rep 16, 7030 (2026). https://doi.org/10.1038/s41598-026-37190-x

Keywords: post-quantum cryptography, zero trust architecture, AI model security, lattice-based encryption, embedded IoT security