Clear Sky Science

Interpretable hybrid ensemble with attention-based fusion and EAOO-GA optimization for lung cancer detection

Why early lung cancer detection matters to everyone

Lung cancer is one of the deadliest cancers largely because it is often discovered too late, when treatment options are limited and survival chances drop sharply. Doctors increasingly rely on CT scans and computer programs to spot suspicious growths in the lungs before symptoms appear. This paper presents a new artificial intelligence (AI) system that aims to make those computer diagnoses not only more accurate, but also more reliable and easier for clinicians to understand.

How computers read lung scans

Modern AI systems can scan CT images and learn patterns that distinguish a harmless spot from a dangerous tumor. These systems, built from deep neural networks, have already shown they can rival or even surpass human experts in narrow tasks. But they face three important obstacles in real hospitals: they can overfit to one dataset and fail on new patients, they struggle with unbalanced data where some disease types are rare, and they often function as opaque “black boxes” that clinicians find hard to trust. The authors focus on these challenges for a widely used lung CT dataset that contains three types of cases: benign nodules, malignant nodules, and normal scans.

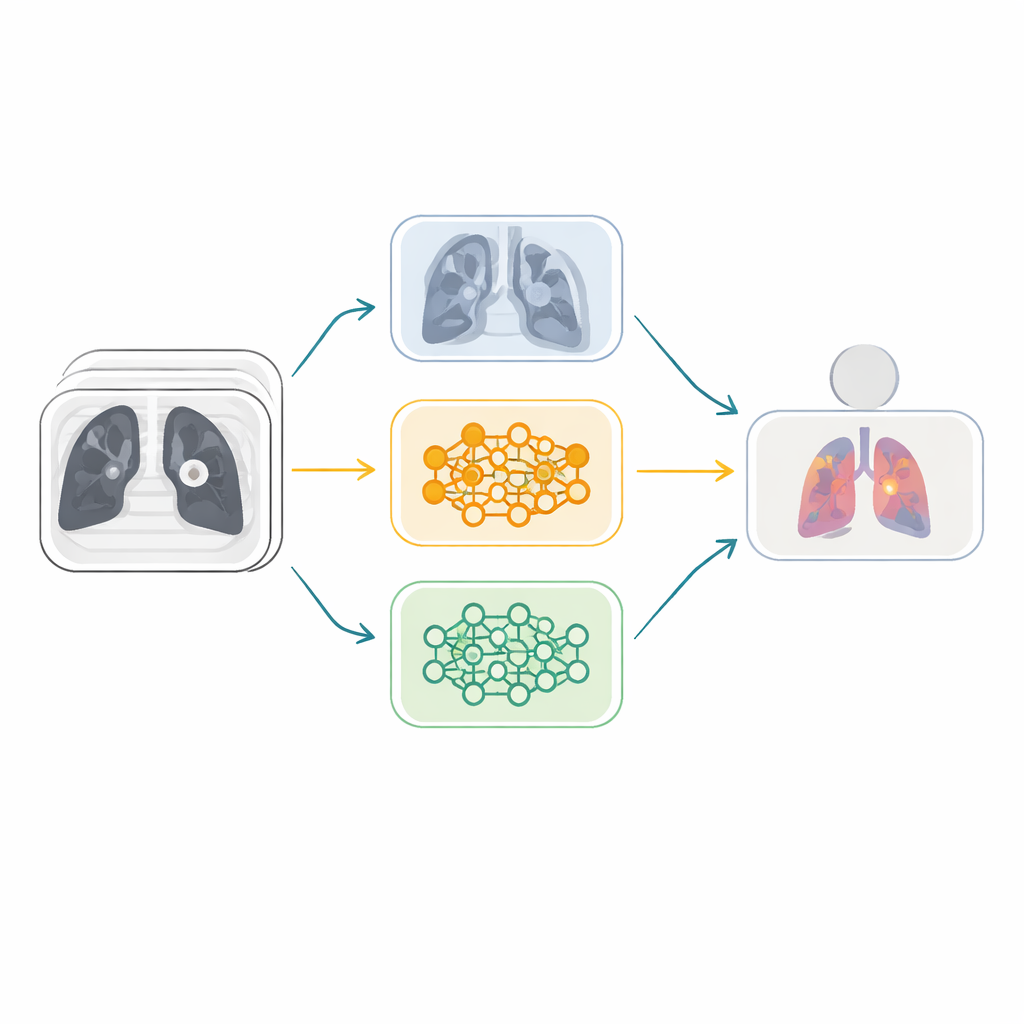

Many expert eyes instead of one

Rather than depending on a single neural network, the researchers build an ensemble: a team of different AI models whose predictions are combined. They start from six powerful image-recognition architectures originally trained on millions of everyday photos and adapt them to lung CT scans. These models are then paired into three “fusion” branches, each combining two networks with complementary strengths. Within each branch, a special attention mechanism, known as Squeeze-and-Excitation, learns which internal feature channels carry the most useful visual cues, such as subtle textures or nodule shapes, and amplifies them while downplaying less informative patterns. This helps the system focus on medically meaningful details rather than noise.
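
For readers who want to see the attention step in action, here is a minimal sketch of a Squeeze-and-Excitation gate in plain Python. The tiny two-channel feature map and the weight matrices `w1` and `w2` are made-up values for illustration, not parameters from the paper; in a real network these weights are learned during training.

```python
import math

def squeeze_excite(feature_map, w1, w2):
    """Apply a Squeeze-and-Excitation gate to a feature map.

    feature_map: list of C channels, each an H x W list of lists.
    w1: reduction weights, shape (C // r) x C  (illustrative values).
    w2: expansion weights, shape C x (C // r)  (illustrative values).
    """
    # Squeeze: summarize each channel by its global average.
    squeezed = [
        sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
        for ch in feature_map
    ]
    # Excitation, step 1: bottleneck layer followed by ReLU.
    hidden = [
        max(0.0, sum(w * s for w, s in zip(row, squeezed)))
        for row in w1
    ]
    # Excitation, step 2: expand back to C values and squash into (0, 1).
    gates = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w2
    ]
    # Scale: multiply every value in a channel by that channel's gate.
    scaled = [
        [[value * g for value in row] for row in ch]
        for ch, g in zip(feature_map, gates)
    ]
    return gates, scaled

# Toy input: channel 0 is "active", channel 1 is empty.
feature_map = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[0.0, 0.0], [0.0, 0.0]],
]
w1 = [[1.0, -1.0]]        # hypothetical reduction weights (2 channels -> 1)
w2 = [[2.0], [-2.0]]      # hypothetical expansion weights (1 -> 2 channels)

gates, scaled = squeeze_excite(feature_map, w1, w2)
print([round(g, 3) for g in gates])
```

With these toy weights the gate for the active channel comes out close to 1 and the gate for the empty channel close to 0, which is the "amplify the useful channels, dampen the rest" behaviour described above.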

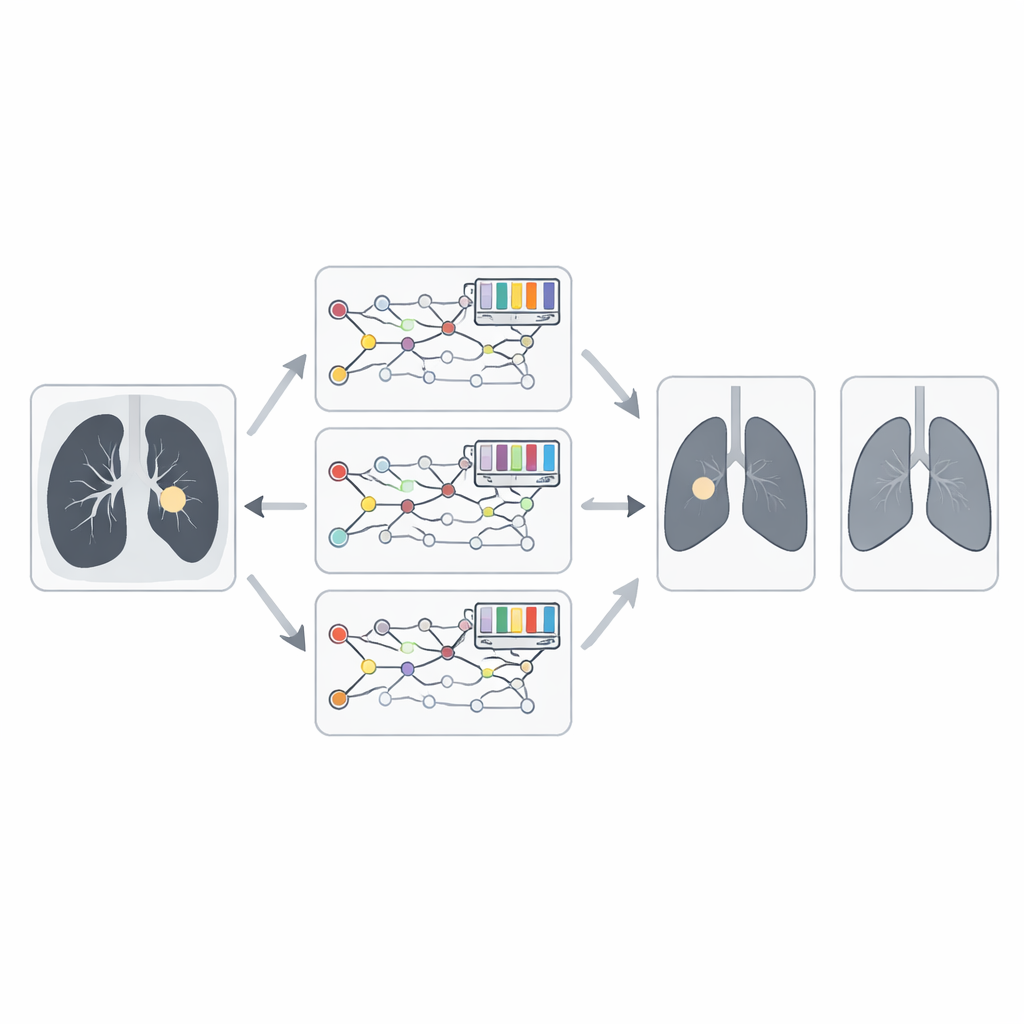

Letting nature-inspired search tune the team

Simply averaging the opinions of three strong branches still leaves room for improvement. The key idea in this work is to let a nature-inspired optimizer decide how much weight to give each branch. The team introduces an enhanced version of the Animated Oat Optimization algorithm, augmented with genetic operations like crossover and mutation. In plain terms, this algorithm treats candidate weight combinations as a population and repeatedly “evolves” them, keeping those that lead to more accurate cancer predictions and reshuffling the rest. Over many iterations, it discovers an effective balance where the most reliable fusion models contribute more heavily to the final diagnosis.
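
The idea of evolving the branch weights can be sketched with a plain genetic algorithm. This is not the authors' EAOO-GA, whose update rules are not given in this summary; it only illustrates the crossover, mutation, and keep-the-best loop on a toy validation set. The probabilities, labels, and every parameter value below are invented for the example.

```python
import random

def normalize(weights):
    """Rescale weights so they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]

def ensemble_accuracy(weights, branch_probs, labels):
    """Accuracy of the weighted average of the branches' class probabilities."""
    correct = 0
    for i, label in enumerate(labels):
        n_classes = len(branch_probs[0][i])
        fused = [
            sum(w * branch[i][c] for w, branch in zip(weights, branch_probs))
            for c in range(n_classes)
        ]
        if fused.index(max(fused)) == label:
            correct += 1
    return correct / len(labels)

def evolve_weights(branch_probs, labels, pop_size=20, generations=30,
                   mutation_scale=0.1, seed=0):
    """Search for branch weights with a simple elitist genetic algorithm."""
    rng = random.Random(seed)
    n = len(branch_probs)

    def fitness(w):
        return ensemble_accuracy(w, branch_probs, labels)

    # Start from the equal-weight baseline plus random candidates.
    population = [[1.0 / n] * n]
    population += [
        normalize([rng.random() + 1e-9 for _ in range(n)])
        for _ in range(pop_size - 1)
    ]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]      # keep the best half
        children = []
        while len(survivors) + len(children) < pop_size:
            a, b = rng.sample(survivors, 2)
            alpha = rng.random()
            # Crossover: blend two parents.
            child = [alpha * x + (1 - alpha) * y for x, y in zip(a, b)]
            # Mutation: small random nudge, kept strictly positive.
            child = [max(1e-9, x + rng.gauss(0, mutation_scale)) for x in child]
            children.append(normalize(child))
        population = survivors + children
    return max(population, key=fitness)

# Toy validation set: 4 scans, 3 classes, 3 fusion branches.
labels = [0, 1, 2, 1]
branch_probs = [
    [[0.7, 0.2, 0.1], [0.2, 0.6, 0.2], [0.1, 0.2, 0.7], [0.3, 0.5, 0.2]],  # reliable
    [[0.5, 0.3, 0.2], [0.6, 0.3, 0.1], [0.2, 0.2, 0.6], [0.7, 0.2, 0.1]],  # noisy
    [[0.5, 0.3, 0.2], [0.5, 0.3, 0.2], [0.3, 0.3, 0.4], [0.6, 0.3, 0.1]],  # noisy
]

baseline = ensemble_accuracy([1 / 3, 1 / 3, 1 / 3], branch_probs, labels)
best = evolve_weights(branch_probs, labels)
best_acc = ensemble_accuracy(best, branch_probs, labels)
print("equal weights:", baseline, "evolved weights:", best_acc)
```

Because the best candidates are always carried over to the next generation, the evolved weights can never score worse than the equal-weight average they started from.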

Balancing rare cases and opening the black box

Real medical data often contain many more malignant than benign or normal examples, which can bias an AI system toward over-calling cancer. To counter this, the authors use a technique called SMOTE to generate additional synthetic examples for underrepresented classes, evening out the training distribution. They also add an explanatory layer using Grad-CAM, which produces heatmaps showing the image regions that most influenced each decision. For malignant cases, the highlighted areas typically coincide with irregular, spiculated nodules; for benign or normal scans, the focus shifts to smoother tissue. This helps radiologists verify that the model is looking at the right structures rather than irrelevant artifacts.
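
The core of SMOTE is simple interpolation: pick a real example from a rare class, pick one of its nearest neighbours from the same class, and create a new point somewhere on the line between them. The sketch below shows that step in plain Python on invented two-dimensional points; whether the authors apply it to image features or to another representation is not stated here, and `smote_samples` is a hypothetical helper name. In practice a tested library implementation (for example the `SMOTE` class in imbalanced-learn) would normally be used instead.

```python
import math
import random

def smote_samples(minority, n_new, k=2, seed=0):
    """Generate n_new synthetic points by SMOTE-style interpolation."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Find the k nearest minority-class neighbours of the chosen point.
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        # Place the new point at a random position between the two.
        gap = rng.random()
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic

# Toy minority class: four points at the corners of a unit square.
minority = [[1.0, 1.0], [2.0, 1.0], [1.0, 2.0], [2.0, 2.0]]
new_points = smote_samples(minority, n_new=6)
print(len(new_points), "synthetic points added")
```

Every synthetic point lies between two real examples of the same class, so the rare class gains more training examples without simply duplicating existing ones.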

How well the system performs on real-world data

When tested on the IQ-OTH/NCCD lung cancer dataset, the proposed ensemble reaches an impressive accuracy of about 99.4 percent, with similarly high precision, recall, and F1-score. It consistently surpasses every individual network, simpler fusion schemes, and a range of other optimization methods. Crucially, the authors also validate the model on a separate, widely used CT collection known as LIDC-IDRI, where it maintains nearly 98 percent accuracy. This external test suggests that the system generalizes beyond the images it was originally trained on, a key requirement for any tool meant to assist clinicians across different hospitals and scanner settings.
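
For readers unfamiliar with the four scores mentioned above, the following sketch computes them for a three-class problem. The labels are a made-up toy example, not results from the paper, and macro-averaging (a plain average over the classes) is an assumption, since this summary does not say how the authors averaged their scores.

```python
def macro_metrics(y_true, y_pred, n_classes):
    """Accuracy plus macro-averaged precision, recall, and F1-score."""
    precisions, recalls, f1s = [], [], []
    pairs = list(zip(y_true, y_pred))
    for c in range(n_classes):
        tp = sum(1 for t, p in pairs if t == c and p == c)  # correctly flagged as c
        fp = sum(1 for t, p in pairs if t != c and p == c)  # wrongly flagged as c
        fn = sum(1 for t, p in pairs if t == c and p != c)  # missed cases of c
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    return {
        "accuracy": sum(1 for t, p in pairs if t == p) / len(pairs),
        "precision": sum(precisions) / n_classes,
        "recall": sum(recalls) / n_classes,
        "f1": sum(f1s) / n_classes,
    }

# Toy labels: 0 = benign, 1 = malignant, 2 = normal (one malignant scan is missed).
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 1, 2, 2, 2]
scores = macro_metrics(y_true, y_pred, n_classes=3)
print({name: round(value, 3) for name, value in scores.items()})
```

In this toy case a single missed malignant scan lowers recall more than precision, which is why studies report all four numbers rather than accuracy alone.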

What this means for patients and clinicians

To a layperson, the most important takeaway is that combining several AI “experts,” carefully tuning how they work together, and making their reasoning more transparent can significantly improve early lung cancer detection from CT scans. The framework introduced in this paper transforms raw images into a highly accurate, relatively interpretable second opinion for radiologists. If further validated in clinical trials and adapted for everyday hospital workflows, such systems could help catch dangerous tumors earlier, reduce unnecessary follow-up tests, and ultimately improve survival and quality of life for people at risk of lung cancer.

Citation: Al Duhayyim, M., Aldawsari, M.A., Ismail, A. et al. Interpretable hybrid ensemble with attention-based fusion and EAOO-GA optimization for lung cancer detection. Sci Rep 16, 8159 (2026). https://doi.org/10.1038/s41598-026-37187-6

Keywords: lung cancer detection, CT scan analysis, deep learning ensemble, medical image AI, explainable diagnostics