Clear Sky Science

Iterative multiblock framework for high frequency EEG based neurological disorder detection

Why Brain Waves Matter for Early Diagnosis

Alzheimer’s and Parkinson’s disease often damage the brain years before symptoms are obvious, but doctors still lack quick and reliable tools to catch them early. This study presents a new way to read brain waves, recorded with electroencephalography (EEG), that focuses on the brain’s fastest rhythms. By carefully cleaning up these noisy signals and feeding them into an explainable artificial intelligence system, the authors show it is possible to detect neurological problems with accuracy that rivals, and sometimes surpasses, many existing approaches.

Listening to the Fastest Brain Rhythms

EEG records tiny voltage changes from the scalp as networks of neurons fire. Traditionally, doctors and researchers have paid most attention to slower rhythms, such as alpha and theta waves. But mounting evidence suggests that high-frequency “gamma” activity, above about 30 hertz, can reveal early signs of disease, from subtle memory problems to movement disorders. Unfortunately, these fast signals are easily buried under muscle twitches, eye blinks, and electrical noise. Standard tools, like the familiar Fourier and wavelet transforms, work best when a signal’s statistical properties stay stable over time, a condition that real-world EEG rarely meets. As a result, much of the clinically useful detail in high-frequency activity has been hard to extract and easy to misinterpret.

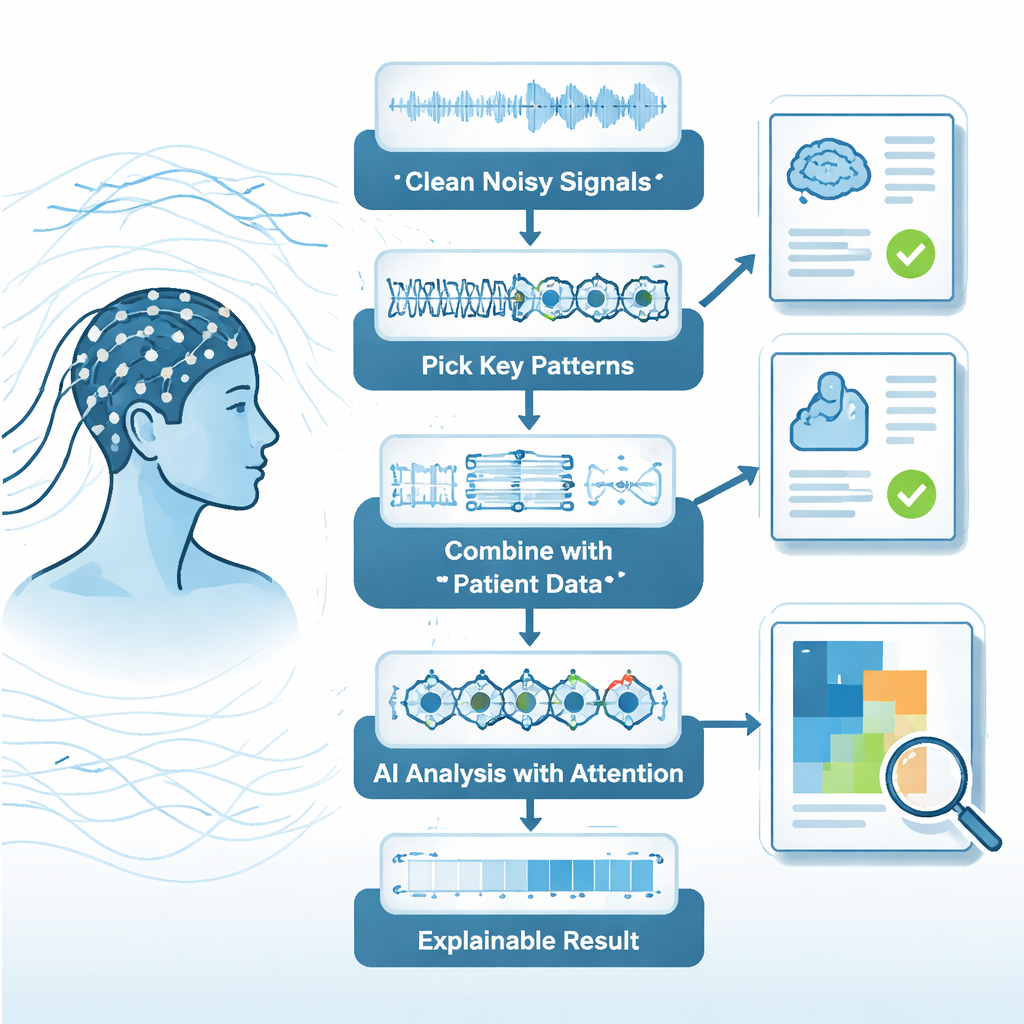

Cleaning Up Noisy Brain Signals

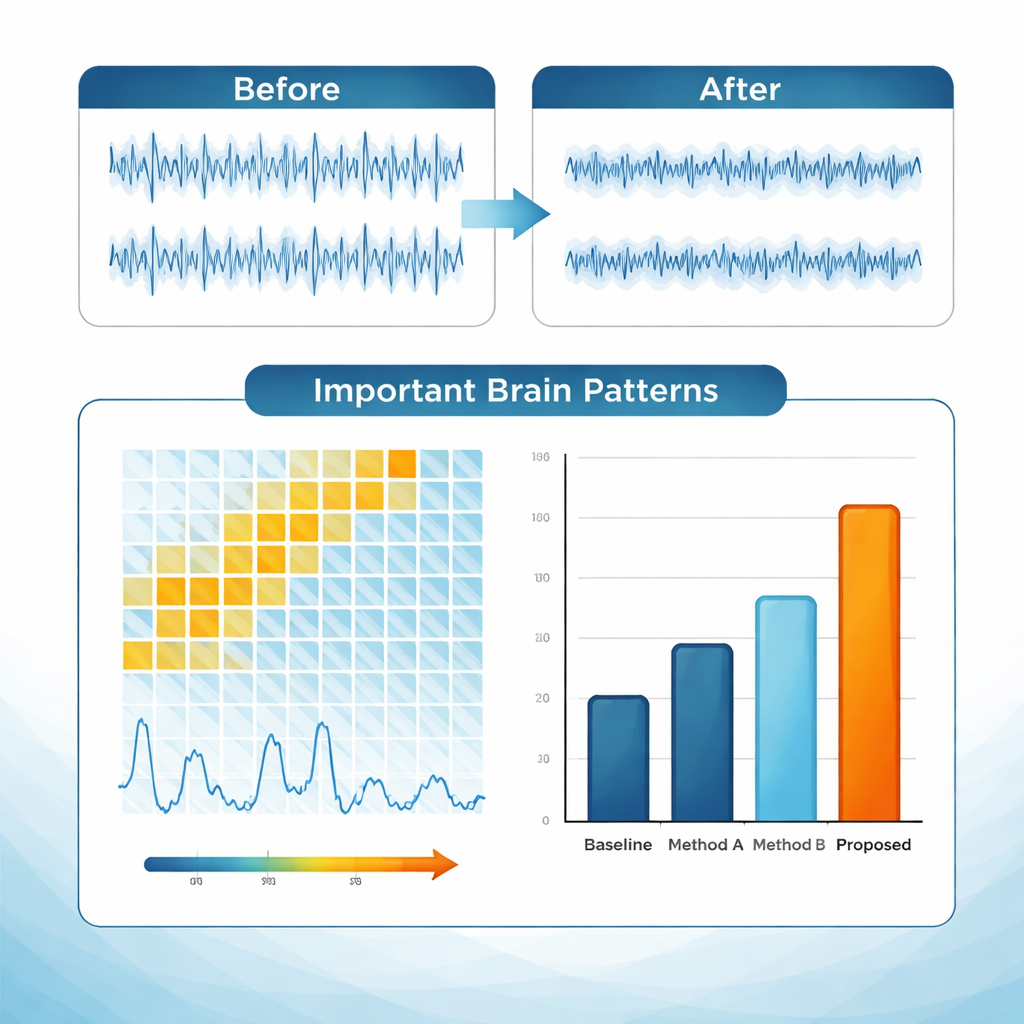

To tackle this, the authors design a multi-step “pipeline” that treats EEG analysis more like a carefully engineered production line than a single magic algorithm. First, they use an approach called the Hilbert–Huang transform combined with a modified empirical mode decomposition. In plain terms, this method automatically breaks a messy signal into simpler building blocks that better follow the brain’s actual fluctuations. It then throws away components that behave like noise, judged by how little energy and complexity they contain, while preserving fast oscillations in the gamma range. This two-stage filtering substantially improves the signal-to-noise ratio, turning a cluttered raw trace into a cleaner representation of high-frequency brain activity that is more likely to reflect genuine neural events than stray artifacts.
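
To make the "throw away noise-like components" step concrete, here is a minimal sketch in Python. It is not the authors' implementation. It assumes the signal has already been split into components by some empirical mode decomposition routine, and it uses a histogram-based Shannon entropy as a stand-in for "complexity". The function names and both thresholds (`min_energy_frac`, `min_entropy`) are hypothetical choices made only for illustration.

```python
import math

def relative_energies(components):
    """Share of the total signal energy carried by each component."""
    energies = [sum(x * x for x in c) for c in components]
    total = sum(energies) or 1.0  # avoid dividing by zero for an all-zero input
    return [e / total for e in energies]

def histogram_entropy(signal, bins=16):
    """Shannon entropy (bits) of the amplitude histogram: an assumed complexity proxy."""
    lo, hi = min(signal), max(signal)
    if hi == lo:
        return 0.0  # a constant trace carries no information
    counts = [0] * bins
    for x in signal:
        idx = min(int((x - lo) / (hi - lo) * bins), bins - 1)
        counts[idx] += 1
    n = len(signal)
    return -sum((c / n) * math.log2(c / n) for c in counts if c)

def select_components(components, min_energy_frac=0.05, min_entropy=1.0):
    """Indices of components that pass both the energy and the entropy threshold."""
    fracs = relative_energies(components)
    return [
        i for i, (c, f) in enumerate(zip(components, fracs))
        if f >= min_energy_frac and histogram_entropy(c) >= min_entropy
    ]

def reconstruct(components, keep):
    """Sum the retained components back into a single cleaned trace."""
    n = len(components[0])
    return [sum(components[i][t] for i in keep) for t in range(n)]

# Toy stand-ins for decomposed components (hypothetical data, 1 s at 256 Hz).
fs = 256
t = [k / fs for k in range(fs)]
gamma = [math.sin(2 * math.pi * 40 * x) for x in t]        # strong 40 Hz rhythm
weak = [0.01 * math.sin(2 * math.pi * 3 * x) for x in t]   # negligible energy
flat = [0.0] * fs                                          # no energy, no complexity
components = [gamma, weak, flat]

kept = select_components(components)
cleaned = reconstruct(components, kept)
print("kept component indices:", kept)
```

In this toy case only the 40 Hz component survives. A real pipeline would also need the decomposition itself and thresholds tuned on data, neither of which is shown here.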

Finding the Most Telling Patterns

Once the signals are cleaned, the framework zooms in on the most informative features. A wavelet packet transform divides each EEG component into multiple frequency bands, and a measure called Shannon entropy scores how complex and informative each band is. Bands with low scores—those that add more redundancy than insight—are discarded, shrinking the feature set by about 60% while keeping around 95% of the clinically relevant information. Crucially, the system does not rely on EEG alone. Clinical details such as age, gender, and disease history are mathematically aligned with the EEG features using a technique called canonical correlation analysis. This fusion yields a shared space where subtle links between brain activity and clinical context become easier for a computer to detect.
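
The entropy-based pruning described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the paper's code: the wavelet packet coefficients are assumed to be computed elsewhere, the band names and values are made up, and `keep_fraction=0.4` simply mirrors the roughly 60% reduction reported in the text. Entropy here is taken over each band's normalized coefficient energies, which is one common convention and may differ from the authors' exact definition.

```python
import math

def shannon_entropy(coeffs):
    """Shannon entropy (bits) of a band's normalized coefficient energies."""
    energies = [c * c for c in coeffs]
    total = sum(energies)
    if total == 0:
        return 0.0
    return -sum((e / total) * math.log2(e / total) for e in energies if e > 0)

def prune_bands(bands, keep_fraction=0.4):
    """Keep the highest-entropy bands; return their names and all scores."""
    scores = {name: shannon_entropy(c) for name, c in bands.items()}
    n_keep = max(1, round(keep_fraction * len(bands)))
    ranked = sorted(scores, key=scores.get, reverse=True)
    return sorted(ranked[:n_keep]), scores

# Hypothetical coefficients for five frequency bands.
bands = {
    "band_0": [1.0, 0.0, 0.0, 0.0],  # all energy in one coefficient
    "band_1": [1.0, 1.0, 1.0, 1.0],  # energy spread evenly
    "band_2": [2.0, 1.0, 0.0, 0.0],
    "band_3": [1.0, 1.0, 0.0, 0.0],
    "band_4": [3.0, 1.0, 1.0, 1.0],
}

kept, scores = prune_bands(bands)
print("kept bands:", kept)
```

Ranking and keeping a fixed fraction is only one possible rule. A fixed entropy cutoff would work too, and the article does not say which the authors used.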

How the AI Learns from Brain Waves

The fused data are then analyzed by a deep-learning model built specifically for time-varying brain signals. The architecture combines convolutional layers, which scan for local patterns across channels and frequencies, with recurrent layers that track how those patterns evolve second by second. An “attention” mechanism assigns higher weight to time segments that appear most diagnostic—much like a clinician focusing on a suspicious burst of activity in a recording. To avoid being a black box, the system includes explainability tools such as Grad-CAM and integrated gradients. These produce visual maps and scores that highlight which frequencies, time windows, and clinical variables most influenced each prediction. In tests on two large public EEG databases, the framework reached about 94% accuracy, with sensitivities and specificities above 92%, outperforming several strong comparison methods.
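
The idea behind the attention step can be shown with a few lines of Python. This is a simplified sketch, not the architecture from the paper: in a trained network the per-segment scores would come from learned layers, whereas here they are supplied by hand, and the names `attention_pool`, `features`, and `scores` are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(features, scores):
    """Weighted average of per-segment feature vectors using softmax weights."""
    weights = softmax(scores)
    dim = len(features[0])
    pooled = [
        sum(w * f[d] for w, f in zip(weights, features))
        for d in range(dim)
    ]
    return pooled, weights

# Three time segments with two features each (made-up numbers).
features = [[0.1, 0.2], [0.0, 0.3], [0.9, 0.8]]
# Hand-set relevance scores; the third segment is treated as most diagnostic.
scores = [0.0, 0.0, 4.0]

pooled, weights = attention_pool(features, scores)
print("attention weights:", [round(w, 3) for w in weights])
print("pooled features:", [round(p, 3) for p in pooled])
```

Because the third segment receives most of the weight, the pooled summary ends up close to that segment's features. The same weights can be shown to a clinician as a rough guide to which parts of the recording mattered most.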

What This Could Mean for Patients

For a layperson, the bottom line is that this work shows how a carefully staged, explainable AI system can turn complicated, noisy EEG recordings into clear, clinically meaningful insights. By making better use of fast brain rhythms and integrating them with routine patient information, the framework spots early signs of disorders like Alzheimer’s and Parkinson’s while also showing doctors why it reached its conclusions. Although further testing on everyday clinical and wearable EEG data is needed, this approach points toward future bedside or even home-based tools that could flag neurological problems sooner, guide treatment decisions, and ultimately improve quality of life for millions at risk of neurodegenerative disease.

Citation: Agrawal, R., Dhule, C., Shukla, G. et al. Iterative multiblock framework for high frequency EEG based neurological disorder detection. Sci Rep 16, 5995 (2026). https://doi.org/10.1038/s41598-026-37126-5

Keywords: EEG, neurological disorders, Alzheimer’s disease, Parkinson’s disease, brain waves