Clear Sky Science

Comparative analysis of supervised and ensemble models with unsupervised exploration for Alzheimer’s disease prediction

Why early warning matters

Alzheimer’s disease slowly robs people of memory and independence, often long before a firm diagnosis is made. Families, doctors, and health systems all benefit when warning signs are detected early, because that is when treatment, planning, and support can make the biggest difference. This study asks a practical question: can carefully designed computer programs, trained on routine clinical and brain-scan information, spot dementia more reliably than today’s standard tools—and at the same time reveal hidden patterns in how the disease develops?

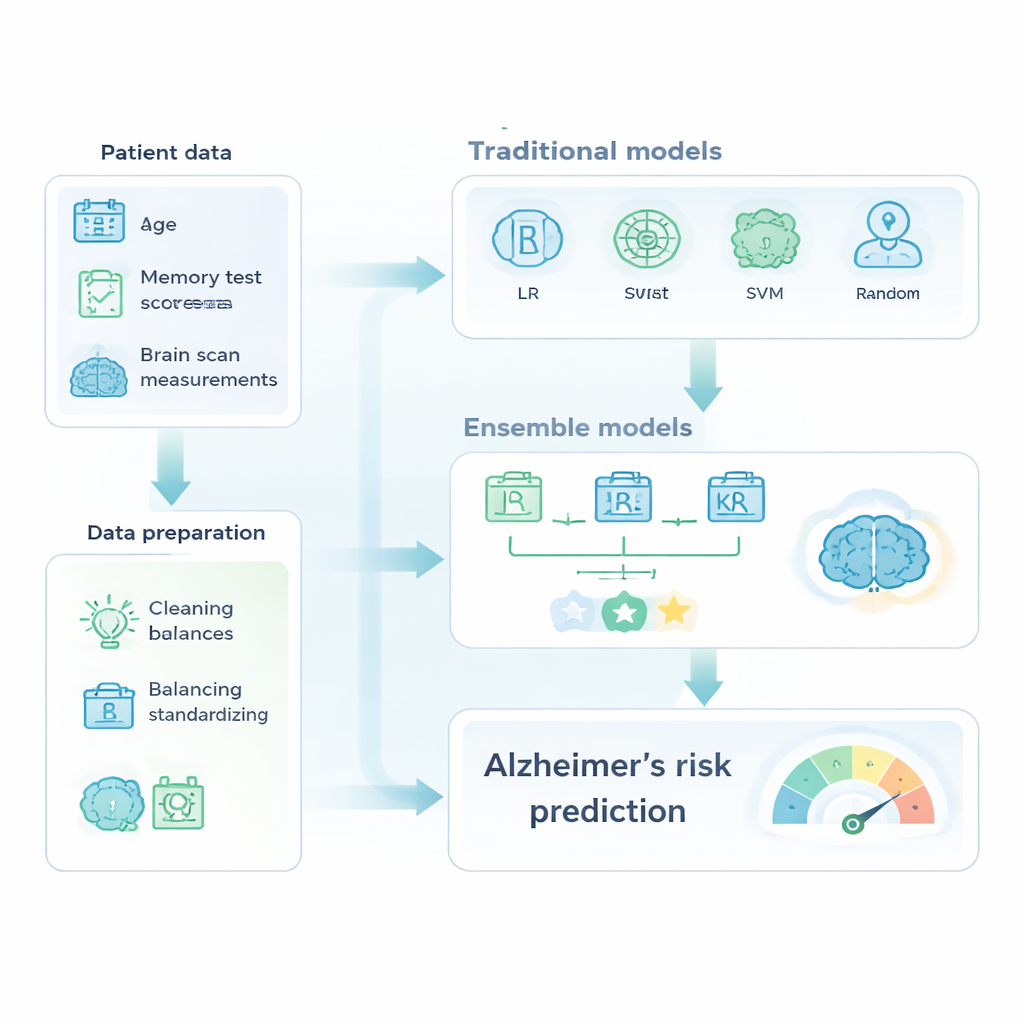

Turning patient records into usable signals

The researchers drew on a well-known data collection called OASIS-2, which follows 150 older adults aged 60 to 96 over several years. For each visit, the dataset includes basic information such as age, years of education, and socioeconomic status, as well as cognitive test scores and measurements derived from MRI brain scans, like overall brain volume. Before any prediction could happen, the team cleaned the data, removed identifiers and ambiguous cases, filled in a small number of missing values, and put all numerical measurements on a common scale. They also tackled a key real-world problem: far more people in the dataset were healthy than demented. To keep the models from simply guessing “no dementia” most of the time, the researchers used weighting schemes that make errors on the smaller, demented group count more heavily during training.
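The scaling and weighting steps described above can be illustrated in a few lines of Python. This is a minimal sketch, not the study's code: it assumes z-score scaling and the common "balanced" weighting rule (weight = number of samples / (number of classes × class count)), neither of which is confirmed by this summary, and the ages and labels are invented.

```python
from collections import Counter
from statistics import mean, pstdev

def standardize(values):
    """Rescale values to mean 0 and standard deviation 1 (z-scores)."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

def balanced_class_weights(labels):
    """Weight each class inversely to how often it appears."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

# Hypothetical data, for illustration only.
ages = [62, 70, 78, 85, 90]
labels = ["healthy"] * 6 + ["demented"] * 2

scaled_ages = standardize(ages)
weights = balanced_class_weights(labels)
print(weights)  # the rarer "demented" class receives the larger weight
```

With weights like these, a training error on a patient from the smaller group costs the model more than an error on a patient from the larger group.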

Comparing classic tools with model teams

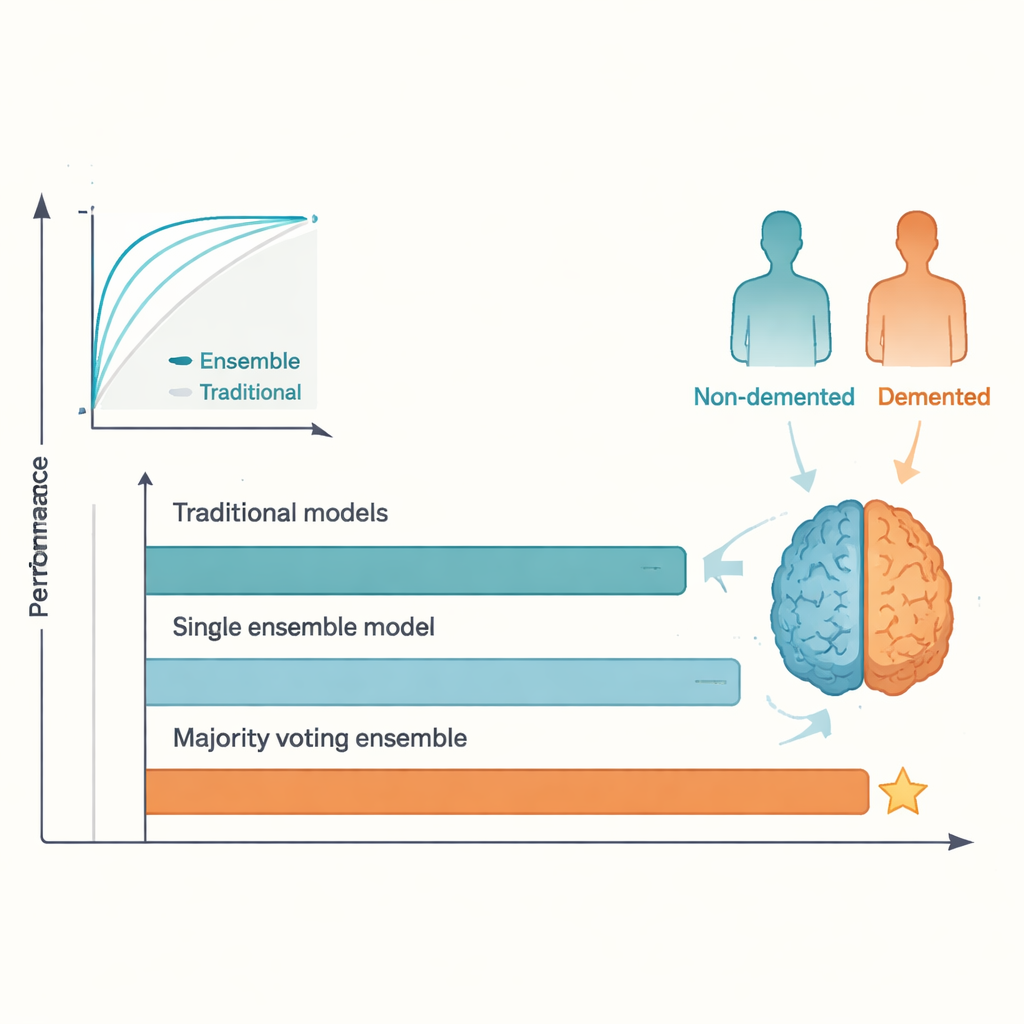

With this prepared dataset, the authors compared familiar machine-learning tools with more advanced “ensembles,” which combine several models into one stronger predictor. The classic group included logistic regression, decision trees, support vector machines, and random forests. The ensemble group featured AdaBoost, XGBoost, and a majority-voting model that blended three tuned classifiers. All models were trained on one portion of the data and tested on held-out cases, with performance judged using accuracy, the ability to correctly flag demented individuals (recall), and the area under the ROC curve, a summary of how well the model separates healthy from diseased cases.
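The three yardsticks named above can be written out directly. The sketch below is illustrative only: the labels and scores are made up, the 0.5 decision threshold is an assumption, and the area under the ROC curve is computed through its pairwise-ranking interpretation (the chance that a randomly chosen demented case scores higher than a randomly chosen healthy one, with ties counted as half).

```python
def accuracy(y_true, y_pred):
    """Fraction of all cases classified correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def recall(y_true, y_pred, positive=1):
    """Fraction of truly positive cases that the model flagged."""
    true_pos = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    actual_pos = sum(t == positive for t in y_true)
    return true_pos / actual_pos if actual_pos else 0.0

def roc_auc(y_true, scores, positive=1):
    """Chance that a random positive outscores a random negative (ties = 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical held-out cases: 1 = demented, 0 = healthy.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.2, 0.1]
y_pred = [int(s >= 0.5) for s in scores]  # assumed 0.5 threshold

print(accuracy(y_true, y_pred), recall(y_true, y_pred), roc_auc(y_true, scores))
```

In this toy case accuracy looks respectable while recall shows that one of the three demented cases was missed, which is why the study reports more than one measure.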

When many minds beat one

The head-to-head results were clear. While the best traditional methods performed reasonably well, they plateaued around the level reported in earlier studies, with test accuracies in the low-to-mid 80 percent range. In contrast, the majority-voting ensemble reached about 95 percent accuracy and a similarly high area under the ROC curve, surpassing the commonly cited 92 percent benchmark. AdaBoost and other ensemble models also did better than any single traditional model. This advantage arises because different algorithms pick up on different aspects of the data; by letting them “vote,” the ensemble smooths out individual quirks and overfitting, leading to more stable predictions. The price of this gain is reduced transparency: it is harder to see, at a glance, why an ensemble made a particular decision than it is with a simple regression or a single tree.
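A toy example shows how voting can cancel out individual mistakes. This sketch assumes "hard" voting, in which each model casts one vote per patient and the most common label wins; the summary does not say whether the study used this or a probability-averaging variant, and the three models and their predictions are invented.

```python
from collections import Counter

def majority_vote(predictions_per_model):
    """Combine per-model label lists into one label per patient (hard voting)."""
    combined = []
    for votes in zip(*predictions_per_model):
        # most_common(1) returns the label with the highest vote count
        combined.append(Counter(votes).most_common(1)[0][0])
    return combined

# Hypothetical predictions for five patients: 1 = demented, 0 = healthy.
truth   = [1, 0, 1, 0, 1]
model_a = [1, 0, 1, 1, 1]  # wrong on patient 4
model_b = [1, 1, 1, 0, 1]  # wrong on patient 2
model_c = [0, 0, 1, 0, 1]  # wrong on patient 1

ensemble = majority_vote([model_a, model_b, model_c])
print(ensemble)
```

Each model errs on a different patient, so every mistake is outvoted by the other two models. The benefit shrinks when models tend to make the same errors.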

Looking for natural groupings in the data

Beyond asking who has dementia, the researchers also asked how patients naturally group together, regardless of diagnosis labels. To do this, they transformed all continuous variables into ordered categories—such as ranges of age or brain volume—and applied a technique called multiple correspondence analysis to compress this rich information into a handful of underlying dimensions. They then used k-means clustering to partition these points into a small number of coherent groups. Some clusters were dominated by people with preserved brain volume and normal cognitive scores, while others contained individuals with low brain volume, poor test results, and more severe dementia ratings. The fact that these unsupervised clusters lined up well with clinical status suggests that the data carry a strong, consistent signal about disease risk and progression.
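The first step of that pipeline, turning continuous measurements into ordered categories, can be sketched as follows. The bin edges, category names, and patient values are hypothetical, chosen only for illustration. The output is the kind of 0/1 indicator row that multiple correspondence analysis takes as input; the analysis itself and the k-means step are not shown.

```python
def bin_value(value, edges, labels):
    """Map a continuous value to an ordered category using upper bin edges."""
    for edge, label in zip(edges, labels):
        if value < edge:
            return label
    return labels[-1]  # value is at or above the last edge

def indicator_row(categories, levels_per_variable):
    """One-hot encode one patient's categories, variable by variable."""
    row = []
    for category, levels in zip(categories, levels_per_variable):
        row.extend(1 if category == level else 0 for level in levels)
    return row

# Hypothetical binning schemes (not the study's cut points).
age_edges, age_levels = [70, 80], ["60-69", "70-79", "80+"]
vol_edges, vol_levels = [0.70, 0.75], ["low", "medium", "high"]

# One hypothetical patient: age 75, normalized brain volume 0.68.
categories = [bin_value(75, age_edges, age_levels),
              bin_value(0.68, vol_edges, vol_levels)]
row = indicator_row(categories, [age_levels, vol_levels])
print(categories, row)
```

Stacking one such row per visit gives a table of zeros and ones from which a dimension-reduction method can extract a few summary axes for clustering.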

What this means for patients and clinicians

For a layperson, the takeaway is straightforward: when thoughtfully designed, teams of machine-learning models can spot Alzheimer’s-related dementia in structured clinical data more accurately than older methods, and they can do so using information that many clinics already collect. At the same time, exploratory techniques reveal that people fall into distinct profiles of brain health and cognitive function, hinting at different paths the disease might take. Although the study is limited by its modest sample size and by the complexity of interpreting ensemble models, it shows that combining powerful prediction with careful exploratory analysis can both sharpen early detection and deepen our understanding of how Alzheimer’s takes hold.

Citation: Amr, Y., Gad, W., Leiva, V. et al. Comparative analysis of supervised and ensemble models with unsupervised exploration for Alzheimer’s disease prediction. Sci Rep 16, 7322 (2026). https://doi.org/10.1038/s41598-026-37122-9

Keywords: Alzheimer’s disease, dementia prediction, machine learning, ensemble models, brain imaging