Clear Sky Science

Class-attention pooling and token sparsity based vision transformers for chest X-ray interpretation

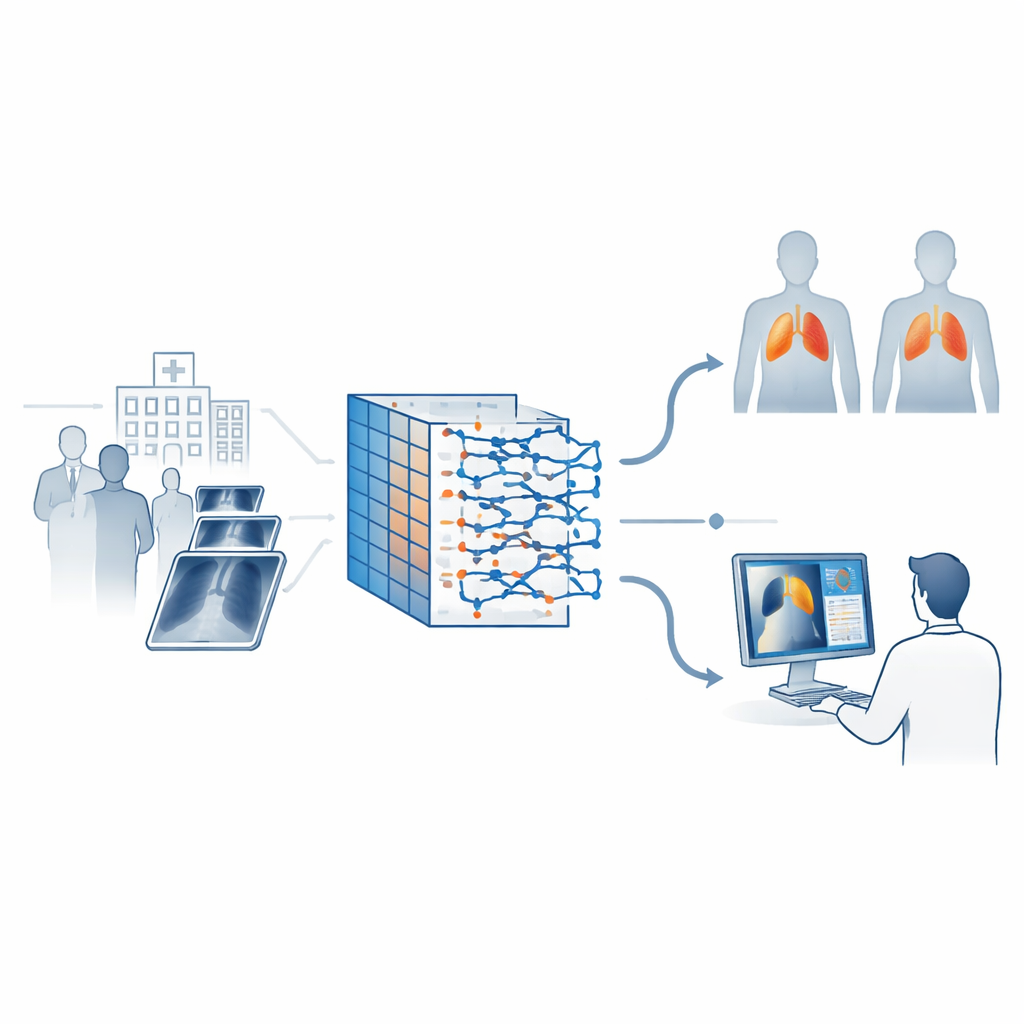

Smarter X-rays for a global lung disease

Tuberculosis remains one of the world’s deadliest infectious diseases, and chest X-rays are often the first and only imaging test available in crowded clinics, especially in low- and middle-income countries. Yet reading these scans is hard and time‑consuming, even for experts. This study presents an artificial intelligence system designed not only to spot signs of tuberculosis on chest X-rays with very high accuracy, but also to show doctors exactly which parts of the lungs influenced its decision, aiming to build trust and support faster, more consistent diagnoses.

Why reading chest images is so challenging

Chest X-rays are cheap, quick and widely available, making them an attractive tool for mass screening. The catch is that tuberculosis can appear in subtle ways that are easily missed, particularly when images are noisy, under- or over-exposed, or taken with older equipment. Human readers may disagree with each other, and busy clinics can overwhelm radiologists. Traditional computer programs addressed this by measuring hand‑crafted features in the images and feeding them into standard machine‑learning models, but these early systems struggled when scans came from new hospitals or had different technical settings.

From neural networks to attention-focused vision

Deep learning, especially convolutional neural networks, improved matters by learning patterns directly from pixels, achieving strong results on tuberculosis datasets. However, these networks mainly focus on local neighborhoods in the image and can miss broader patterns that span both lungs. Newer models called vision transformers view an X-ray as a grid of small patches and learn how each patch relates to every other, capturing long‑range structure. While powerful, off‑the‑shelf transformers may attend to unimportant regions and can be difficult to interpret, raising concerns about whether their decisions align with clinical reasoning.

A tailored AI pipeline for lung scans

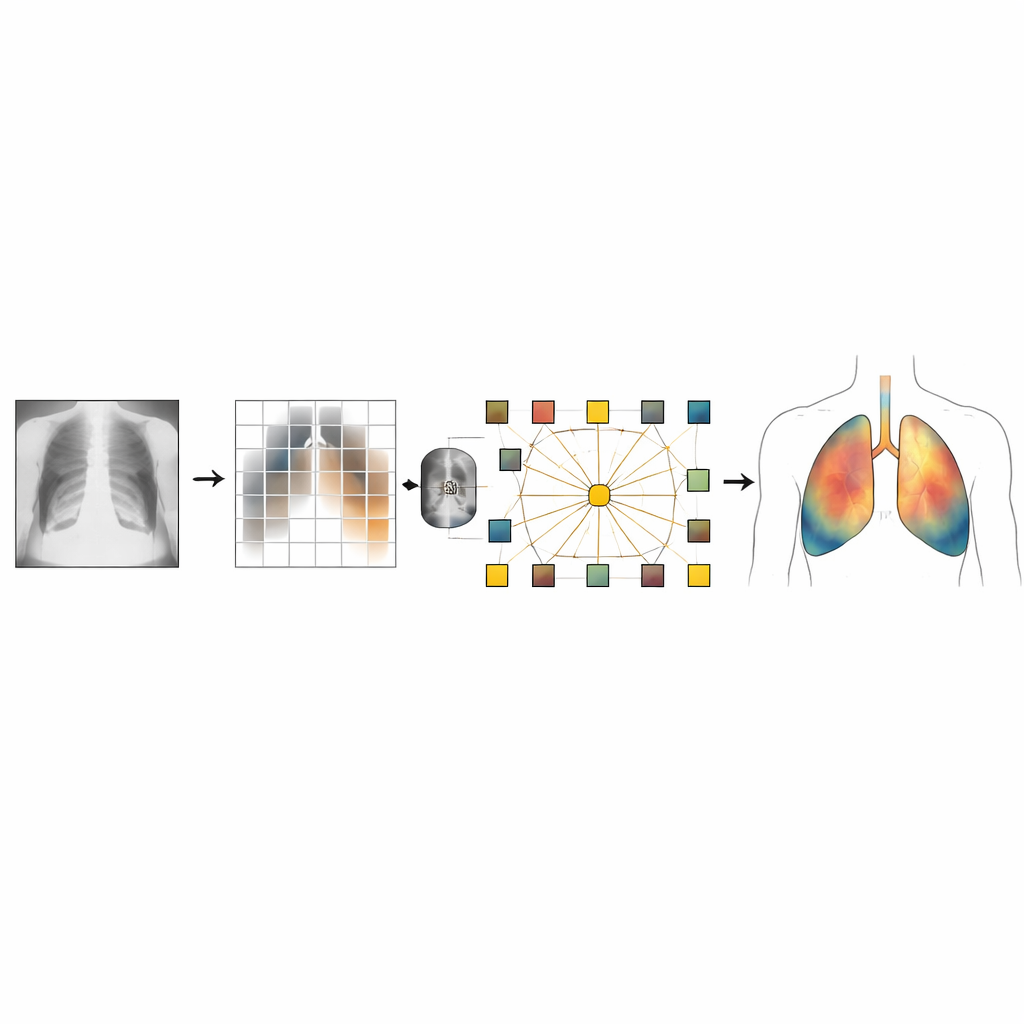

The authors design a customized vision transformer to address these weaknesses for chest X-rays. First, every image is carefully pre‑processed: it is resized, normalized and often passed through a contrast‑enhancement technique that makes faint lung lesions stand out while avoiding over‑sharpening. A lightweight convolutional stage at the front of the model extracts fine details such as edges and textures that matter in medical images. The scan is then split into small patches, each turned into a token the transformer can process.
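The normalization and patch-splitting steps can be illustrated with a minimal sketch in plain Python. It is not the authors' code: the function names `normalize` and `to_patch_tokens`, the min-max normalization and the toy 4x4 image are all assumptions made for illustration, and the contrast-enhancement and convolutional stages are left out. In a real model each flattened patch would also be passed through a learned projection before reaching the transformer.

```python
def normalize(image):
    """Rescale pixel values to the range [0, 1] (min-max scaling, an assumed choice)."""
    flat = [p for row in image for p in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1  # avoid division by zero on a completely flat image
    return [[(p - lo) / span for p in row] for row in image]


def to_patch_tokens(image, patch):
    """Cut a 2-D image into non-overlapping patch x patch tiles.

    Tiles are visited in row-major order and each one is flattened
    into a single list of pixel values (one "token" per tile).
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image size must be a multiple of the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens


# Toy 4x4 "scan" with pixel values 0..15 (hypothetical data).
scan = [[r * 4 + c for c in range(4)] for r in range(4)]
tokens = to_patch_tokens(normalize(scan), patch=2)
print(len(tokens), len(tokens[0]))  # 4 4
```

With a 2x2 patch size the 4x4 toy image yields four tokens of four values each; a real chest X-ray would produce hundreds of tokens in the same way.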

Teaching the model where to look

To help the system keep track of anatomy, the model uses a position-encoding mechanism that injects information about where each patch lies in the lungs, rather than treating all locations as interchangeable. It also introduces special "class" tokens, one per disease category, that learn to gather the most relevant evidence from all patches. A sparsity strategy encourages the network to rely on only a subset of the most informative tokens, discarding background patterns and noise. The training recipe includes techniques like random dropping of tokens, careful learning‑rate scheduling and mixed‑precision computation, all chosen to stabilize learning on limited medical data and to avoid overfitting to quirks of the training images.
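The idea of a class token gathering evidence from a sparse subset of patches can be sketched as follows. This is an illustrative assumption rather than the published architecture: the summary does not say how sparsity is enforced, so the sketch uses a simple hard top-k selection, and the names `class_attention_pool`, `class_query` and `keep` are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of scores."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def class_attention_pool(tokens, class_query, keep):
    """Pool patch tokens into one vector for a single class.

    1. Score every token against the class query (scaled dot product).
    2. Keep only the `keep` highest-scoring tokens (assumed top-k sparsity).
    3. Softmax the surviving scores and return the weighted average of
       the kept tokens, together with the indices that were kept.
    """
    scale = math.sqrt(len(class_query))
    scores = [dot(t, class_query) / scale for t in tokens]
    kept = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)[:keep]
    weights = softmax([scores[i] for i in kept])
    dim = len(tokens[0])
    pooled = [sum(w * tokens[i][d] for w, i in zip(weights, kept))
              for d in range(dim)]
    return pooled, sorted(kept)


# Four hypothetical 2-D patch tokens and one class query.
patch_tokens = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
query = [1.0, 0.0]
pooled, kept = class_attention_pool(patch_tokens, query, keep=2)
print(kept)  # [0, 2]
```

Only the two tokens most aligned with the query contribute to the pooled vector; the other two are ignored entirely, which is the effect the sparsity strategy is meant to have on background and noise.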

Seeing what the AI sees

Crucially, the system is built to explain itself. After making a prediction of "tuberculosis" or "normal," the model generates heatmaps using a method known as Grad‑CAM. These colored overlays highlight which lung regions most influenced the decision. The authors design their explanation pipeline to show balanced examples from both diseased and healthy cases, so radiologists can verify that the tool is looking at clinically meaningful structures rather than irrelevant artifacts. On two open tuberculosis datasets, the approach reached validation accuracy near 98 percent and an area under the curve (AUC) close to 1, the value that indicates perfect discrimination. The authors caution, however, that their image-level data split may slightly overestimate real‑world performance and that external testing is still needed.
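The core arithmetic of Grad‑CAM can be shown in a few lines of plain Python. The sketch assumes that a deep learning framework has already supplied the activations of one layer and the gradients of the predicted class score with respect to them, arranged as a stack of small 2-D maps; the function name `grad_cam` and the toy numbers are hypothetical, and this is not the authors' explanation pipeline.

```python
def grad_cam(feature_maps, gradients):
    """Combine activation maps into one heatmap, Grad-CAM style.

    feature_maps, gradients: lists of K channels, each an H x W nested list.
    Each channel is weighted by the average of its gradients, the weighted
    channels are summed, negative values are clipped (ReLU), and the result
    is scaled so that the strongest location equals 1.
    """
    heat = None
    for fmap, grad in zip(feature_maps, gradients):
        cells = [g for row in grad for g in row]
        weight = sum(cells) / len(cells)  # global-average-pooled gradient
        weighted = [[weight * a for a in row] for row in fmap]
        if heat is None:
            heat = weighted
        else:
            heat = [[h + w for h, w in zip(h_row, w_row)]
                    for h_row, w_row in zip(heat, weighted)]
    heat = [[max(0.0, h) for h in row] for row in heat]  # keep positive evidence only
    peak = max(max(row) for row in heat)
    if peak > 0:
        heat = [[h / peak for h in row] for row in heat]
    return heat


# Two hypothetical 2x2 channels: the first supports the class, the second opposes it.
maps = [[[1.0, 0.0], [0.0, 0.0]],
        [[0.0, 2.0], [0.0, 0.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]],
         [[-1.0, -1.0], [-1.0, -1.0]]]
heatmap = grad_cam(maps, grads)
print(heatmap)
```

In this toy case only the top-left cell stays lit, because the second channel's negative gradient cancels its activation. Upsampled to the size of the X-ray and drawn as a color overlay, such a map is what a radiologist would inspect.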

What this means for future care

In plain terms, this work demonstrates an AI system that can rapidly and accurately flag likely tuberculosis cases on chest X-rays while also drawing a clear visual "map" of its reasoning. Such a tool could help triage patients in resource‑constrained clinics, reduce missed cases and provide a consistent second opinion for radiologists. At the same time, the authors stress that their model has been tested only on two public datasets, focuses on a single disease label, and lacks full clinical validation. Future steps include extending the method to multiple lung conditions, adapting it to 3D scans such as CT, validating its explanations with radiologists and testing it across hospitals. Still, the study marks a promising move toward AI that is not just accurate, but also transparent and trustworthy in the fight against tuberculosis.

Citation: Lokunde, V., Sundar, K., Khokhar, A. et al. Class-attention pooling and token sparsity based vision transformers for chest X-ray interpretation. Sci Rep 16, 8035 (2026). https://doi.org/10.1038/s41598-026-37109-6

Keywords: tuberculosis, chest X-ray, vision transformer, explainable AI, medical imaging