Clear Sky Science

Evaluating gait system vulnerabilities through PPO and GAN-generated adversarial attacks

Why fooling a walking pattern matters

Most of us recognize friends and family by the way they walk, even from a distance. Computers can now do something similar: “gait recognition” systems analyze a person’s walking style to identify them without fingerprints or face scans. These tools are increasingly used in security and surveillance. This study asks an unsettling question: how easy is it to trick such systems with tiny, carefully crafted changes that a person would not notice, yet that reliably mislead the machine? The answer bears directly on privacy, security, and how far we can trust artificial intelligence in high-stakes settings.

How computers read the way we walk

Modern gait recognition relies on deep learning, the same family of techniques behind face recognition and self-driving cars. Instead of looking at a single snapshot, these systems combine many frames of a walking person into a single “gait energy image,” a kind of blurred silhouette that captures how body parts move over a full step cycle. A custom-built neural network then learns to distinguish one person’s gait from another’s, even when clothing or carried items change. In tests on two major research collections of walking videos (the CASIA-B and OU-ISIR datasets), the authors’ baseline model correctly identified people in more than 97% of cases. That is strong performance, and it might suggest the technology is ready for real-world deployment.
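The “blurred silhouette” idea can be made concrete. A gait energy image is commonly computed as the pixel-wise average of aligned binary silhouettes over one gait cycle, G(x, y) = (1/N) Σₜ Bₜ(x, y). The sketch below assumes the silhouettes have already been segmented, size-normalized, and centered (the paper’s exact preprocessing may differ), and uses plain nested lists so it runs without extra libraries. Pixels that stay “on” in every frame, such as the torso, average to 1, while pixels swept by swinging limbs take intermediate values.

```python
from typing import List, Sequence

# One binary silhouette frame: rows of 0/1 pixels (assumed already aligned).
Frame = Sequence[Sequence[int]]


def gait_energy_image(frames: Sequence[Frame]) -> List[List[float]]:
    """Average aligned binary silhouettes pixel by pixel over one gait cycle."""
    if not frames:
        raise ValueError("need at least one silhouette frame")
    height, width = len(frames[0]), len(frames[0][0])
    for f in frames:
        if len(f) != height or any(len(row) != width for row in f):
            raise ValueError("all frames must share the same size")
    n = len(frames)
    return [
        [sum(f[y][x] for f in frames) / n for x in range(width)]
        for y in range(height)
    ]


# Toy 2x3 example: the centre column (a stand-in for the torso) is on in
# every frame, while the bottom-row "leg" pixels flicker as the walker moves.
frames = [
    [[0, 1, 0], [1, 1, 0]],
    [[0, 1, 0], [0, 1, 1]],
]
gei = gait_energy_image(frames)
print(gei)  # [[0.0, 1.0, 0.0], [0.5, 1.0, 0.5]]
```

Averaging throws away frame order but keeps where and how often each body part appears, which is why a single compact image can summarize a whole step cycle.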

The invisible patches that fool smart cameras

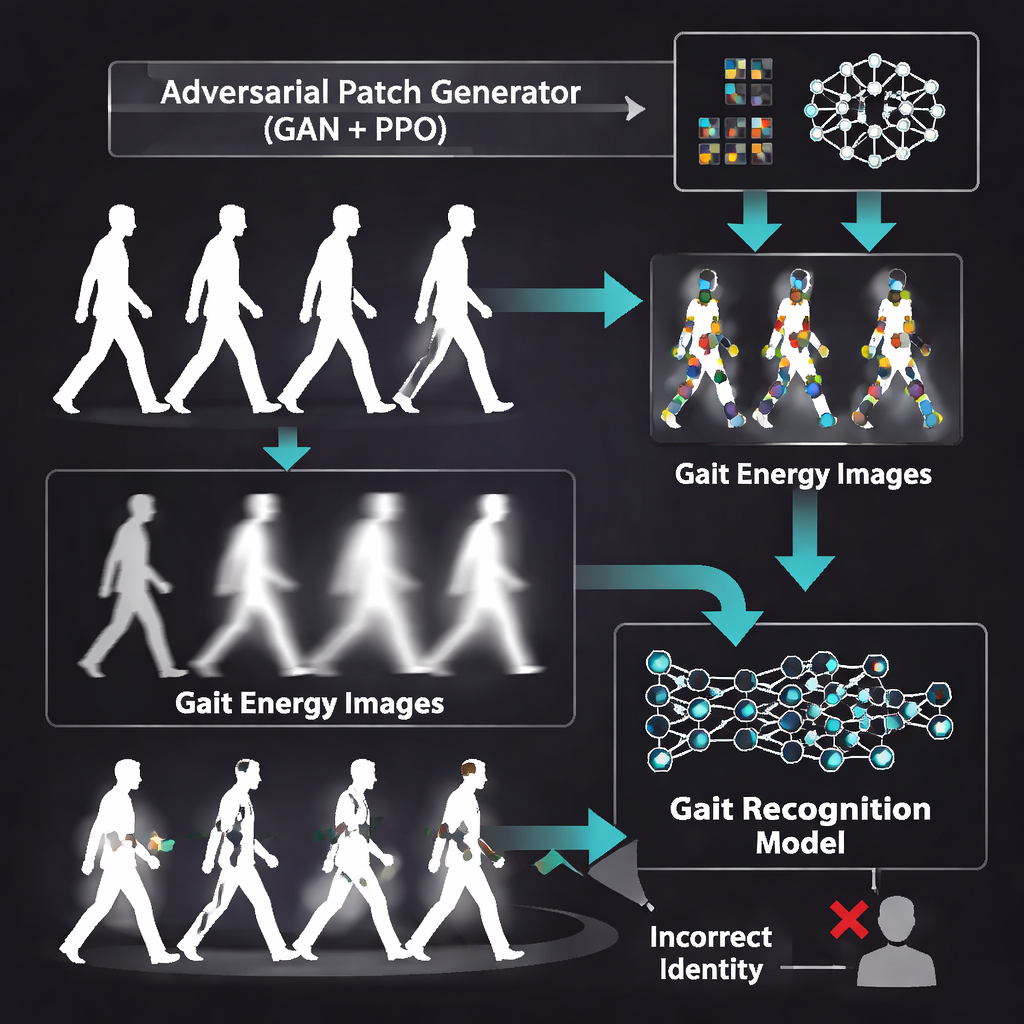

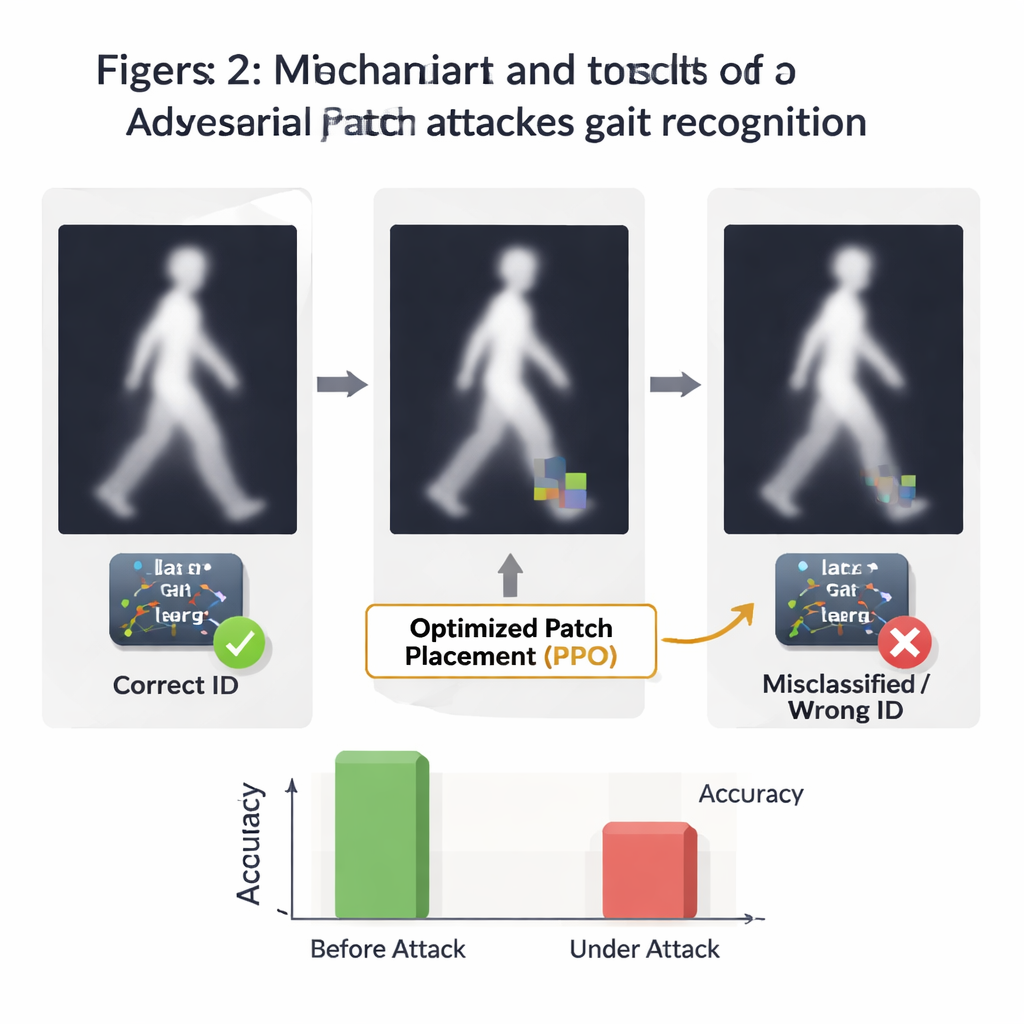

The heart of the paper is not building a better ID system but deliberately breaking one to expose its weaknesses. The researchers generate small “adversarial patches”: square regions of altered pixels that look harmless yet are mathematically tuned to confuse the neural network. To create these patches, they use a Generative Adversarial Network (GAN), a kind of AI that learns to produce realistic-looking images by competing against a built-in critic. The GAN is trained directly on gait energy images so that its output blends naturally into the ghostly silhouettes. These patches are designed to be subtle enough that a human glancing at the walking pattern would likely not notice anything odd.
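Whatever produces the patch, attacking an image ultimately means pasting a small square of pixels into it. The sketch below shows only that overlay step. It assumes gait energy image values lie in [0, 1] and that the patch simply replaces the pixels it covers; the function name `apply_patch` is hypothetical, and the hard-coded patch stands in for what the paper’s GAN generator would output.

```python
from typing import List, Sequence

Image = List[List[float]]


def apply_patch(image: Sequence[Sequence[float]],
                patch: Sequence[Sequence[float]],
                top: int, left: int) -> Image:
    """Return a copy of `image` with the square `patch` pasted at (top, left).

    Patch values are clipped to [0, 1] so the result is still a valid
    gait energy image; the clean input is left unchanged.
    """
    h, w = len(image), len(image[0])
    p = len(patch)
    if any(len(row) != p for row in patch):
        raise ValueError("patch must be square")
    if not (0 <= top <= h - p and 0 <= left <= w - p):
        raise ValueError("patch does not fit at this position")
    out = [list(row) for row in image]  # copy so the original image is untouched
    for dy in range(p):
        for dx in range(p):
            out[top + dy][left + dx] = min(1.0, max(0.0, patch[dy][dx]))
    return out


# Hypothetical 2x2 patch (in the study this would come from the GAN).
clean = [[0.0] * 3 for _ in range(3)]
attacked = apply_patch(clean, [[0.2, 1.5], [-0.1, 0.7]], top=1, left=1)
print(attacked)  # [[0.0, 0.0, 0.0], [0.0, 0.2, 1.0], [0.0, 0.0, 0.7]]
```

Keeping the overlay separate from the generator makes it easy to try the same patch at many positions, which is exactly the search the placement agent performs.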

Letting a learning agent find the weak spots

Where a patch is placed can matter as much as how it looks. To discover the most damaging locations, the authors turn to a reinforcement learning method called Proximal Policy Optimization (PPO). They treat each gait image like a grid-shaped environment and let a software “agent” move the patch around (up, down, left, or right) while watching how much the recognition system’s confidence drops. When a position causes the model to misidentify the person, the agent is rewarded; when the placement fails to fool the model, the agent is penalized. Over many episodes, the agent learns a policy for placing patches in especially vulnerable regions of the gait image, often near moving body parts that the model relies on most.
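The setup described above can be sketched as a tiny grid environment plus the objective PPO optimizes. This is an illustration under stated assumptions, not the authors’ code: the state is just the patch’s top-left cell, `is_fooled` is a hypothetical stand-in for “paste the patch and check whether the classifier now names the wrong person,” and the reward values (+1 for success, -0.05 otherwise) are invented for the example. The second function is PPO’s standard clipped surrogate objective, mean(min(r·A, clip(r, 1-ε, 1+ε)·A)), where r is the ratio of new to old action probabilities and A is the advantage; a full agent would additionally need a policy network and advantage estimation, which are omitted here.

```python
from typing import Callable, Dict, List, Tuple

Position = Tuple[int, int]

# The four moves described in the summary, as (row, column) offsets.
MOVES: Dict[str, Position] = {
    "up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1),
}


class PatchPlacementEnv:
    """Toy grid environment whose state is the patch's top-left cell.

    `is_fooled(row, col)` is a hypothetical stand-in for: paste the patch at
    this cell, run the gait classifier, and report whether it now names the
    wrong person. The reward values are illustrative assumptions.
    """

    def __init__(self, grid_h: int, grid_w: int,
                 is_fooled: Callable[[int, int], bool],
                 success_reward: float = 1.0, miss_penalty: float = -0.05):
        self.grid_h, self.grid_w = grid_h, grid_w
        self.is_fooled = is_fooled
        self.success_reward = success_reward
        self.miss_penalty = miss_penalty
        self.pos: Position = (0, 0)

    def reset(self, start: Position = (0, 0)) -> Position:
        self.pos = start
        return self.pos

    def step(self, action: str) -> Tuple[Position, float, bool]:
        """Move one cell (clamped to the grid); return (state, reward, done)."""
        dr, dc = MOVES[action]
        row = min(max(self.pos[0] + dr, 0), self.grid_h - 1)
        col = min(max(self.pos[1] + dc, 0), self.grid_w - 1)
        self.pos = (row, col)
        if self.is_fooled(row, col):
            return self.pos, self.success_reward, True  # misidentification: reward, episode ends
        return self.pos, self.miss_penalty, False       # attack failed here: small penalty


def ppo_clipped_objective(ratios: List[float], advantages: List[float],
                          eps: float = 0.2) -> float:
    """PPO's clipped surrogate objective (maximised during training).

    ratios[i] = pi_new(a_i | s_i) / pi_old(a_i | s_i); advantages[i] = A_i.
    Clipping the ratio to [1 - eps, 1 + eps] keeps a single update from
    moving the policy too far from the one that collected the data.
    """
    terms = [min(r * a, min(max(r, 1 - eps), 1 + eps) * a)
             for r, a in zip(ratios, advantages)]
    return sum(terms) / len(terms)


# Usage: a 3x3 grid where only cell (1, 2) fools the classifier.
env = PatchPlacementEnv(3, 3, is_fooled=lambda r, c: (r, c) == (1, 2))
env.reset()
for move in ["up", "down", "right", "right"]:
    print(move, env.step(move))
# The final move reaches (1, 2) and returns reward 1.0 with done=True.
```

The clipping is what distinguishes PPO from plain policy-gradient methods: it lets the agent reuse each batch of patch-placement episodes for several updates without the policy changing erratically.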

What happens when the attack is unleashed

After training both the patch generator and the placement strategy, the team attacks their own high-performing gait recognizer. Under normal conditions, the system shows excellent accuracy, few false alarms, and a clear separation between correct and incorrect matches. Once adversarial patches are added, however, performance drops sharply. Depending on how aggressively the patch is allowed to move across the image, attack success rates climb above 60%, and the fraction of correctly identified people can fall to nearly one third of its original level. Curves that once showed near-perfect discrimination between genuine users and impostors sag toward the line of random guessing, revealing how easily the model can be pushed off course without visible distortions.
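The numbers in this section come from a few standard evaluation measures. The sketch below shows one common way to compute them; the paper’s exact definitions may differ, so treat these as assumptions. Here the attack success rate counts only samples the model identified correctly before the attack, and the area under the genuine-versus-impostor curve is computed as the probability that a genuine match scores higher than an impostor (ties count half). An area of 1.0 means perfect separation; 0.5 is the “line of random guessing.” All inputs are made-up toy values.

```python
from typing import Sequence


def accuracy(preds: Sequence[int], labels: Sequence[int]) -> float:
    """Fraction of samples whose predicted identity matches the true one."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def attack_success_rate(clean_preds: Sequence[int], adv_preds: Sequence[int],
                        labels: Sequence[int]) -> float:
    """Share of originally correct samples that the patch turns into errors."""
    targets = [(a, y) for c, a, y in zip(clean_preds, adv_preds, labels) if c == y]
    if not targets:
        return 0.0
    return sum(a != y for a, y in targets) / len(targets)


def roc_auc(genuine: Sequence[float], impostor: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison (Mann-Whitney form)."""
    wins = 0.0
    for g in genuine:
        for i in impostor:
            if g > i:
                wins += 1.0
            elif g == i:
                wins += 0.5  # a tie counts as half a correct ranking
    return wins / (len(genuine) * len(impostor))


# Toy data: four walkers, identity labels 0..3.
labels = [0, 1, 2, 3]
clean = [0, 1, 2, 0]      # three correct before the attack
patched = [0, 2, 1, 0]    # only one correct after the attack
print(accuracy(clean, labels), accuracy(patched, labels))  # 0.75 0.25
print(attack_success_rate(clean, patched, labels))         # 0.6666666666666666
print(roc_auc([0.9, 0.8], [0.1, 0.2]))                     # 1.0
print(roc_auc([0.5, 0.3], [0.4, 0.5]))                     # 0.375
```

Reporting accuracy, attack success rate, and curve area together matters because each can hide a different failure: accuracy alone does not show whether the errors were caused by the patch, and a single operating point does not show how the genuine and impostor score distributions have shifted.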

What this means for everyday security

For non-specialists, the takeaway is clear: a gait recognition system that appears highly accurate in the lab can be surprisingly fragile in the face of clever, machine-designed tampering. The study shows that combining generative image tools with trial-and-error learning can produce tiny, nearly invisible changes that cause serious identification errors. Rather than a blueprint for real-world abuse, the work is meant as a warning and a testing framework. It gives system designers a way to probe and measure how vulnerable their models are, and it underscores the need to build defenses against such attacks before gait recognition is widely trusted for surveillance, access control, or other critical applications.

Citation: Saoudi, E.M., Jaafari, J. & Jai Andaloussi, S. Evaluating gait system vulnerabilities through PPO and GAN-generated adversarial attacks. Sci Rep 16, 6039 (2026). https://doi.org/10.1038/s41598-026-37011-1

Keywords: gait recognition, biometric security, adversarial attacks, deep learning, reinforcement learning