Clear Sky Science

Real-world performance of the AI diagnostic system IDx-DR in the diagnosis of diabetic retinopathy and its main confounders

Why this new eye test matters

For people living with diabetes, vision loss from eye damage can creep in silently and permanently. Regular eye checks prevent many cases of blindness, but there are not enough eye doctors to examine everyone as often as needed. This study looked at a fully automated artificial intelligence (AI) system, called IDx-DR, to see how well it can spot diabetic eye disease in everyday clinical practice, and what real-world obstacles still stand in the way.

A growing need for fast eye checks

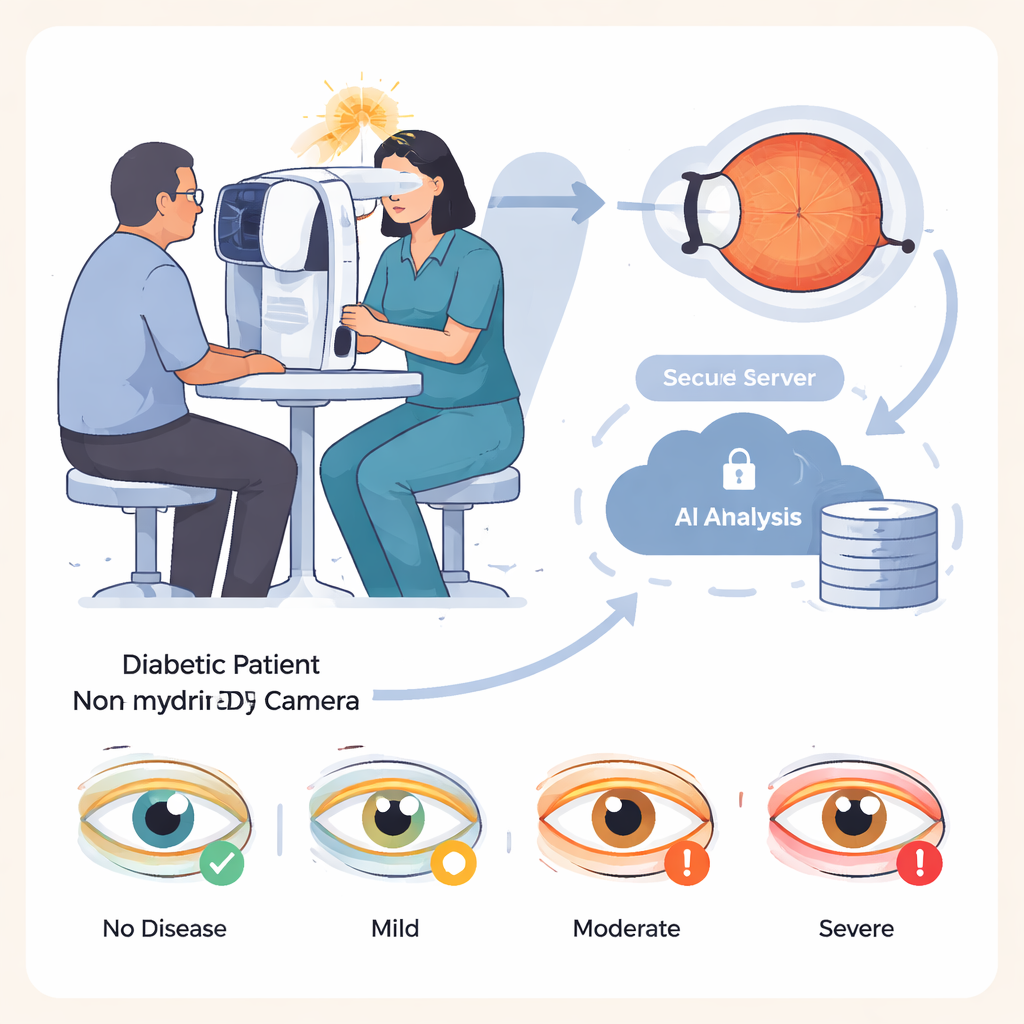

Diabetes is on the rise worldwide, and roughly one in three people with diabetes develops damage to the light-sensitive tissue at the back of the eye, a condition known as diabetic retinopathy. Caught early, this damage can be treated to greatly reduce the risk of blindness. The challenge is that screening millions of people requires time, training, and expensive equipment. IDx-DR aims to ease this burden: nurses or trained assistants take photographs of the retina with a special camera, and the images are sent to cloud-based software that automatically classifies the eye as having no, mild, moderate, or severe disease, without an eye doctor on site.

Putting the AI system to the test

The researchers evaluated IDx-DR in 875 patients with diabetes treated at a specialized hospital in Germany. The group was broad, including children as young as 8 and adults up to 92 years old, and both major types of diabetes. For each person, assistants took four retinal photographs in a darkened room, without using eye drops to enlarge the pupils, to mimic a typical primary-care screening visit. The AI system analyzed these images and produced a single diagnosis per patient, based on the more severely affected eye. All patients also received a full eye examination by experienced ophthalmologists using dilating drops, which served as the gold-standard comparison, and the stored photographs were later graded by ophthalmologists who did not know the AI results.
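The "one diagnosis per patient" rule can be illustrated with a small sketch. It assumes the four severity levels named earlier are ordered from none to severe and that the patient-level result is simply the higher of the two eye grades. The names `Grade` and `patient_grade` are hypothetical and are not part of IDx-DR or the study.

```python
from enum import IntEnum


class Grade(IntEnum):
    """Four ordered severity levels (an assumed encoding for illustration)."""
    NONE = 0
    MILD = 1
    MODERATE = 2
    SEVERE = 3


def patient_grade(left_eye: Grade, right_eye: Grade) -> Grade:
    """Return the patient-level grade: the more severely affected eye wins."""
    return max(left_eye, right_eye)


# Hypothetical example: mild disease in one eye, severe in the other.
result = patient_grade(Grade.MILD, Grade.SEVERE)
print(result.name)
```

Grading by the worse eye is the cautious choice for screening: a patient is referred as soon as either eye shows a problem.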

How well did the AI recognize disease?

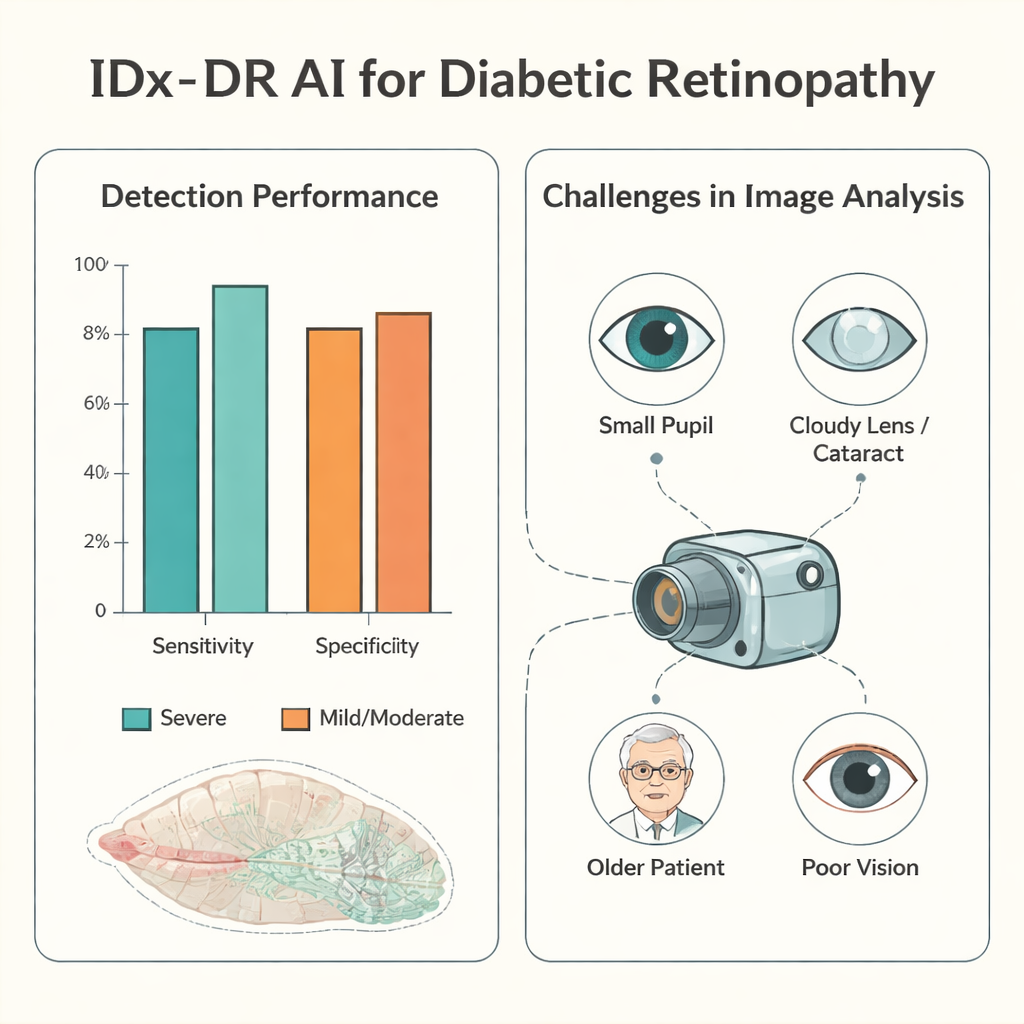

When good-quality photographs were available, the AI performed especially well for the most dangerous cases. For severe diabetic eye disease, its sensitivity (the share of truly affected patients it correctly flagged) was about 94%, and its specificity (the share of patients without severe disease whom it correctly cleared) was about 90%. In more than half of patients with analyzable images, the AI’s four-level grading exactly matched the doctors’ dilated eye exam. When it disagreed, it tended to err on the side of caution: it overestimated the severity of disease more often than it missed serious problems. Underestimating the severity, which could delay needed treatment, occurred in less than 5% of patients with usable images, and very rarely in those with truly severe disease.
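For readers who want to see how sensitivity and specificity are calculated, here is a minimal sketch. The patient counts are invented purely for illustration and are not taken from the study; they were chosen only so that the arithmetic lands on 94% and 90%. The function name `sensitivity_specificity` is likewise hypothetical.

```python
def sensitivity_specificity(tp: int, fn: int, tn: int, fp: int) -> tuple[float, float]:
    """Compute sensitivity and specificity from confusion-matrix counts.

    tp: patients with severe disease whom the AI flagged (true positives)
    fn: patients with severe disease whom the AI missed (false negatives)
    tn: patients without severe disease whom the AI cleared (true negatives)
    fp: patients without severe disease whom the AI flagged (false positives)
    """
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return sensitivity, specificity


# Hypothetical counts for illustration only (NOT the study's data).
sens, spec = sensitivity_specificity(tp=47, fn=3, tn=450, fp=50)
print(f"sensitivity = {sens:.0%}, specificity = {spec:.0%}")
```

In this made-up example, 47 of 50 patients with severe disease are flagged, and 450 of 500 patients without it are cleared. The two measures answer different questions, which is why a screening tool is usually reported with both.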

The hidden hurdles: getting usable pictures

The major weak point was not the AI’s decision-making but the practicality of obtaining images it could interpret. In roughly one in ten patients, the staff could not capture a retinal photograph at all, and in about one in four, the AI judged the images too poor to analyze. The study probed why. Pupil size was a key factor: patients whose pupils were narrower than 3 millimeters had far fewer usable images. Older age, cloudy eye lenses (cataracts), existing diabetic swelling in the retina, and low visual acuity also made photography and analysis harder. Even the person taking the pictures mattered. With training and experience, an examiner’s rate of unusable images dropped sharply and the time needed per patient fell, but after a long break from practice, performance slipped again.

What this means for future eye care

For lay readers, the main message is that autonomous AI can safely help identify people with advanced diabetic eye damage, especially where eye doctors are scarce. However, its usefulness depends heavily on clear retinal photographs, which are harder to obtain in older patients, those with small pupils or cataracts, or in rushed, understaffed settings. The study suggests that better camera protocols, careful training of staff, and possibly selective use of pupil-dilating drops could greatly improve the system’s real-world impact. For now, IDx-DR looks promising as a triage tool to prioritize who needs to see an eye specialist soonest, rather than as a complete replacement for human eye exams.

Citation: Hunfeld, E., Tayar, A., Paul, S. et al. Real-world performance of the AI diagnostic system IDx-DR in the diagnosis of diabetic retinopathy and its main confounders. Sci Rep 16, 4349 (2026). https://doi.org/10.1038/s41598-026-36970-9

Keywords: diabetic retinopathy, artificial intelligence, retinal imaging, medical screening, eye health