Clear Sky Science · en

QLSA-MOEAD integration for precision task scheduling in heterogeneous computing environments

Why smarter computer scheduling matters

From earthquake simulations to space telescopes, today’s science runs on sprawling computer systems that mix many kinds of chips—traditional CPUs, graphics processors, and reconfigurable hardware. Deciding which chip should run which piece of work, and in what order, is surprisingly hard and can waste time and energy if done poorly. This paper presents a new way to orchestrate these complex workloads so that big jobs finish faster, use the hardware more fully, and, in some cases, consume less power.

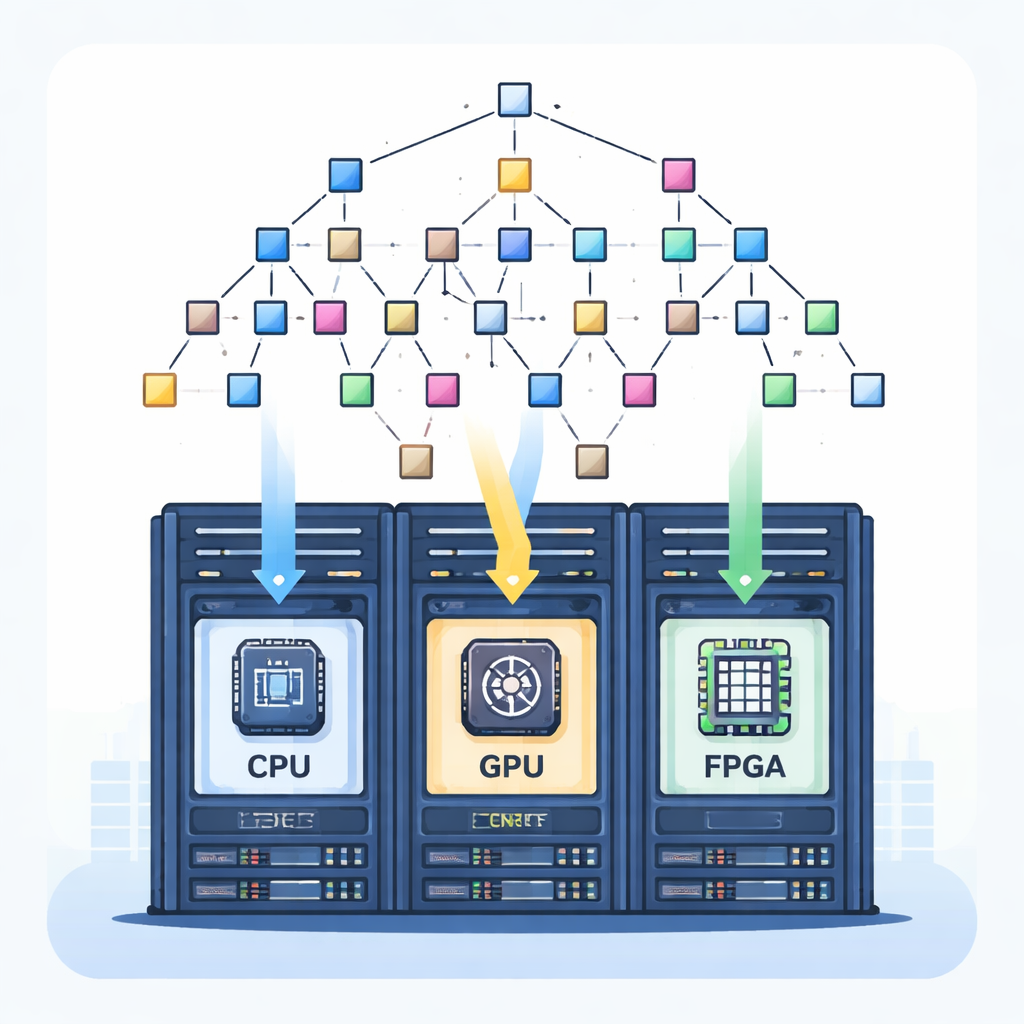

Different chips, tangled jobs

Modern high-performance computers are “heterogeneous”: they combine CPUs, GPUs, FPGAs and other accelerators, each with distinct strengths. Scientific and industrial applications often break their work into many small tasks connected by data dependencies, which naturally form a Directed Acyclic Graph (DAG). Some tasks must finish before others can start, and a task may run faster or slower depending on the chip it lands on. The challenge is to assign hundreds of interdependent tasks to a mix of processors so that the overall completion time (the “makespan”) is short, the machines are kept busy rather than idle, and, for certain workflows, energy use stays in check. The problem is NP-hard: no efficient exact algorithm is known, and the number of possible schedules grows so quickly that brute-force search is infeasible for realistic systems.
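To make “completion time” concrete, here is a minimal sketch of how one fixed schedule on a DAG can be evaluated. It assumes a toy model that is not taken from the study: each task has a known run time on each processor, a transfer delay is paid only when a parent and its child run on different processors, and tasks are dispatched in a given topological order. The function name `makespan` and the diamond-shaped example are hypothetical.

```python
from collections import defaultdict


def makespan(order, assign, exec_time, edges, comm):
    """Simulate a static schedule and return the time the last task finishes.

    order     -- tasks in a valid topological (dispatch) order
    assign    -- dict: task -> processor
    exec_time -- dict: (task, processor) -> run time on that processor
    edges     -- list of (parent, child) dependencies
    comm      -- dict: (parent, child) -> transfer delay, paid only across processors
    """
    parents = defaultdict(list)
    for u, v in edges:
        parents[v].append(u)
    proc_free = defaultdict(float)  # when each processor next becomes idle
    finish = {}
    for t in order:
        p = assign[t]
        # A task starts once its processor is idle and all parent outputs have arrived.
        data_ready = max(
            (finish[u] + (comm.get((u, t), 0.0) if assign[u] != p else 0.0)
             for u in parents[t]),
            default=0.0,
        )
        finish[t] = max(data_ready, proc_free[p]) + exec_time[(t, p)]
        proc_free[p] = finish[t]
    return max(finish.values())


# Hypothetical diamond DAG: A feeds B and C, which both feed D.
EDGES = [("A", "B"), ("A", "C"), ("B", "D"), ("C", "D")]
EXEC = {("A", "cpu"): 2, ("A", "gpu"): 3, ("B", "cpu"): 4, ("B", "gpu"): 1,
        ("C", "cpu"): 3, ("C", "gpu"): 2, ("D", "cpu"): 2, ("D", "gpu"): 2}
COMM = {e: 1.0 for e in EDGES}
ORDER = ["A", "B", "C", "D"]

mixed = makespan(ORDER, {"A": "cpu", "B": "gpu", "C": "cpu", "D": "cpu"}, EXEC, EDGES, COMM)
cpu_only = makespan(ORDER, dict.fromkeys(ORDER, "cpu"), EXEC, EDGES, COMM)
print(mixed, cpu_only)  # 7.0 11.0
```

In this toy instance, sending task B to the faster GPU finishes the whole graph at time 7, versus 11 when everything stays on the CPU, even after paying the transfer delays.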

Why older methods fall short

Traditional scheduling approaches often assume a stable environment and focus on a single goal, such as minimizing completion time. Well-known heuristics like HEFT (Heterogeneous Earliest Finish Time) rank tasks by priority and place each one on the processor that would finish it earliest, while metaheuristics such as simulated annealing or tabu search roam the space of possible schedules looking for improvements. These methods can work well on smaller or simpler systems, but the metaheuristics typically start from random initial schedules, and neither family adapts once conditions change or copes well with several goals at once, such as completion time, hardware load balance, and energy. Recent machine-learning-based schedulers add adaptivity, but they usually require large training datasets and still lack a principled way to produce a full set of trade-off solutions for multiple objectives.
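HEFT's priority is usually the “upward rank”: a task's average run time across processors plus the costliest path from it to the end of the graph. The sketch below computes only this ranking phase; the second phase, which places each task on the processor giving its earliest finish time, is omitted. It assumes a single communication cost per edge standing in for the average transfer cost, and the example numbers are hypothetical.

```python
def upward_ranks(tasks, edges, exec_time, procs, comm):
    """HEFT upward rank: mean run time plus the costliest path to an exit task."""
    children = {t: [] for t in tasks}
    for u, v in edges:
        children[u].append(v)
    rank = {}

    def rank_u(t):
        if t not in rank:  # memoize so shared descendants are computed once
            mean_cost = sum(exec_time[(t, p)] for p in procs) / len(procs)
            tail = max((comm.get((t, c), 0.0) + rank_u(c) for c in children[t]),
                       default=0.0)
            rank[t] = mean_cost + tail
        return rank[t]

    for t in tasks:
        rank_u(t)
    return rank


TASKS = ["A", "B", "C", "D"]
EDGES = [("A", "B"), ("A", "C"), ("B", "D"), ("C", "D")]
EXEC = {("A", "cpu"): 2, ("A", "gpu"): 3, ("B", "cpu"): 4, ("B", "gpu"): 1,
        ("C", "cpu"): 3, ("C", "gpu"): 4, ("D", "cpu"): 2, ("D", "gpu"): 2}
COMM = {e: 1.0 for e in EDGES}

ranks = upward_ranks(TASKS, EDGES, EXEC, ["cpu", "gpu"], COMM)
priority = sorted(TASKS, key=lambda t: ranks[t], reverse=True)
print(priority)  # ['A', 'C', 'B', 'D']
```

When all costs are positive, a parent's rank always exceeds its children's, so sorting by decreasing upward rank also yields a valid topological order.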

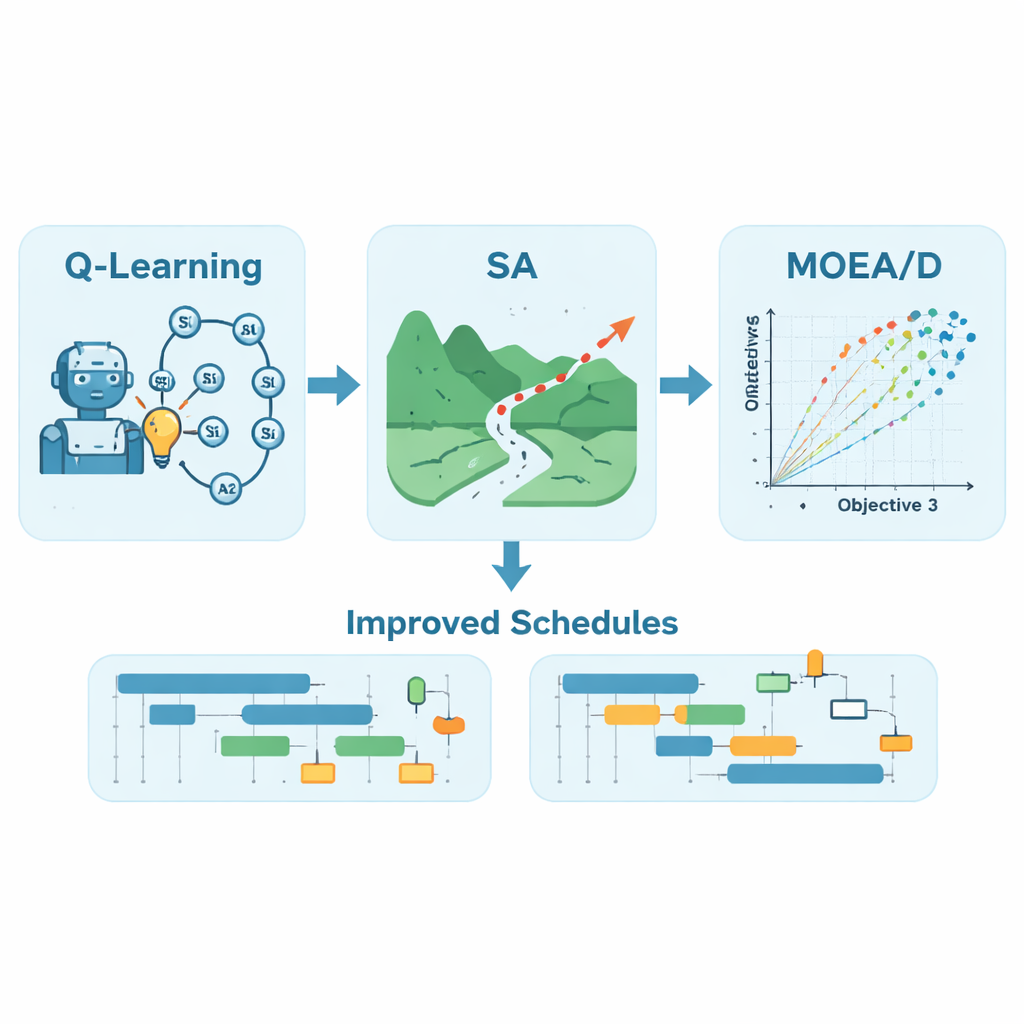

A hybrid learner that plans and refines

The authors propose QLSA-MOEAD, a hybrid framework that blends three ideas: Q-learning, simulated annealing, and a multi-objective evolutionary technique called MOEA/D. First, a Q-learning agent is trained to build task orders by trial and error. It repeatedly constructs schedules, observes how long they take to finish, and updates a table of “Q-values” that capture which choices tend to lead to better outcomes. Instead of relying on fixed rules, the agent gradually learns good patterns for mapping tasks to processors, including how to react when new tasks appear during execution. Using this learned policy, the system generates a strong initial schedule rather than a random one, giving the optimization process a head start.
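The trial-and-error loop can be illustrated with textbook tabular Q-learning. This sketch is not the authors' formulation: the state (the sequence of (task, processor) decisions made so far), the actions (any ready task paired with any processor), the reward (minus the increase in makespan at each step, so an episode's rewards sum to minus its makespan), the learning parameters, and the toy instance are all assumptions chosen for brevity.

```python
import random
from collections import defaultdict

# Hypothetical toy instance: diamond DAG A -> {B, C} -> D on two processors.
TASKS = ["A", "B", "C", "D"]
EDGES = [("A", "B"), ("A", "C"), ("B", "D"), ("C", "D")]
PROCS = ["cpu", "gpu"]
EXEC = {("A", "cpu"): 2, ("A", "gpu"): 3, ("B", "cpu"): 4, ("B", "gpu"): 1,
        ("C", "cpu"): 3, ("C", "gpu"): 2, ("D", "cpu"): 2, ("D", "gpu"): 2}
COMM = 1.0  # assumed delay when a parent and child run on different processors
PARENTS = {t: [u for u, v in EDGES if v == t] for t in TASKS}


def legal_actions(history):
    """(task, processor) pairs for unscheduled tasks whose parents are all scheduled."""
    done = {t for t, _ in history}
    ready = [t for t in TASKS if t not in done and all(u in done for u in PARENTS[t])]
    return [(t, p) for t in ready for p in PROCS]


def run_episode(Q, eps, alpha, rng):
    """Build one full schedule epsilon-greedily, updating Q; return its makespan."""
    history, finish, where = (), {}, {}
    free = {p: 0.0 for p in PROCS}
    span = 0.0
    while len(history) < len(TASKS):
        acts = legal_actions(history)
        if rng.random() < eps:
            a = rng.choice(acts)                                # explore
        else:
            a = max(acts, key=lambda x: Q[(history, x)])        # exploit
        t, p = a
        ready = max((finish[u] + (COMM if where[u] != p else 0.0) for u in PARENTS[t]),
                    default=0.0)
        finish[t] = max(ready, free[p]) + EXEC[(t, p)]
        free[p], where[t] = finish[t], p
        reward = span - max(span, finish[t])    # minus the growth of the makespan
        span = max(span, finish[t])
        nxt = history + (a,)
        future = max((Q[(nxt, b)] for b in legal_actions(nxt)), default=0.0)
        # Q-learning update, undiscounted: an episode's rewards sum to -makespan.
        Q[(history, a)] += alpha * (reward + future - Q[(history, a)])
        history = nxt
    return span


def train(episodes=5000, eps=0.3, alpha=0.5, seed=0):
    rng = random.Random(seed)
    Q = defaultdict(float)   # a zero start is optimistic, since rewards are <= 0
    for _ in range(episodes):
        run_episode(Q, eps, alpha, rng)
    # Greedy rollout with learning switched off reads out the learned schedule.
    return run_episode(Q, eps=0.0, alpha=0.0, rng=rng)
```

Calling `train()` returns the makespan of the greedily read-out schedule; for this toy instance the best achievable value is 7. Encoding the state as the full decision history keeps the example exact, but the number of histories explodes with the number of tasks, so a practical scheduler would need a far more compact state.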

Fine-tuning and balancing competing goals

Next, simulated annealing nudges the learned schedule by swapping pairs of tasks and occasionally accepting worse options to escape local dead ends, much like shaking a puzzle so the pieces settle into a better configuration. Finally, MOEA/D treats the scheduling problem as genuinely multi-objective. Instead of collapsing all aims into a single score, it decomposes the problem into many subproblems, each representing a different trade-off between finishing soon and keeping processors evenly loaded (and, for a seismic hazard workflow called CyberShake, also lowering energy use). An evolutionary process explores these trade-offs in parallel, exchanging information among neighboring subproblems to produce a diverse “Pareto front”: a set of schedules in which no goal can be improved without worsening another.
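Both mechanisms in this paragraph fit in a few lines each. The sketch shows (1) simulated annealing with a pair-swap move and the standard Metropolis acceptance rule, run on a stand-in cost (total weighted completion time of jobs on one machine) rather than a real DAG schedule, and (2) the MOEA/D decomposition pieces for two objectives: evenly spaced weight vectors, a neighborhood of nearby weights for each subproblem, the Tchebycheff scalarization commonly used with MOEA/D, and the rule letting a new solution replace a neighbor it beats on that neighbor's subproblem. The study's move set, cooling schedule, scalarization, and parameters are not given here, so every name and number below is an assumption.

```python
import math
import random

# ---- (1) Simulated annealing with pair swaps --------------------------------
# Hypothetical stand-in cost: jobs run back to back on one machine, and each
# job's completion time is weighted by its importance.
TIMES = {"A": 3, "B": 1, "C": 4, "D": 2}
WEIGHTS = {"A": 1, "B": 3, "C": 2, "D": 1}


def toy_cost(order):
    clock = total = 0
    for job in order:
        clock += TIMES[job]
        total += WEIGHTS[job] * clock
    return total


def anneal(order, cost, t0=10.0, cooling=0.95, steps=500, seed=0):
    rng = random.Random(seed)
    cur, cur_cost = list(order), cost(order)
    best, best_cost = cur, cur_cost
    temp = t0
    for _ in range(steps):
        i, j = rng.sample(range(len(cur)), 2)    # pick two positions to swap
        cand = list(cur)
        cand[i], cand[j] = cand[j], cand[i]
        delta = cost(cand) - cur_cost
        # Metropolis rule: keep improvements; keep worse moves with prob e^(-delta/T).
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            cur, cur_cost = cand, cur_cost + delta
            if cur_cost < best_cost:
                best, best_cost = cur, cur_cost
        temp *= cooling                           # geometric cooling
    return best, best_cost


# ---- (2) MOEA/D decomposition for two minimized objectives ------------------
def weight_vectors(n):
    """n evenly spaced weight vectors, one per subproblem (n >= 2)."""
    return [(i / (n - 1), 1 - i / (n - 1)) for i in range(n)]


def neighborhoods(weights, k):
    """For each subproblem, indices of the k closest weight vectors (itself included)."""
    return [sorted(range(len(weights)), key=lambda j: math.dist(w, weights[j]))[:k]
            for w in weights]


def tchebycheff(f, w, z_star):
    """Scalarized cost of objectives f on subproblem w; z_star is the ideal point."""
    return max(wi * abs(fi - zi) for wi, fi, zi in zip(w, f, z_star))


def update_neighbors(pop_f, new_f, i, B, W, z_star):
    """Let a new solution replace neighbors of subproblem i that it beats."""
    for j in B[i]:
        if tchebycheff(new_f, W[j], z_star) <= tchebycheff(pop_f[j], W[j], z_star):
            pop_f[j] = new_f
```

Here the population is tracked only by objective vectors; a full implementation would store the schedules alongside them and update the ideal point `z_star` whenever a better value of either objective is found.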

Putting the method to the test

To gauge performance, QLSA-MOEAD was tested on 20 workflow cases, including synthetic Fast Fourier Transform and molecular workloads, a large astronomical image-stitching workflow (Montage), and the real-world CyberShake earthquake simulation. Across 16 synthetic cases, the new method delivered the best solution quality in 14, shortening completion times and improving hardware utilization compared with several advanced baselines. For CyberShake, where energy was also optimized, it achieved two- to four-fold improvements in a standard multi-objective quality measure over the previous state of the art, while keeping a good spread of trade-off solutions. In dynamic tests where new tasks arrive on the fly, the learned scheduler could react in under two milliseconds, adjusting plans much faster than recomputing everything from scratch, though sometimes at the cost of reduced optimality when communication delays were extreme.
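The summary does not name the multi-objective quality measure. One widely used choice is the hypervolume indicator: the region of objective space that a set of solutions dominates, measured up to a fixed reference point, so a front that is both closer to the ideal and more spread out scores higher. As an illustration only, and assuming just two objectives to be minimized (the CyberShake experiments also included energy), it can be computed with a simple sweep:

```python
def hypervolume_2d(points, ref):
    """Area dominated by 2-objective points (both minimized) and bounded by ref."""
    # Ignore points that do not beat the reference point in both objectives.
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, lowest_f2 = 0.0, ref[1]
    for f1, f2 in pts:              # sweep in order of increasing first objective
        if f2 < lowest_f2:          # dominated points add no new area
            area += (ref[0] - f1) * (lowest_f2 - f2)
            lowest_f2 = f2
    return area


front = [(1, 3), (2, 2), (3, 1)]
print(hypervolume_2d(front, ref=(4, 4)))               # 6.0
print(hypervolume_2d(front + [(2, 3.5)], ref=(4, 4)))  # 6.0, the extra point is dominated
```

The score depends on the reference point, so comparisons between methods only make sense when they share the same one.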

What this means for everyday computing

For a non-specialist, the message is that smarter, learning-based schedulers can make large, mixed-chip computers both faster and greener without constant human tuning. By combining an experience-based planner (Q-learning), a careful local search (simulated annealing), and a trade-off explorer (MOEA/D), the proposed framework consistently finds schedules that finish big jobs sooner, keep expensive hardware better utilized, and, for some applications, cut energy use. While there are still limits—such as training cost and performance drops in the most extreme conditions—the study shows a practical path toward more autonomous, efficient orchestration of complex scientific and industrial workflows.

Citation: Saad, A., Abd el-Raouf, O., Hadhoud, M. et al. QLSA-MOEAD integration for precision task scheduling in heterogeneous computing environments. Sci Rep 16, 7194 (2026). https://doi.org/10.1038/s41598-026-36916-1

Keywords: task scheduling, heterogeneous computing, reinforcement learning, multi-objective optimization, energy-efficient workflows