Clear Sky Science

Causal inference shapes crossmodal postdiction in multisensory integration

How Later Sights and Sounds Rewrite What We Just Experienced

Think back to a moment on a busy street when you noticed a friend calling your name and suddenly realized they had been shouting for a while. It can feel as if your mind goes back in time and revises what you heard and saw a moment earlier. This study explores how the brain combines input from the eyes and ears over a short time window, and it shows that later sights and sounds can change what we perceive as having happened a fraction of a second before.

A Strange Trick of Flashes and Beeps

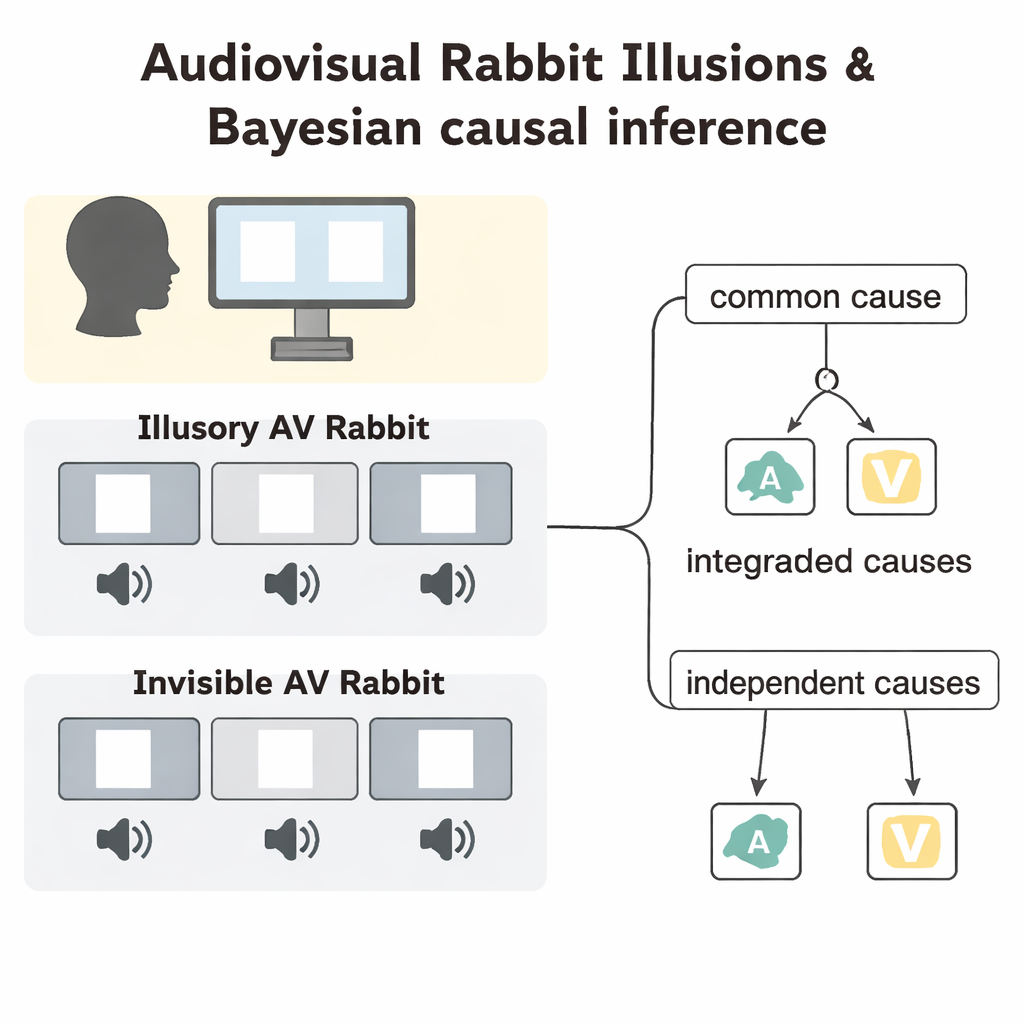

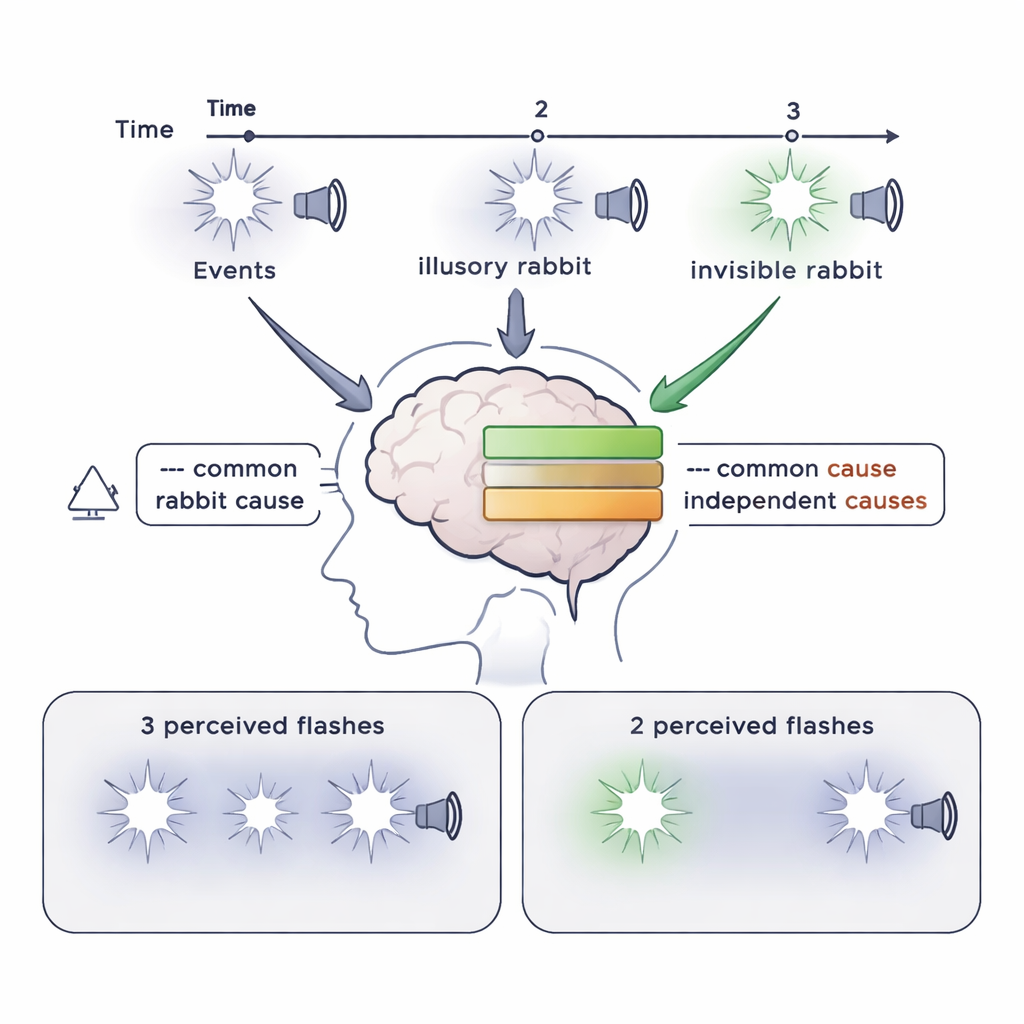

The researchers focused on two illusions called the “Illusory Audiovisual (AV) Rabbit” and the “Invisible AV Rabbit.” In both, brief flashes of light on a screen are paired with short beeps from a speaker, but one event in the middle of the sequence is mismatched. In the Illusory Rabbit, a beep arrives without a flash, and people often report seeing an extra flash that never appeared. In the Invisible Rabbit, a flash arrives without a beep, and people often fail to see that real flash. These effects appear when the flashes and beeps follow a specific sequence and occur close together in time. Crucially, the last flash–beep pair in the sequence can change how people perceive the earlier, middle moment, showing that perception does not simply run forward in time but can be revised after the fact.

Testing How the Brain Chooses a Single Story

To uncover the rules behind these illusions, the team presented 28 carefully designed conditions to 28 volunteers. Participants were told to ignore the sounds and simply report how many flashes they saw and where they appeared among five possible positions in a row. The flash sequences could move left or right or even change direction, and the sounds were either synchronized with the flashes or offset from them by 225 milliseconds. This variety made simple guessing strategies less useful and let the researchers probe when the brain combines sight and sound and when it keeps them separate. They then measured how often people reported illusory middle flashes (the “Illusory Rabbit”) or missed real middle flashes (the “Invisible Rabbit”).

When Timing Lines Up, Illusions Take Over

The results showed that illusion trials produced far more illusory or missing flashes than control trials in which flashes appeared alone or in simpler audiovisual combinations. When flashes and beeps were perfectly aligned in time, participants reported the illusions in roughly 40 percent of trials. When the sounds led or lagged the flashes by 225 milliseconds, illusion rates dropped. This suggests that the brain has a limited “multisensory time window”, lasting a few hundred milliseconds, within which it is willing to treat sights and sounds as parts of the same event. Inside this window, later events can retroactively change how earlier flashes are perceived; outside it, the brain is more likely to treat vision and hearing as unrelated streams.
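One common way to formalize such a time window, used here purely as an illustration and not taken from the study, is to ask how probable a shared cause is given the measured gap between a beep and a flash. The sketch below assumes Gaussian timing noise, treats the stimulus lag as if it were the measured lag, and uses made-up values for every parameter (`sigma_a`, `sigma_v`, `sigma_sep`, `prior_common`); the function names are hypothetical.

```python
import math


def gaussian_pdf(x: float, sd: float) -> float:
    """Density of a zero-mean normal distribution with standard deviation sd."""
    return math.exp(-0.5 * (x / sd) ** 2) / (math.sqrt(2.0 * math.pi) * sd)


def p_common_from_asynchrony(
    asynchrony_ms: float,
    sigma_a: float = 40.0,      # hypothetical auditory timing noise (ms)
    sigma_v: float = 80.0,      # hypothetical visual timing noise (ms)
    sigma_sep: float = 300.0,   # hypothetical spread of true lags when causes differ (ms)
    prior_common: float = 0.5,  # hypothetical prior probability of a shared cause
) -> float:
    """Posterior probability that a beep and a flash share one cause,
    given the audio-minus-visual onset difference (illustrative model)."""
    # Shared cause: the true lag is zero, so only sensory noise separates the onsets.
    sd_common = math.sqrt(sigma_a**2 + sigma_v**2)
    # Separate causes: the true lag itself varies widely, on top of the noise.
    sd_separate = math.sqrt(sigma_a**2 + sigma_v**2 + sigma_sep**2)
    weighted_common = prior_common * gaussian_pdf(asynchrony_ms, sd_common)
    weighted_separate = (1.0 - prior_common) * gaussian_pdf(asynchrony_ms, sd_separate)
    # Bayes' rule over the two causal structures.
    return weighted_common / (weighted_common + weighted_separate)


for lag in (0, 225):
    print(f"lag {lag:>3} ms -> p(common cause) = {p_common_from_asynchrony(lag):.2f}")
```

With these invented parameters the script prints about 0.78 for synchronous stimuli and 0.16 for a 225 ms lag: the probability of a shared cause, and with it the room for illusions, shrinks as the gap grows, which is the qualitative pattern the study reports.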

A Brain That Weighs Causes Like a Statistician

To explain these findings, the authors compared four computational models of how the brain might combine sensory information. The key model was a Bayesian Causal Inference (BCI) model, which assumes that the brain behaves a bit like a statistician: it weighs prior expectations against noisy sensory evidence to decide whether vision and hearing come from a single common cause or from separate causes. If a common cause is likely, the model merges flashes and beeps into a single event, giving more weight to the more reliable sense. Here that sense is hearing, because brief beeps mark the timing and number of events more precisely than flashes do. The three alternative models either always fused sight and sound, always kept them separate, or used causal inference while ignoring the final flash–beep pair; that last model, by design, could not fully capture postdiction.
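To make the statistician analogy concrete, here is a minimal sketch of the textbook single-measurement form of Bayesian causal inference, not the authors' sequence model. It assumes one noisy visual measurement `x_v` and one auditory measurement `x_a` of the same quantity (treated loosely as a continuous "number of events"), Gaussian noise and prior, and invented parameter values; `BCIParams`, `posterior_common`, and `visual_estimate` are hypothetical names. The reported visual estimate averages the fused and the vision-only estimates, weighted by the probability of each causal structure.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class BCIParams:
    sigma_v: float = 0.8   # hypothetical visual noise (vision is less precise here)
    sigma_a: float = 0.3   # hypothetical auditory noise (hearing is more precise here)
    mu_p: float = 2.0      # hypothetical prior mean
    sigma_p: float = 1.5   # hypothetical prior spread
    p_common: float = 0.6  # hypothetical prior probability of one shared cause


def posterior_common(x_v: float, x_a: float, p: BCIParams) -> float:
    """Probability that both measurements came from one source."""
    vv, va, vp = p.sigma_v**2, p.sigma_a**2, p.sigma_p**2
    # Likelihood of both measurements under a single shared source (C = 1).
    d1 = vv * va + vv * vp + va * vp
    q1 = ((x_v - x_a) ** 2 * vp + (x_v - p.mu_p) ** 2 * va + (x_a - p.mu_p) ** 2 * vv) / d1
    like1 = math.exp(-0.5 * q1) / (2 * math.pi * math.sqrt(d1))
    # Likelihood under two independent sources (C = 2).
    q2 = (x_v - p.mu_p) ** 2 / (vv + vp) + (x_a - p.mu_p) ** 2 / (va + vp)
    like2 = math.exp(-0.5 * q2) / (2 * math.pi * math.sqrt((vv + vp) * (va + vp)))
    num = p.p_common * like1
    return num / (num + (1 - p.p_common) * like2)


def visual_estimate(x_v: float, x_a: float, p: BCIParams) -> tuple[float, float]:
    """Return (reported visual estimate, posterior probability of a common cause)."""
    vv, va, vp = p.sigma_v**2, p.sigma_a**2, p.sigma_p**2
    pc = posterior_common(x_v, x_a, p)
    # Reliability-weighted fusion: the less noisy sense pulls harder.
    fused = (x_v / vv + x_a / va + p.mu_p / vp) / (1 / vv + 1 / va + 1 / vp)
    # Vision-only estimate, combined with the prior but ignoring sound.
    segregated = (x_v / vv + p.mu_p / vp) / (1 / vv + 1 / vp)
    # Model averaging over the two causal structures.
    return pc * fused + (1 - pc) * segregated, pc


params = BCIParams()
for beeps in (3.0, 6.0):
    est, pc = visual_estimate(2.0, beeps, params)
    print(f"beeps={beeps}: p(common)={pc:.2f}, visual estimate={est:.2f}")
```

With two flashes measured and three beeps, the visual estimate is pulled above two, mimicking an illusory extra flash; when the beep count is far from the flash count, the shared-cause probability collapses and vision is left largely on its own.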

Why the Bayesian Story Fits Best

The BCI model best matched people’s behavior across all conditions. It accurately reproduced high illusion rates in the key rabbit conditions, lower rates in control trials, and the drop in illusions when flashes and beeps were out of sync. Importantly, when the researchers removed the influence of the last flash–beep pair from the causal calculation, the model consistently underestimated how often illusions occurred. This indicates that the brain does not just build a percept from the first event forward; instead, it accumulates evidence across the entire sequence and then retrospectively decides on the most likely storyline. When the last flash–beep strongly supports a single shared cause, the brain is more willing to “fill in” a missing flash or erase a weak one in the middle.
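Why would dropping the last flash–beep pair lower the predicted illusion rate? A minimal sketch, assuming (for illustration only) that each flash–beep pair in a sequence contributes independent evidence about one shared cause, shows the logic: the log posterior odds add up across pairs, so a well-synchronized final pair raises the probability of a common cause, and removing it takes that support away. The function name, the Gaussian lag model, and all numbers are hypothetical and not taken from the paper.

```python
import math


def log_gauss(x: float, sd: float) -> float:
    """Log density of a zero-mean normal distribution with standard deviation sd."""
    return -0.5 * (x / sd) ** 2 - math.log(math.sqrt(2.0 * math.pi) * sd)


def p_common_sequence(
    lags_ms: list[float],
    sd_common: float = 89.4,     # hypothetical lag spread if sight and sound share a cause
    sd_separate: float = 313.0,  # hypothetical lag spread if they have separate causes
    prior_common: float = 0.5,   # hypothetical prior probability of a shared cause
) -> float:
    """Posterior probability of one shared audiovisual cause for a whole sequence,
    treating each flash-beep lag as independent evidence given the cause."""
    log_odds = math.log(prior_common / (1.0 - prior_common))
    for lag in lags_ms:
        # Each pair adds its log likelihood ratio (shared vs separate causes).
        log_odds += log_gauss(lag, sd_common) - log_gauss(lag, sd_separate)
    return 1.0 / (1.0 + math.exp(-log_odds))


first_only = p_common_sequence([0.0])          # final pair ignored
full_sync = p_common_sequence([0.0, 0.0])      # synchronized final pair included
late_lag = p_common_sequence([0.0, 225.0])     # final pair out of sync
print(f"first pair only: {first_only:.2f}")
print(f"with synchronized last pair: {full_sync:.2f}")
print(f"with lagged last pair: {late_lag:.2f}")
```

In this toy setup, a synchronized last pair pushes the shared-cause probability up, while a lagged one pulls it down; a model that never looks at the last pair misses both effects, which mirrors the underestimation the authors found.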

What This Means for Everyday Perception

In everyday life, our senses are constantly flooded with overlapping sights and sounds. This work suggests that the brain waits a short moment, gathers information from events just before and just after a given instant, and then settles on a coherent interpretation, sometimes at the cost of accuracy. The Bayesian causal inference framework provides a simple explanation: our brains favor a single, plausible story of what happened, even if that means adding or erasing details after the fact. In other words, what you think you saw a fraction of a second ago can be quietly rewritten by what you hear or see next.

Citation: Günaydın, G., Moran, J.K., Rohe, T. et al. Causal inference shapes crossmodal postdiction in multisensory integration. Sci Rep 16, 7490 (2026). https://doi.org/10.1038/s41598-026-36884-6

Keywords: multisensory integration, audiovisual illusion, causal inference, postdiction, Bayesian perception