Clear Sky Science

Shadow fading prediction at 18 GHz through physics guided learning in vegetative corridors

Why orchard Wi‑Fi matters

Modern farms are filling up with sensors, drones, and autonomous machines that all need reliable, high‑speed wireless connections. But trees are surprisingly good at blocking radio waves, especially at the higher frequencies that future 6G networks want to use for fast data. This paper explores how radio signals at 18 GHz travel along the “corridors” formed by rows of fruit trees, and shows how blending physics with machine learning can give farmers and engineers much better tools to plan wireless networks in orchards.

Walking a signal through a tunnel of trees

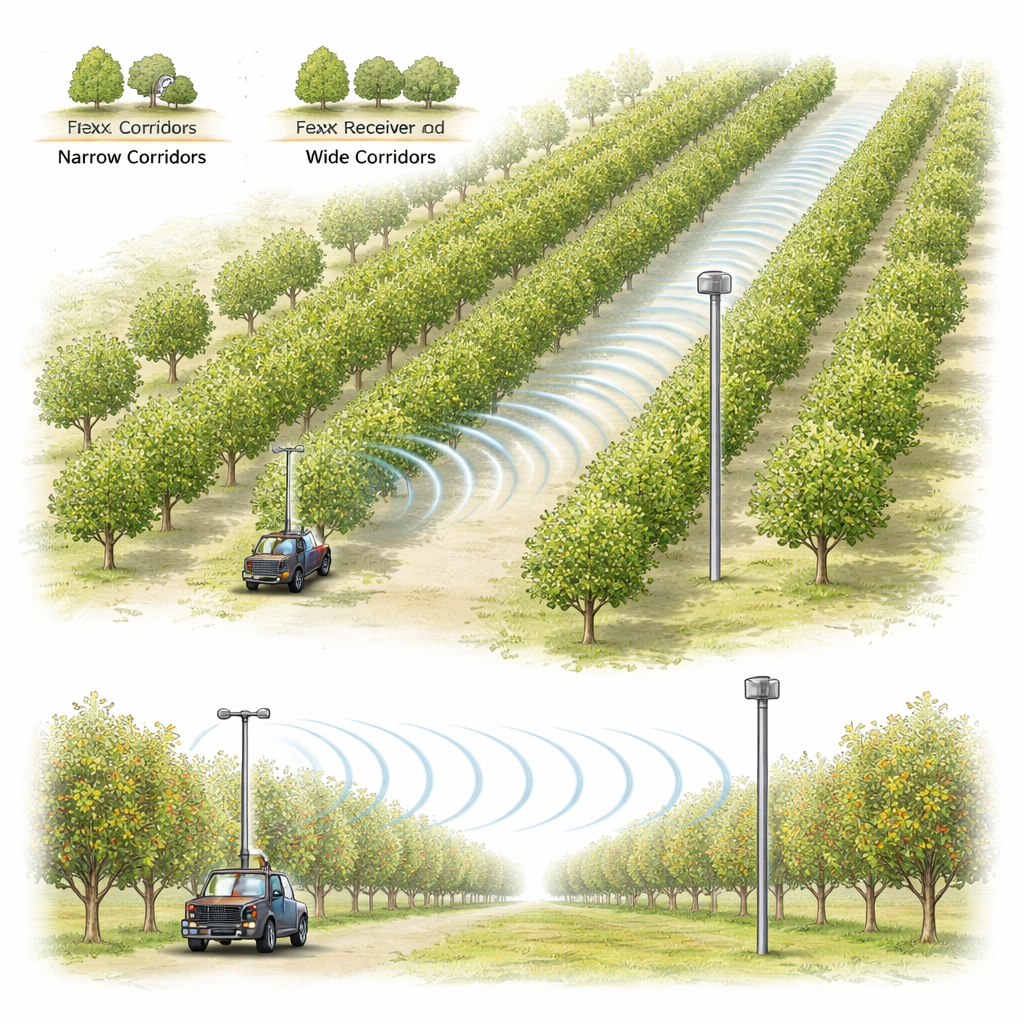

The researchers carried out a large measurement campaign in a custard apple orchard in Chile. The trees were planted in neat rows, creating long straight corridors much like green tunnels. Along three different corridors—two wide and one narrow—they placed a fixed receiver at about mid‑tree height and walked a transmitter away from it over 160 meters at a slow, steady pace. They repeated this for three transmitter heights (below, at, and above the receiver height), ending up with nine distinct geometric setups and more than 17,000 signal measurements. All equipment was carefully calibrated so that any changes in received power reflected only how the orchard itself affected the radio waves.

When simple distance rules are not enough

In wireless engineering, a common starting point is a simple “path loss” rule: the farther apart the antennas, the weaker the signal, with the weakening rate summarized by a single number called the path‑loss exponent. Using this standard model, the team found an average exponent of about 2.5 for the whole orchard. Because the exponent in free space is 2, this means the signal faded faster with distance than it would in open air. On the surface, this model looked decent, since it captured the general downward trend with distance, but the actual measurements scattered by several decibels around that trend. When the researchers fitted the same model separately to each of the nine geometries, both the exponent and the amount of variability changed a lot from one corridor and height to another. This revealed that the extra fading caused by the trees is not just random noise; it depends systematically on the corridor width and antenna heights.
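The distance rule described above is usually written as PL(d) = PL0 + 10·n·log10(d/d0), where n is the path‑loss exponent, so fitting it amounts to an ordinary straight‑line regression. The sketch below is illustrative only. The data are synthetic, generated with an exponent of 2.5 to echo the orchard‑wide value. The intercept, the noise level, and the function name `fit_path_loss` are assumptions, not values or code from the study.

```python
import math
import random


def fit_path_loss(distances_m, losses_db, d0=1.0):
    """Least-squares fit of PL(d) = pl0 + 10*n*log10(d/d0).

    Returns (pl0, n, sigma), where sigma is the RMS scatter of the
    measurements around the fitted line, in dB.
    """
    x = [10.0 * math.log10(d / d0) for d in distances_m]
    mean_x = sum(x) / len(x)
    mean_y = sum(losses_db) / len(losses_db)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, losses_db))
    n = sxy / sxx                      # slope = path-loss exponent
    pl0 = mean_y - n * mean_x          # intercept = loss at reference distance d0
    residuals = [yi - (pl0 + n * xi) for xi, yi in zip(x, losses_db)]
    sigma = math.sqrt(sum(r * r for r in residuals) / len(residuals))
    return pl0, n, sigma


# Synthetic stand-in for a measurement run (NOT data from the paper).
rng = random.Random(0)
TRUE_PL0_DB = 57.5   # assumed intercept, roughly free-space loss at 1 m for 18 GHz
TRUE_N = 2.5         # exponent chosen to match the orchard-wide average in the text
TRUE_SIGMA_DB = 3.0  # assumed scatter of "several decibels"

distances = [float(d) for d in range(2, 161)]
losses = [
    TRUE_PL0_DB + 10.0 * TRUE_N * math.log10(d) + rng.gauss(0.0, TRUE_SIGMA_DB)
    for d in distances
]

pl0, n, sigma = fit_path_loss(distances, losses)
print(f"exponent n = {n:.2f}, intercept = {pl0:.1f} dB, scatter = {sigma:.1f} dB")
```

Running the same fit on each geometry separately, rather than on the pooled data, is how one would see the exponent and scatter shift between corridors and heights.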

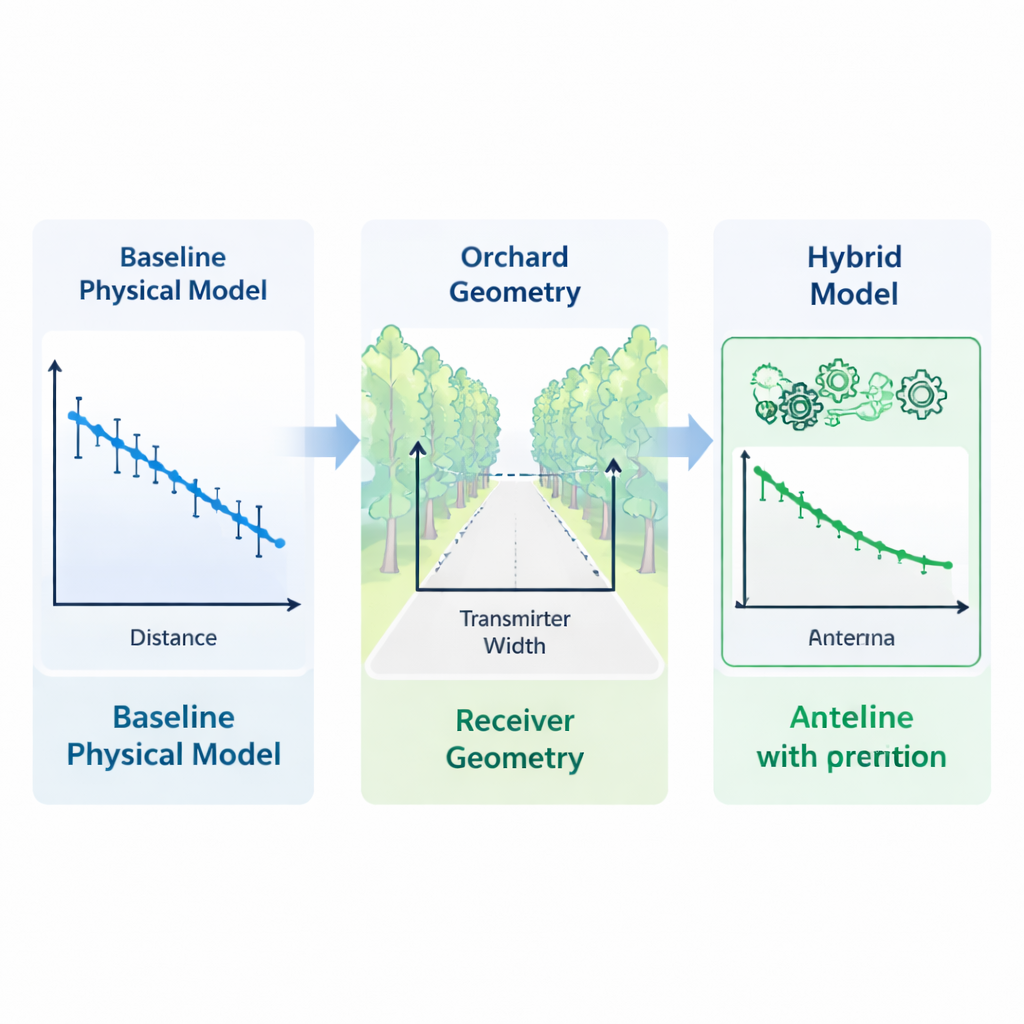

Teaching a model what the trees are doing

To capture this hidden structure, the authors built a “hybrid” model in two stages. First, they kept the trusted physics‑based distance rule as a backbone, using it to remove the basic effect of separation between antennas. What remained were the deviations—called shadow fading—caused mainly by the vegetation and geometry. Second, they fed these deviations to a lightweight machine‑learning system that was told about key geometric ingredients: link distance, corridor width, transmitter and receiver heights, and simple combinations of these (such as width times distance or height relative to width). A straightforward linear model handled the main geometric trends, while a popular boosting algorithm (XGBoost) added small nonlinear corrections on top. Crucially, the learning step focused only on what the physics model could not already explain.
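The two‑stage idea can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation. The data are synthetic, the corridor widths and heights are made up, and the exact feature definitions (for example, height offset divided by width as "height relative to width") are guesses at what the text describes. The XGBoost correction stage is deliberately left out; only the physics backbone and the linear residual model are shown.

```python
import math
import random


def ols(rows, targets):
    """Least squares via the normal equations, solved by Gaussian elimination."""
    k = len(rows[0])
    a = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    b = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(k)]
    for col in range(k):
        piv = max(range(col, k), key=lambda r: abs(a[r][col]))  # partial pivoting
        a[col], a[piv] = a[piv], a[col]
        b[col], b[piv] = b[piv], b[col]
        for r in range(col + 1, k):
            f = a[r][col] / a[col][col]
            for c in range(col, k):
                a[r][c] -= f * a[col][c]
            b[r] -= f * b[col]
    coef = [0.0] * k
    for i in reversed(range(k)):
        s = sum(a[i][j] * coef[j] for j in range(i + 1, k))
        coef[i] = (b[i] - s) / a[i][i]
    return coef


def geometry_features(d, width, h_tx, h_rx):
    """Hypothetical feature set: distance, width, height offset, and two combinations."""
    dh = h_tx - h_rx
    return [1.0, d / 100.0, width, dh, width * d / 100.0, dh / width]


def rmse(actual, predicted):
    return math.sqrt(sum((y - p) ** 2 for y, p in zip(actual, predicted)) / len(actual))


# Synthetic samples (d, width, h_tx, h_rx, loss_db); all numbers are assumptions.
rng = random.Random(1)
samples = []
for width in (3.0, 6.0):
    for h_tx in (1.0, 2.0, 3.0):
        for d in range(5, 161, 5):
            shadow = 1.5 * (width - 4.5) + 0.5 * abs(h_tx - 2.0) + rng.gauss(0.0, 2.0)
            samples.append((float(d), width, h_tx, 2.0, 57.5 + 25.0 * math.log10(d) + shadow))

losses = [s[4] for s in samples]

# Stage 1: physics backbone, PL(d) = pl0 + 10*n*log10(d).
stage1_rows = [[1.0, 10.0 * math.log10(s[0])] for s in samples]
pl0, n = ols(stage1_rows, losses)
baseline = [pl0 + n * row[1] for row in stage1_rows]

# Stage 2: learn only what the backbone could not explain (the shadow fading).
shadow_fading = [y - p for y, p in zip(losses, baseline)]
feats = [geometry_features(*s[:4]) for s in samples]
coef = ols(feats, shadow_fading)
hybrid = [p + sum(c * f for c, f in zip(coef, row)) for p, row in zip(baseline, feats)]

rmse_baseline = rmse(losses, baseline)
rmse_hybrid = rmse(losses, hybrid)
print(f"distance-only RMSE: {rmse_baseline:.2f} dB, hybrid RMSE: {rmse_hybrid:.2f} dB")
```

Because the second stage only ever sees residuals, the distance trend stays under the control of the physics model, and a nonlinear learner could be stacked on the remaining error in the same way.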

How narrow lanes of trees can help a signal

When the team tested many different learning methods, an interesting pattern emerged. Complex stand‑alone machine‑learning models—random forests, gradient boosting, and others—looked fine when predicting new positions inside already measured corridors, but their performance collapsed when asked to predict entirely new combinations of corridor width and antenna height. In some cases they did worse than the simple distance‑only rule. By contrast, the hybrid model not only reduced the typical prediction error by about a quarter compared with the basic model, it actually did better on unseen corridor setups than on held‑out positions within known setups. The analysis showed that corridor width was the single most powerful factor: narrow corridors tended to guide the signal forward like a loose waveguide, while wide corridors let more energy leak sideways into the trees, increasing loss.
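The difference between the two tests described above comes down to how the data are split. A minimal sketch follows, assuming each measurement carries a geometry label. The labels, distances, and function names here are hypothetical and are not taken from the study.

```python
import random
from itertools import product


def position_holdout(samples, test_fraction=0.2, seed=0):
    """Random split: held-out positions come from geometries also seen in training."""
    rng = random.Random(seed)
    idx = list(range(len(samples)))
    rng.shuffle(idx)
    test_idx = set(idx[: int(len(idx) * test_fraction)])
    train = [s for i, s in enumerate(samples) if i not in test_idx]
    test = [s for i, s in enumerate(samples) if i in test_idx]
    return train, test


def leave_one_geometry_out(samples):
    """Yield (geometry, train, test) with one whole geometry withheld per fold."""
    for g in sorted({s["geometry"] for s in samples}):
        train = [s for s in samples if s["geometry"] != g]
        test = [s for s in samples if s["geometry"] == g]
        yield g, train, test


# Hypothetical labels for three corridors and three transmitter heights.
corridors = ("wide_1", "wide_2", "narrow")
tx_heights = ("below", "at", "above")
samples = [
    {"geometry": (c, h), "distance_m": float(d)}
    for c, h in product(corridors, tx_heights)
    for d in range(10, 161, 10)
]

train_pos, test_pos = position_holdout(samples)
folds = list(leave_one_geometry_out(samples))

print(f"position holdout: {len(train_pos)} train, {len(test_pos)} test")
print(f"geometry holdout: {len(folds)} folds")
```

A model that scores well on the first split but poorly on the second has likely memorized individual corridors rather than learned how geometry shapes the signal.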

What this means for connected farming

For non‑specialists, the key message is that we can predict how well future 6G‑style links will work in orchards without having to measure every single row of trees. By keeping a simple, understandable physics model at the core and letting machine learning fill in the subtler effects of orchard layout, the authors created a tool that stays accurate even when the corridor geometry changes. In practical terms, this means more confident design of sensor networks and autonomous vehicle links in farms, smaller safety margins in the link budget, and clearer rules of thumb—such as recognizing that corridor width is a major lever for connectivity. While the exact numbers will change for other tree species and seasons, the study shows a promising path for combining physics and data to bring robust wireless coverage to the fields.

Citation: Celades-Martínez, J., Diago-Mosquera, M.E., Peña, A. et al. Shadow fading prediction at 18 GHz through physics guided learning in vegetative corridors. Sci Rep 16, 5916 (2026). https://doi.org/10.1038/s41598-026-36878-4

Keywords: precision agriculture, wireless propagation, vegetation attenuation, hybrid machine learning, FR3 band