Clear Sky Science · en

Object detection on low-compute edge SoCs: a reproducible benchmark and deployment guidelines

Why tiny chips for smart cameras matter

Many of the "smart" devices around us—security cameras, drones, factory sensors, and doorbells—need to spot people and objects in real time, but they rely on very small, low-power chips instead of power-hungry data-center hardware. Companies often choose popular YOLO object detection models, yet the advertised speed of these chips says little about how well things actually run in the field. This paper takes a hard, experimental look at how nine modern YOLO variants behave on three widely used low-cost Rockchip processors, revealing what really controls speed, energy use, and reliability when intelligence moves to the edge.

Three everyday chips under the microscope

The authors focus on three commercial system-on-chips (SoCs) that quietly power many embedded vision systems: the small RV1106, the mid-range RK3568, and the more capable RK3588. Each combines ordinary processor cores with a dedicated neural processing unit (NPU) and external memory. On these platforms, the team deploys nine YOLO models—three generations (YOLOv5, YOLOv8, YOLO11) at three sizes (Nano, Small, Medium)—all trained on the same benchmark dataset. They carefully convert the models into a common format, quantize them down to 8-bit arithmetic, compile them with Rockchip’s tools, and then run hundreds of timed tests to obtain stable measurements of delay, power, and energy per processed frame.
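The timing step described above can be illustrated with a minimal sketch. It is not the authors' harness and does not call Rockchip's toolkit: `run_once` is a hypothetical placeholder for whatever function performs one inference, and the warm-up and iteration counts are assumed values chosen only to reflect the "hundreds of timed tests" idea.

```python
import statistics
import time


def benchmark(run_once, warmup=20, iterations=300):
    """Time a zero-argument callable and return latency statistics in ms.

    run_once is a hypothetical stand-in for a single inference call.
    iterations must be at least 2 so a standard deviation can be computed.
    """
    # Warm-up runs are discarded so caches and clocks can settle.
    for _ in range(warmup):
        run_once()

    samples_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_once()
        samples_ms.append((time.perf_counter() - start) * 1000.0)

    samples_ms.sort()
    return {
        "median_ms": statistics.median(samples_ms),
        # Simple nearest-rank style index for the 95th percentile.
        "p95_ms": samples_ms[int(0.95 * (len(samples_ms) - 1))],
        "stdev_ms": statistics.stdev(samples_ms),
    }


if __name__ == "__main__":
    # Placeholder workload; a real test would invoke the deployed model.
    print(benchmark(lambda: sum(range(1000)), warmup=5, iterations=50))
```

Reporting the median alongside a high percentile, rather than a single average, is one common way to show both typical delay and how stable it is from run to run.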

Speed is not what the spec sheet suggests

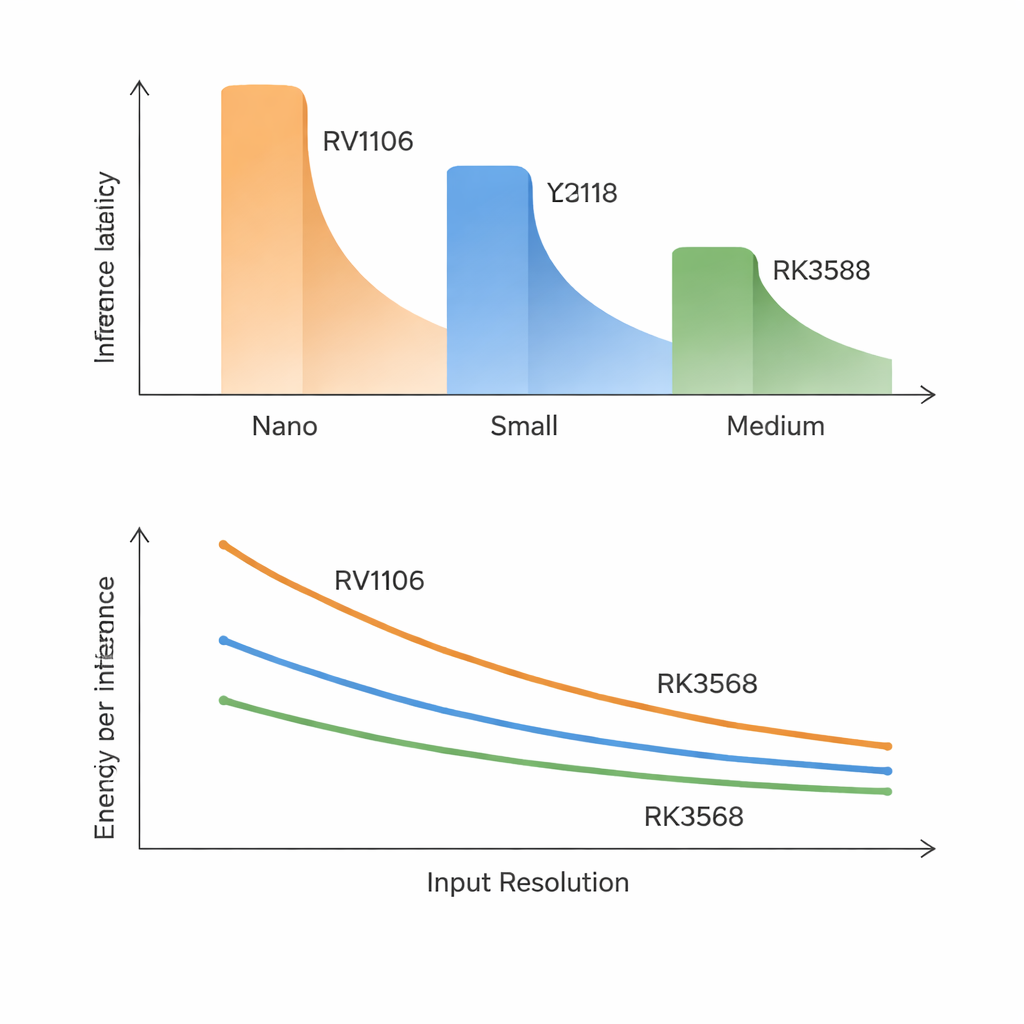

One of the clearest lessons is that headline figures, such as a model's operation and parameter counts or a chip's advertised peak throughput, are poor predictors of real speed. On the slowest chip, even the smallest models take around 70–100 milliseconds per frame, and medium-sized ones are far too slow for real-time use. The fastest chip can run Nano and many Small models near the 30-frames-per-second mark, but larger models still fall short of that target. Surprisingly, delay lines up more closely with how accurate a model is than with how many math operations or parameters it has. Newer, more accurate YOLO designs add internal blocks that are friendly to accuracy but awkward for these NPUs to execute, so “smarter” often means “noticeably slower” on such hardware.
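To put the per-frame delays in terms of frame rate, here is a small arithmetic sketch. It assumes frames are processed strictly one after another with no pipelining, so the frame rate is simply the inverse of the delay. The function names are illustrative only.

```python
def fps_from_latency(latency_ms):
    """Frames per second when each frame takes latency_ms, processed serially."""
    return 1000.0 / latency_ms


def meets_target(latency_ms, target_fps=30.0):
    """True if the per-frame delay fits inside the time budget of target_fps."""
    return latency_ms <= 1000.0 / target_fps


if __name__ == "__main__":
    # The 70-100 ms range quoted for the slowest chip.
    for latency_ms in (70.0, 100.0):
        fps = fps_from_latency(latency_ms)
        print(f"{latency_ms:.0f} ms -> {fps:.1f} FPS, "
              f"30 FPS ok: {meets_target(latency_ms)}")
```

Under that assumption, 70 ms per frame corresponds to about 14.3 frames per second and 100 ms to 10 frames per second, while a 30-frames-per-second target leaves roughly 33 ms per frame.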

When bigger images and shared memory bite back

The study shows that enlarging input images does not simply scale the workload in proportion. In theory, doubling both width and height should quadruple the cost, but on low-bandwidth chips the cost can grow even faster. As images get bigger, intermediate data no longer fits comfortably on the chip and must be shuffled repeatedly to off-chip memory. On the smallest and mid-range SoCs, this turns into a traffic jam: medium-sized models slow down far more than expected, and heavy background memory use from other tasks can inflate delays by 50–270%. In contrast, the RK3588, with far higher memory bandwidth, handles resolution increases gracefully and barely flinches under extra CPU or memory load, highlighting that memory speed, not raw compute, is often the true bottleneck.
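The scaling argument can be made concrete with a short sketch. The helper names are hypothetical, and the example delays are made-up values for illustration, not measurements from the paper. The idea is to compare the ideal cost ratio (proportional to pixel count) with an exponent fitted from two measured delays: a value near 1 means delay tracks pixel count, and a value above 1 suggests something else, such as memory traffic, is adding cost.

```python
import math


def ideal_cost_ratio(old_side, new_side):
    """Ideal compute growth for square inputs: proportional to pixel count."""
    return (new_side / old_side) ** 2


def scaling_exponent(latency_a_ms, side_a, latency_b_ms, side_b):
    """Exponent k in latency ~ pixels**k, fitted from two measurements."""
    pixel_ratio = (side_b / side_a) ** 2
    return math.log(latency_b_ms / latency_a_ms) / math.log(pixel_ratio)


def inflated_latency(base_ms, inflation_percent):
    """Delay after a percentage increase, e.g. from background memory load."""
    return base_ms * (1.0 + inflation_percent / 100.0)


if __name__ == "__main__":
    print(ideal_cost_ratio(320, 640))  # doubling each side
    # Hypothetical delays: 20 ms at 320 px and 110 ms at 640 px.
    print(round(scaling_exponent(20.0, 320, 110.0, 640), 2))
    # The 50-270% inflation range applied to a hypothetical 100 ms baseline.
    print(inflated_latency(100.0, 50.0), inflated_latency(100.0, 270.0))
```

Read this way, the reported 50–270% inflation means a delay between 1.5 and 3.7 times its unloaded value.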

More cores and more power do not guarantee efficiency

Rockchip’s fastest chip includes a three-core NPU, but running YOLO across multiple cores delivers only modest benefits. For most models, splitting work across two or three cores trims delay by less than 10%, and sometimes performance even degrades. The overhead of coordinating cores and sharing the same memory pool cancels out much of the theoretical gain. Power measurements add another twist: all three SoCs draw only a few watts while running, yet their energy per processed frame can differ by a factor of three. The higher-end RK3588 uses more power at any instant but finishes the work so quickly that it often ends up being the most energy-efficient choice, especially for medium-sized models and higher resolutions.
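The energy point rests on a simple relation: energy per frame is average power multiplied by the time spent on that frame. The sketch below uses invented power and delay figures for two unnamed chips purely to show how a higher-power chip can still use less energy per frame. None of the numbers come from the paper.

```python
def energy_per_frame_mj(avg_power_w, latency_ms):
    """Energy per frame in millijoules: watts times milliseconds."""
    return avg_power_w * latency_ms


if __name__ == "__main__":
    # Hypothetical (power in W, delay in ms) pairs, for illustration only.
    chips = {
        "low_power_chip": (2.0, 120.0),
        "faster_chip": (4.0, 30.0),
    }
    for name, (power_w, latency_ms) in chips.items():
        energy = energy_per_frame_mj(power_w, latency_ms)
        print(f"{name}: {energy:.0f} mJ per frame")
```

In this made-up case the faster chip draws twice the power but finishes four times sooner, so it spends half the energy on each frame.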

Practical takeaways for real-world devices

For readers thinking about smart cameras, robots, or IoT gadgets, the message is straightforward. On the smallest chips, only the tiniest YOLO models at moderate image sizes are practical, and even then real-time video is a stretch. Mid-range chips can comfortably support small models and occasionally medium ones if frame rates or battery life can be relaxed. The high-end RK3588 finally makes it realistic to run more accurate, medium-sized YOLO variants while still keeping energy per frame in check. Across the board, the paper argues that designers should choose models with the hardware firmly in mind, pay close attention to memory bandwidth, and favor memory-saving tricks over chasing ever larger networks. What ultimately matters is not the advertised tera-operations per second, but whether the entire system can deliver fast, stable, and energy-conscious object detection in the messy conditions of the real world.

Citation: Kong, C., Li, F., Yan, X. et al. Object detection on low-compute edge SoCs: a reproducible benchmark and deployment guidelines. Sci Rep 16, 5875 (2026). https://doi.org/10.1038/s41598-026-36862-y

Keywords: edge AI, object detection, embedded vision, YOLO models, low-power SoC