
Explainable artificial intelligence for sedimentary facies segmentation

Reading Earth’s History from Rock Cylinders

To understand how rivers, deltas, and coastlines evolved—and how stable the ground beneath our cities really is—geologists study long cylinders of sediment drilled from the subsurface. Interpreting these cores is slow, expert work. This study shows how artificial intelligence (AI), combined with tools that reveal its inner reasoning, can help automate that task while still letting scientists see why the computer reached a given conclusion.

Why Sediment Cores Matter

Subsurface sediments record past floods, sea‑level changes, earthquakes, and shifts in climate. Specialists divide each core into “facies,” layers that reflect distinct environments such as river channels, floodplains, coastal swamps, or offshore muds. These distinctions guide everything from paleoclimate reconstructions to assessments of earthquake hazard and ground stability. But careful facies mapping demands years of sedimentology training, and even experts face ambiguities when layers look alike or cores are damaged. Making this work more accessible and consistent is a key motivation for applying AI.

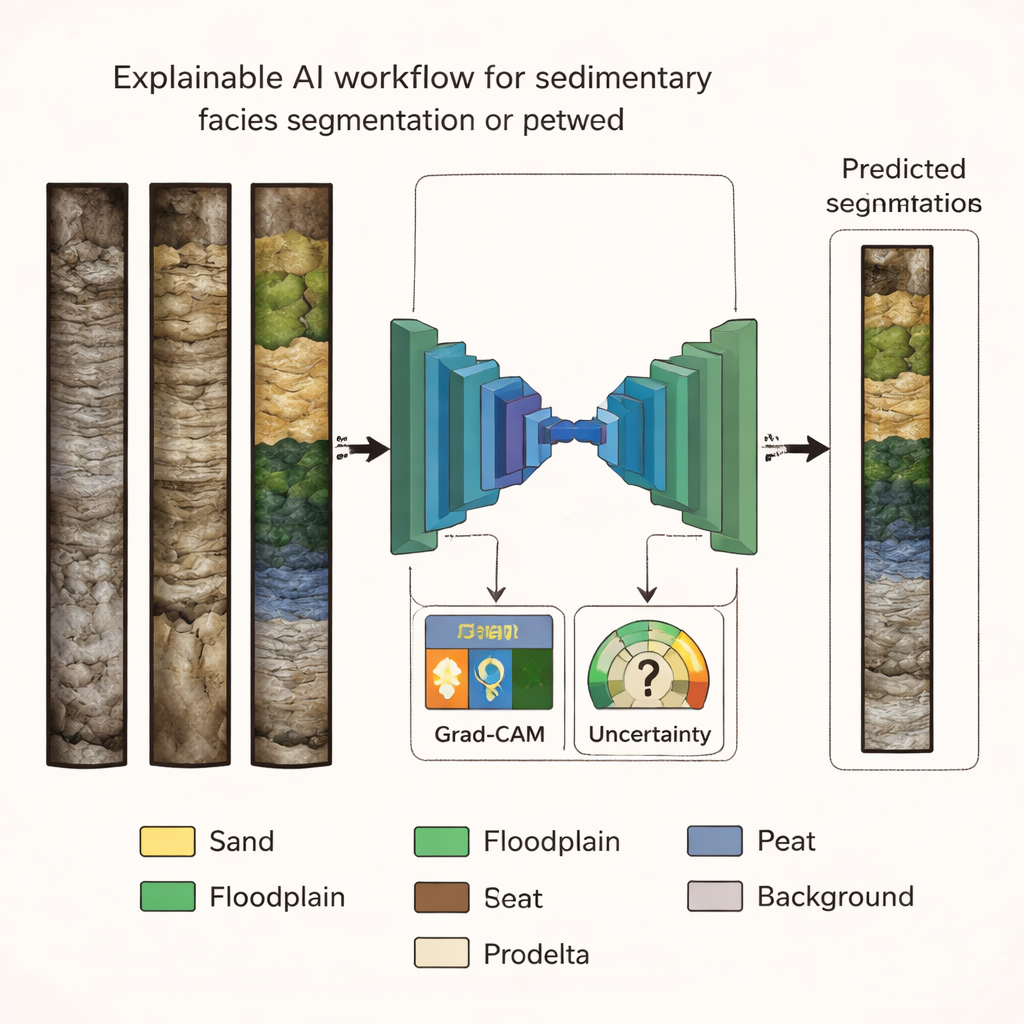

Teaching a Neural Network to See the Layers

The authors used a public dataset of high‑resolution core photographs from Holocene (last ~11,700 years) deposits in northern Italy. Each image was painstakingly labeled into six main facies—fluvial sand, well‑ and poorly‑drained floodplain muds, swamp deposits, peat layers, and offshore (prodelta) clays—plus a background class. They trained several versions of a popular image‑segmentation architecture, U‑Net, each with a different “backbone” that learns visual features. By comparing accuracy and related metrics on both a validation set and an unseen test set, they found that a model based on the EfficientNet‑B7 backbone offered the best balance of high performance and reliable generalization to new cores.
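To make "accuracy and related metrics" concrete, here is a minimal sketch of two scores commonly used to compare segmentation models: pixel accuracy and per-class intersection-over-union (IoU). The article does not say exactly which metrics the authors reported, so the choice of IoU, the function names, and the tiny three-class label arrays below are illustrative assumptions, not the study's evaluation code.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """Count pixels for every (true class, predicted class) pair."""
    idx = y_true.ravel() * num_classes + y_pred.ravel()
    counts = np.bincount(idx, minlength=num_classes ** 2)
    return counts.reshape(num_classes, num_classes)

def pixel_accuracy(cm):
    """Fraction of pixels whose predicted class matches the label."""
    return np.diag(cm).sum() / cm.sum()

def per_class_iou(cm):
    """Intersection-over-union per class; NaN if a class never occurs."""
    tp = np.diag(cm).astype(float)
    union = cm.sum(axis=0) + cm.sum(axis=1) - tp
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(union > 0, tp / union, np.nan)

# Hypothetical 2x3 label maps with three classes (assumed for illustration).
y_true = np.array([[0, 0, 1],
                   [1, 2, 2]])
y_pred = np.array([[0, 1, 1],
                   [1, 2, 0]])

cm = confusion_matrix(y_true, y_pred, num_classes=3)
acc = pixel_accuracy(cm)
iou = per_class_iou(cm)
mean_iou = np.nanmean(iou)
```

Computing the same scores separately on the validation and test sets, as the authors did with their own metrics, shows whether a backbone that looks strong during training also holds up on cores it has never seen.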

Looking at Rock with a Wider Lens

Human geologists rarely decide a facies based on a tiny spot; they read trends up and down the core, such as gradual fining or thickening of layers. To mimic this, the team tested how much vertical context the AI should see at once by training the best architecture on different patch sizes cut from the images. When the model viewed only small 128×128‑pixel patches, its predictions were noisy and facies bands appeared broken. As patch size increased through 256 and 384 pixels up to 512×512 pixels, the segmentation became smoother and closer to expert interpretation, with facies bodies preserved as continuous units. Performance gains leveled off between 384 and 512 pixels, suggesting that roughly this scale captures most of the useful context for the task.
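The effect of patch size on how much vertical context the model sees can be illustrated with a short sketch. It cuts square patches of the four sizes mentioned above from a synthetic, tall-and-narrow array standing in for a core photograph. The image dimensions, the non-overlapping stride, and the function name are assumptions made for illustration; the article does not describe how the authors tiled their images.

```python
import numpy as np

def extract_patches(image, patch_size, stride=None):
    """Cut square patches from an (H, W, C) image.

    Patches that would run past the image edge are skipped.
    stride defaults to patch_size, i.e. non-overlapping tiles (an assumption).
    """
    stride = stride or patch_size
    height, width = image.shape[:2]
    patches = []
    for top in range(0, height - patch_size + 1, stride):
        for left in range(0, width - patch_size + 1, stride):
            patches.append(image[top:top + patch_size, left:left + patch_size])
    if not patches:
        raise ValueError("patch_size is larger than the image")
    return np.stack(patches)

# Synthetic stand-in for a core photo: 2048 px tall, 512 px wide, RGB.
core = np.zeros((2048, 512, 3), dtype=np.uint8)

# Number of patches obtained at each patch size discussed in the text.
patch_counts = {size: extract_patches(core, size).shape[0]
                for size in (128, 256, 384, 512)}
```

On this synthetic image, a 128-pixel patch spans one sixteenth of the core's length, while a 512-pixel patch spans a quarter of it, which is the kind of wider view that lets a model pick up gradual trends rather than isolated texture.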

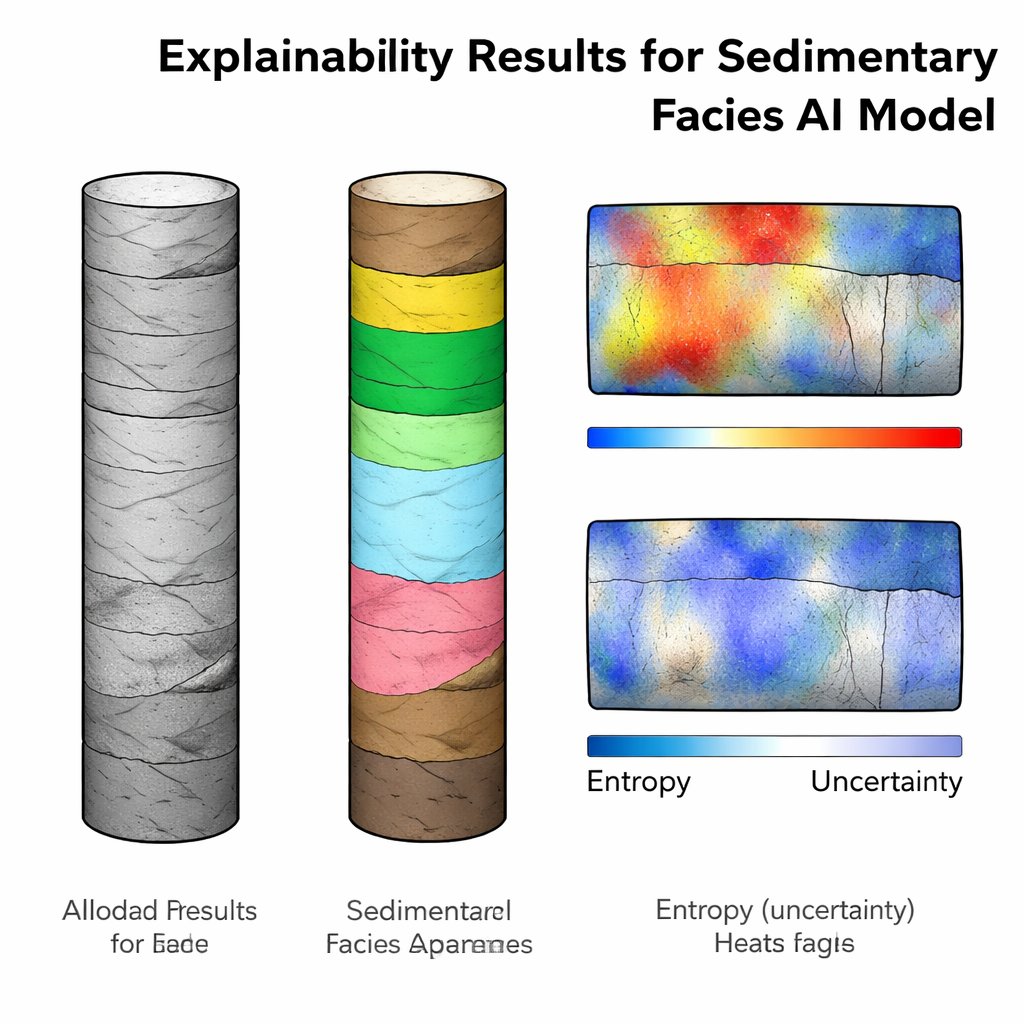

Opening the Black Box with Heat and Uncertainty Maps

High scores alone are not enough when AI informs decisions about hazards or resources; users need to see how and where the model is “looking.” The authors therefore applied two families of explainability tools. First, they used Grad‑CAM to produce saliency maps—heatmaps that highlight image regions most influential for each facies decision. These maps lined up well with the labeled facies, emphasizing, for example, organic‑rich zones for peat and swamp and clearly separating sediment from background. Importantly, some overlap, such as peat activations within swamp areas, matched the way sedimentologists conceptually group these environments. Second, they estimated predictive entropy by running the model many times with random dropout and summarizing how stable its predictions were at each pixel. High‑entropy zones often appeared near boundaries between facies, in thin interbedded sands within muds, or in portions of cores disturbed during drilling—precisely where experts themselves would hesitate. Yet many high‑uncertainty areas were still classified correctly, flagging intervals that deserve a second look rather than wholesale rejection of the results.
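The uncertainty estimate described above can be sketched in a few lines. The function assumes that the class probabilities from repeated forward passes with dropout active have already been collected into one array; it then averages them and computes the entropy of the averaged distribution at each pixel. The array layout, the function name, and the toy values are assumptions for illustration, and the authors' actual implementation may differ.

```python
import numpy as np

def predictive_entropy(mc_probs, eps=1e-12):
    """Per-pixel entropy of the mean prediction over stochastic passes.

    mc_probs: array of shape (T, H, W, C) holding softmax outputs from
              T forward passes with dropout left on (assumed layout).
    Returns an (H, W) map; 0 means fully stable, log(C) is the maximum.
    """
    mean_probs = mc_probs.mean(axis=0)
    mean_probs = np.clip(mean_probs, eps, 1.0)  # avoid log(0)
    return -(mean_probs * np.log(mean_probs)).sum(axis=-1)

# Toy example: 2 passes, a 1x2 image, 2 classes.
# Pixel (0, 0): both passes agree. Pixel (0, 1): the passes disagree.
mc_probs = np.array([
    [[[1.0, 0.0], [1.0, 0.0]]],   # pass 1
    [[[1.0, 0.0], [0.0, 1.0]]],   # pass 2
])

entropy = predictive_entropy(mc_probs)
```

In this toy case the pixel where the passes agree has entropy close to zero, while the pixel where they disagree reaches the two-class maximum of log 2. With seven classes (six facies plus background) the maximum would be log 7, and a map of these values is what flags facies boundaries and disturbed intervals for a second look.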

From Case Study to Practical Tool

Taken together, these results deliver more than an accurate model: they offer a complete, transparent pipeline for sediment‑core analysis. By carefully choosing the network architecture, matching its field of view to human reasoning, and pairing every prediction with visual explanations and uncertainty estimates, the authors show how AI can support rather than replace expert judgement. The same approach can be adapted to other geoscience images, from landslides to reservoir rocks, where trust, interpretability, and open data are as crucial as raw accuracy.

Citation: Di Martino, A., Carlini, G. & Amorosi, A. Explainable artificial intelligence for sedimentary facies segmentation. Sci Rep 16, 5984 (2026). https://doi.org/10.1038/s41598-026-36765-y

Keywords: explainable AI, sedimentary facies, geoscience imaging, core analysis, model uncertainty