Clear Sky Science

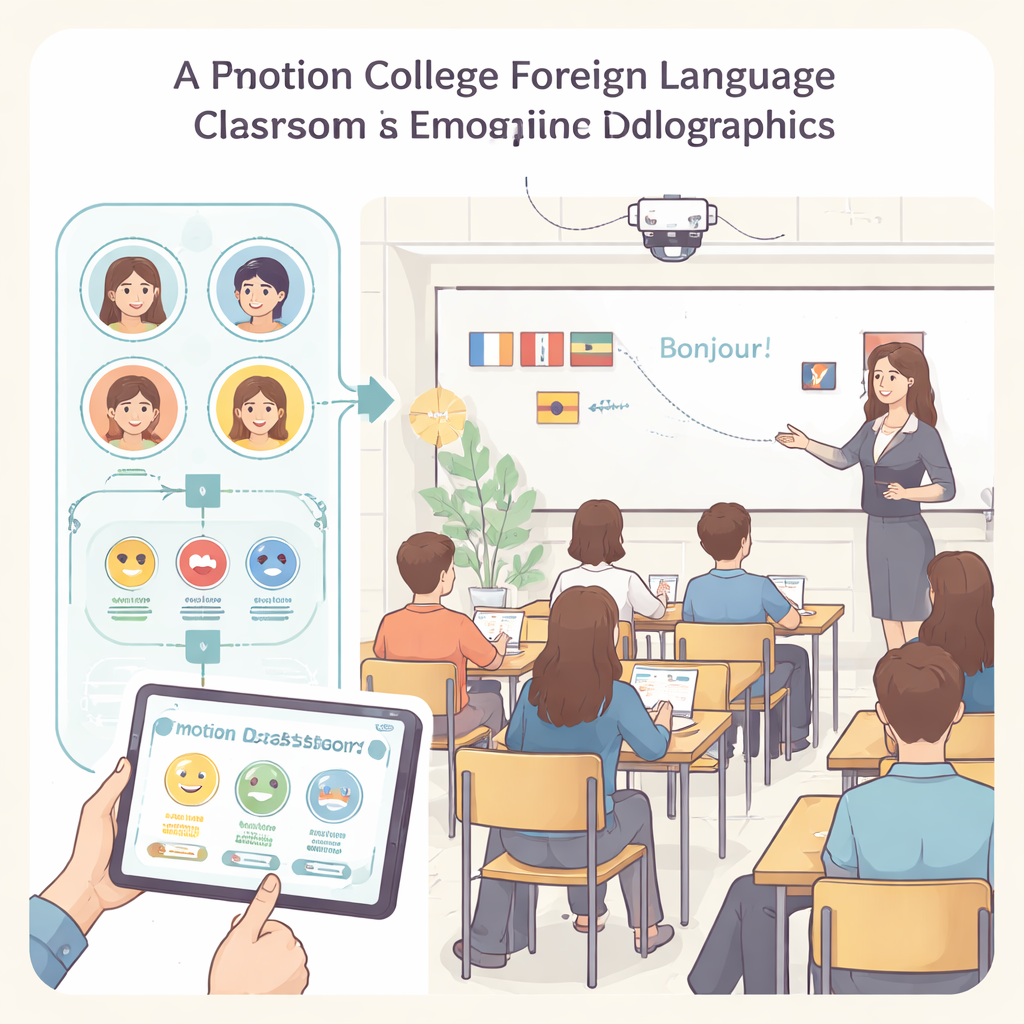

Exploring students’ emotion recognition and teachers’ teaching feedback in college foreign language classroom based on AFCNN model

Why Your Teacher Might Soon Read the Room with AI

Anyone who has sat through a dull class knows that boredom can quietly kill learning. Yet teachers often have only their intuition to guess how students are feeling in the moment. This study explores a new way to give college foreign language teachers a kind of “emotional dashboard” powered by artificial intelligence (AI). By reading students’ facial expressions in real time, the system helps teachers adjust their teaching on the spot and, in the long run, supports their professional growth.

Feelings Matter as Much as Grammar

Foreign language classes are not just about vocabulary lists and grammar rules. They are social spaces where confidence, anxiety, curiosity, and boredom all shape how well students learn. Past research has shown that teacher training usually concentrates on methods and subject knowledge while paying less attention to students’ in‑class emotions. Traditional tools, such as end‑of‑semester surveys or post‑class chats, arrive too late to rescue a struggling lesson. The authors argue that if teachers could see how emotions shift minute by minute, they could respond faster—speeding up, slowing down, or changing activities before students mentally check out.

Turning Faces into Helpful Signals

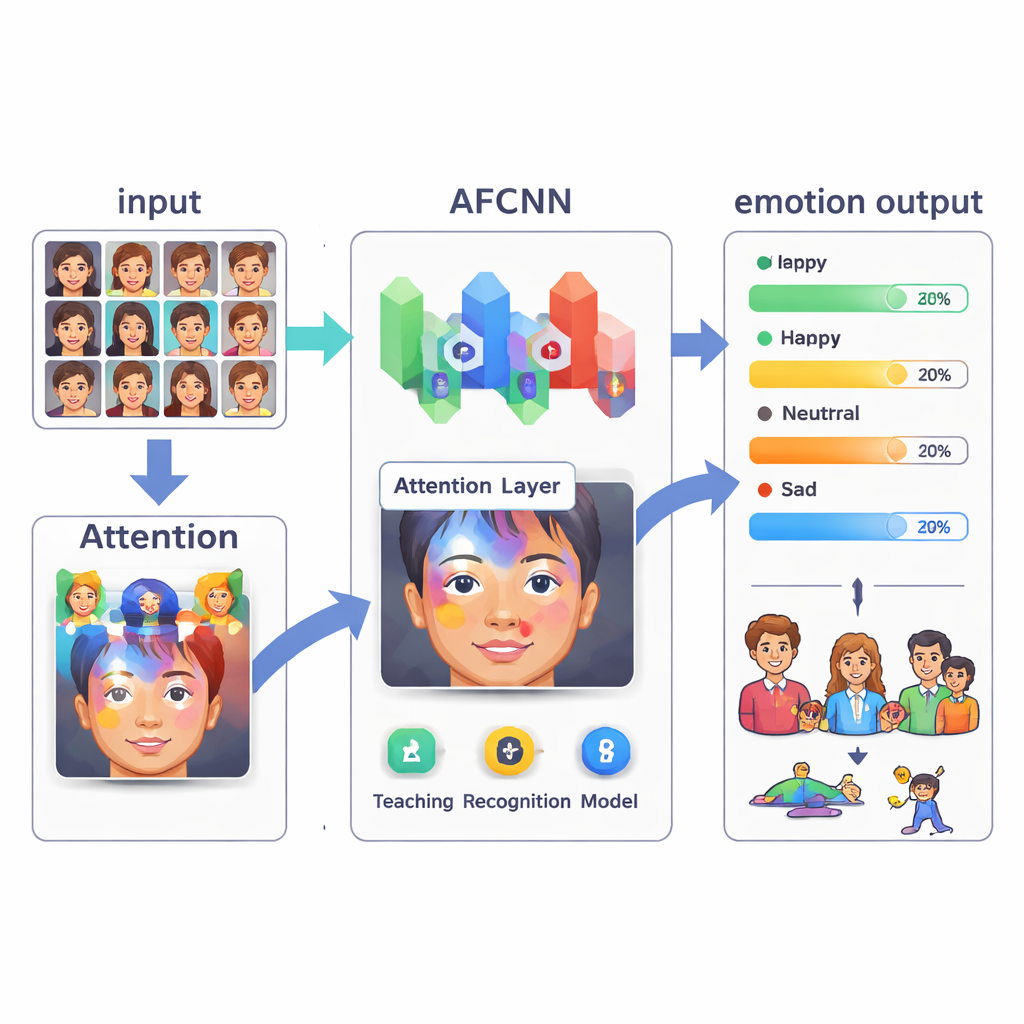

The heart of the study is a deep learning model called an Attention Feature Convolutional Neural Network, or AFCNN. In simple terms, a camera in the classroom captures students’ faces while they are learning. The model then follows three steps: it finds each face, pulls out features linked to expressions, and classifies each expression into one of seven basic emotions, such as happiness, sadness, fear, or a neutral state. A special “attention” mechanism helps the AI focus on the most informative parts of the face, like the eyes or mouth, while ignoring distractions. Unlike older approaches that work best with clean, front‑facing photos, this system is designed to handle more realistic conditions, such as partial views, hands on faces, or students looking sideways.
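For readers who want a feel for what “attention” means here, the idea can be sketched in a few lines of Python. This is an illustration only, not the AFCNN architecture from the paper: the region names, feature numbers, and scores below are all made up, and `attention_pool` is a hypothetical helper. The sketch turns one score per face region into weights that sum to 1, then blends the regions' features according to those weights.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(region_features, region_scores):
    """Blend per-region feature vectors using attention weights."""
    weights = softmax(region_scores)
    dim = len(region_features[0])
    pooled = [
        sum(w * feat[i] for w, feat in zip(weights, region_features))
        for i in range(dim)
    ]
    return weights, pooled

# Hypothetical toy input: three face regions, each with a 2-number feature vector.
regions = ["eyes", "mouth", "background"]
features = [[0.9, 0.1], [0.8, 0.3], [0.1, 0.9]]
scores = [2.0, 2.0, -1.0]  # assumed values; a real model would learn these

weights, pooled = attention_pool(features, scores)
for name, w in zip(regions, weights):
    print(f"{name}: {w:.2f}")
```

With these made-up scores, the eyes and mouth share almost all of the weight and the background contributes very little, which is the behavior the article describes in words.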

How Well the System Actually Works

To test the AFCNN, the researchers trained it on a well‑known collection of facial images labeled with emotional categories and expanded the data with simple tweaks such as rotation and brightness changes. They then compared its performance with two established image‑recognition models, VGG16 and ResNet18. Under clear conditions with no obstruction, the new model correctly identified emotions about 81% of the time. It was especially good at recognizing happy and neutral expressions, where accuracy reached the mid‑80% range. When faces were partially blocked by hair, hands, or hats, accuracy dropped for all systems, but AFCNN still outperformed the others and showed more balanced results across different emotions, suggesting it is more robust for real classrooms.
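The difference between overall accuracy and accuracy for a single emotion is easy to see in code. The sketch below is a generic way to compute both, not the authors' evaluation script, and the labels are toy values invented for illustration rather than data from the study. `accuracy_report` is a hypothetical helper name.

```python
from collections import Counter

def accuracy_report(true_labels, predicted_labels):
    """Return overall accuracy and a dict of per-emotion accuracy."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    overall = sum(hits.values()) / len(true_labels)
    per_class = {label: hits[label] / totals[label] for label in totals}
    return overall, per_class

# Toy labels invented for illustration; these are NOT the study's data.
truth = ["happy", "happy", "neutral", "neutral", "fear", "fear"]
preds = ["happy", "happy", "neutral", "neutral", "fear", "sad"]

overall, per_class = accuracy_report(truth, preds)
print(f"overall: {overall:.2f}")
for label, acc in sorted(per_class.items()):
    print(f"{label}: {acc:.2f}")
```

A model can post a respectable overall number while doing poorly on one emotion, which is why the article's point about balanced results across emotions matters alongside the headline figure.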

From Emotional Readouts to Better Lessons

The study goes beyond raw accuracy and asks whether this technology actually improves teaching. In a month‑long trial with 200 college foreign language teachers, half used the emotion‑recognition system and half taught as usual. Teachers with access to real‑time emotional feedback changed their teaching plans more than twice as often during a lesson, reported higher satisfaction with their teaching, and saw greater student participation and interaction. The researchers also designed a simple mapping from emotion patterns to suggested responses—for example, shifting to discussion or review when signs of confusion or frustration appear—moving the system from merely observing emotions to actively guiding behavior.
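A mapping from emotion patterns to suggestions could look roughly like the sketch below. Everything specific in it is an assumption made for illustration: the article does not give the study's actual rules, so the label names, the grouping of “negative” emotions as a stand-in for confusion or frustration, the 40% threshold, and the `suggest_action` helper are all hypothetical.

```python
from collections import Counter

# Assumed grouping: which labels count as "negative" is a choice made for this sketch.
NEGATIVE = {"sad", "fear", "angry", "disgust"}

def suggest_action(face_labels, negative_threshold=0.4):
    """Map one snapshot of per-student emotion labels to a teaching suggestion."""
    if not face_labels:
        return "no faces detected; no suggestion"
    counts = Counter(face_labels)
    negative_share = sum(counts[e] for e in NEGATIVE) / len(face_labels)
    # Assumed rule: a large share of negative expressions triggers a change of activity.
    if negative_share >= negative_threshold:
        return "consider shifting to discussion or review"
    return "continue as planned"

# Hypothetical snapshot: one predicted label per visible student.
snapshot = ["happy", "neutral", "sad", "fear", "sad", "neutral"]
print(suggest_action(snapshot))
```

Keeping the rule as a plain threshold makes it easy for a teacher to understand why a suggestion appeared, at the cost of ignoring how emotions change over time.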

What This Means for Future Classrooms

In everyday terms, this research suggests that future classrooms may have a quiet assistant watching students’ faces and whispering to the teacher when the room is losing energy or when many students seem puzzled. The AFCNN system is not perfect—it still struggles with subtle emotions like disgust or fear, and it depends on high‑quality labeled images—but it shows that AI can reliably pick up emotional trends and that teachers can use this information to teach more responsively. For students, that could mean classes that feel more engaging and supportive; for teachers, it offers a new tool for professional development that blends psychology, education, and AI into a smarter, more human‑aware learning environment.

Citation: Shi, L. Exploring students’ emotion recognition and teachers’ teaching feedback in college foreign language classroom based on AFCNN model. Sci Rep 16, 5657 (2026). https://doi.org/10.1038/s41598-026-36747-0

Keywords: classroom emotion recognition, AI in education, foreign language teaching, teacher professional development, deep learning models