Clear Sky Science · en

Diagnosis of disorders of consciousness using nonlinear feature derived EEG topographic maps via deep learning

Listening for Signs of Awareness

When a loved one lies unresponsive after a severe brain injury, families and doctors face a heartbreaking question: is there any awareness left inside, and if so, how much? Traditional bedside exams can miss subtle signs of consciousness, leading to misdiagnoses that affect care, rehabilitation, and even end-of-life decisions. This study explores a new way to "listen" to the injured brain using EEG recordings, a mathematical measure of signal complexity, and deep-learning algorithms to better distinguish between two major conditions: the vegetative state and the minimally conscious state.

Two Very Different Unresponsive States

After serious brain injury, some patients open their eyes but show no clear signs of awareness. They are described as being in a vegetative state, also called unresponsive wakefulness syndrome (VS/UWS). Others may occasionally follow simple commands, track objects, or react meaningfully to voices or touch. These patients are said to be in a minimally conscious state (MCS). Although the two states can look similar at a glance, the chances for recovery and the kind of rehabilitation needed can be very different. Yet even expert clinical teams misclassify up to 40 percent of these patients when they rely mostly on bedside observation. The authors set out to support clinicians with an objective, brain-based tool that could be used at the bedside and would not depend on the patient’s ability to move or speak.

Measuring Brain Complexity with Sound and Silence

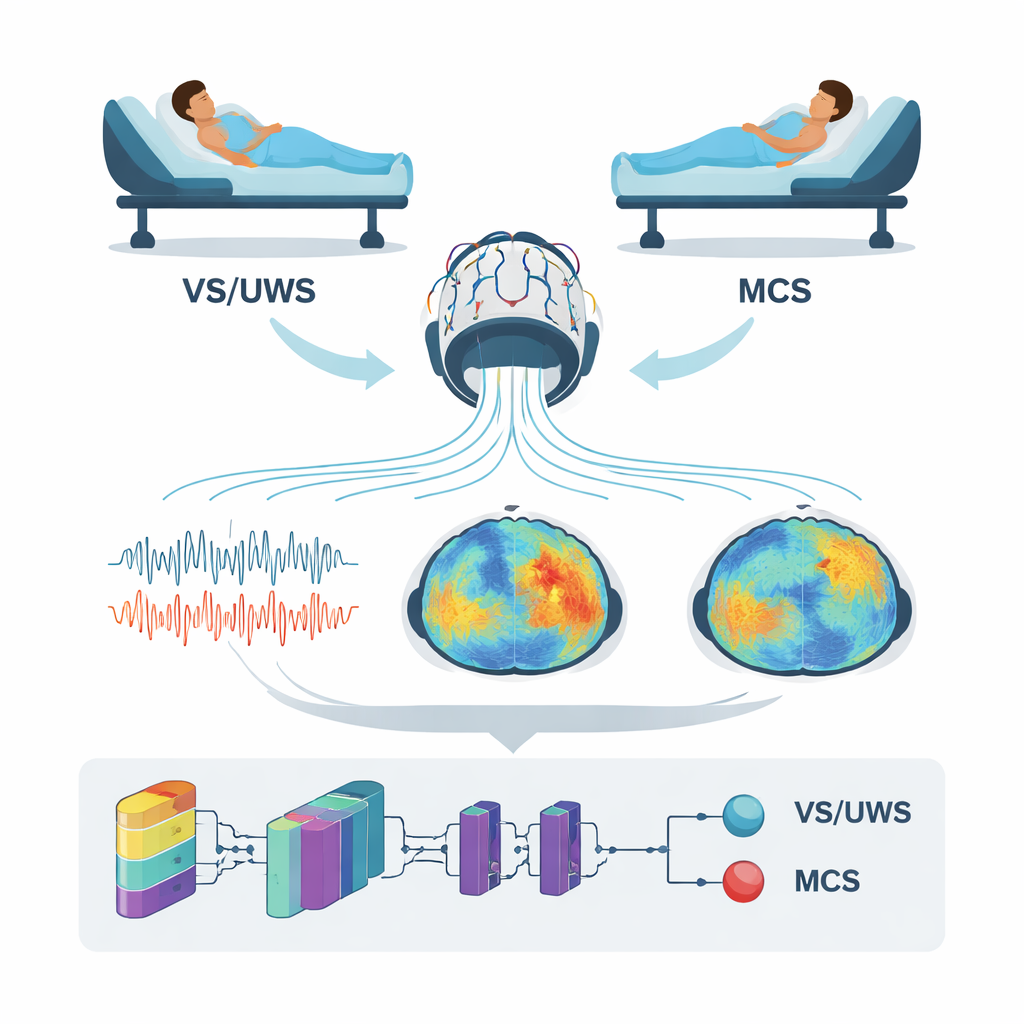

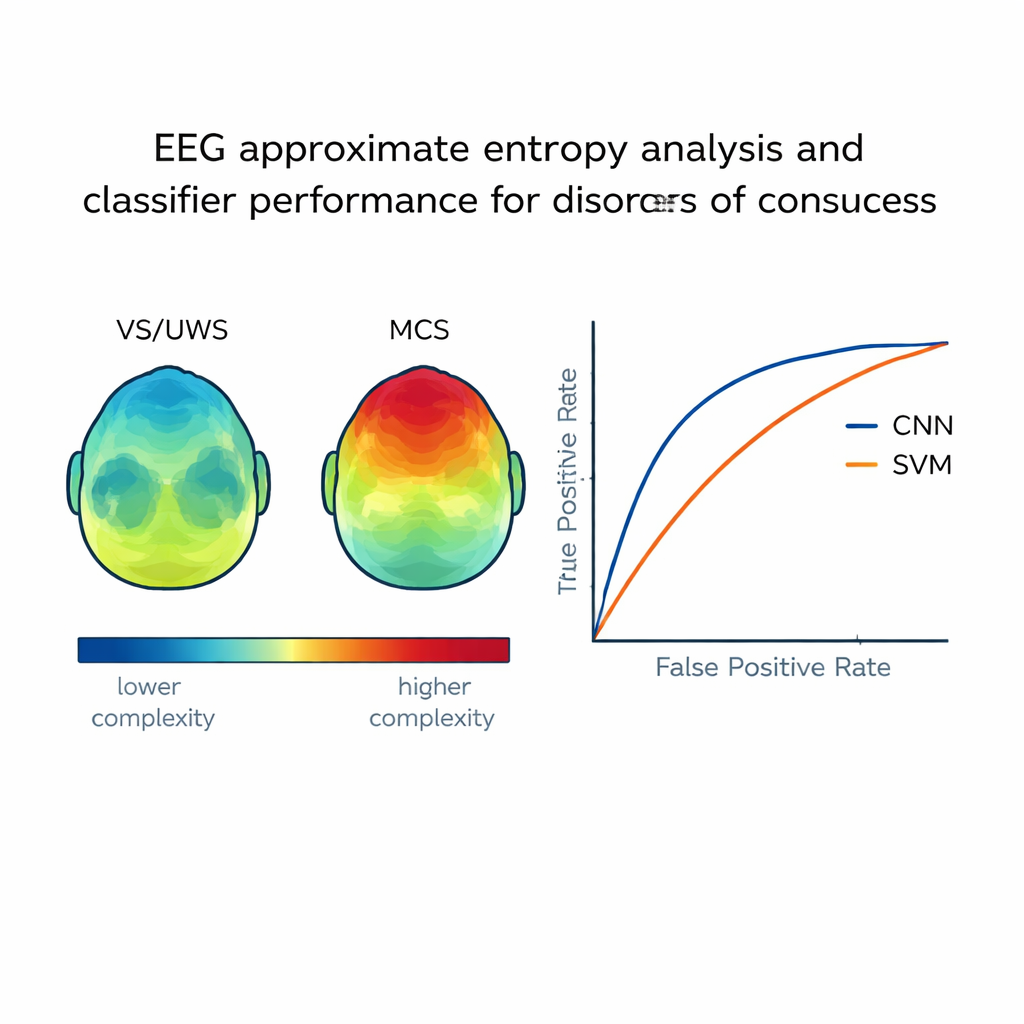

The researchers studied 104 adults with disorders of consciousness who were carefully evaluated with a standardized coma recovery scale. Each patient had their brain activity recorded with a 19-channel EEG system while resting quietly and again while listening to their favorite upbeat music, chosen based on family interviews. Rather than focusing on traditional brainwaves, the team calculated a nonlinear measure called approximate entropy, which captures how complex and unpredictable the EEG signal is over time. In simple terms, higher entropy reflects richer, more varied brain activity, which has been linked to conscious processing. The entropy values from each scalp electrode were turned into colorful topographic maps, creating a kind of “complexity portrait” of the brain in both rest and music conditions.
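To make "approximate entropy" less abstract, here is a minimal sketch of the standard definition, ApEn(m, r) = Φ^m(r) − Φ^(m+1)(r). It slides short templates of length m along the signal, measures for each template how often similar templates (within a tolerance r) occur, and checks how much that regularity drops when the templates are made one sample longer. The parameter values (m = 2, r = 0.2 × standard deviation) are common defaults assumed here, not settings reported in this summary. The random "EEG" at the end is placeholder data, and the names `segment` and `apen_per_channel` are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def approximate_entropy(signal, m=2, r_factor=0.2):
    """Approximate entropy ApEn(m, r) of a 1-D signal.

    m        -- template length; 2 is a common default (assumed)
    r_factor -- tolerance r as a fraction of the signal's standard
                deviation; 0.2 is a common default (assumed)
    """
    x = np.asarray(signal, dtype=float)
    r = r_factor * np.std(x)

    def phi(dim):
        # All overlapping templates of length `dim`: shape (n - dim + 1, dim).
        templates = sliding_window_view(x, dim)
        # Chebyshev (largest absolute) difference between every pair of templates.
        dist = np.max(np.abs(templates[:, None, :] - templates[None, :, :]), axis=2)
        # C_i: fraction of templates within r of template i. The self-match is
        # included, so C_i is never zero and the logarithm is always defined.
        c = np.mean(dist <= r, axis=1)
        return np.mean(np.log(c))

    # More regular, predictable signals give lower values; irregular ones higher.
    return phi(m) - phi(m + 1)


# Usage on placeholder data: one hypothetical 1-second, 19-channel segment at
# 250 Hz gives one entropy value per electrode, i.e. one value per map location.
rng = np.random.default_rng(0)
segment = rng.standard_normal((19, 250))
apen_per_channel = np.array([approximate_entropy(ch) for ch in segment])
```

The 19 values produced for each segment are the kind of per-electrode numbers that a topographic map would then spread across a drawing of the scalp and color.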

Teaching a Neural Network to Read the Maps

To turn these maps into a diagnostic aid, the team trained a convolutional neural network (CNN), a type of deep-learning system often used in image recognition, to distinguish VS/UWS from MCS. For every patient, multiple 1-second EEG segments were converted into entropy maps and assembled into images that served as input to the CNN. In parallel, the authors built two more traditional machine-learning models, a support vector machine and a generalized regression neural network, which worked from selected numerical features of the EEG. They then compared how well each approach classified an independent test group of patients whose diagnoses were known from careful clinical assessment.
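Of the two comparison models, the generalized regression neural network is simple enough to sketch in a few lines. In its usual form it is a kernel-weighted average: every training patient "votes" with its label, and closer patients (in feature space) get larger Gaussian weights. The sketch below assumes labels coded 1 = MCS and 0 = VS/UWS, a placeholder kernel width `sigma`, and a 0.5 decision threshold; the feature vectors (for example, per-channel entropy values) and the helper names are hypothetical, since the summary does not say which features or settings the authors used.

```python
import numpy as np


def grnn_predict(train_x, train_y, query_x, sigma=1.0):
    """Generalized regression neural network (GRNN) prediction.

    Every training sample acts as a pattern unit with a Gaussian kernel of
    width `sigma` (a hyperparameter to tune; 1.0 is only a placeholder).
    The output is the kernel-weighted average of the training targets.
    """
    train_x = np.asarray(train_x, dtype=float)
    train_y = np.asarray(train_y, dtype=float)
    query_x = np.atleast_2d(np.asarray(query_x, dtype=float))
    # Squared Euclidean distance from each query to each training sample.
    sq_dist = np.sum((query_x[:, None, :] - train_x[None, :, :]) ** 2, axis=2)
    # Subtracting each row's minimum cancels in the ratio below but keeps the
    # largest weight equal to 1, so the weights cannot all underflow to zero.
    shifted = sq_dist - sq_dist.min(axis=1, keepdims=True)
    weights = np.exp(-shifted / (2.0 * sigma ** 2))
    return weights @ train_y / weights.sum(axis=1)


def grnn_classify(train_x, train_y, query_x, sigma=1.0, threshold=0.5):
    # With hypothetical labels 1 = MCS and 0 = VS/UWS, the GRNN output is a
    # score between 0 and 1; thresholding it yields a predicted class.
    scores = grnn_predict(train_x, train_y, query_x, sigma)
    return (scores >= threshold).astype(int)
```

Because the GRNN has essentially one setting (`sigma`) and no learned spatial filters, it cannot exploit where on the scalp a pattern appears in the way a CNN reading map images can.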

Clear Differences in Brain Signals and Better Accuracy

The study found that patients in a minimally conscious state showed higher entropy than those in a vegetative state in several brain regions, especially over the left side of the head and while listening to their preferred music. In MCS patients, higher entropy values were meaningfully linked to higher scores on the coma recovery scale, suggesting that the measure tracks real differences in awareness. When it came to automatic classification, the CNN performed best: it correctly distinguished the two groups about 90 percent of the time and reached an area under the receiver operating characteristic curve (AUC) of 0.90. The AUC summarizes how well a model's outputs separate the two groups across all possible decision thresholds, where 1.0 means perfect separation and 0.5 means chance. The support vector machine did reasonably well, while the generalized regression network lagged behind. Together, these results suggest that feeding image-like brain maps into a deep-learning model can capture subtle spatial patterns that simpler methods miss.
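The AUC and the "90 percent of the time" figure measure different things. Accuracy depends on one chosen cutoff, while the AUC looks at the model's raw scores and equals the probability that a randomly chosen MCS patient receives a higher score than a randomly chosen VS/UWS patient (ties count as one half). A minimal sketch of that rank-based calculation follows; the labels and scores in the usage line are made-up illustrative values, not data from the study.

```python
import numpy as np


def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise (Mann-Whitney) comparison.

    labels -- 1 for the positive class (here, MCS), 0 for the negative (VS/UWS)
    scores -- model outputs; higher means "more likely MCS"
    """
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    pos = scores[labels]
    neg = scores[~labels]
    # Compare every positive score with every negative score.
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (pos.size * neg.size)


# Illustrative values only: 3 of the 4 MCS-vs-VS/UWS pairs are ranked correctly.
example_auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

Here `example_auc` is 0.75: the patient scored 0.35 is outranked by one VS/UWS patient (0.4), so one of the four pairs is ordered wrongly.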

What This Could Mean for Patients and Families

For non-specialists, the key conclusion is that the brain’s “signal complexity” during rest and while listening to meaningful music carries valuable clues about hidden awareness. By turning these clues into easy-to-interpret maps and letting a neural network learn from them, the researchers created a tool that can help tell apart patients who are truly unaware from those who retain a fragile but real form of consciousness. While the work needs to be confirmed in larger and more diverse groups of patients, it points toward a future in which routine EEG recordings, combined with thoughtfully chosen sounds and modern artificial intelligence, offer a more reliable voice for those who cannot speak for themselves.

Citation: Qu, S., Wu, X., Huang, L. et al. Diagnosis of disorders of consciousness using nonlinear feature derived EEG topographic maps via deep learning. Sci Rep 16, 7417 (2026). https://doi.org/10.1038/s41598-026-36733-6

Keywords: disorders of consciousness, EEG, deep learning, vegetative state, minimally conscious state