Clear Sky Science

Latency and energy-aware adaptive service migration in mobile edge computing

Why moving apps closer to you matters

Whenever you play an online game in your car or stream AR directions on your phone, or whenever a smart‑city sensor sends data, the underlying computation has to run somewhere. Mobile Edge Computing (MEC) pushes that work from far‑away data centers to small servers sitting near cellular base stations, cutting delay and making apps feel more responsive. But keeping these services close to moving users means frequently “moving” (migrating) the running application between nearby edge servers. Too much moving wastes energy and money; too little creates lag and frustration. This study explores how to strike a smart balance using deep reinforcement learning.

Balancing speed and electricity use

Most earlier research on MEC service migration focused mainly on one goal: keeping user‑perceived delay as low as possible. That typically means chasing a user as they move and repeatedly shifting their app to the nearest server. However, every migration consumes extra communication energy and adds its own delay. Many previous methods also assumed plenty of server capacity and stable conditions, ignoring the reality that edge servers are resource‑constrained, are shared by many competing users, and see rapidly changing loads and wireless quality. The authors argue that migration energy should be treated as a core objective, on equal footing with delay, and that migration policies must adapt online to user movement, server load, and network fluctuations.

From math problem to learning agent

The researchers first build a detailed mathematical model of an MEC system with multiple base stations, co‑located edge servers, and mobile users. Each user offloads computing tasks to nearby servers over wireless links. Total service delay is broken into three parts: time to send the task to the base station, time to compute it on the server, and time spent if the service is moved between servers over wired backhaul. Migration energy is modeled mainly from the amount of data that must be transferred when a service moves. The overall goal is to minimize both delay and migration energy while respecting limits on each server’s computing capacity and each service’s deadline. Solving this mixed‑integer, nonlinear problem exactly is too slow to do in real time, so the team turns to deep reinforcement learning, where an agent learns good decisions by interacting with a simulated environment.
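The three‑part delay and the data‑driven migration energy described above can be illustrated with a minimal sketch. It is not the paper's exact formulation: the `Task` fields, the parameter names, and the linear per‑bit energy cost (`joules_per_bit`) are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task description (field names are assumptions)."""
    data_bits: float    # input data uploaded to the base station (bits)
    cpu_cycles: float   # computation required on the edge server (cycles)
    state_bits: float   # service state moved if the service migrates (bits)


def total_delay(task, uplink_bps, server_hz, backhaul_bps, migrated):
    """Sum of upload, compute, and (optional) migration delay, in seconds."""
    t_upload = task.data_bits / uplink_bps
    t_compute = task.cpu_cycles / server_hz
    # Migration delay only applies when the service changes servers.
    t_migrate = task.state_bits / backhaul_bps if migrated else 0.0
    return t_upload + t_compute + t_migrate


def migration_energy(task, joules_per_bit, migrated):
    """Assumed linear model: energy grows with the data transferred."""
    return task.state_bits * joules_per_bit if migrated else 0.0


task = Task(data_bits=8e6, cpu_cycles=1e9, state_bits=4e6)
delay = total_delay(task, uplink_bps=1e7, server_hz=2e9,
                    backhaul_bps=1e8, migrated=True)
energy = migration_energy(task, joules_per_bit=1e-7, migrated=True)
print(f"delay = {delay:.2f} s, migration energy = {energy:.2f} J")
```

In this toy setting, skipping the migration removes both the backhaul delay and the energy cost, but in a real system it would usually raise the upload delay as the user moves away from the serving base station. That tension is what the optimization has to resolve.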

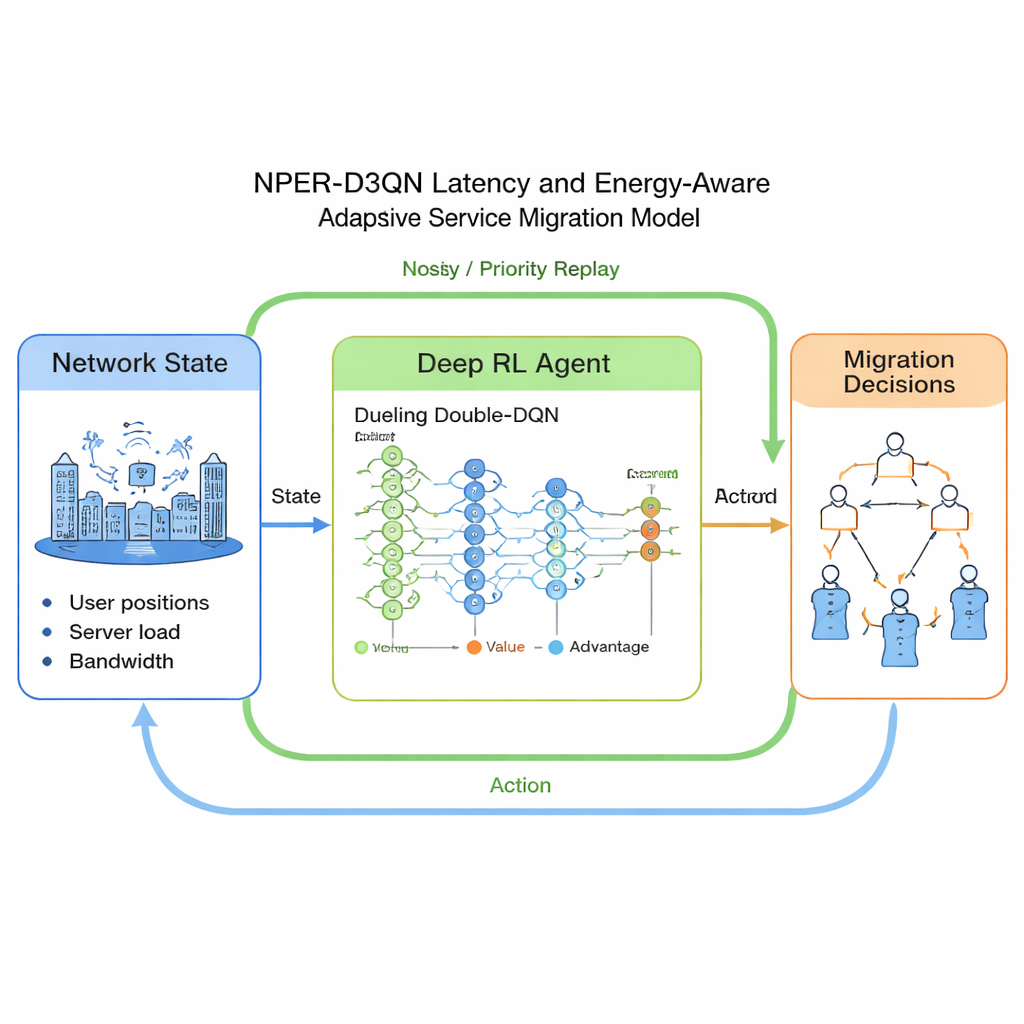

How the adaptive migration brain works

The proposed method, called NPER‑D3QN, is a variant of Deep Q‑Networks (DQN). The agent’s input “state” summarizes where users are, how far they are from their serving base station, how loaded each edge server is, how much computing capacity is available, what the wireless data rates are, and how large and compute‑hungry each service is. Its “actions” are choices of which edge server should host each user’s service in the next time slot. The reward function encourages low delay relative to each service’s deadline while penalizing migration energy, pushing the agent to trade off speed against electricity use. Technically, the model combines a dueling network that separately estimates the value of being in a state and the benefit of each action, a “double” Q‑learning structure that reduces over‑optimistic estimates, and two learning aids: noisy networks, which drive exploration, and prioritized experience replay, which revisits the most informative past experiences more often. Together these allow it to learn faster and more reliably in complex, changing conditions.
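To make these building blocks more concrete, here is a small sketch in plain Python. The reward shape and its weights (`w_delay`, `w_energy`, `energy_scale`) are assumptions, not the paper's actual reward; the dueling aggregation and the double Q‑learning target follow the standard textbook forms, with the neural networks replaced by plain lists of numbers.

```python
def reward(delay, deadline, energy, w_delay=0.5, w_energy=0.5,
           energy_scale=1.0):
    """Assumed reward: penalize delay relative to the deadline and
    migration energy relative to a reference scale (weights hypothetical)."""
    return -(w_delay * delay / deadline + w_energy * energy / energy_scale)


def dueling_q(state_value, advantages):
    """Standard dueling aggregation: Q(s, a) = V(s) + A(s, a) - mean(A)."""
    mean_adv = sum(advantages) / len(advantages)
    return [state_value + a - mean_adv for a in advantages]


def double_q_target(r, gamma, q_online_next, q_target_next, done=False):
    """Standard double Q-learning target: the online network picks the
    next action, the target network evaluates it."""
    if done:
        return r
    best = max(range(len(q_online_next)), key=q_online_next.__getitem__)
    return r + gamma * q_target_next[best]


# Three candidate edge servers for one user's service.
q_values = dueling_q(state_value=1.0, advantages=[0.0, 2.0, 4.0])
r = reward(delay=0.5, deadline=1.0, energy=0.4)
y = double_q_target(r, gamma=0.9,
                    q_online_next=[0.2, 0.8, 0.5],
                    q_target_next=[1.0, 2.0, 3.0])
print(q_values, r, y)
```

Separating action selection (online network) from action evaluation (target network) is what curbs the over‑optimistic estimates mentioned above. Noisy layers and prioritized replay are omitted here to keep the sketch short.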

Putting the approach to the test

To see how well NPER‑D3QN works, the authors simulate a city‑like grid with dozens of base stations and up to hundreds of mobile users moving randomly and sending tasks of varying sizes. Edge servers have limited computing power and can host only a fixed number of virtual machines, creating realistic queuing and contention. They compare their method with six state‑of‑the‑art baselines, including classic DQN, improved double‑dueling variants, and schemes that either always chase the nearest server or focus solely on minimizing delay. Across a range of scenarios, NPER‑D3QN converges to good strategies faster and consistently achieves lower average service delay, lower migration‑related energy consumption, and fewer rejected migrations when servers are full. In a large‑scale test with 720 users and 96 servers, it cuts delay by up to about two‑thirds and migration energy by over 90% compared with some alternatives, while keeping computation time per decision within practical limits.
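For intuition about the simplest kind of baseline, the sketch below illustrates an "always chase the nearest server" rule with a per‑server capacity limit. It is a hypothetical reconstruction, not the authors' simulator: the function names, the 2D coordinates, and the single `capacity` value are all assumptions.

```python
import math


def nearest_server(user_xy, servers_xy):
    """Index of the server closest to the user (Euclidean distance)."""
    return min(range(len(servers_xy)),
               key=lambda i: math.dist(user_xy, servers_xy[i]))


def always_migrate(users_xy, current_host, servers_xy, capacity):
    """Move each service to its user's nearest server if a slot is free.

    Returns the new host list and the number of rejected migrations.
    """
    load = [0] * len(servers_xy)
    for host in current_host:
        load[host] += 1

    new_host = list(current_host)
    rejected = 0
    for user, xy in enumerate(users_xy):
        target = nearest_server(xy, servers_xy)
        if target == new_host[user]:
            continue  # already on the nearest server, nothing to do
        if load[target] < capacity:
            load[new_host[user]] -= 1
            load[target] += 1
            new_host[user] = target
        else:
            rejected += 1  # target server is full, migration refused
    return new_host, rejected


servers = [(0.0, 0.0), (10.0, 0.0)]
users = [(9.0, 0.0), (8.0, 0.0), (1.0, 0.0)]
hosts, rejected = always_migrate(users, [0, 0, 0], servers, capacity=1)
print(hosts, rejected)
```

A rule like this reacts only to distance. It ignores migration energy, and it discovers that a server is full only when a migration is refused, which is the kind of behavior a learned policy is meant to improve on.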

What this means for future connected services

For non‑specialists, the takeaway is that simply pushing apps closer to users is not enough: we also need intelligent control of when and where running services move. This work shows that a learning‑based controller can “juggle” the competing goals of responsiveness, energy savings, and limited edge capacity without hand‑crafted rules. If similar systems are deployed in real networks, they could help operators deliver smoother experiences for applications like autonomous driving, immersive AR, and industrial IoT while holding down electricity bills and infrastructure strain. The authors note that their study is simulation‑based and omits some real‑world details such as full server power consumption and imperfect monitoring, but it marks a promising step toward greener, more adaptable edge computing.

Citation: Li, L., Lv, J., Wang, S. et al. Latency and energy-aware adaptive service migration in mobile edge computing. Sci Rep 16, 6178 (2026). https://doi.org/10.1038/s41598-026-36711-y

Keywords: mobile edge computing, service migration, deep reinforcement learning, latency optimization, energy efficiency