Clear Sky Science

Secant Optimization Algorithm for efficient global optimization

Smarter Search for Tough Problems

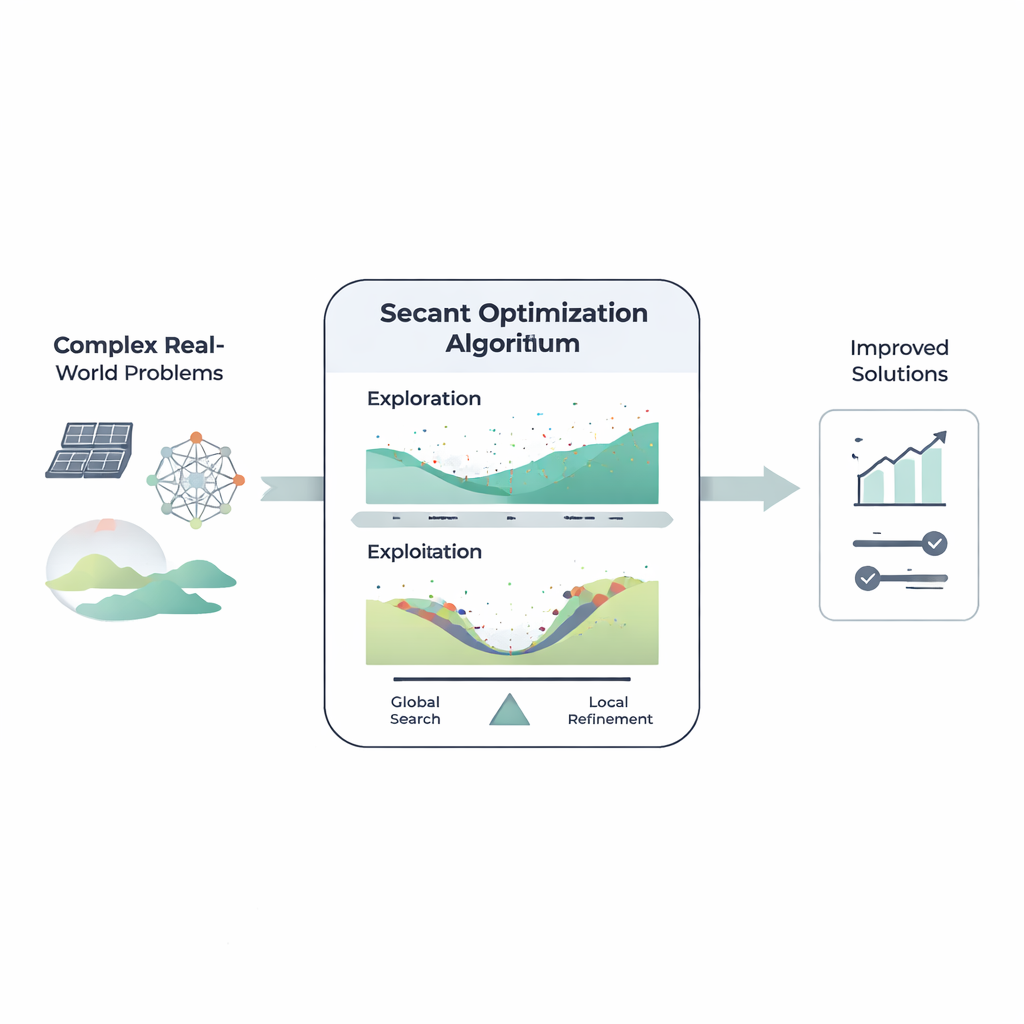

From designing cleaner solar panels to training accurate image-recognition systems, many of today’s challenges boil down to the same task: searching through an enormous space of possibilities to find a good solution. This paper introduces the Secant Optimization Algorithm (SOA), a new way to conduct that search more efficiently. Inspired by a classic idea from calculus but engineered for messy, real-world data, SOA aims to be both fast and dependable when traditional methods struggle.

Why Optimization Needs New Ideas

Modern engineering and data science problems often involve dozens or hundreds of adjustable settings, multiple goals, and tangled relationships that are hard to write down in simple formulas. Classic techniques that follow exact gradients, like steepest descent, can fail when the landscape is rough or riddled with local traps, or when derivatives are hard or impossible to compute. In response, researchers have developed “metaheuristic” algorithms that mimic nature, physics, or mathematics to explore these difficult landscapes. These methods, such as genetic algorithms or swarm optimizers, have proven remarkably versatile but still face a trade-off between roaming widely and zooming in precisely.

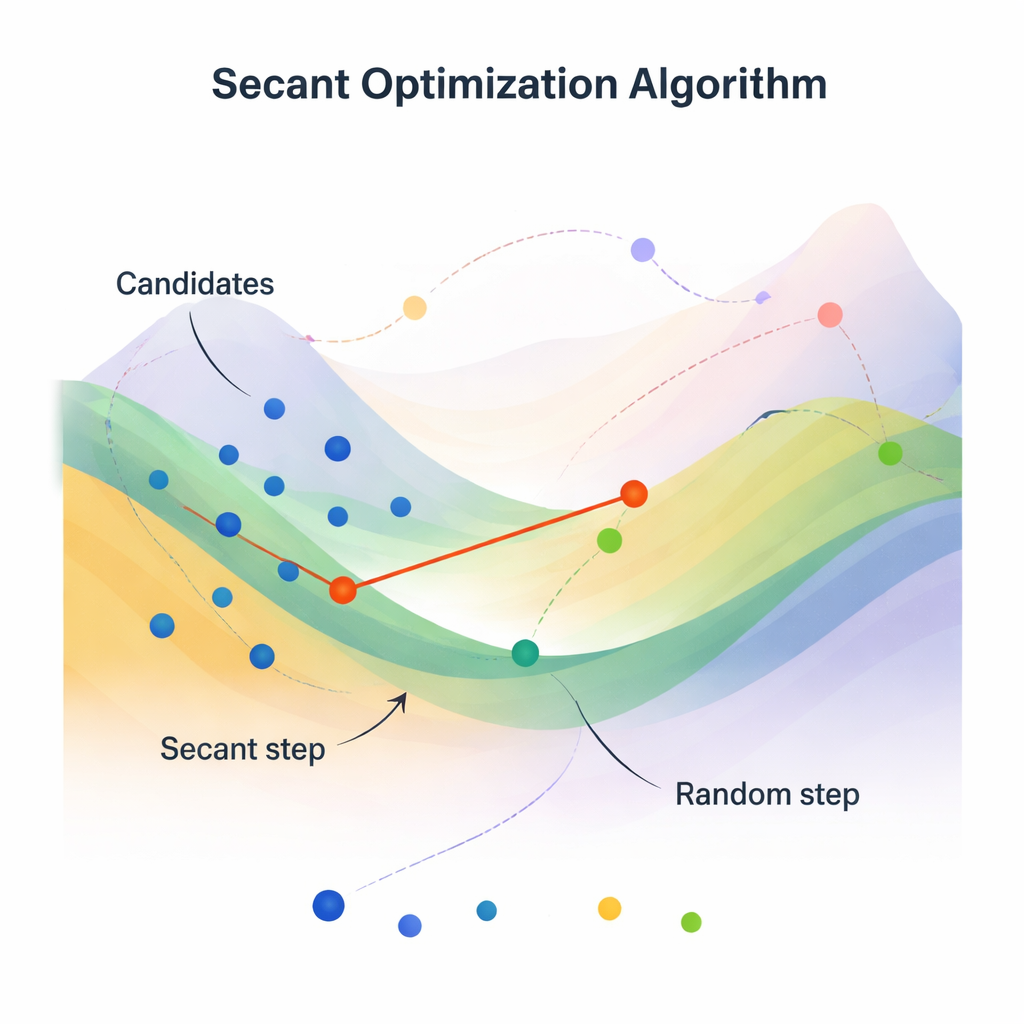

Turning a Textbook Trick into a Search Engine

The heart of SOA is the secant method, an old numerical trick for finding where a curve crosses zero without needing exact derivatives. Instead of using a slope computed from calculus, the secant method draws a straight line between two nearby points on the curve and uses the slope of that line as a stand-in for the derivative. SOA generalizes this idea to many dimensions and many candidate solutions at once. It maintains a population of vectors (possible answers) and repeatedly updates them using secant-like steps that approximate how the objective function is trending, using only function values. This makes the method attractive in settings where gradients are noisy, expensive, or undefined, such as tuning neural network hyperparameters by looking at validation error.
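For readers who want to see the textbook trick itself, here is a minimal sketch of the classic one-dimensional secant method in Python. It is not SOA, only the root-finding idea that SOA builds on. The function name `secant_root`, the tolerance, and the iteration cap are illustrative choices, not taken from the paper.

```python
def secant_root(f, x0, x1, tol=1e-10, max_iter=50):
    """Approximate a root of f with the classic secant method.

    x0 and x1 are two starting guesses; no derivative of f is needed.
    """
    f0, f1 = f(x0), f(x1)
    for _ in range(max_iter):
        if f1 == f0:
            # The line through the two points is flat, so it gives no
            # usable slope; stop with the current estimate.
            break
        # Slope of the straight line through the two latest points.
        slope = (f1 - f0) / (x1 - x0)
        # The point where that line crosses zero is the next estimate.
        x2 = x1 - f1 / slope
        x0, f0 = x1, f1
        x1, f1 = x2, f(x2)
        if abs(x1 - x0) < tol:
            break
    return x1


# Example: the positive root of x^2 - 2 is the square root of 2.
root = secant_root(lambda x: x * x - 2.0, 1.0, 2.0)
print(root)
```

Each step needs only two function values, which is the property that makes the idea appealing when derivatives are unavailable.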

Balancing Wide Exploration with Sharp Focus

SOA’s design explicitly separates how it explores and how it refines. In the exploration stage, each candidate is adjusted using a secant-based rule that combines information from the current best solution, the current vector, and a randomly chosen peer. This helps steer the search in directions that appear promising, without being purely random. In the exploitation stage, SOA introduces an “expansion factor” and controlled randomness. It nudges solutions toward the best, the average, the closest, and even the farthest points in the population, and mixes in random walks. A simple mutation rule occasionally keeps an old position instead of the new one, which preserves diversity. Together, these mechanics help SOA escape local traps while still homing in on high-quality answers.
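This summary does not give SOA's actual update equations, so the following Python sketch is only a toy illustration of the general pattern described above: a population of candidates, moves guided by the best solution and a randomly chosen peer, and a rule that occasionally keeps the old position. Everything specific here is an assumption made for illustration, including the name `toy_secant_like_search`, the step formula, the `keep_prob` parameter, the greedy acceptance rule, and the sphere test function.

```python
import random


def sphere(x):
    """Toy objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)


def toy_secant_like_search(f, dim=5, pop_size=20, iters=200,
                           keep_prob=0.1, seed=0):
    """Illustrative derivative-free population search (NOT the published SOA)."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-5.0, 5.0) for _ in range(dim)]
           for _ in range(pop_size)]
    fit = [f(x) for x in pop]
    history = [min(fit)]  # best objective value after each generation

    for _ in range(iters):
        best = list(pop[min(range(pop_size), key=lambda k: fit[k])])
        for i in range(pop_size):
            j = rng.randrange(pop_size)  # randomly chosen peer
            # Assumption: the sign of the difference in function values
            # stands in for a secant slope. Move toward a better peer,
            # away from a worse one.
            sign = 1.0 if fit[j] < fit[i] else -1.0
            trial = []
            for d in range(dim):
                step = (rng.random() * (best[d] - pop[i][d])
                        + rng.random() * sign * (pop[j][d] - pop[i][d]))
                new_val = pop[i][d] + step
                # Occasionally keep the old coordinate to preserve diversity.
                if rng.random() < keep_prob:
                    new_val = pop[i][d]
                trial.append(new_val)
            f_trial = f(trial)
            # Assumption: greedy acceptance, keep the trial only if it improves.
            if f_trial < fit[i]:
                pop[i], fit[i] = trial, f_trial
        history.append(min(fit))

    best_i = min(range(pop_size), key=lambda k: fit[k])
    return pop[best_i], fit[best_i], history


best_x, best_f, history = toy_secant_like_search(sphere)
print(best_f)
```

Because a trial point replaces a candidate only when it improves, the best value in `history` never gets worse from one generation to the next. The published algorithm adds further ingredients, such as the expansion factor and moves relative to the average, closest, and farthest points, that this toy omits.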

Testing on Benchmarks and Real Devices

To see whether SOA is more than a clever idea on paper, the authors test it on widely used benchmark families known as CEC2021 and CEC2020. These functions are designed to be punishing: some are low-dimensional but full of false minima; others stretch to 50 or 100 dimensions. Across these tests, SOA is compared with two groups of competing algorithms: 11 math-inspired methods and 9 recent or variant optimizers. Using statistics such as average error, variability, convergence curves, and rank-based tests, SOA consistently matches or outperforms most rivals, particularly in reaching good solutions quickly and reliably. The authors then move beyond synthetic tests to two demanding real-world tasks: estimating key parameters in photovoltaic (PV) models and automatically tuning the hyperparameters of convolutional neural networks for several image datasets.

From Solar Panels to Neural Networks

In solar energy, accurate models of PV cells and modules are essential to predict output and optimize operation. The team applies SOA to several standard PV models, including single-diode, double-diode, and full module descriptions. Using measured current–voltage data, SOA adjusts model parameters to minimize error and is shown to achieve lower or comparable root-mean-square error than a range of established optimizers. In machine learning experiments, SOA is used to tune the architecture and training settings of a convolutional neural network on MNIST and related image datasets. Here too, the algorithm finds hyperparameter combinations that yield competitive or superior classification accuracies relative to other automated search strategies.
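To make the PV task concrete, the sketch below shows the kind of root-mean-square error objective that an optimizer minimizes for the standard single-diode model, in which the current satisfies I = I_ph - I_0 (exp((V + I R_s) / (n V_t)) - 1) - (V + I R_s) / R_sh. The function name, the parameter ordering, the thermal voltage of about 0.02585 V (roughly 300 K, one cell), and the synthetic data are all assumptions for illustration. None of the numbers come from the paper.

```python
import math


def single_diode_rmse(params, voltages, currents, thermal_voltage=0.02585):
    """RMSE between measured currents and the single-diode model.

    params = (i_ph, i_0, n, r_s, r_sh): photocurrent, diode saturation
    current, ideality factor, series resistance, shunt resistance.
    The model is implicit in the current, so the measured current is
    plugged into the right-hand side, a common choice for this objective.
    """
    i_ph, i_0, n, r_s, r_sh = params
    total = 0.0
    for v, i in zip(voltages, currents):
        v_d = v + i * r_s  # voltage across the diode and shunt branch
        i_model = (i_ph
                   - i_0 * (math.exp(v_d / (n * thermal_voltage)) - 1.0)
                   - v_d / r_sh)
        total += (i - i_model) ** 2
    return math.sqrt(total / len(voltages))


# Synthetic, made-up data: with r_s = 0 the model is explicit in the
# current, so exact "measurements" can be generated directly.
true_params = (0.76, 3e-7, 1.5, 0.0, 50.0)
voltages = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5]
currents = [
    true_params[0]
    - true_params[1] * (math.exp(v / (true_params[2] * 0.02585)) - 1.0)
    - v / true_params[4]
    for v in voltages
]

rmse_true = single_diode_rmse(true_params, voltages, currents)
rmse_off = single_diode_rmse((0.80, 3e-7, 1.5, 0.0, 50.0), voltages, currents)
print(rmse_true, rmse_off)
```

An optimizer such as SOA would treat `params` as the candidate vector and search for the values that drive this error as low as possible on measured current–voltage data.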

What This Means in Practice

For non-specialists, the main message is that SOA offers a practical new “search engine” for hard optimization problems where the landscape is rough and gradients are not available. By borrowing the geometry of the secant method and embedding it into a population-based search with well-balanced randomness, the algorithm often converges faster and more accurately than many current alternatives. Because it is relatively simple, derivative-free, and light on tuning parameters, SOA can be plugged into a variety of applications, from designing more efficient solar power systems to configuring deep learning models. That makes it a promising addition to the toolbox for engineers and data scientists alike.

Citation: Ibrahim, M.Q., Qaraad, M., Hussein, N.K. et al. Secant Optimization Algorithm for efficient global optimization. Sci Rep 16, 6659 (2026). https://doi.org/10.1038/s41598-026-36691-z

Keywords: global optimization, metaheuristic algorithms, secant optimization algorithm, photovoltaic modeling, hyperparameter tuning