Clear Sky Science

Real time fire and smoke detection using vision transformers and spatiotemporal learning

Why Faster Fire Alerts Matter

Fires in homes, factories, and forests can turn deadly in minutes. Today, many alarms still rely on heat or smoke sensors that react only after flames are well established. This article describes a new computer-vision system that can spot signs of fire and smoke in camera feeds almost instantly, even in challenging conditions like low light or heavy haze. By combining several advanced artificial intelligence techniques into a single model, the researchers aim to give firefighters, city planners, and environmental agencies a much earlier warning signal—potentially saving lives, property, and ecosystems.

The Growing Challenge of Detecting Flames

Modern cities and forests are increasingly monitored by cameras, but teaching computers to reliably recognize fire and smoke in those images and videos is tricky. Traditional approaches use neural networks that work well on still images or short clips, yet they often struggle in messy real-world scenes. A single snapshot might show something that looks like smoke but is only fog or exhaust. Video-focused systems can track how shapes move over time, but they tend to be slow and demanding on hardware. As a result, earlier models frequently raise false alarms or miss subtle, fast-changing signs of danger—especially in poor lighting, dense smoke, or cluttered backgrounds.

A Hybrid AI "Watcher" for Images and Video

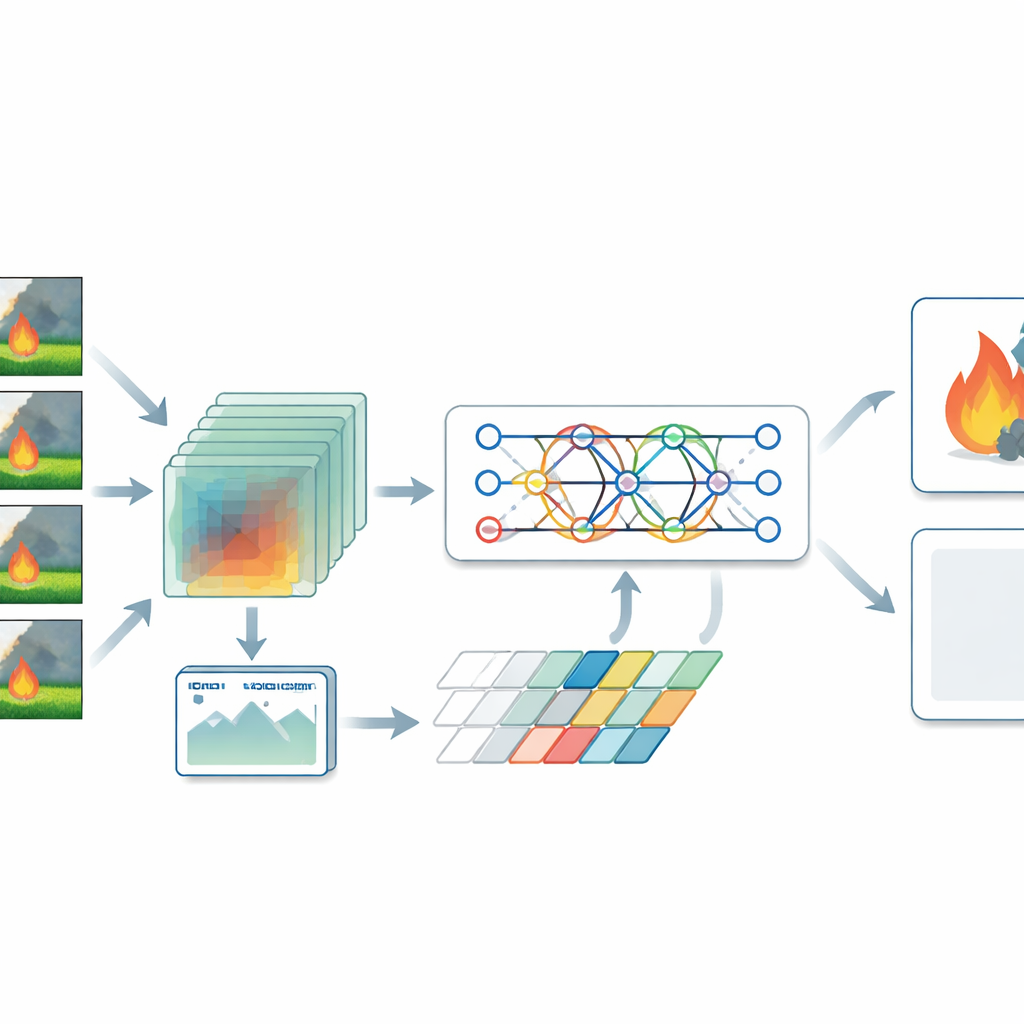

The authors propose a hybrid model that treats fire detection as both a spatial and a temporal problem. For still images, they use a type of neural network called a vision transformer, which looks at an image as a patchwork of regions and learns how distant areas relate to each other. This helps it notice broad patterns, such as wisps of smoke spreading across a valley or flames scattered in a forest. For video, the system relies on a three-dimensional convolutional network that processes stacks of frames at once, capturing how smoke and fire change over time. A transformer encoder then examines those changing patterns and focuses attention on the moments and regions most likely to indicate danger, rather than giving equal weight to every frame.
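Two ideas from this description can be made concrete in a small sketch: cutting an image into patches, as a vision transformer does before relating them to one another, and weighting video frames by relevance instead of averaging them equally. The code below is a simplified illustration in plain Python, not the authors' implementation. The function names, the toy 4x4 image, the per-frame feature vectors, and the fixed `query` vector are all assumptions made for the example; a real model learns its queries, keys, and values during training.

```python
import math


def split_into_patches(image, patch_size):
    """Split a 2D grid (list of rows) into flattened, non-overlapping square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(frame_features, query):
    """Average per-frame feature vectors, weighted by similarity to a query.

    Simplification: one fixed query vector stands in for learned attention.
    """
    scale = math.sqrt(len(query))
    scores = [
        sum(f * q for f, q in zip(features, query)) / scale
        for features in frame_features
    ]
    weights = softmax(scores)
    pooled = [
        sum(w * features[i] for w, features in zip(weights, frame_features))
        for i in range(len(query))
    ]
    return pooled, weights


# Hypothetical 4x4 "image" whose pixel values are just 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = split_into_patches(image, 2)

# Hypothetical features for three frames; the third has the strongest response.
frame_features = [[0.1, 0.0], [0.2, 0.1], [2.0, 1.5]]
query = [1.0, 1.0]
pooled, weights = attention_pool(frame_features, query)
```

In this toy run the third frame receives the largest weight, which mirrors the idea of focusing on the most telling moments rather than treating every frame alike.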

Blending Clues and Balancing the Data

A key step in the system is a fusion layer that blends detailed still-image clues with motion patterns from video. By combining these complementary views, the model can better distinguish real fires from harmless look-alikes such as sunset glare, fog, or clouds. The researchers also realized that many public datasets contain far more fire than non-fire examples, which can bias a model toward over-reporting flames. To counter this, they generated a wide variety of realistic non-fire scenes through careful data augmentation—changing brightness, cropping, and flipping images, and simulating situations like foggy mornings or dim interiors. They then trained the model with a loss function that explicitly balances mistakes on fire and non-fire cases, improving reliability in day-to-day use.
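The balancing steps described above can be sketched as follows. This is a minimal illustration under stated assumptions: the summary does not name the exact loss function, so a class-weighted binary cross-entropy with inverse-frequency weights is used here as one common way to balance mistakes across classes. The helper names, the tiny grayscale "image", and the label set are hypothetical.

```python
import math


def adjust_brightness(image, factor):
    """Scale grayscale pixel values by `factor`, clamped to the 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


def horizontal_flip(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def class_weights(labels):
    """Inverse-frequency weights, so rarer classes count more per example."""
    counts = {c: labels.count(c) for c in set(labels)}
    return {c: len(labels) / (len(counts) * k) for c, k in counts.items()}


def weighted_bce(probs, labels, weights, eps=1e-7):
    """Class-weighted binary cross-entropy (an assumed stand-in for the paper's loss)."""
    total = 0.0
    weight_sum = 0.0
    for p, y in zip(probs, labels):
        p = min(1 - eps, max(eps, p))  # keep log() away from 0
        loss = -(y * math.log(p) + (1 - y) * math.log(1 - p))
        total += weights[y] * loss
        weight_sum += weights[y]
    return total / weight_sum


# Hypothetical augmentation of a 2x2 grayscale image.
image = [[100, 200], [50, 250]]
brighter = adjust_brightness(image, 1.5)
flipped = horizontal_flip(image)

# Hypothetical imbalanced labels: three fire examples (1), one non-fire (0).
labels = [1, 1, 1, 0]
weights = class_weights(labels)

# One confident mistake on a fire example versus one on the non-fire example.
loss_fire_mistake = weighted_bce([0.1, 0.9, 0.9, 0.1], labels, weights)
loss_nonfire_mistake = weighted_bce([0.9, 0.9, 0.9, 0.9], labels, weights)
```

With these weights, a mistake on the scarce non-fire class costs more than the same mistake on a fire example, which discourages a model from defaulting to "fire" simply because that class dominates the data.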

Putting the System to the Test

To see how well their approach works, the authors tested it on two widely used datasets: one of nearly a thousand still images from the NASA Space Apps Challenge, and another of fire-related videos from Kaggle. After preprocessing and balancing, they trained and evaluated their hybrid model alongside well-known baselines such as ResNet, VGG, LSTM, pure 3D convolutional networks, and several hybrid pairings of these older methods. The new system reached about 99.2% accuracy on the NASA images and 98.3% on the video dataset, clearly outperforming the traditional models, whose accuracy typically ranged from the mid-80s to the mid-90s in percentage terms. It also ran quickly, at tens of milliseconds per frame, and kept a modest model size, which makes it suitable for deployment on edge devices such as small GPUs and embedded boards.
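To show what figures like these measure, the sketch below computes accuracy, false-alarm rate, and miss rate from a set of predictions, and converts a per-frame latency into frames per second. The labels, predictions, and the 40 ms latency are made-up values for illustration only; they are not results from the paper, and the function names are hypothetical.

```python
def detection_metrics(true_labels, predicted_labels):
    """Compute accuracy, false-alarm rate, and miss rate for binary labels (1 = fire)."""
    tp = fp = tn = fn = 0
    for truth, guess in zip(true_labels, predicted_labels):
        if truth == 1 and guess == 1:
            tp += 1  # fire correctly detected
        elif truth == 0 and guess == 1:
            fp += 1  # false alarm
        elif truth == 0 and guess == 0:
            tn += 1  # non-fire correctly ignored
        else:
            fn += 1  # missed fire
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "false_alarm_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "miss_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


def frames_per_second(latency_ms):
    """Convert per-frame processing time in milliseconds to throughput."""
    return 1000.0 / latency_ms


# Hypothetical ground truth and predictions for ten clips.
true_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted_labels = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
metrics = detection_metrics(true_labels, predicted_labels)

# Hypothetical latency within the "tens of milliseconds" range.
fps = frames_per_second(40.0)
```

Reporting false alarms and missed fires separately is useful because a single accuracy number can hide which kind of error a system tends to make.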

What This Means for Everyday Safety

In everyday terms, this research shows that a thoughtfully designed AI can watch camera feeds in real time and reliably answer a simple but vital question: "Is there fire or dangerous smoke here right now?" By combining broad visual context, motion over time, and smart attention to the most telling details, the hybrid model sharply reduces both missed fires and false alarms. With further tuning and exposure to more varied scenes—such as dense cities, underground spaces, and extreme weather—it could become a practical backbone for smarter alarm systems, wildfire monitoring networks, and industrial safety tools that react faster and more accurately than many of today’s solutions.

Citation: Lilhore, U.K., Sharma, Y.K., Venkatachari, K. et al. Real time fire and smoke detection using vision transformers and spatiotemporal learning. Sci Rep 16, 8928 (2026). https://doi.org/10.1038/s41598-026-36687-9

Keywords: fire detection, smoke detection, computer vision, transformer models, real-time monitoring