Clear Sky Science

A self attention based deep learning framework for accurate and efficient dental disease detection in OPG radiographs

Why Smarter Dental Scans Matter

Most of us only think about dental X-rays when we sit in the dentist’s chair, but these images quietly carry life‑changing information. Tooth decay, gum disease and missing teeth affect billions of people, yet early warning signs are easy to miss, even for trained experts staring at crowded panoramic scans. This study explores how a new generation of artificial intelligence can read these wide “smile‑shaped” images quickly and accurately, helping dentists spot trouble sooner and reduce the chances of painful and costly treatments later.

The Growing Burden Inside Our Mouths

Oral diseases are now among the world’s most common health problems, affecting an estimated 3.5 billion people. Cavities, gum inflammation, hardened plaque (called calculus) and missing teeth are not just cosmetic issues; they can cause chronic pain, infection and difficulty eating, and are linked to broader health risks. Young people are increasingly affected, and tooth loss in older adults can sharply reduce quality of life. Traditional checkups—looking, probing and reading X‑rays by eye—remain the main line of defense, but they depend heavily on a clinician’s experience and can overlook small or early‑stage damage hidden in complex images.

Turning Panoramic X‑rays into Data

The researchers focus on a common type of dental image called an orthopantomogram, or OPG—a single wide X‑ray that shows all the teeth and both jaws at once. Because OPGs are already taken routinely in many clinics and use a modest radiation dose, they are an ideal target for automation. The team assembled more than 5,000 images representing four common conditions: cavities (caries), calculus, gingivitis and hypodontia (missing teeth). Before teaching a computer to recognize these problems, they carefully prepared the images—standardizing size and brightness, reducing noise and using a separate model to crop out everything except the dental arch, so the AI would focus on teeth and gums rather than distracting background anatomy.
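As a rough illustration of the preparation steps described above, the sketch below crops a grayscale image to a region of interest, rescales its brightness and resizes it to a fixed shape. It is a minimal stand-in rather than the authors' pipeline: the function names, the nearest-neighbour resizing and the hand-supplied `arch_box` are all assumptions made for the example. In the study, the crop region comes from a separate model, and the noise-reduction step is left out here for brevity.

```python
from typing import List, Tuple

Image = List[List[float]]  # grayscale image stored as rows of pixel values


def crop(img: Image, box: Tuple[int, int, int, int]) -> Image:
    """Keep only the region inside box = (top, left, bottom, right), ends exclusive."""
    top, left, bottom, right = box
    return [row[left:right] for row in img[top:bottom]]


def normalize_brightness(img: Image) -> Image:
    """Rescale pixels linearly so the darkest becomes 0.0 and the brightest 1.0."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a completely flat image
    return [[(p - lo) / span for p in row] for row in img]


def resize_nearest(img: Image, height: int, width: int) -> Image:
    """Resize to (height, width) using nearest-neighbour sampling."""
    src_h, src_w = len(img), len(img[0])
    return [
        [img[r * src_h // height][c * src_w // width] for c in range(width)]
        for r in range(height)
    ]


def preprocess(img: Image, arch_box: Tuple[int, int, int, int],
               size: Tuple[int, int] = (4, 4)) -> Image:
    """Crop to the (hypothetical) dental-arch box, normalize, then resize."""
    cropped = crop(img, arch_box)
    return resize_nearest(normalize_brightness(cropped), *size)
```

Cropping before normalizing means the brightness range is set by the teeth and gums alone, not by dark background regions outside the arch.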

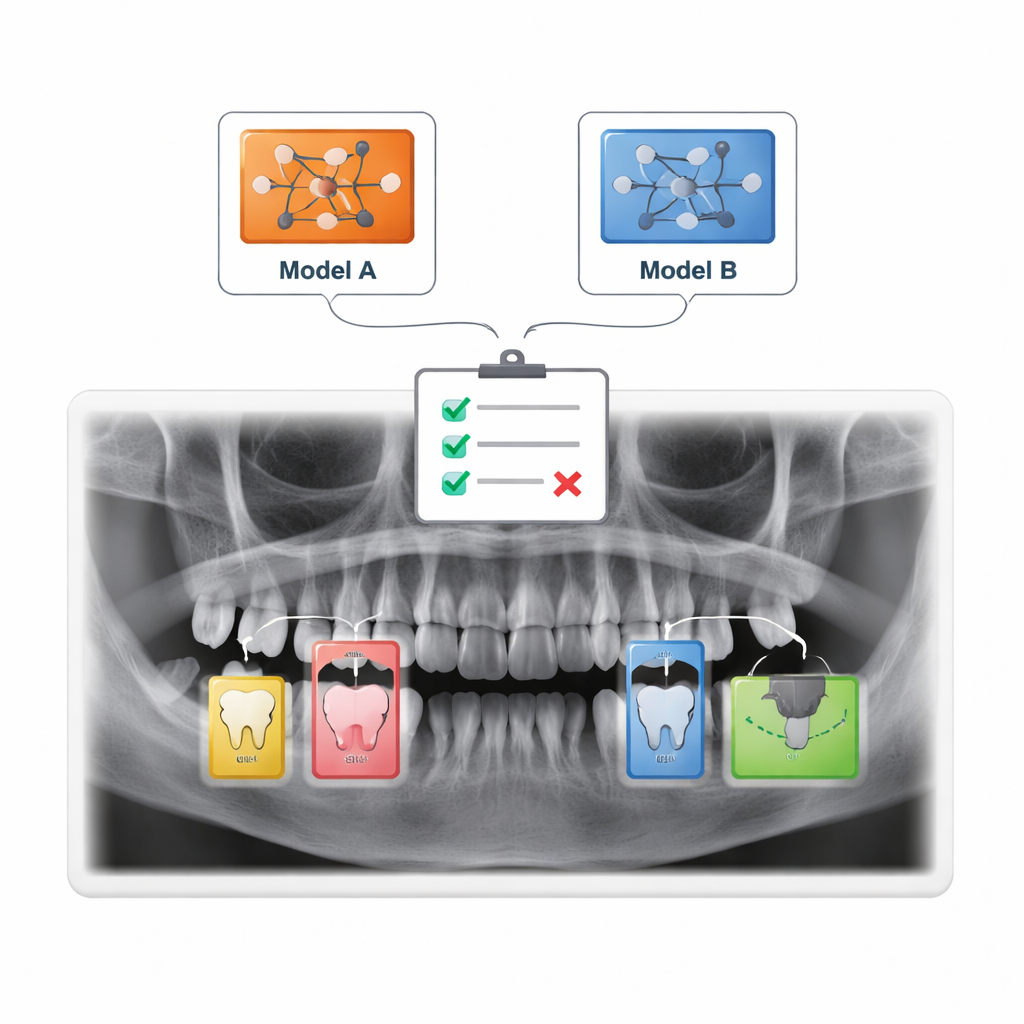

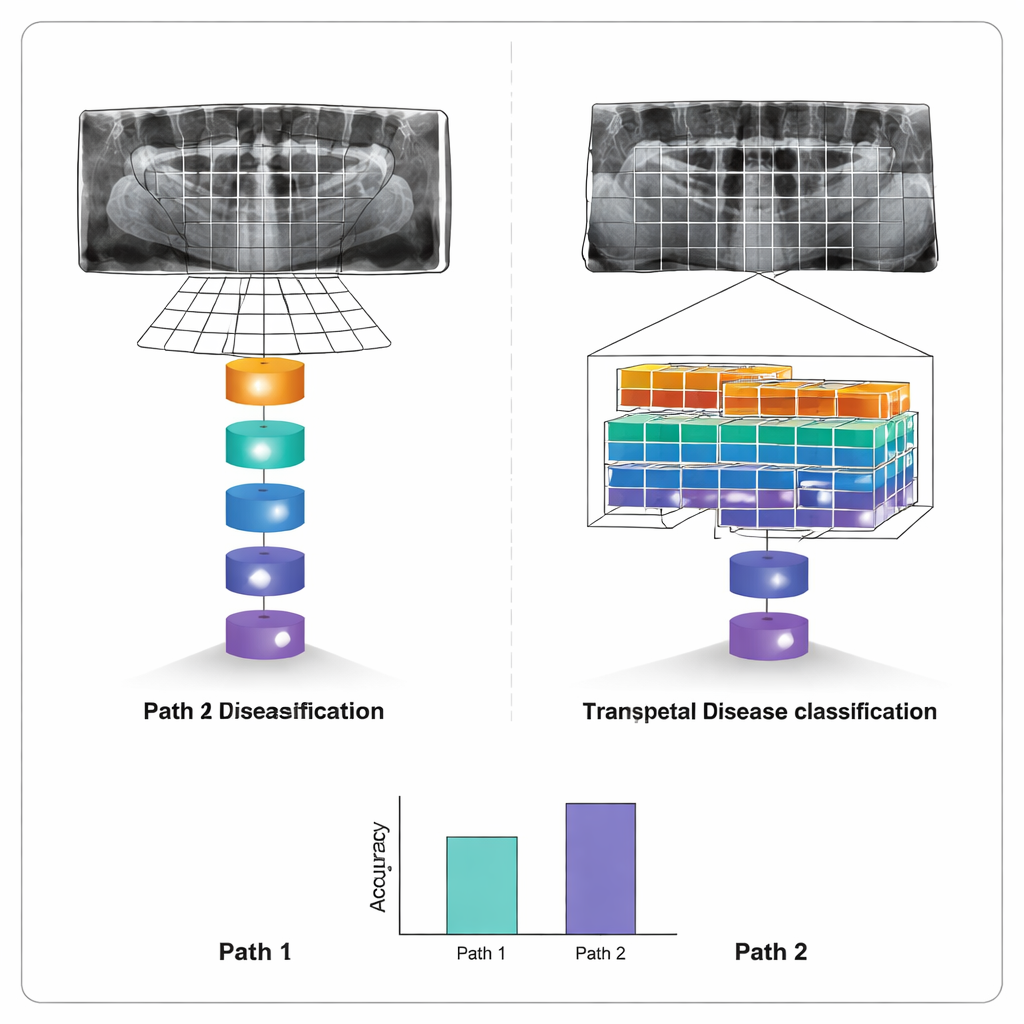

Two Rival AIs: Global View vs. Windowed View

To read the X‑rays, the study compares two “transformer” models, a class of AI that has recently revolutionized language and image analysis. The first, called a Vision Transformer, slices each X‑ray into many small patches and then analyzes all of them together, learning how distant parts of the mouth relate to each other. The second, known as a Swin Transformer, also breaks the image into pieces but concentrates on local windows that slide across the picture, building a hierarchy from fine details to broader patterns. Both models were trained on the same dataset and evaluated using standard measures of diagnostic performance, including how often they correctly flag diseased and healthy images.
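The difference between the global view and the windowed view can be made concrete by counting how many patch-to-patch comparisons each approach performs in a single attention layer. The sketch below does only that counting and does not implement either model. The image size (224 pixels), patch sizes (16 and 4) and window size (7) are common defaults for these architectures, assumed here for illustration; the study's actual settings may differ, and the shifting of windows between layers is not modeled.

```python
from typing import List, Tuple


def split_into_patches(height: int, width: int, patch: int) -> List[Tuple[int, int]]:
    """Return the top-left (row, col) corner of each non-overlapping patch."""
    return [(r, c) for r in range(0, height, patch) for c in range(0, width, patch)]


def global_attention_pairs(num_tokens: int) -> int:
    """Global view: every patch is compared with every patch, itself included."""
    return num_tokens ** 2


def windowed_attention_pairs(grid_h: int, grid_w: int, window: int) -> int:
    """Windowed view: patches are compared only within their own window.

    Assumes the patch grid divides evenly into window x window blocks.
    """
    num_windows = (grid_h // window) * (grid_w // window)
    tokens_per_window = window * window
    return num_windows * tokens_per_window ** 2


if __name__ == "__main__":
    # Assumed settings: a 224 x 224 image cut into 16 x 16 pixel patches.
    coarse = split_into_patches(224, 224, 16)
    print(len(coarse), global_attention_pairs(len(coarse)))

    # Assumed settings: the same image cut into 4 x 4 pixel patches (a 56 x 56
    # grid), compared globally versus inside 7 x 7 windows.
    print(global_attention_pairs(56 * 56), windowed_attention_pairs(56, 56, 7))
```

On the same 56 by 56 grid of patches, restricting comparisons to 7 by 7 windows cuts the count by a factor of 64, which is the kind of saving that lets a windowed model work with finer patches at a manageable cost.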

How Well the Machines Diagnose Teeth

After training, both systems proved remarkably capable. The Vision Transformer correctly classified about 96% of test images, with similarly high precision and recall—meaning it rarely raised false alarms and seldom missed disease. The Swin Transformer performed only slightly less well, at about 95% accuracy, but used computation more efficiently thanks to its windowed design. The biggest edge for the Vision Transformer appeared in spotting small cavities, where its ability to consider the whole mouth at once helped it pick up tiny, low‑contrast defects. Cropping images to focus on the dental arch further improved results, confirming that removing irrelevant regions makes the models more reliable.
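For readers curious how accuracy, precision and recall are computed, here is a small sketch using the standard definitions. The labels in the example are invented purely to show the arithmetic and are not results from the study; the function names are likewise chosen for illustration.

```python
from typing import Sequence, Tuple


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Fraction of images whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall(y_true: Sequence[str], y_pred: Sequence[str],
                     label: str) -> Tuple[float, float]:
    """Precision and recall for one disease category.

    Precision: of the images flagged as `label`, how many truly were.
    Recall: of the images that truly were `label`, how many were flagged.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == label and p == label for t, p in pairs)  # correctly flagged
    fp = sum(t != label and p == label for t, p in pairs)  # false alarms
    fn = sum(t == label and p != label for t, p in pairs)  # missed cases
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


if __name__ == "__main__":
    # Invented toy labels, for illustration only.
    truth = ["caries", "caries", "calculus", "gingivitis", "hypodontia", "caries"]
    guess = ["caries", "calculus", "calculus", "gingivitis", "hypodontia", "caries"]
    print(accuracy(truth, guess))
    print(precision_recall(truth, guess, "caries"))
    print(precision_recall(truth, guess, "calculus"))
```

In the toy example, one cavity image is mistaken for calculus. That single error lowers recall for caries (a missed case) and lowers precision for calculus (a false alarm), which is why the two measures are reported alongside overall accuracy.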

What This Means for Future Dental Visits

For patients, the message is not that computers will replace dentists, but that they can act as an extra pair of sharp eyes. This work shows that modern AI can scan a panoramic dental X‑ray and accurately sort it into common disease categories in seconds, highlighting areas that deserve a closer look. While the study is based on a single combined dataset and still needs larger, real‑world trials, it suggests that transformer‑based systems could one day help standardize diagnoses, reduce missed problems and make advanced dental care more accessible—especially in busy or resource‑limited clinics.

Citation: Bhoopalan, R., Mirdula, S., Kannusamy, P. et al. A self attention based deep learning framework for accurate and efficient dental disease detection in OPG radiographs. Sci Rep 16, 5914 (2026). https://doi.org/10.1038/s41598-026-36672-2

Keywords: dental AI, panoramic X-ray, tooth decay detection, deep learning, oral health