Clear Sky Science · en

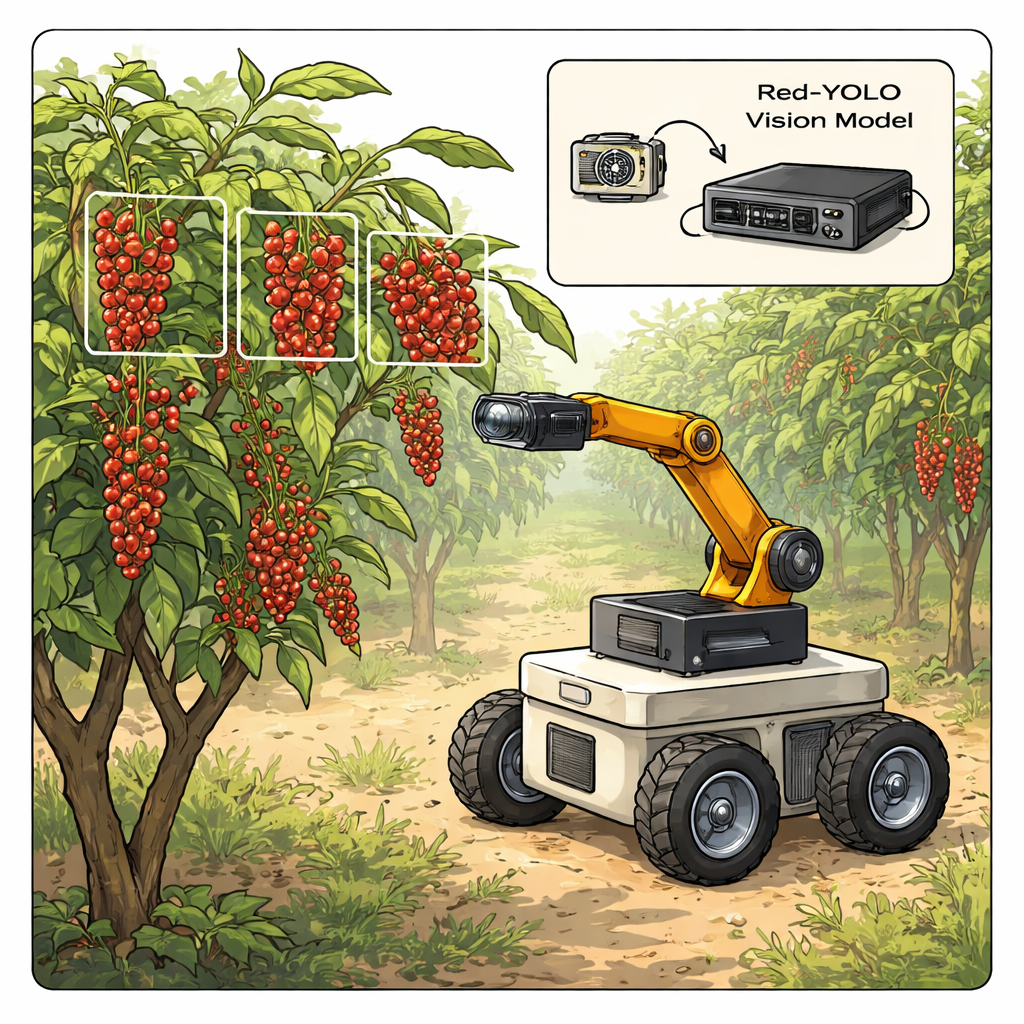

A lightweight YOLO-based model for accurate detection of red pepper clusters in robotic harvesting

Smarter Robots for Spicy Harvests

Sichuan peppercorns, the tiny red husks that give Sichuan cuisine its signature tingling heat, are surprisingly hard to pick. The fruits grow in dense, prickly clusters that can easily be damaged, and harvesting them by hand is slow, seasonal work. This study introduces a new computer vision system, called Red-YOLO, designed to help small, mobile robots quickly and accurately spot these delicate pepper clusters in real orchards, even when fruits overlap or hide behind leaves.

Why Pepper Picking Is So Tricky

Unlike large, smooth fruits such as apples, red peppercorns grow as many tiny berries packed together on thorny branches. The clusters can look very different from one tree to another: some are tight and compact, others are loose and diffuse, and all are surrounded by confusing backgrounds of branches, leaves, and changing light. For a robot, seeing where one cluster ends and another begins—and how firmly each is packed—is essential. The gripping force and even the size of the robot’s picking tool must change depending on how tightly the fruits are bunched, or the peppers’ fragile oil sacs may burst, reducing quality and value.

Building a Real-World Image Library

Because no public image collections existed for this crop, the researchers first had to create their own dataset. Over two growing seasons in Sichuan’s Hanyuan County, they photographed pepper trees in real orchards using a consumer smartphone, capturing 960 high-resolution, square images under different sun angles and times of day. Each image was carefully labeled by hand, distinguishing between compact and diffuse clusters. To teach the computer to handle variety, they digitally altered many of the images: adjusting brightness and contrast, flipping them horizontally, applying grid distortion, and rotating views. This expanded the training set to more than 4,300 images, while a small set of untouched photos was kept aside to test honestly how well the final system would perform.
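For readers who want to see what such alterations look like in practice, here is a minimal sketch of two of them, a horizontal flip and a brightness/contrast change. It is not the researchers' pipeline. It assumes tiny grayscale images stored as lists of rows and labels in the normalized YOLO layout (class, x-center, y-center, width, height), which the summary does not confirm; the function names and the sample label are hypothetical.

```python
# Sketch of two augmentations in pure Python (illustrative only).
# Assumptions: pixels are 0-255 grayscale values; labels are normalized
# YOLO-style tuples (class_id, x_center, y_center, width, height).

def flip_horizontal(image, labels):
    """Mirror the image left-right and move each box center to match."""
    flipped_image = [list(reversed(row)) for row in image]
    # Only the x-center changes; box width and height stay the same.
    flipped_labels = [(c, 1.0 - xc, yc, w, h) for (c, xc, yc, w, h) in labels]
    return flipped_image, flipped_labels


def adjust_brightness_contrast(image, contrast=1.0, brightness=0.0):
    """Scale pixel values (contrast), shift them (brightness), clamp to 0-255."""
    def clamp(value):
        return max(0, min(255, int(round(value))))
    return [[clamp(contrast * p + brightness) for p in row] for row in image]


image = [[10, 20, 30],
         [40, 50, 60]]
labels = [(0, 0.25, 0.5, 0.2, 0.4)]  # hypothetical: class 0 = compact cluster

flipped_image, flipped_labels = flip_horizontal(image, labels)
brighter = adjust_brightness_contrast(image, contrast=1.2, brightness=10)
```

The point of the flip example is that the hand-drawn labels must be transformed together with the pixels; otherwise the boxes would no longer sit on the clusters they describe.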

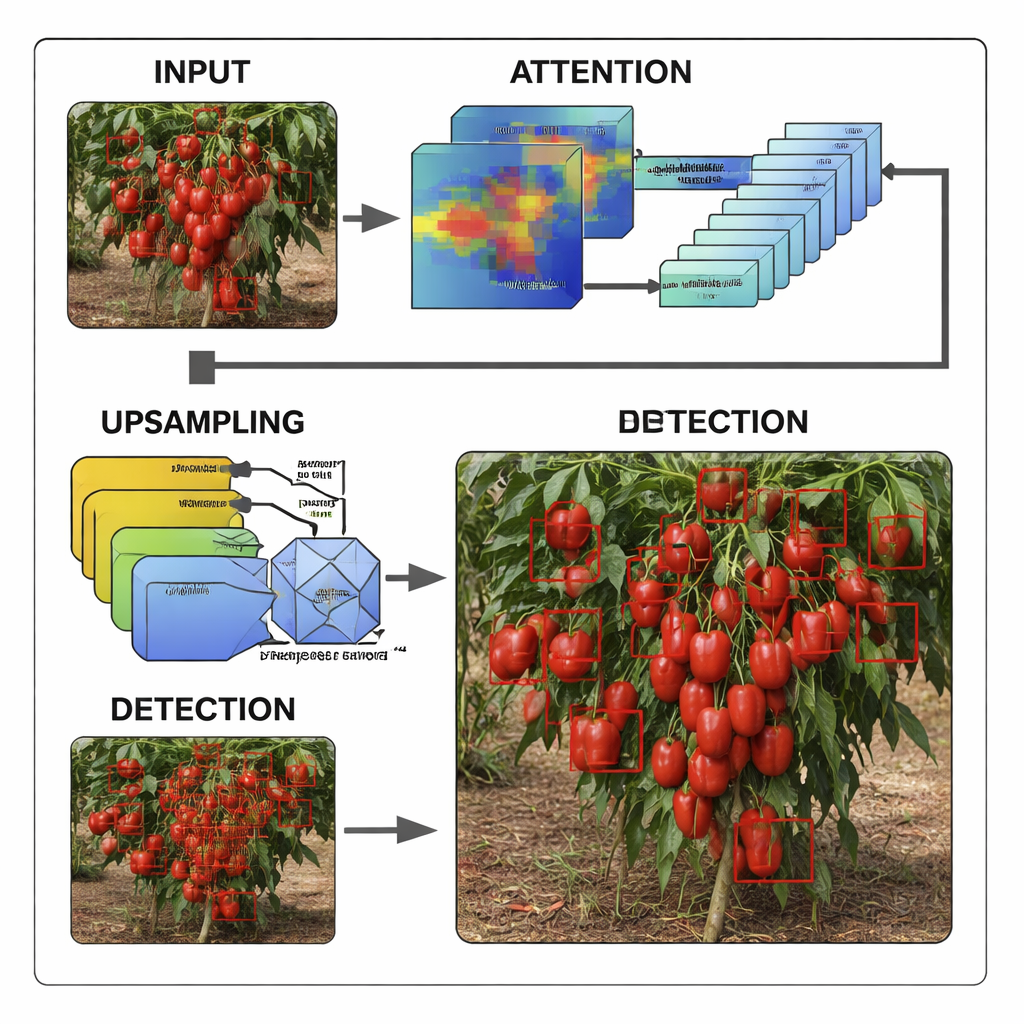

A Leaner, Sharper Computer Vision Model

At the heart of the system is YOLOv8, a widely used “you only look once” object detection model that finds objects in a single, fast pass instead of in multiple slower stages. The team tailored a very small version of this model and then reshaped it specifically for red pepper clusters. They added an attention module that teaches the network to focus on channels and regions most likely to contain fruit while ignoring distractions like sky, branches, and distant trees. They redesigned parts of the network so that it can reuse information more efficiently and cut down on unnecessary calculations. They also replaced a simple resizing step with a smarter upsampling block that rebuilds fine details and boundaries around overlapping peppers, helping the model distinguish where crowded clusters begin and end.
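The idea of "focusing on channels" can be illustrated with a toy example. The sketch below is not the attention module used in Red-YOLO, which this summary does not name; it is a stripped-down channel gate that averages each feature channel and scales the channel by a sigmoid of that average. A real module would pass the averages through small learned layers first, and would typically add a similar step over image regions. The function name `channel_gate` is hypothetical.

```python
import math


def channel_gate(feature_maps):
    """Reweight each channel by a sigmoid of its global average activation.

    feature_maps: list of channels, each a list of rows of floats.
    Toy version: no learned weights, so it only shows the mechanics.
    """
    gated = []
    for channel in feature_maps:
        values = [v for row in channel for v in row]
        pooled = sum(values) / len(values)        # one summary number per channel
        weight = 1.0 / (1.0 + math.exp(-pooled))  # gate between 0 and 1
        gated.append([[v * weight for v in row] for row in channel])
    return gated


features = [
    [[2.0, 2.0], [2.0, 2.0]],      # strongly active channel
    [[-2.0, -2.0], [-2.0, -2.0]],  # weakly active channel
]
gated = channel_gate(features)
```

In this toy case the strongly active channel keeps most of its magnitude while the weakly active one is damped, which is the general effect an attention module aims for when it suppresses background such as sky or distant trees.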

Fast, Accurate Vision for Small Robots

To see whether these changes were worthwhile, the researchers compared Red-YOLO with both older, heavier detection systems and a range of modern lightweight YOLO variants. Traditional multi-stage models, though powerful, were simply too slow and resource-hungry for compact orchard robots. Several newer YOLO versions fared better but struggled with small, partially hidden clusters or busy backgrounds, often missing fruits or mistaking leaves for peppers. Red-YOLO struck a better balance: it detected pepper clusters with higher overall accuracy and recall than all comparison models, while keeping the model size to under three million parameters and the computation load low enough for embedded processors. Tests in varied orchard scenes showed that Red-YOLO consistently found clusters even when fruits were tiny, shaded, or heavily overlapped.
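To make terms like "accuracy" and "recall" concrete, the following sketch shows one common way such scores are computed for object detectors: predicted boxes are matched to hand-labeled boxes by their overlap (intersection over union), and the matches are counted. The 0.5 overlap threshold, the greedy matching rule, and the sample boxes are assumptions for illustration, not the evaluation protocol or data from the study.

```python
def iou(box_a, box_b):
    """Intersection over union for boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Greedy one-to-one matching; predictions assumed sorted by confidence."""
    unmatched = list(ground_truth)
    true_positives = 0
    for pred in predictions:
        best = max(unmatched, key=lambda gt: iou(pred, gt), default=None)
        if best is not None and iou(pred, best) >= iou_threshold:
            true_positives += 1
            unmatched.remove(best)  # each labeled cluster can be matched once
    precision = true_positives / len(predictions) if predictions else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Hypothetical boxes: two good detections, one false alarm, one missed cluster.
ground_truth = [(0, 0, 10, 10), (20, 20, 30, 30), (40, 40, 50, 50)]
predictions = [(1, 1, 10, 10), (21, 20, 30, 30), (60, 60, 70, 70)]
precision, recall = precision_recall(predictions, ground_truth)
```

Precision falls when the detector mistakes leaves for peppers; recall falls when it misses small or hidden clusters. A model that improves both is making fewer errors of each kind.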

From Lab Model to Orchard Helper

For non-specialists, the key outcome is practical: this work shows that a compact, carefully tuned vision system can give small harvesting robots a reliable “eye” in the field. With Red-YOLO, a robot can automatically determine whether it is handling a compact or diffuse cluster and adjust its gripper size and force before picking, reducing damage and saving labor. While the current study focuses on one pepper variety in a single region, the same approach of building focused datasets and refining lean detection models could be extended to other specialty crops. As these vision systems become more robust and widely deployed, they may help make harvesting faster, safer, and more consistent, ensuring a steady supply of the peppers that power some of the world’s favorite flavors.
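As a rough illustration of how a detected cluster type could drive the gripper, here is a small sketch. Everything in it is hypothetical: the opening widths, force limits, confidence cutoff, and even which cluster type gets the wider or gentler setting are placeholders, not values from the study, and the detection is represented as a plain dictionary rather than any particular library's output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GripperSetting:
    opening_mm: float   # placeholder value, not from the study
    max_force_n: float  # placeholder value, not from the study


# Hypothetical lookup table: the numbers are illustrative only.
GRIPPER_BY_CLUSTER = {
    "compact": GripperSetting(opening_mm=40.0, max_force_n=2.0),
    "diffuse": GripperSetting(opening_mm=70.0, max_force_n=1.0),
}


def choose_gripper(detection, min_confidence=0.5):
    """Return a gripper setting for a detection, or None to skip the pick."""
    if detection["confidence"] < min_confidence:
        return None  # too uncertain: safer not to grab at all
    return GRIPPER_BY_CLUSTER.get(detection["label"])


setting = choose_gripper({"label": "diffuse", "confidence": 0.9})
skipped = choose_gripper({"label": "compact", "confidence": 0.2})
```

Keeping the settings in a table separate from the detector means the gripper values can be tuned in the orchard without retraining the vision model.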

Citation: Zhao, H., He, J., Li, Y. et al. A lightweight YOLO-based model for accurate detection of red pepper clusters in robotic harvesting. Sci Rep 16, 5879 (2026). https://doi.org/10.1038/s41598-026-36671-3

Keywords: robotic harvesting, pepper detection, computer vision, lightweight YOLO, smart agriculture