Clear Sky Science

A hybrid local-global feature attention network for thin section rock image classification

Why smarter rock images matter

Rocks buried deep underground hold clues to where we can safely build tunnels, find groundwater, or tap new oil and gas reserves. Geologists study razor-thin slices of these rocks under a microscope, but labeling thousands of images by hand is slow and subjective. This study introduces a new artificial intelligence system, called HFANet, that learns to recognize rock types from these thin-section images, exceeding 99% accuracy on fine-grained classification tasks. That could speed up geological surveys and make them more consistent.

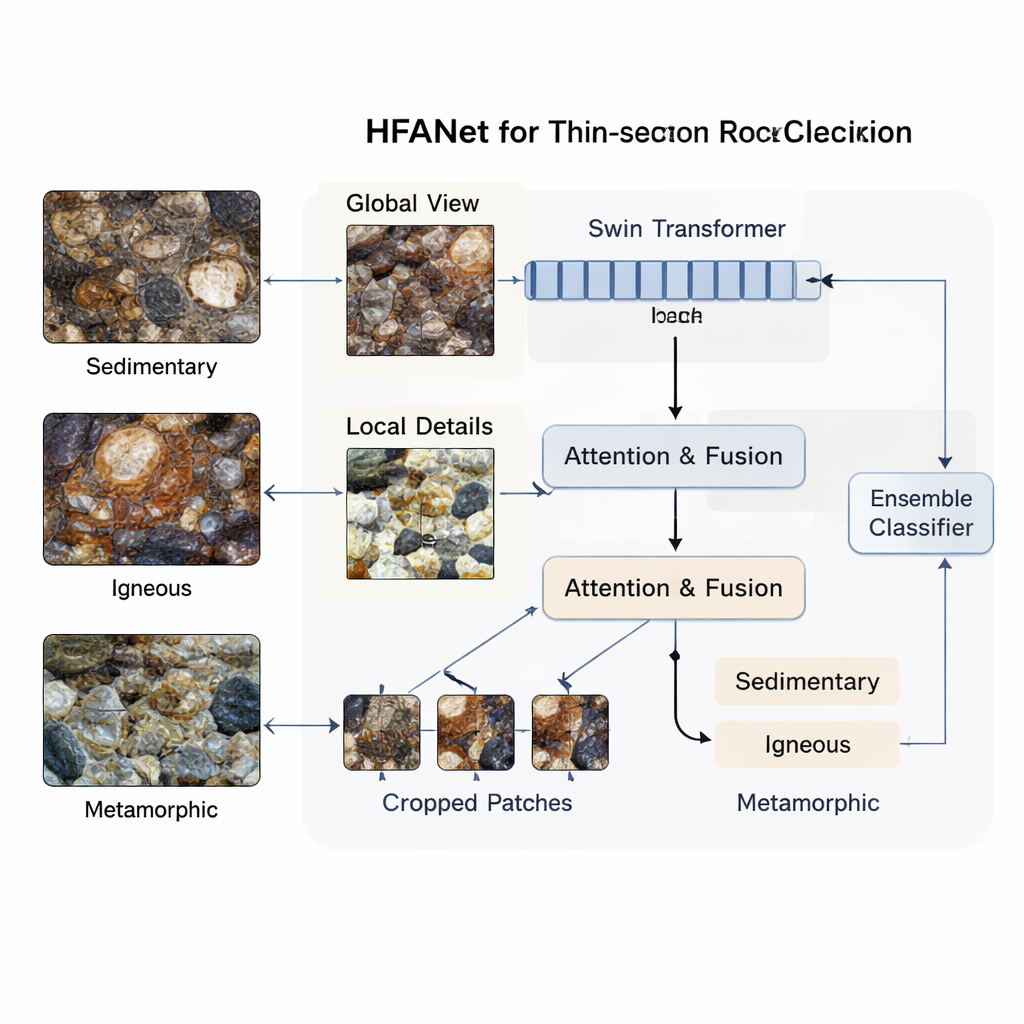

Seeing the big picture and the tiny details

Most computer vision tools are good at either seeing broad patterns or focusing on fine details, but not both at once. Thin rock slices are especially tricky: sandstones, lavas, and metamorphic rocks can look confusingly similar when you zoom in or out. HFANet tackles this by splitting the problem into two complementary views. One branch of the network looks at the entire image to capture the overall structure and mineral patterns across the field of view. The other branch divides the image into smaller patches, examining textures, grain edges, and tiny fractures in each piece.
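To make the two views concrete, here is a minimal sketch of how an image can be summarized once as a whole and once patch by patch. It is an illustration only, not HFANet's code: the function names, the 4×4 toy image, the 2×2 patch size, and the use of mean intensity as a stand-in for learned features are all assumptions.

```python
def split_into_patches(image, patch_size):
    """Split a 2D grid (list of rows) into non-overlapping square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be multiples of patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            rows = image[top:top + patch_size]
            patches.append([row[left:left + patch_size] for row in rows])
    return patches


def mean_intensity(grid):
    """Average value of a 2D grid; a toy stand-in for a learned feature."""
    values = [value for row in grid for value in row]
    return sum(values) / len(values)


# Hypothetical 4x4 grayscale image with values 0..15.
image = [[r * 4 + c for c in range(4)] for r in range(4)]

# Global view: one summary for the whole field of view.
global_view = mean_intensity(image)

# Local view: one summary per patch, in row-major patch order.
patches = split_into_patches(image, 2)
local_views = [mean_intensity(patch) for patch in patches]
```

In a real network each summary would be a learned feature vector rather than a single average, but the split into one whole-image summary and many per-patch summaries is the same idea.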

Teaching the network where to pay attention

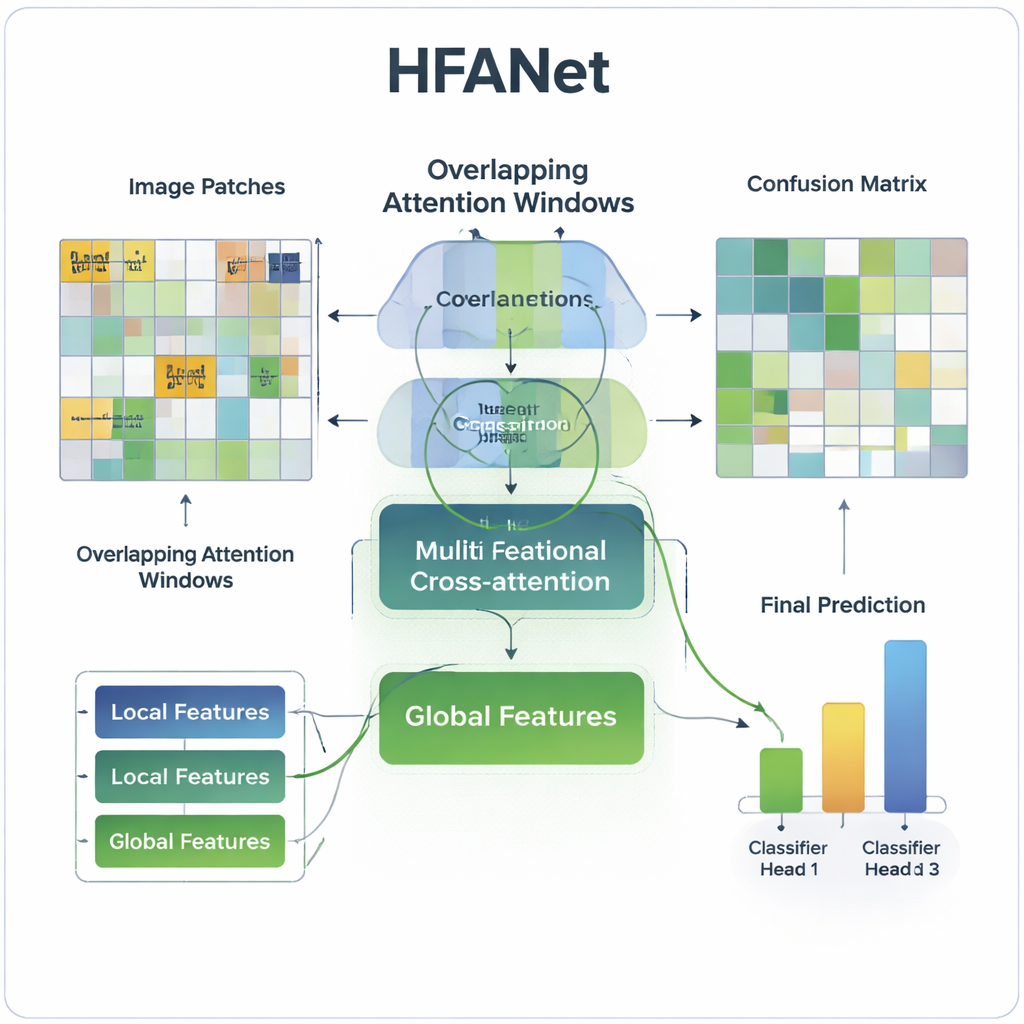

Simply running two branches in parallel is not enough; they need to talk to each other. HFANet uses attention mechanisms, mathematical tools that tell the model which parts of an image matter most for a decision. First, the patch-focused branch learns which local regions carry the most useful information by letting patches "pay attention" to one another. Then, a cross-talk stage lets global and local features guide each other in both directions. The global view nudges the model toward geologically meaningful areas, while the detailed patches feed subtle textures and boundaries back into the global summary. This back-and-forth attention helps the system lock onto key signals, such as the difference between two very similar sandstones, that would otherwise cause confusion.
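The two attention stages can be sketched with standard scaled dot-product attention, written here in plain Python. This is a simplified illustration under stated assumptions, not the authors' implementation: it omits the learned projection matrices a real network would use, and the feature vectors, the number of global tokens, and all names are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(queries, keys, values):
    """Scaled dot-product attention: each query receives a weighted
    average of the values, weighted by query-key similarity."""
    scale = math.sqrt(len(keys[0]))
    dim = len(values[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        outputs.append(
            [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
        )
    return outputs


# Hypothetical features: three patches and two global tokens, 2-D each.
patch_features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
global_features = [[0.5, 0.5], [1.0, 0.0]]

# Stage 1: patches attend to one another (self-attention).
patches_refined = attend(patch_features, patch_features, patch_features)

# Stage 2a: the global tokens query the refined patches (local -> global).
global_updated = attend(global_features, patches_refined, patches_refined)

# Stage 2b: the refined patches query the global tokens (global -> local).
patches_updated = attend(patches_refined, global_features, global_features)
```

Each output is a weighted blend of the values it attended to, so information flows from patches to the global summary in stage 2a and back the other way in stage 2b.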

Blending human-crafted clues with deep learning

In addition to what the network learns on its own, the authors bring in traditional image descriptors long used by geologists and image analysts. These include measurements of color balance, texture roughness, and brightness variations that capture, for example, how grains stand out from the background or how ordered a fabric appears. HFANet treats these classic features as another data source, feeding them into the global branch and letting the network learn how to weight them. This fusion adds only a tiny computational cost but measurably improves accuracy, especially in challenging igneous rocks where subtle shifts in texture and mineral mix make classification harder.
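As a rough illustration of this kind of fusion, the sketch below computes three simple descriptors (per-channel color means, brightness spread, and a neighbor-difference roughness score) and appends them to a learned feature vector. The specific descriptors, the function name, and the example values are assumptions chosen for clarity; the article does not list the exact descriptors HFANet uses.

```python
import math


def handcrafted_descriptors(pixels):
    """pixels: list of rows, each row a list of (r, g, b) tuples in [0, 1].

    Returns [mean_r, mean_g, mean_b, brightness_std, roughness].
    """
    flat = [px for row in pixels for px in row]
    n = len(flat)

    # Color balance: average of each channel over the image.
    channel_means = [sum(px[c] for px in flat) / n for c in range(3)]

    # Brightness variation: standard deviation of the gray level.
    gray = [[sum(px) / 3 for px in row] for row in pixels]
    gray_flat = [g for row in gray for g in row]
    mean_gray = sum(gray_flat) / n
    brightness_std = math.sqrt(
        sum((g - mean_gray) ** 2 for g in gray_flat) / n
    )

    # Texture roughness: mean absolute difference between horizontal neighbors.
    diffs = [abs(row[i + 1] - row[i]) for row in gray for i in range(len(row) - 1)]
    roughness = sum(diffs) / len(diffs) if diffs else 0.0

    return channel_means + [brightness_std, roughness]


# Hypothetical 2x2 checkerboard of black and white pixels.
black, white = (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)
image = [[black, white], [white, black]]
descriptors = handcrafted_descriptors(image)

# Fusion by concatenation with a made-up learned global feature vector;
# a network would then learn how much weight each entry deserves.
learned_global = [0.2, -0.1, 0.7]
fused = learned_global + descriptors
```

Because the descriptors are only a handful of numbers per image, appending them adds very little computation compared with the network itself.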

Benchmarking performance and testing generality

The researchers trained and evaluated HFANet on a large teaching dataset from Nanjing University that includes over 2,600 microscope images covering 108 rock types across the sedimentary, igneous, and metamorphic groups. On fine-grained tasks, such as telling one sedimentary subtype from another, HFANet exceeded 99% accuracy and achieved perfect scores on ranking-based metrics that measure how well the model separates classes. Across all three main rock groups combined, it consistently beat widely used CNN and Transformer models. The team then asked a harder question: how does the model behave on a different collection of mineral thin sections it never saw during training? Here, a simpler network produced slightly higher raw accuracy, but HFANet still showed the best ability to rank the correct class highly, suggesting that its internal representation of rock patterns remains strong even when imaging conditions change.
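The gap between raw accuracy and ranking quality can be shown with a small worked example. The sketch below computes top-1 accuracy alongside a one-vs-rest AUC, a common ranking-based measure of class separation. The article does not name the exact metrics used in the study, so the choice of AUC, the function names, and the scores are assumptions for illustration.

```python
def top1_accuracy(scores, labels):
    """Fraction of samples whose highest-scoring class is the true class."""
    correct = 0
    for row, label in zip(scores, labels):
        predicted = max(range(len(row)), key=row.__getitem__)
        correct += predicted == label
    return correct / len(labels)


def one_vs_rest_auc(scores, labels, target):
    """Probability that a sample of class `target` gets a higher score for
    that class than a sample of any other class (ties count as half)."""
    positives = [row[target] for row, y in zip(scores, labels) if y == target]
    negatives = [row[target] for row, y in zip(scores, labels) if y != target]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical class scores for four images and three rock classes.
scores = [
    [0.6, 0.3, 0.1],  # true class 0, predicted 0
    [0.4, 0.5, 0.1],  # true class 0, predicted 1 (a mistake)
    [0.2, 0.7, 0.1],  # true class 1, predicted 1
    [0.1, 0.2, 0.7],  # true class 2, predicted 2
]
labels = [0, 0, 1, 2]

accuracy = top1_accuracy(scores, labels)
auc_class0 = one_vs_rest_auc(scores, labels, target=0)
```

In this toy case one image is misclassified, so accuracy is 0.75, yet every class-0 image still scores higher on class 0 than any other image does, giving an AUC of 1.0. A model can therefore rank classes well even when its top guess is sometimes wrong.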

Looking inside the model’s reasoning

To check whether HFANet focuses on geologically meaningful regions, the authors compared the model’s attention maps with expert annotations. In example images of volcanic sedimentary rocks, HFANet highlighted volcanic glass fragments, crystal debris, and fractures, the structures human experts use to name and interpret those rocks. Its focus lined up well with hand-drawn masks of important features and was more precise than standard visualization tools applied to a leading baseline model. This alignment suggests the system is not just memorizing colors or noise, but is keying in on boundaries, fabrics, and grain relationships that matter scientifically.
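One simple way to quantify how well an attention map matches an expert mask is to threshold the map and measure the overlap as intersection over union (IoU). The sketch below shows that calculation; the article does not say which overlap measure the authors used, so IoU, the 0.5 threshold, and the toy 3×3 maps are assumptions.

```python
def binarize(heatmap, threshold):
    """Mark each cell True where attention meets or exceeds the threshold."""
    return [[value >= threshold for value in row] for row in heatmap]


def intersection_over_union(mask_a, mask_b):
    """Shared highlighted area divided by the total highlighted area."""
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            intersection += 1 if (a and b) else 0
            union += 1 if (a or b) else 0
    # Two empty masks agree perfectly by convention.
    return intersection / union if union else 1.0


# Hypothetical 3x3 attention map and expert-drawn mask.
attention_map = [
    [0.9, 0.8, 0.1],
    [0.7, 0.2, 0.1],
    [0.1, 0.1, 0.0],
]
expert_mask = [
    [True, True, False],
    [False, False, False],
    [False, False, False],
]

model_mask = binarize(attention_map, 0.5)
iou = intersection_over_union(model_mask, expert_mask)
```

A score of 1.0 would mean the model highlights exactly what the expert marked, while lower values indicate attention spilling onto unmarked areas or missing marked ones.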

What this means for future geological work

For everyday geoscience, HFANet points toward automated tools that can quickly and reliably label thin-section images, flag ambiguous cases, and help standardize teaching collections. While its dual-branch, attention-heavy design is more computationally demanding than simpler networks, it delivers a rare combination of accuracy, interpretability, and respect for geological structure. With further work on speeding up the model and adapting it to new microscopes and rock suites, systems like HFANet could become trusted assistants to human experts, handling routine rock classification while freeing geologists to focus on complex interpretation and decision-making.

Citation: Wei, P., Fan, C., Yang, X. et al. A hybrid local-global feature attention network for thin section rock image classification. Sci Rep 16, 6446 (2026). https://doi.org/10.1038/s41598-026-36669-x

Keywords: rock thin-section images, deep learning classification, attention networks, geological image analysis, petrography automation