
A high-performance training-free pipeline for robust random telegraph signal characterization via adaptive wavelet-based denoising and Bayesian digitization methods

Why tiny signal flickers matter

Inside modern electronics and even living cells, important events can look like tiny clicks in time: a signal suddenly jumps up, stays there for a while, then drops back down. These jumps, known as random telegraph signals, can reveal when a single defect in a chip traps an electron, or when a molecular machine in biology switches state. But in real measurements these jumps are buried under hiss and hum from many other noise sources. This paper introduces a fast, training-free analysis pipeline that can automatically clean up such data, recover the hidden jump patterns, and do so reliably enough for future technologies like quantum devices and next‑generation sensors.

Seeing jumps in a sea of noise

A random telegraph signal is like a light that randomly flips between two or more brightness levels. From these flip patterns, researchers can infer how long a defect or molecular site tends to stay “on” or “off,” and how strong its effect is. That information, in turn, speaks directly to the reliability of nanoscale transistors, image sensors, and quantum bits. The challenge is that real signals are rarely clean: they are mixed with “white” noise, which spreads evenly over all frequencies, and “pink” or 1/f noise, which drifts slowly and can completely hide the underlying steps. As devices shrink and we monitor them at ever finer time resolution, these noise sources grow in importance, making it harder to distinguish genuine physical events from background clutter.
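To make the picture concrete, here is a minimal sketch of a two-level random telegraph signal buried in white noise. It is an illustration only, not the authors' simulator: the function name `simulate_rts`, the dwell times, the jump size, and the noise level are all assumed values, and pink noise is left out for brevity.

```python
import random

def simulate_rts(n_samples, dt, tau_low, tau_high, amplitude, noise_sigma, seed=0):
    """Two-level random telegraph signal plus Gaussian white noise.

    tau_low and tau_high are the mean dwell times in the low and high state.
    Returns (noisy_signal, true_states) as two lists of equal length.
    """
    rng = random.Random(seed)
    state = 0  # start in the low state
    states, signal = [], []
    for _ in range(n_samples):
        # Chance of leaving the current state during one time step
        # (a good approximation when dt is much shorter than both dwell times).
        p_switch = dt / (tau_high if state else tau_low)
        if rng.random() < p_switch:
            state = 1 - state
        states.append(state)
        signal.append(state * amplitude + rng.gauss(0.0, noise_sigma))
    return signal, states

# Hypothetical parameters: 1 microsecond steps, dwell times of 200 and 100 microseconds.
signal, states = simulate_rts(
    n_samples=20_000, dt=1e-6, tau_low=2e-4, tau_high=1e-4,
    amplitude=1.0, noise_sigma=0.5,
)
high_fraction = sum(states) / len(states)
print(f"fraction of time in the high state: {high_fraction:.2f}")
```

With these assumed dwell times the signal should spend roughly a third of its time in the high state, since the long-run fraction is tau_high / (tau_low + tau_high). The noise here is half as large as the jump itself, which already makes individual steps hard to spot by eye.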

A smarter cleanup and counting pipeline

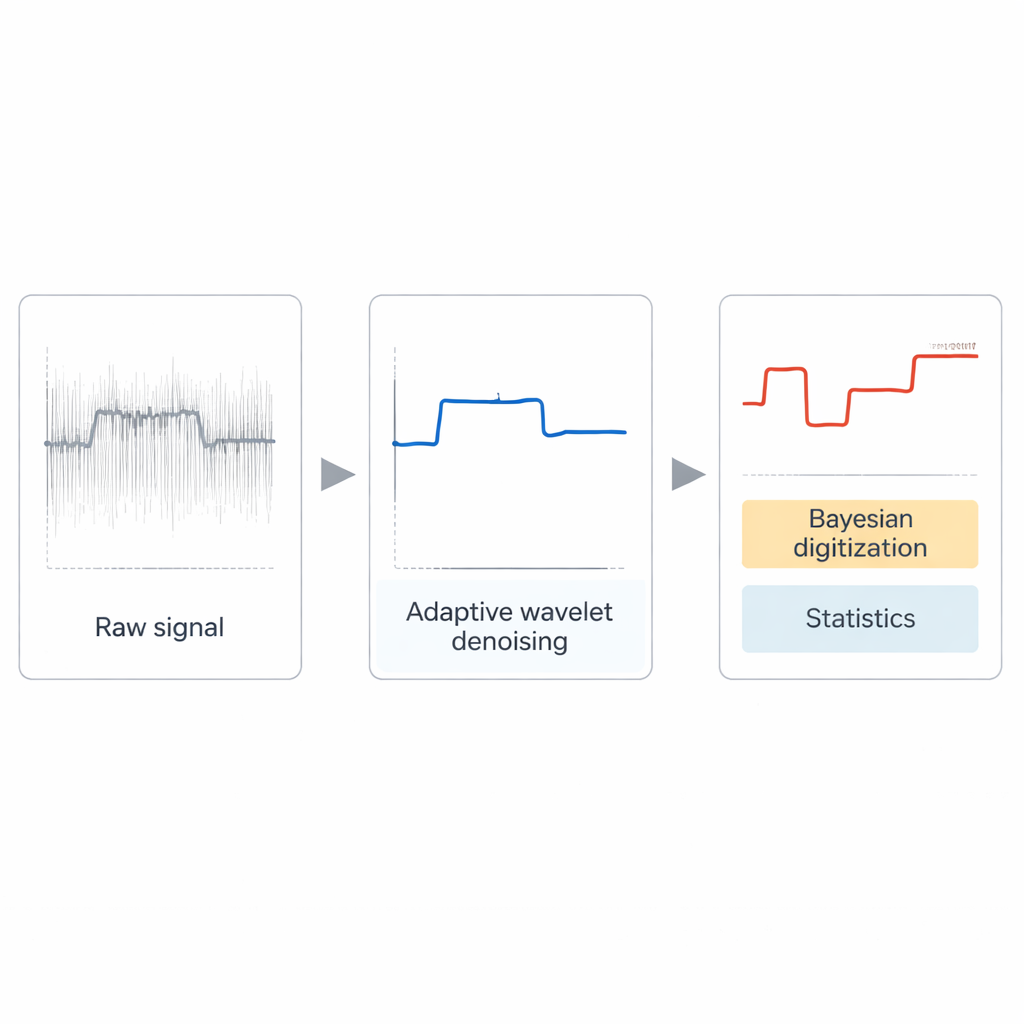

The authors propose a three-step, modular pipeline that works without any machine-learning training. First, an advanced wavelet-based tool, the dual-tree complex wavelet transform, adaptively denoises the raw signal. Its settings are chosen automatically from simple properties of the data, so users do not need to hand-tune parameters. This stage is especially good at removing fast, white noise while preserving the sharp edges of real jumps. Next, the cleaned signal is analyzed statistically to find the most common amplitude levels, like identifying the most frequently visited rungs on a ladder. Finally, a lightweight Bayesian step translates the smoothed signal into a digital record of which level is active at each moment and calculates how long each state typically lasts.
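The three stages can be mimicked in a few dozen lines, as a rough sketch of the idea rather than the authors' implementation. Every choice below is an assumption made for illustration: a running median stands in for the dual-tree complex wavelet transform, a simple two-means clustering stands in for the level-finding statistics, and a per-sample Gaussian posterior stands in for the Bayesian digitization. All function names and numbers are hypothetical.

```python
import math
import random
import statistics

def median_filter(x, window=21):
    """Step 1 stand-in: a running median, NOT the paper's wavelet transform."""
    half = window // 2
    return [statistics.median(x[max(0, i - half): i + half + 1])
            for i in range(len(x))]

def find_two_levels(x, iterations=20):
    """Step 2 stand-in: locate two amplitude levels by 1-D two-means clustering."""
    lo, hi = min(x), max(x)
    for _ in range(iterations):
        mid = (lo + hi) / 2
        lo = statistics.fmean([v for v in x if v <= mid])
        hi = statistics.fmean([v for v in x if v > mid])
    return lo, hi

def digitize(x, levels, sigma, priors):
    """Step 3 stand-in: pick the level with the largest posterior for each sample.

    The posterior is proportional to prior * Gaussian likelihood;
    working with logarithms avoids numerical underflow.
    """
    states = []
    for v in x:
        scores = [math.log(p) - 0.5 * ((v - mu) / sigma) ** 2
                  for mu, p in zip(levels, priors)]
        states.append(scores.index(max(scores)))
    return states

def mean_dwell_times(states, dt):
    """Average duration of uninterrupted runs in each state."""
    runs, start = {}, 0
    for i in range(1, len(states) + 1):
        if i == len(states) or states[i] != states[start]:
            runs.setdefault(states[start], []).append((i - start) * dt)
            start = i
    return {s: statistics.fmean(d) for s, d in runs.items()}

# Synthetic test signal (hypothetical numbers): 200 samples low, 100 high, repeated.
rng = random.Random(0)
truth = ([0] * 200 + [1] * 100) * 10
raw = [s * 1.0 + rng.gauss(0.0, 0.3) for s in truth]

smooth = median_filter(raw)
levels = find_two_levels(smooth)
mid = sum(levels) / 2
frac_low = sum(v <= mid for v in smooth) / len(smooth)
priors = (frac_low, 1.0 - frac_low)
# Noise spread estimated from the distance of each sample to its nearest level.
sigma = statistics.pstdev([v - min(levels, key=lambda m: abs(v - m)) for v in smooth])
states = digitize(smooth, levels, sigma, priors)
dwell = mean_dwell_times(states, dt=1.0)
accuracy = sum(a == b for a, b in zip(states, truth)) / len(truth)
print(f"levels={levels}, accuracy={accuracy:.3f}, mean dwell={dwell}")
```

The modular layout is the point of the sketch: each stage takes plain lists in and hands plain lists out, so any one of them could be swapped for a more capable method without touching the others.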

Putting the method to the test

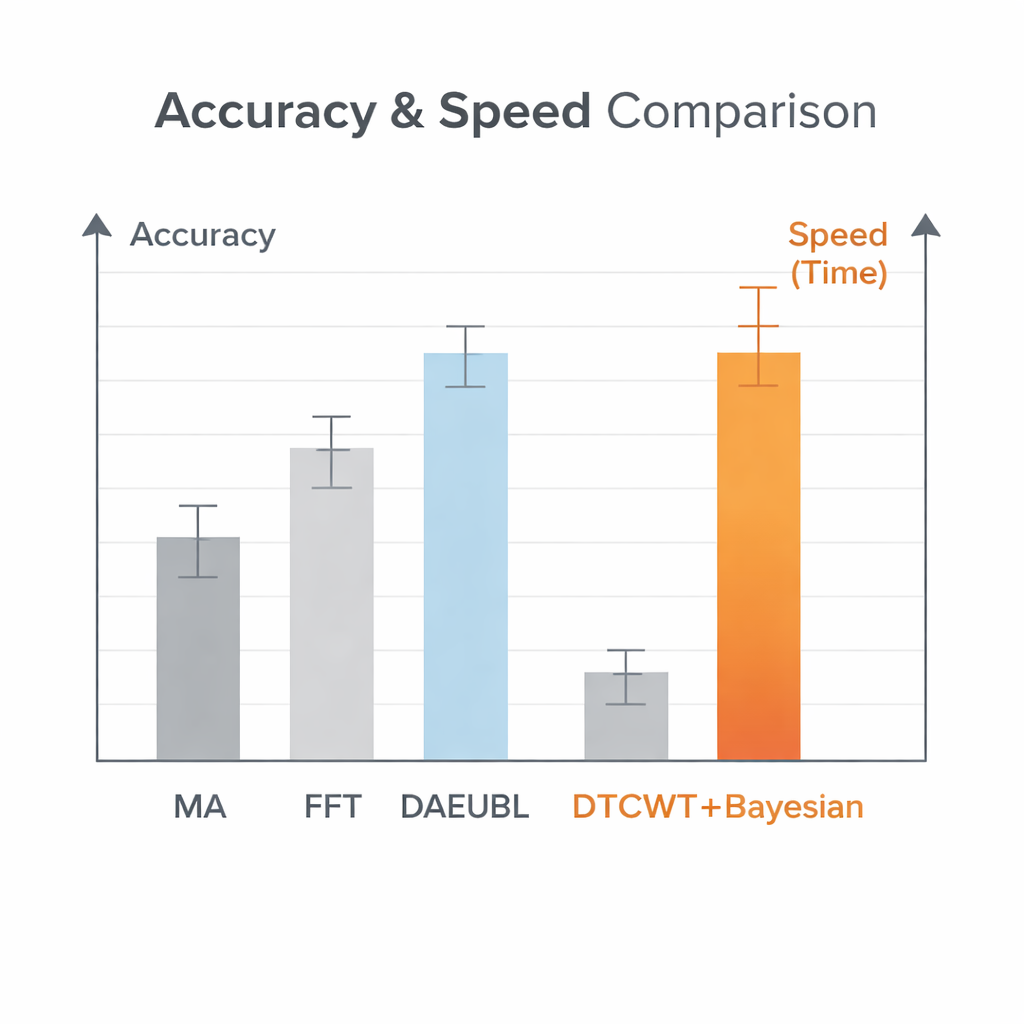

To judge how well the pipeline works, the team built large synthetic data sets in which the true jump patterns are known in advance. They generated thousands of random telegraph signals with one, two, or three independent “traps,” then mixed in controlled amounts of white or pink noise. This allowed them to check how accurately different methods recover key quantities: the number of active traps, the size of each jump, the fraction of time each state is active, and how long the signal lingers in each state before switching. They compared four complete workflows: simple moving-average filtering, filtering in the frequency domain, a powerful neural-network-based denoiser, and their new wavelet-plus-Bayesian pipeline. While the neural network achieved the highest score on a basic signal-to-noise measure, the new method more consistently identified the correct number of traps, estimated jump sizes more accurately, and remained robust even when noise levels were very high or pink noise was dominant.
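The benchmark idea can be sketched as follows, assuming each trap is an independent two-state switch whose contribution simply adds to the others. The trap amplitudes, dwell times, and noise level below are hypothetical, and the helper names are not taken from the paper. The code builds a three-trap signal and computes two of the quantities mentioned above, the fraction of time in the high state and the mean dwell time, so they can be compared with the values used to generate the data.

```python
import random

def trap_sequence(n, tau_low, tau_high, rng):
    """Occupancy (0 or 1) of one trap; mean dwell times are given in samples."""
    state, seq = 0, []
    for _ in range(n):
        if rng.random() < 1.0 / (tau_high if state else tau_low):
            state = 1 - state
        seq.append(state)
    return seq

def duty_cycle(seq):
    """Fraction of samples spent in the high state."""
    return sum(seq) / len(seq)

def mean_dwell(seq, state):
    """Mean length, in samples, of uninterrupted runs in `state`."""
    runs, length = [], 0
    for s in seq:
        if s == state:
            length += 1
        elif length:
            runs.append(length)
            length = 0
    if length:
        runs.append(length)
    return sum(runs) / len(runs)

rng = random.Random(1)
n = 60_000
# Hypothetical traps: (jump amplitude, mean low dwell, mean high dwell).
traps = [(1.0, 200, 100), (0.45, 150, 150), (0.2, 80, 240)]
sequences = [trap_sequence(n, lo, hi, rng) for _, lo, hi in traps]

# The clean signal is the sum of all trap contributions; white noise is added on top.
clean = [sum(a * seq[i] for (a, _, _), seq in zip(traps, sequences)) for i in range(n)]
noisy = [v + rng.gauss(0.0, 0.3) for v in clean]
n_levels = len(set(clean))

for (amp, lo, hi), seq in zip(traps, sequences):
    expected = hi / (lo + hi)
    print(f"amp={amp}: duty cycle {duty_cycle(seq):.3f} (expected {expected:.3f}), "
          f"mean high dwell {mean_dwell(seq, 1):.1f} samples (expected {hi})")
```

Because N independent traps can produce up to 2^N distinct amplitude levels, three traps can yield as many as eight, which is part of why counting traps correctly in heavy noise is a demanding test.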

Fast enough for real-time devices

Beyond accuracy, speed and memory demands are critical when dealing with very long recordings. A single hundred-second measurement at nanosecond resolution can contain billions of data points, too large for many neural-network models to process in a reasonable time. The proposed pipeline processes long signals up to about 83 times faster than the neural baseline, at the cost of using up to three times more memory, which is still a practical trade-off on modern hardware. The authors also apply their method to real data from carbon nanotube devices operated at low temperatures. Even though there is no “ground truth” in these experiments, the pipeline produces clear, interpretable step patterns and reasonable state statistics without any retraining or device-specific tuning, and offers knobs for experts who wish to explore alternative interpretations.
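A quick back-of-the-envelope calculation shows the scale involved. It assumes exactly one sample per nanosecond and 8 bytes per sample; both figures are illustrative assumptions, not values taken from the paper.

```python
duration_s = 100                  # recording length mentioned in the text
sample_rate_hz = 1_000_000_000    # assumption: exactly one sample per nanosecond
bytes_per_sample = 8              # assumption: 64-bit floating-point samples

n_samples = duration_s * sample_rate_hz
size_gb = n_samples * bytes_per_sample / 1e9
print(f"{n_samples:.0e} samples, about {size_gb:.0f} GB of raw data")
```

Under these assumptions a single recording holds 10^11 samples and occupies about 800 GB before any processing, which helps explain why per-sample cost matters so much.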

What this means going forward

In simple terms, this work delivers a reliable “click detector” for very noisy, high-speed measurements. It shows that with carefully designed, training-free tools, researchers can automatically clean up complex random telegraph signals, correctly count how many independent switching sites are present, and measure how strongly and how often they act. Because the method is fast, transparent, and easy to adapt, it can underpin future automated test benches for semiconductor manufacturing, quantum random number generators, and studies of fluctuating signals in chemistry and biology. Rather than being a one-off trick, the pipeline serves as a foundation on which more specialized or smarter modules can be built for increasingly complex devices.

Citation: Bai, T., Kapoor, A. & Kim, N.Y. A high-performance training-free pipeline for robust random telegraph signal characterization via adaptive wavelet-based denoising and Bayesian digitization methods. Sci Rep 16, 7455 (2026). https://doi.org/10.1038/s41598-026-36656-2

Keywords: random telegraph signal, signal denoising, Bayesian analysis, semiconductor noise, time series