Clear Sky Science

Intelligent recognition of students’ behavior for smart learning environments

Why smarter classrooms need to see what students are doing

In many classrooms, teachers must guess who is following along, who is lost, and who is quietly off-task. This paper explores how artificial intelligence can automatically recognize what students are doing—such as reading, writing, or raising a hand—from ordinary classroom photos. By turning raw images into reliable measures of classroom activity, the system aims to give teachers real-time feedback on engagement without relying on time‑consuming observation or intrusive monitoring.

From messy photos to focused snapshots

Real classrooms are crowded, busy, and visually confusing. A single image may contain dozens of students, overlapping bodies, and distracting background details like walls, screens, and posters. The authors build on a public image collection called SCB‑05, which contains thousands of classroom photos labeled with specific behaviors—such as hand‑raising, reading, writing, standing, talking, or interacting at the blackboard. Instead of feeding entire scenes to the computer, the system first uses annotation files to crop out just the regions around each student or teacher. This preprocessing step removes much of the visual clutter, so the model can focus on posture, hand position, and other clues that distinguish one behavior from another.
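The cropping step can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code. It assumes each annotation line uses a YOLO-style layout, `class cx cy w h`, with coordinates normalized to the image size. The function names `yolo_to_pixel_box` and `crop_region` are hypothetical, and the image is represented as a plain list of pixel rows to keep the example dependency-free.

```python
def yolo_to_pixel_box(line, img_w, img_h):
    """Convert one 'class cx cy w h' line (normalized, assumed format)
    into (class_id, left, top, right, bottom) in pixels."""
    parts = line.split()
    class_id = int(parts[0])
    cx, cy, w, h = (float(v) for v in parts[1:5])
    # Clamp to the image so boxes near the border stay valid.
    left = max(0, round((cx - w / 2) * img_w))
    top = max(0, round((cy - h / 2) * img_h))
    right = min(img_w, round((cx + w / 2) * img_w))
    bottom = min(img_h, round((cy + h / 2) * img_h))
    return class_id, left, top, right, bottom


def crop_region(image_rows, box):
    """Cut the boxed region out of an image stored as a list of pixel rows."""
    _, left, top, right, bottom = box
    return [row[left:right] for row in image_rows[top:bottom]]


# Toy usage: a blank 100x50 "photo" and one made-up annotation line.
image = [[0] * 100 for _ in range(50)]
box = yolo_to_pixel_box("2 0.5 0.5 0.2 0.4", img_w=100, img_h=50)
patch = crop_region(image, box)
print(box, len(patch), len(patch[0]))
```

Each cropped region, paired with its class label, then becomes one training example, so the model never sees the surrounding clutter.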

How the AI learns new behaviors from very few examples

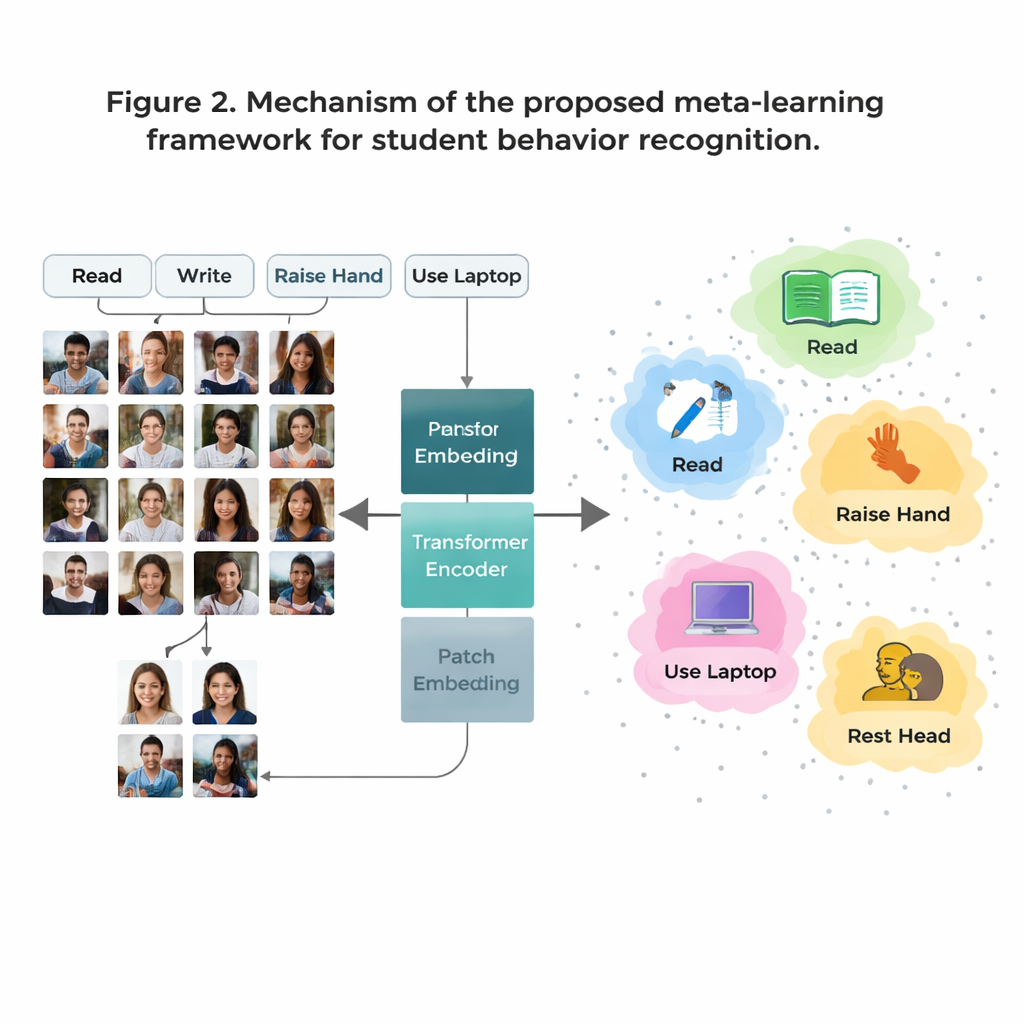

A major hurdle is that some classroom behaviors are common in the data (like reading) while others are rare (like brief on‑stage interactions). Collecting enough labeled images for every possible behavior is expensive and raises privacy concerns. To overcome this, the authors use a strategy called “few‑shot learning,” in which the model is trained to recognize new classes from only a handful of examples. They organize training as many small tasks, each containing just a few behaviors and a few sample images per behavior. For every task, the system forms a simple “prototype” for each behavior by averaging its internal representation of those examples. New images are then classified by seeing which prototype they are closest to, allowing the model to adapt quickly even when data are scarce.
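The prototype idea described above can be sketched as follows. This is a simplified illustration under stated assumptions: the embeddings are made-up two-dimensional vectors standing in for the model's internal representation, Euclidean distance is assumed as the measure of closeness (the summary does not say which distance the authors use), and the names `build_prototypes` and `classify` are hypothetical.

```python
import math


def mean_vector(vectors):
    """Average a list of equal-length vectors element by element."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def build_prototypes(support):
    """support maps each behavior to its few example embeddings;
    the prototype is simply their mean."""
    return {label: mean_vector(vecs) for label, vecs in support.items()}


def classify(query, prototypes):
    """Assign the query to the behavior whose prototype is nearest
    (Euclidean distance is an assumption of this sketch)."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# One toy task: two behaviors, two example embeddings each (invented values).
support = {
    "reading": [[1.0, 0.0], [0.8, 0.2]],
    "hand_raising": [[0.0, 1.0], [0.2, 0.8]],
}
prototypes = build_prototypes(support)
print(classify([0.9, 0.1], prototypes))
```

Because a prototype is just an average, adding a new behavior requires only a handful of embedded examples rather than retraining a classifier.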

Seeing the whole classroom, not just small details

Traditional image systems called convolutional neural networks tend to focus on small local patterns, like edges or textures. That can be limiting when two behaviors, such as reading and writing, look very similar up close. This work replaces those older networks with a Vision Transformer, a model that splits each image into patches and learns how all patches relate to one another. This global view helps the system understand subtle posture differences and long‑range cues—such as the relationship between a raised hand and a teacher at the front of the room. The team further sharpens the model by training it to pull together images of the same behavior while pushing apart look‑alike but different behaviors, with extra emphasis on “hard” confusing cases. This makes the internal map of behaviors cleaner and easier to separate.
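Both ideas in this section can be illustrated with a short sketch. The first function shows the patch-splitting step that a Vision Transformer starts from. The second shows one common way to emphasize hard cases, a batch-hard triplet loss. That loss is a stand-in chosen for illustration: the summary does not specify the authors' exact objective, and the margin value, function names, and toy embeddings below are all assumptions.

```python
import math


def split_into_patches(image, patch):
    """Split a 2D image (list of rows) into non-overlapping patch x patch
    tiles, in row-major order, each flattened into a list."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by patch size")
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            patches.append([image[r][c]
                            for r in range(top, top + patch)
                            for c in range(left, left + patch)])
    return patches


def batch_hard_triplet_loss(embeddings, labels, margin=0.5):
    """For each anchor, take its farthest same-label example and its
    nearest different-label example, then penalize the anchor when the
    positive is not closer than the negative by at least `margin`."""
    losses = []
    for i, (anchor, label) in enumerate(zip(embeddings, labels)):
        pos = [math.dist(anchor, e)
               for j, (e, l) in enumerate(zip(embeddings, labels))
               if l == label and j != i]
        neg = [math.dist(anchor, e)
               for e, l in zip(embeddings, labels) if l != label]
        if not pos or not neg:
            continue  # anchor has no usable positive or negative
        losses.append(max(0.0, max(pos) - min(neg) + margin))
    return sum(losses) / len(losses) if losses else 0.0


# A 4x4 toy image becomes four 2x2 patches.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
print(split_into_patches(image, 2))

# A "writing" example sits between two "reading" examples: a hard case.
print(batch_hard_triplet_loss([[0, 0], [2, 0], [1, 0]],
                              ["reading", "reading", "writing"]))
```

In a real model each flattened patch would be projected to a vector and passed through attention layers, and the loss would be minimized by gradient descent. Here the loss is only evaluated, which is enough to show that well-separated behaviors incur no penalty while look-alike ones do.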

How well it works and why it matters

On the SCB‑05 benchmark, the proposed method reaches about 91% overall accuracy and scores well on more demanding measures that account for imbalanced data. Common behaviors like reading and hand‑raising are recognized especially well, while rarer ones like blackboard writing remain more challenging but are still recognized more accurately than by earlier systems. Visual inspections of the model’s internal clusters show that different behaviors form tight, well‑separated groups, indicating that the AI has learned distinct “signatures” of classroom actions. When tested on a different classroom dataset with new camera angles and layouts, performance dropped only slightly, suggesting that the learned representation is not tied to a single room or school.
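A small sketch shows why overall accuracy alone can mislead when some behaviors are rare. The summary does not name the imbalance-aware measures the authors report. Macro-averaged F1 is used here only as one common example, and the labels and counts are invented for illustration.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def macro_f1(y_true, y_pred):
    """Compute F1 per class, then average with equal weight per class,
    so a rare behavior counts as much as a common one."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Toy data: a lazy model that predicts the common class every time.
y_true = ["reading"] * 8 + ["blackboard_writing"] * 2
y_pred = ["reading"] * 10
print(accuracy(y_true, y_pred), macro_f1(y_true, y_pred))
```

In this toy case accuracy looks respectable even though the rare class is never detected, while the macro-averaged score drops sharply. That gap is the reason imbalance-aware measures are considered more demanding.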

What this means for teaching and learning

In everyday terms, the study shows that computers can reliably spot many key student behaviors from still images, even when they have seen only a few examples of each. Rather than replacing teachers, such systems could quietly summarize who is engaged, who frequently seeks help, or which activities tend to lose attention—all without tracking student identities. With further work on privacy, fairness, and video over time, this kind of behavior‑aware AI could become a powerful ally for educators designing more responsive and inclusive learning environments.

Citation: Abozeid, A., Alrashdi, I. & Al-Makhlasawy, R.M. Intelligent recognition of students’ behavior for smart learning environments. Sci Rep 16, 5674 (2026). https://doi.org/10.1038/s41598-026-36633-9

Keywords: smart classroom, student behavior, computer vision, few-shot learning, vision transformer