Clear Sky Science

AI-Assisted detection of corneal nerve structural abnormalities in early diabetic keratopathy: development and validation of a deep learning framework

Why tiny eye nerves matter in diabetes

Diabetes is well known for damaging large nerves in the feet and legs, often leading to pain, numbness, and even amputations. But long before this damage becomes obvious, the smallest nerves in the body can start to malfunction. The clear window at the front of the eye—the cornea—is packed with these tiny fibers. This study shows how advanced imaging and artificial intelligence (AI) can work together to spot early nerve damage in the cornea, potentially offering a new, painless way to catch nerve problems in people with diabetes before they become severe.

Seeing early nerve damage through the eye

Today’s tests for diabetic nerve damage are far from perfect. Simple bedside checks depend on a doctor’s skill and the patient’s responses, and they often miss subtle, early changes. More precise tests, like nerve conduction studies or skin biopsies, are invasive, expensive, and not practical for routine screening. The cornea, however, can be examined noninvasively using in vivo confocal microscopy, a specialized camera that captures highly magnified images of corneal nerves. Researchers have already shown that overall loss of these nerves tracks with the severity of diabetic nerve disease. But the earliest warning signs are not always about how many nerves there are; they can be about tiny structural defects along otherwise intact fibers.

Focusing on tiny hotspots called microneuromas

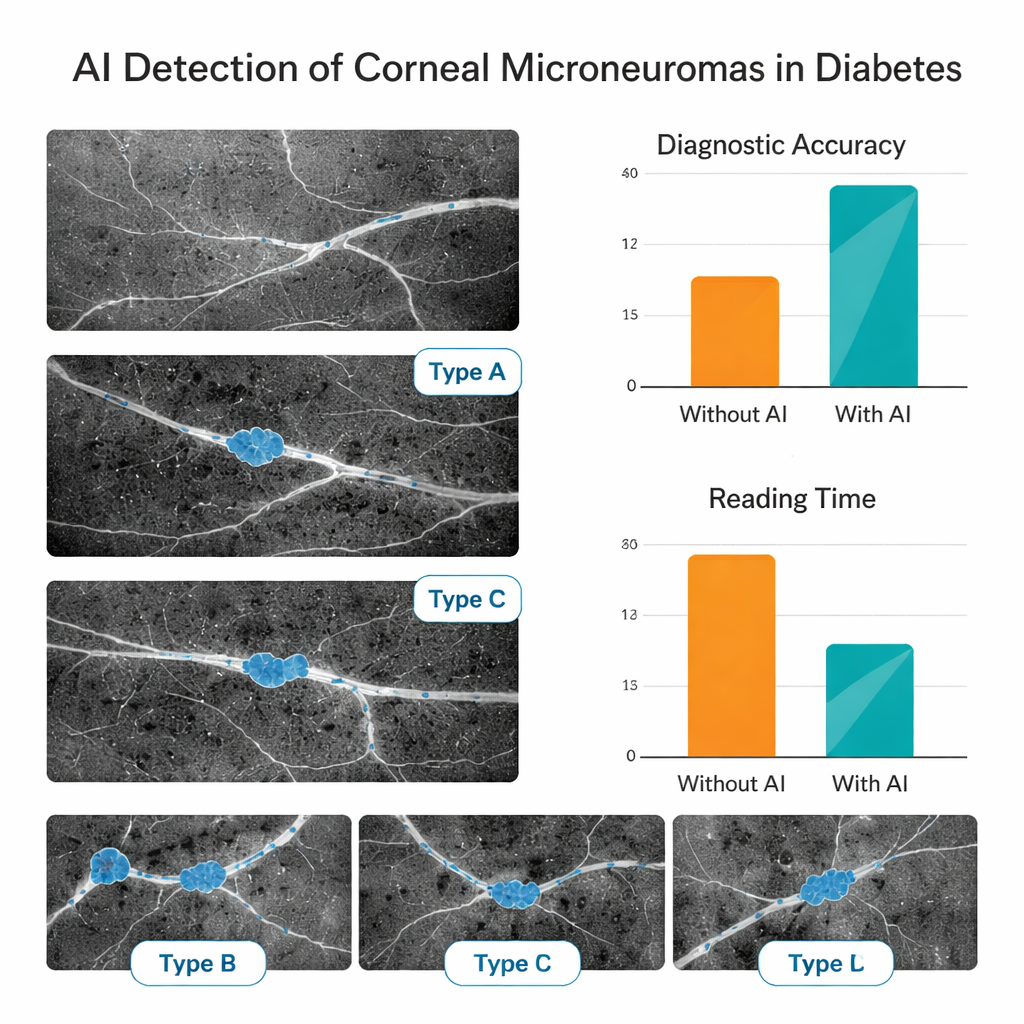

In recent years, doctors using high-powered microscopes have noticed small, bright, swollen spots along corneal nerves in people with diabetes. These “microneuromas” are thought to reflect stressed or regenerating nerve endings and may appear before large areas of nerve loss. The team behind this study set out to teach a computer to recognize these subtle features automatically. They gathered more than 5,000 corneal images from people with diabetes and healthy volunteers at two eye centers in China. Experienced corneal specialists carefully filtered out poor-quality images, labeled where microneuromas were present, and sorted the lesions into three visible patterns: localized swellings, larger bulb-like enlargements, and more diffuse bright patches.

Training an AI assistant to read nerve images

Using these expert-labeled images, the researchers built a multi-step deep learning system. First, one AI model screened out blurry or off-target images and kept only those that clearly showed the key nerve layer. A second model judged whether a given image contained microneuromas at all. A third outlined the exact regions where these lesions appeared, and three additional models categorized them into the three visual types. The system was trained on data from one hospital and then tested both on unseen images from the same center and on a fully independent group from another hospital, to check whether it worked reliably across different patient groups and imaging sessions.
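For readers who think in code, the staged design described above can be pictured as a cascade in which each step runs only if the previous one passes. The sketch below is purely illustrative and is not the authors' implementation: every name (`run_cascade`, `Report`, the `toy_*` functions) is hypothetical, the "models" are simple brightness thresholds standing in for trained networks, and picking the highest-scoring of three per-subtype scorers is an assumption about how the three subtype models might be combined.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Image = List[List[float]]   # toy grayscale image, pixel values in [0, 1]
Region = Tuple[int, int]    # toy "region": a single (row, col) pixel

@dataclass
class Report:
    usable: bool
    has_microneuroma: Optional[bool] = None   # None means "not assessed"
    regions: List[Region] = field(default_factory=list)
    subtypes: List[str] = field(default_factory=list)

def run_cascade(
    image: Image,
    quality_model: Callable[[Image], bool],
    detect_model: Callable[[Image], bool],
    segment_model: Callable[[Image], List[Region]],
    subtype_models: Dict[str, Callable[[Image, Region], float]],
) -> Report:
    # Stage 1: discard blurry or off-target frames.
    if not quality_model(image):
        return Report(usable=False)
    # Stage 2: decide whether any microneuroma is present at all.
    if not detect_model(image):
        return Report(usable=True, has_microneuroma=False)
    # Stage 3: outline where the lesions are.
    regions = segment_model(image)
    # Stage 4 (assumption): one scorer per subtype, keep the best-scoring label.
    subtypes = [
        max(subtype_models, key=lambda name: subtype_models[name](image, region))
        for region in regions
    ]
    return Report(True, True, regions, subtypes)

# Hypothetical stand-ins for the trained models (plain thresholds).
def toy_quality(img: Image) -> bool:
    return sum(map(sum, img)) / (len(img) * len(img[0])) > 0.05

def toy_detect(img: Image) -> bool:
    return any(p > 0.9 for row in img for p in row)

def toy_segment(img: Image) -> List[Region]:
    return [(r, c) for r, row in enumerate(img) for c, p in enumerate(row) if p > 0.9]

toy_subtypes = {
    "localized swelling": lambda img, reg: 0.7,
    "bulb-like enlargement": lambda img, reg: 0.2,
    "diffuse bright patch": lambda img, reg: 0.1,
}

blank = [[0.0, 0.0], [0.0, 0.0]]      # fails quality control
lesion = [[0.2, 0.95], [0.2, 0.2]]    # one very bright pixel

print(run_cascade(blank, toy_quality, toy_detect, toy_segment, toy_subtypes))
print(run_cascade(lesion, toy_quality, toy_detect, toy_segment, toy_subtypes))
```

The point of the early returns is that an unusable frame never reaches the later, more specialized steps, so each downstream model only sees the kind of image it was trained on.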

How well the AI performed in practice

The AI proved highly accurate at basic quality control, correctly judging usable images more than 97% of the time. When deciding whether microneuromas were present, it correctly classified images roughly 81–84% of the time in both internal and external test sets. Its ability to segment and subtype lesions was also strong, with performance remaining reasonably high even on data from the second center. To see whether this mattered in real-world reading, the team asked junior eye doctors—with little formal training in this imaging technique—to read a separate set of 150 images first on their own and then with AI support. With the AI’s guidance, their diagnostic accuracy jumped from about 69% to 88%, and their average reading time per image was cut by more than half, suggesting that such tools could speed up clinics and reduce eye strain for clinicians.
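To put the reader-study numbers in perspective, the short calculation below works through the gain in accuracy. It uses only the rounded figures quoted above (about 69% and 88%, on a 150-image set) and assumes they apply to the full set, so the results are approximations rather than values reported by the study.

```python
# Rounded figures from the reader study described above.
unaided_pct = 69      # approximate accuracy without AI support, in percent
assisted_pct = 88     # approximate accuracy with AI support, in percent
n_images = 150        # size of the reading set

# Absolute gain, in percentage points.
abs_gain = assisted_pct - unaided_pct

# Relative gain compared with the unaided starting point.
rel_gain = abs_gain / unaided_pct

# Rough number of additional images read correctly per 150-image set.
extra_correct = n_images * abs_gain / 100

print(f"Absolute gain: {abs_gain} percentage points")
print(f"Relative gain: {rel_gain:.1%}")
print(f"Extra correct reads per {n_images} images: about {extra_correct}")
```

In other words, a 19-point improvement corresponds to roughly 28 or 29 more images interpreted correctly out of every 150.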

What this could mean for people with diabetes

This study shows that a carefully trained AI system can automatically find and describe tiny nerve abnormalities in the cornea, and that doing so can substantially help less-experienced doctors interpret complex eye scans. While the research is still early and based on retrospective data from two centers, it strengthens the idea that the eye’s surface can act as a “window” into the health of the body’s small nerves. If future multi-center, long-term studies confirm that corneal microneuromas reliably signal early diabetic nerve damage, this kind of AI-assisted imaging could become a quick, noninvasive way to screen people with diabetes, track progression, and perhaps intervene before nerve injury becomes permanent.

Citation: Pan, J., Shi, X., Wan, L. et al. AI-Assisted detection of corneal nerve structural abnormalities in early diabetic keratopathy: development and validation of a deep learning framework. Sci Rep 16, 5846 (2026). https://doi.org/10.1038/s41598-026-36576-1

Keywords: diabetic neuropathy, corneal nerves, microneuromas, deep learning, in vivo confocal microscopy