Clear Sky Science

Adapting quality function deployment to translate patient feedback into prioritized technical requirements for healthcare artificial intelligence

Why Patient Voices Matter for Hospital AI

When you leave an online review after a hospital visit, it can feel like your words vanish into the void. This study shows how those comments could instead become a powerful steering wheel for the artificial intelligence (AI) tools hospitals are increasingly using to monitor quality and patient experience. By turning thousands of patient reviews into clear priorities for engineers, the authors propose a way to build hospital AI that is not only smart on paper but genuinely responsive, fair, and useful in real life.

From Online Reviews to Actionable Signals

The researchers began with a simple question: what if we treated patient comments as the main blueprint for designing healthcare AI? They collected nearly 15,000 Google Maps reviews from 53 private hospitals in one Malaysian state and focused on the 1,279 reviews that raised serious complaints. Rather than relying on a few experts to read everything by hand, they used large language models—advanced text-processing AI—to sort each comment into detailed themes such as staff behavior, communication issues, waiting times, billing problems, and accessibility. Human experts checked a sample and found strong agreement with the AI’s coding, suggesting that this automated reading of patient voices was reliable enough to guide design decisions.
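To make the idea of "agreement" between human experts and the AI concrete, here is a minimal sketch of one common agreement measure, Cohen's kappa, which corrects raw agreement for matches expected by chance. This summary does not say which statistic the authors used, so the choice of kappa is an assumption, and the labels below are invented for illustration only.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two raters over the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both raters must label the same non-empty set of items")
    n = len(labels_a)
    # Share of items on which the two raters gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each rater labelled at random with their own label rates.
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical theme labels for six reviews (not data from the study).
human = ["staff", "waiting", "billing", "staff", "communication", "waiting"]
model = ["staff", "waiting", "billing", "communication", "communication", "waiting"]

kappa = cohen_kappa(human, model)
print(f"Cohen's kappa: {kappa:.2f}")
```

Values near 1 indicate agreement well beyond chance, while values near 0 indicate agreement no better than chance.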

What Patients Actually Complain About

When the team grouped the detailed themes into broader categories, a clear picture emerged. The most common concerns were about how patients were treated as people, not just about their medical conditions. Service quality, professionalism, and communication each appeared in almost 40% of complaints, followed closely by long waiting times and appointment issues. Topics like facilities, finances, and patient rights also appeared, but less often. Using statistical techniques, the authors condensed these patterns into six broad “need” areas: service and communication, clinical care and experience, patient flow, amenities, money matters, and rights and access. They then rated how serious and how frequent each problem was, producing a score that shows which areas most urgently need improvement.
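One simple way to combine "how frequent" and "how serious" into a single score is to multiply the two and normalize the results so they sum to 1. The sketch below assumes that product form; the summary does not give the authors' actual formula, and every number here is a made-up placeholder rather than a result from the study.

```python
# Hypothetical inputs: (share of complaints mentioning the need, severity on a 1-5 scale).
# Shares need not sum to 1 because one review can raise several needs.
needs = {
    "service and communication": (0.38, 4),
    "clinical care and experience": (0.20, 5),
    "patient flow": (0.30, 3),
    "amenities": (0.10, 2),
    "money matters": (0.12, 3),
    "rights and access": (0.05, 4),
}


def importance_scores(needs):
    """Return normalized frequency-times-severity scores for each need."""
    raw = {name: freq * severity for name, (freq, severity) in needs.items()}
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


scores = importance_scores(needs)
# Sort from most to least urgent.
ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
for name, score in ranked:
    print(f"{name}: {score:.2f}")
```

With a product form, a rare but severe problem can outrank a common but mild one, which is the reason for rating both dimensions rather than counting complaints alone.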

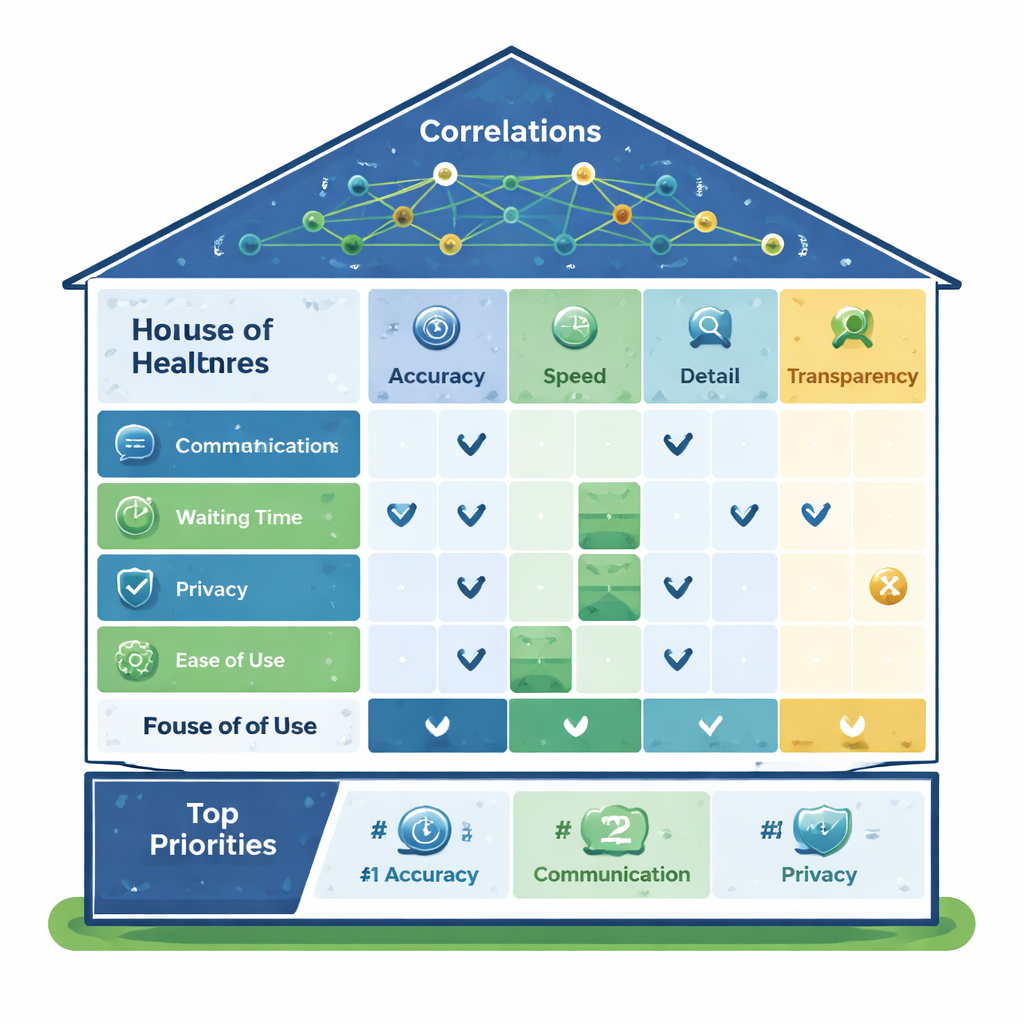

Building a House of Needs and Solutions

To connect what patients want with how engineers build AI systems, the authors adapted a design method called Quality Function Deployment, often visualized as a “House of Quality.” On the left side of this house sit the patient needs; across the top are the AI features that can be tuned, such as how accurately the system reads text, how precisely it detects sentiment, how finely it can sort comments into categories, how quickly it works, and how well it filters out fake reviews. In the middle is a grid showing how strongly each technical feature helps address each patient need. At the bottom, the method calculates priority scores, showing which AI capabilities should receive the most investment if the goal is to improve real patient experience rather than just technical benchmarks.
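The bottom-row calculation in a House of Quality is conventionally a weighted sum: each technical feature's priority is the sum, over all needs, of the need's importance weight times the strength of its relationship to that feature. The sketch below follows that convention with the commonly used 0/1/3/9 relationship scale. The weights and grid values are hand-picked placeholders, not figures from the study, and whether the authors used this exact scale is an assumption.

```python
# Technical features (columns of the house), named after those listed above.
features = [
    "text interpretation",
    "sentiment analysis",
    "granular categorization",
    "processing speed",
    "fake review filtering",
]

# Hypothetical importance weights for the six patient needs (rows); they sum to 1.
need_weights = {
    "service and communication": 0.35,
    "clinical care and experience": 0.20,
    "patient flow": 0.20,
    "amenities": 0.10,
    "money matters": 0.10,
    "rights and access": 0.05,
}

# Hypothetical relationship grid: 0 = none, 1 = weak, 3 = moderate, 9 = strong.
relationships = {
    "service and communication": [3, 9, 9, 1, 1],
    "clinical care and experience": [9, 3, 9, 1, 1],
    "patient flow": [3, 3, 9, 3, 0],
    "amenities": [3, 3, 3, 1, 1],
    "money matters": [3, 1, 9, 1, 3],
    "rights and access": [3, 3, 3, 1, 0],
}


def technical_priorities(need_weights, relationships, features):
    """Weighted column sums of the relationship grid, normalized to sum to 1."""
    totals = [0.0] * len(features)
    for need, weight in need_weights.items():
        for j, strength in enumerate(relationships[need]):
            totals[j] += weight * strength
    grand_total = sum(totals)
    return {feature: total / grand_total for feature, total in zip(features, totals)}


priorities = technical_priorities(need_weights, relationships, features)
for feature, share in sorted(priorities.items(), key=lambda item: item[1], reverse=True):
    print(f"{feature}: {share:.2f}")
```

Because the output depends entirely on the weights and grid entries, changing either one reshuffles the ranking, which is why the method ties engineering priorities directly to what patients report.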

Which AI Features Matter Most

The analysis revealed a clear hierarchy. The top priority was “granular categorization”—the ability of the AI to sort patient comments into very specific, meaningful buckets rather than vague labels. Close behind came accurate sentiment analysis and solid basic text interpretation (how faithfully the AI understands what patients are saying). Together, these form a critical cluster: organizing what people talk about, capturing how they feel, and reading their words correctly. Human–AI agreement—how closely the system’s judgments match those of human reviewers—ranked next, highlighting the need for oversight and trust. Speed and real-time processing also mattered, but the study found trade-offs: pushing for ultra-fast responses can undermine the depth and detail of analysis. Detecting fake reviews, while useful for data quality, ranked lowest in direct impact on patient satisfaction.

What This Means for Patients and Hospitals

For a lay reader, the takeaway is straightforward: if hospitals want AI to improve care you actually feel, they must start by listening carefully to patient voices at scale and then design their technology around those concerns. This framework offers a step-by-step way to do that, turning messy review text into a ranked list of features for engineers to build and improve. While the current results come from private hospitals in Malaysia and still need real-world testing in other settings, the core idea is widely applicable: measure what matters to patients, link it systematically to how AI is built, and keep repeating the cycle. Done well, this approach could help move healthcare AI from impressive lab scores to tangible gains in courtesy, clarity, timeliness, and trust at the bedside.

Citation: Muda, N., Sulaiman, M.H. Adapting quality function deployment to translate patient feedback into prioritized technical requirements for healthcare artificial intelligence. Sci Rep 16, 5713 (2026). https://doi.org/10.1038/s41598-026-36550-x

Keywords: patient feedback, healthcare AI, human-centered design, quality improvement, natural language processing