Serial femtosecond crystallography data processing at the global science data hub center at KISTI

Why tiny crystals need big computers

Modern X‑ray lasers can take “molecular movies” of proteins and other molecules by firing ultra‑short, ultra‑bright pulses at countless tiny crystals. This approach, called serial femtosecond crystallography, produces a flood of images that reveal how molecules look and move at room temperature. But there is a catch: a single experiment can generate terabytes of data, far more than a typical lab computer can handle quickly. This paper explains how Korea’s national data hub, GSDC at KISTI, was built and tested to process these huge datasets efficiently, and what practical lessons scientists can use to get from raw images to 3D structures without long delays.

From laser flashes to structure snapshots

In serial femtosecond crystallography, an X‑ray free electron laser (XFEL) fires rapid pulses at streams or arrays of microscopic crystals. Each crystal is hit only once, producing a single “snapshot” diffraction pattern before it is destroyed. To reconstruct the full three‑dimensional structure of the molecule, scientists must combine hundreds of thousands to millions of these snapshots. Many images are useless—some contain no signal, others show multiple overlapping crystals. Useful images (“hits”) have to be detected, sorted, and converted into intensity data that can be merged into a high‑quality structure. Doing this in anything close to real time demands high‑performance computing, especially when the laser is running at tens of pulses per second.

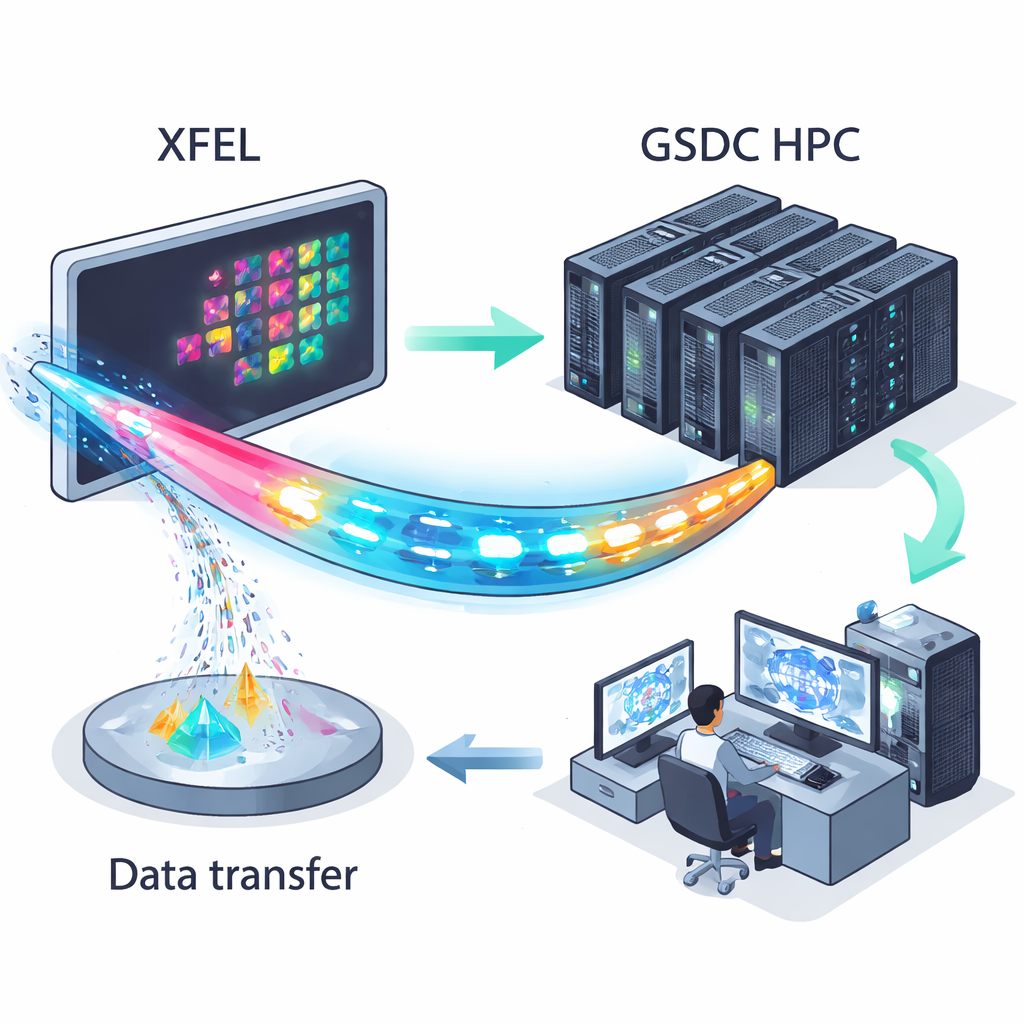

A national data hub for X‑ray experiments

The Global Science Data hub Center (GSDC) at KISTI was set up as a national‑scale facility to serve data‑intensive sciences, from particle physics to genomics. For serial crystallography at the Pohang Accelerator Laboratory XFEL (PAL‑XFEL), GSDC operates three dedicated servers equipped with dozens of CPU cores, hundreds of gigabytes of memory, and a high‑speed parallel storage system. During experiments at PAL‑XFEL’s nanocrystallography station, diffraction images are collected on a fast X‑ray detector and streamed to GSDC over a 10‑gigabit‑per‑second link. A single 12–24 hour experiment can generate several to almost ten terabytes of data. At GSDC, users log in remotely, filter out non‑useful frames, and run specialized software—such as CrystFEL and its associated indexing programs—to turn raw images into refined structural data.
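As a rough check on these figures, the short Python sketch below compares the average data rate of one experiment with the capacity of the link. It uses only the numbers quoted above (10 gigabits per second, up to about ten terabytes, 12 to 24 hours). It assumes decimal units and ignores protocol overhead and bursts, so treat the result as an order-of-magnitude estimate rather than a measurement.

```python
# Back-of-the-envelope check: does a 10 Gbit/s link keep up with one experiment?
# Assumptions (not from the paper): decimal units, no protocol overhead,
# data spread evenly over the whole run.

def average_rate_mb_per_s(dataset_tb: float, duration_h: float) -> float:
    """Average data rate in MB/s for a dataset of `dataset_tb` terabytes."""
    return dataset_tb * 1e6 / (duration_h * 3600)


def link_capacity_mb_per_s(gbit_per_s: float) -> float:
    """Theoretical link capacity in MB/s (8 bits per byte)."""
    return gbit_per_s * 1000 / 8


capacity = link_capacity_mb_per_s(10)
for hours in (12, 24):
    rate = average_rate_mb_per_s(10, hours)
    share = 100 * rate / capacity
    print(f"10 TB in {hours} h: {rate:.0f} MB/s, about {share:.0f}% of the link")
```

Under these assumptions the average rate stays well below the link's capacity, which suggests that processing, rather than transfer, is the more likely bottleneck.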

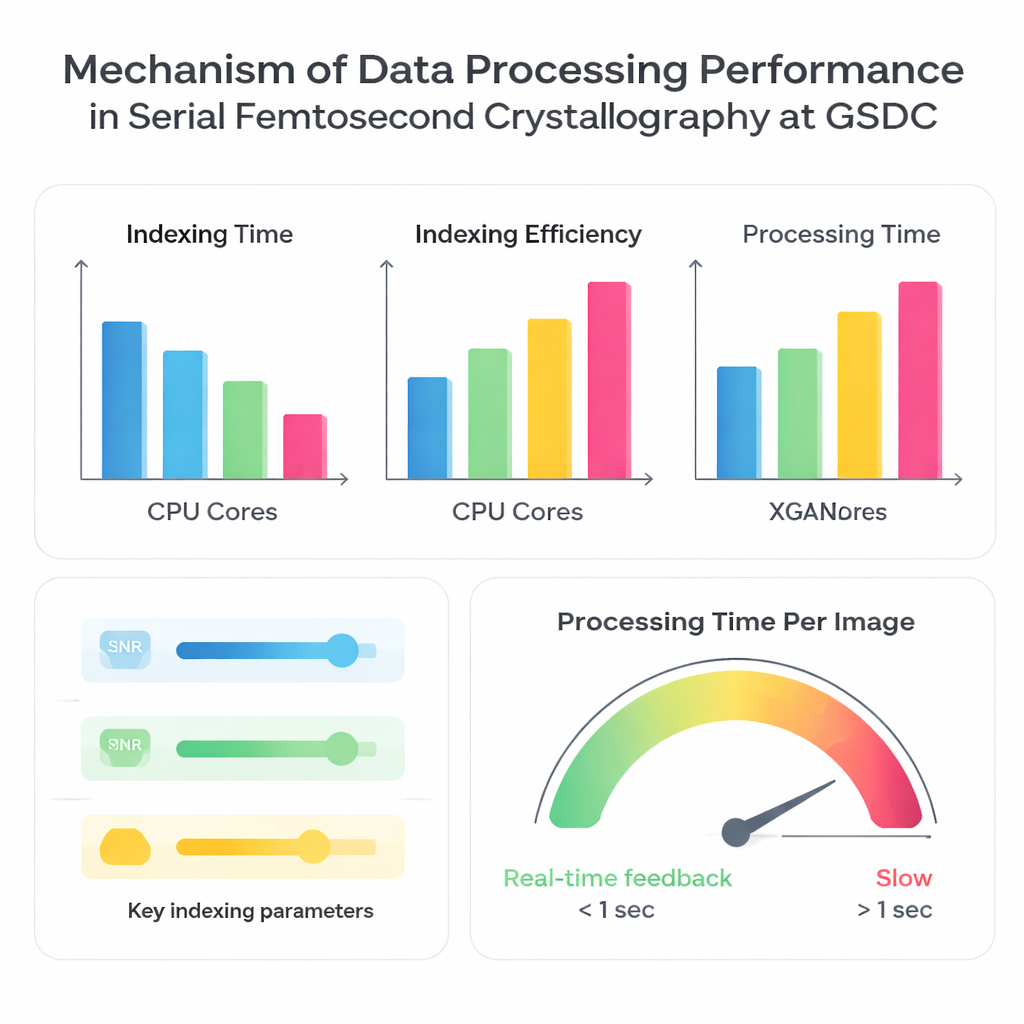

How many processors help, and when

The authors put the GSDC system to the test using three previously collected datasets from different proteins. First, they asked how much processing speed improves as more CPU cores are used in parallel. As expected, using more processors reduced the total time needed to index images, but not in proportion to the number of cores. Going from 10 to about 30–40 CPU cores gave strong gains, after which the benefits tapered off. Beyond that point, extra cores added coordination overhead, and throughput was held back by limits such as memory bandwidth, input/output speed when reading many small files, and synchronization among many parallel tasks. This makes clear that “more cores” is not always better; there is a sweet spot where the hardware is used efficiently without being bottlenecked.
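One common way to picture this kind of diminishing return is Amdahl's law, which says that any part of a job that cannot be parallelized caps the achievable speedup. The Python sketch below is an illustration only: the paper is not described as using this model, and the serial fraction of 3% is a made-up value, not a measured one. The model also ignores the extra overhead and input/output contention mentioned above, so real scaling can flatten out sooner.

```python
# Illustrative Amdahl's-law sketch of diminishing returns from extra CPU cores.
# SERIAL_FRACTION is a hypothetical value chosen for illustration only.

def amdahl_speedup(cores: int, serial_fraction: float) -> float:
    """Ideal speedup on `cores` cores when `serial_fraction` of the work is serial."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / cores)


def parallel_efficiency(cores: int, serial_fraction: float) -> float:
    """Speedup divided by core count: 1.0 means perfectly proportional scaling."""
    return amdahl_speedup(cores, serial_fraction) / cores


SERIAL_FRACTION = 0.03  # hypothetical: 3% of the work cannot run in parallel

for cores in (10, 20, 40, 80):
    speedup = amdahl_speedup(cores, SERIAL_FRACTION)
    efficiency = parallel_efficiency(cores, SERIAL_FRACTION)
    print(f"{cores:3d} cores: speedup {speedup:5.1f}x, efficiency {efficiency:.0%}")
```

In this toy model, each doubling of the core count buys less additional speedup than the one before, which is the same qualitative pattern as the tapering described above.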

The trade‑off between speed and completeness

Next, the team compared four widely used indexing algorithms (XDS, DirAx, MOSFLM, and XGANDALF) on the same computing platform. Some methods, like XDS and DirAx, were faster overall but successfully indexed a smaller fraction of the images. Others, such as MOSFLM and XGANDALF, were slower but converted more images into usable data and generally produced better statistical quality in the final merged dataset. The authors also explored how simple input choices influence both speed and success rate: raising the signal‑to‑noise threshold or turning off multi‑crystal indexing made processing faster but reduced how many images could be used; lowering the threshold or enabling multi‑crystal handling did the opposite. Crucially, even small errors in the detector geometry, such as the distance between detector and sample, caused indexing to fail more often and made processing dramatically slower, because the software kept trying and rejecting incorrect solutions.
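The effect of the signal‑to‑noise threshold can be pictured with a toy example. The Python sketch below is not how CrystFEL or any of the indexing programs work internally. It simply counts how many frames keep enough strong peaks to be worth indexing at different thresholds. The peak values, the function name, and the `min_snr` and `min_peaks` parameters are all hypothetical and chosen for illustration.

```python
# Toy illustration: a stricter signal-to-noise cutoff leaves fewer usable frames.
# All peak values and parameter names are hypothetical, not taken from the paper.
from typing import List


def count_usable_frames(frames: List[List[float]], min_snr: float, min_peaks: int) -> int:
    """Count frames that have at least `min_peaks` peaks with SNR >= `min_snr`."""
    usable = 0
    for peak_snrs in frames:
        strong_peaks = [snr for snr in peak_snrs if snr >= min_snr]
        if len(strong_peaks) >= min_peaks:
            usable += 1
    return usable


# Each inner list holds the SNR of every peak found in one made-up frame.
frames = [
    [12.0, 9.5, 8.1, 7.7, 6.2],  # strong diffraction
    [6.5, 5.9, 5.2, 4.8, 4.1],   # weak diffraction
    [3.0, 2.5],                  # essentially blank
    [10.2, 4.4, 4.3, 4.2, 4.0],  # one strong peak, the rest marginal
]

for min_snr in (4.0, 5.0, 7.0):
    kept = count_usable_frames(frames, min_snr=min_snr, min_peaks=3)
    print(f"min_snr={min_snr}: {kept} of {len(frames)} frames kept")
```

Fewer frames passing the cutoff means less work for the indexing step, which is why a higher threshold speeds things up at the cost of discarding weaker but possibly usable images.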

What this means for future experiments

By systematically measuring how hardware choices, software algorithms, and user‑controlled settings affect performance, this study turns a complex data‑handling challenge into a set of practical guidelines. For scientists planning PAL‑XFEL experiments, it shows when parallel processing is most effective, which indexing programs are better for quick feedback versus maximum data quality, and why careful calibration of the detector geometry matters so much. The authors conclude that GSDC already enables efficient processing and, in some cases, real‑time feedback during data collection, but further expansion of computing resources will be needed as repetition rates and dataset sizes continue to grow. For non‑experts, the key message is that making “movies” of molecules is not only a triumph of advanced lasers and detectors—it also depends critically on well‑designed computing centers that can keep up with the data deluge.

Citation: Nam, K.H., Na, SH. Serial femtosecond crystallography data processing at the global science data hub center at KISTI. Sci Rep 16, 6786 (2026). https://doi.org/10.1038/s41598-026-36540-z

Keywords: serial femtosecond crystallography, X-ray free electron laser, high-performance computing, data processing, protein structure