Clear Sky Science

Configurational effects of personal innovativeness, self-efficacy, and perceived risk on AI adoption in media students

Why this matters for tomorrow’s media

Artificial intelligence is no longer just a futuristic headline for newsrooms and film studios; it is becoming a core tool that today’s media students must decide whether, and how, to use. This study looks closely at what drives or blocks those decisions. By surveying 588 university media students in China, the authors uncover how curiosity, confidence, and fear interact to shape whether young journalists, producers, and content creators actually embrace AI in their daily work.

Curious minds in an AI-powered classroom

The media industry is rapidly shifting toward human–machine collaboration: algorithms recommend stories, generate images, and even draft news copy. Yet media schools have struggled to keep pace, often adding AI topics in a piecemeal way and focusing more on tools than on students’ own motivations. This study argues that to prepare future media professionals, educators need to understand not just what AI can do, but how students feel about using it. The researchers extend a classic framework from technology research, the Technology Acceptance Model, to include three human factors especially relevant to AI: personal innovativeness (how eager students are to try new things), AI self-efficacy (how capable they feel using AI), and perceived risk (how dangerous or worrisome they think AI might be).

What shapes students’ first impressions of AI

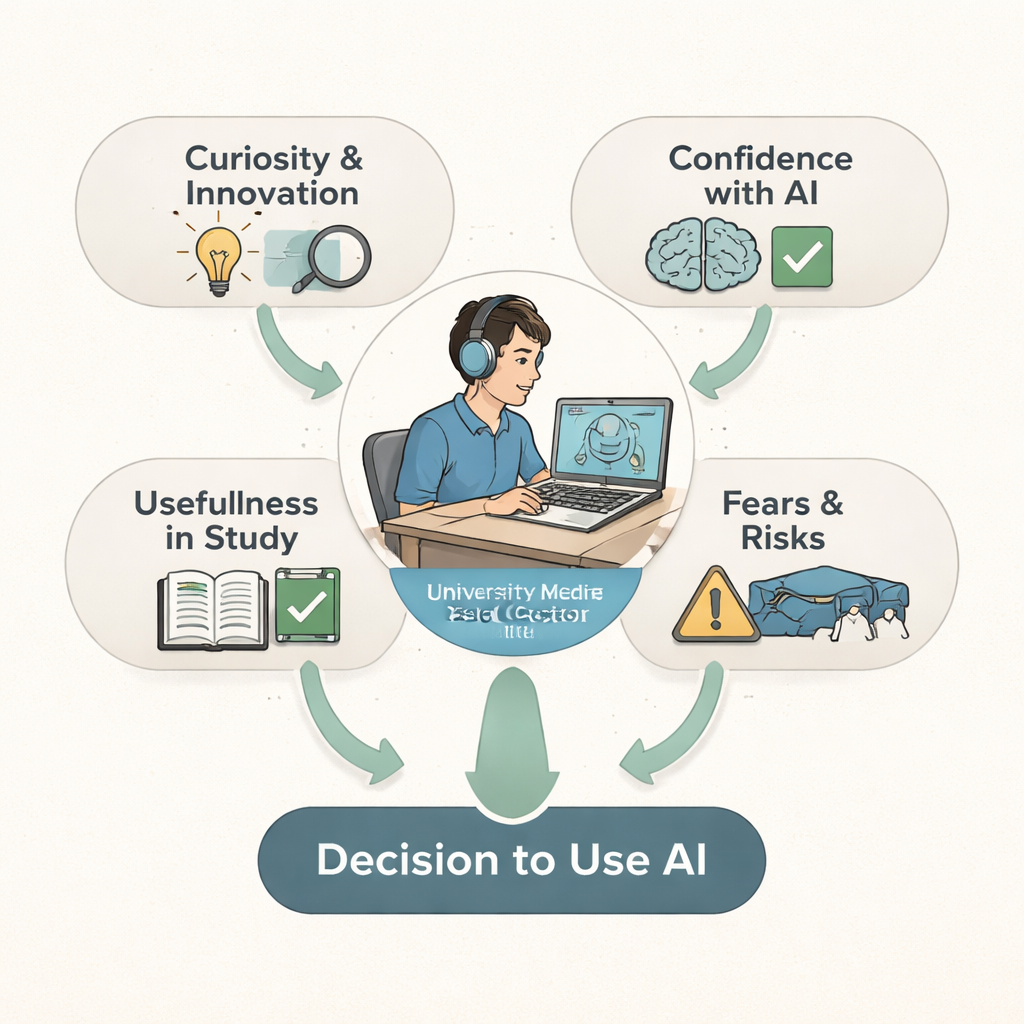

Surveying 588 media students, the authors find that both curiosity and confidence strongly color how useful and how easy AI appears. Students who see themselves as innovative are more likely to believe AI tools will help them and to think they can be handled without much hassle. Likewise, students who feel competent with AI report higher expectations that these tools will improve their work and be manageable in practice. These beliefs—about usefulness and ease—turn out to be the main gateways through which inner traits like innovativeness and self-belief are translated into actual willingness to use AI in study and creative projects.

When benefits meet fear and doubt

Perceived usefulness and ease of use are not the whole story. The study shows that perceived risk—worries about privacy, bias, errors, or loss of control—can weaken the pull of both. Even when students think AI is helpful and simple, strong concerns can blunt their intention to rely on it. Using advanced statistical modeling and a comparative method that looks at combinations of conditions rather than single causes, the authors show that no one factor is sufficient on its own. Instead, students’ decisions emerge from intersecting configurations of motivation, skill, and risk perception, reflecting the messy reality of how people weigh new technologies that affect their future careers.
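To make the idea of "combinations of conditions rather than single causes" more concrete, here is a minimal sketch of how a set-theoretic comparison might score one combination against the outcome. It assumes a fuzzy-set style analysis, which this summary does not name, so treat the method choice as an assumption. The membership scores, the variable names, and the five students are all invented for illustration and are not the study's data.

```python
from typing import List

# Hypothetical fuzzy membership scores in [0, 1] for five imaginary students.
# These numbers are made up for illustration; they are NOT from the study.
innovativeness = [0.9, 0.2, 0.7, 0.1, 0.8]
self_efficacy = [0.8, 0.9, 0.3, 0.2, 0.9]
intention = [0.9, 0.7, 0.4, 0.1, 1.0]  # outcome: intention to use AI


def combine(*conditions: List[float]) -> List[float]:
    """Fuzzy AND: a case's membership in a combination is its weakest condition."""
    return [min(scores) for scores in zip(*conditions)]


def consistency(condition: List[float], outcome: List[float]) -> float:
    """Share of the condition's membership that also lies inside the outcome.

    Values near 1 suggest the condition is close to sufficient for the outcome.
    """
    total = sum(condition)
    overlap = sum(min(x, y) for x, y in zip(condition, outcome))
    return overlap / total if total else 0.0


def coverage(condition: List[float], outcome: List[float]) -> float:
    """Share of the outcome's membership that the condition accounts for."""
    total = sum(outcome)
    overlap = sum(min(x, y) for x, y in zip(condition, outcome))
    return overlap / total if total else 0.0


# A single condition versus a combination of two conditions.
innovative_and_confident = combine(innovativeness, self_efficacy)

single_consistency = consistency(innovativeness, intention)
combo_consistency = consistency(innovative_and_confident, intention)
combo_coverage = coverage(innovative_and_confident, intention)

print(f"innovativeness alone: consistency={single_consistency:.2f}")
print(f"innovativeness AND self-efficacy: consistency={combo_consistency:.2f}, "
      f"coverage={combo_coverage:.2f}")
```

In this toy data the combination is more consistent with high intention than innovativeness alone, yet its coverage is below 1, which leaves room for other routes to the same outcome. That is the general logic behind reporting several pathways instead of a single best predictor.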

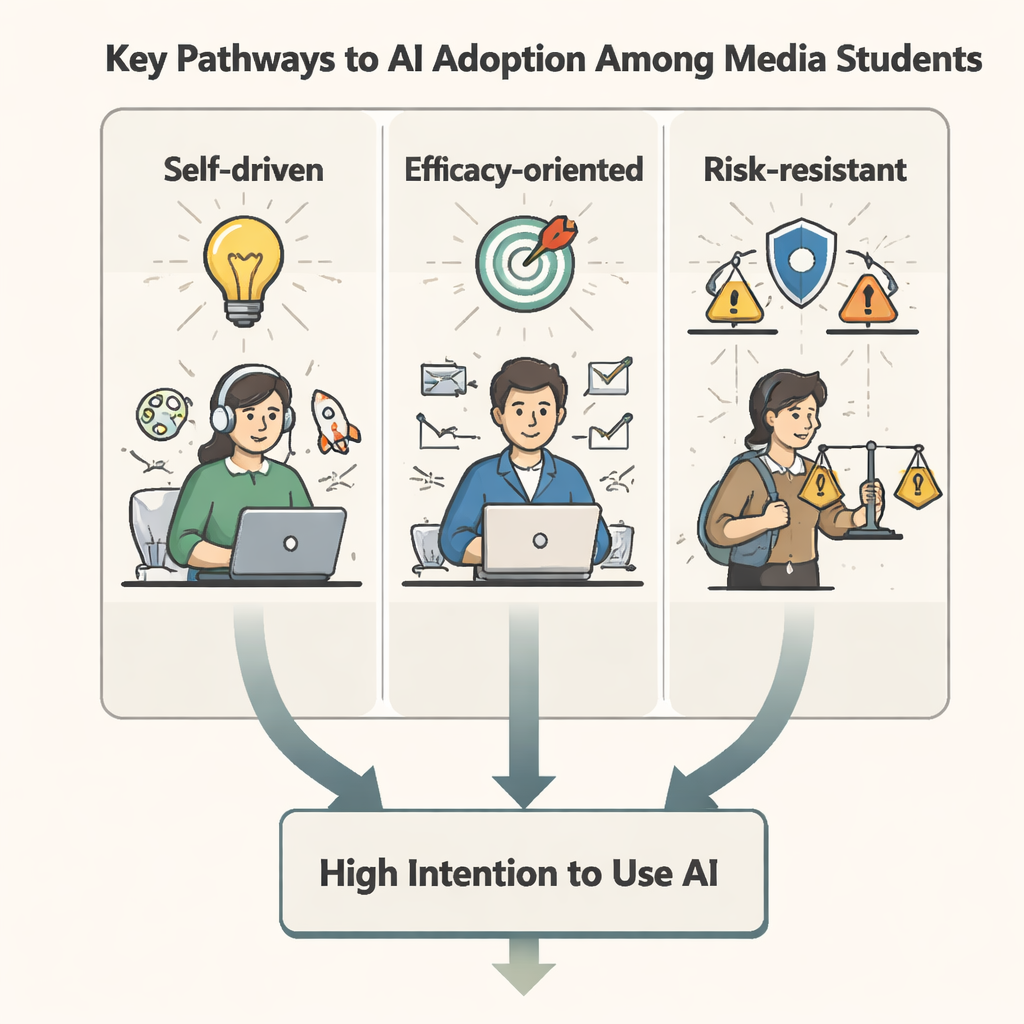

Three different roads to saying “yes” to AI

The study identifies three main patterns that lead to a high intention to use AI tools. In the “self-driven” pathway, students with strong personal innovativeness and high AI self-efficacy are willing adopters even if the tools are not especially simple or risk-free; their inner drive carries them forward. In the “efficacy-oriented” pathway, students’ belief in their own ability to handle AI compensates for worries and boosts adoption, even when perceived usefulness is mixed. Finally, in the “risk-resistant” pathway, students with very high AI self-efficacy can withstand significant concern about AI’s dangers: they still choose to use AI because they trust themselves to manage problems. Across all three patterns, internal traits and perceptions work together, rather than in isolation, to shape behavior.

What this means for media education

For a general reader, the key takeaway is that getting media students to use AI wisely is not only about installing the latest software or mandating new courses. It is about nurturing curiosity, building hands-on confidence, and openly addressing fears. The authors conclude that sustainable AI adoption in media education requires human-centered design: curricula that strengthen students’ sense of agency, demonstrate clear benefits in real media tasks, and teach them to understand and manage risks. If educators do this well, tomorrow’s journalists and storytellers will not simply be pushed into using AI—they will choose to use it, with both enthusiasm and critical judgment.

Citation: Lan, Y., Liu, S., Chen, H. et al. Configurational effects of personal innovativeness, self-efficacy, and perceived risk on AI adoption in media students. Sci Rep 16, 5681 (2026). https://doi.org/10.1038/s41598-026-36538-7

Keywords: AI adoption, media students, technology acceptance, digital journalism education, perceived risk